## Diagram: CNN Architecture with Residual Blocks

### Overview

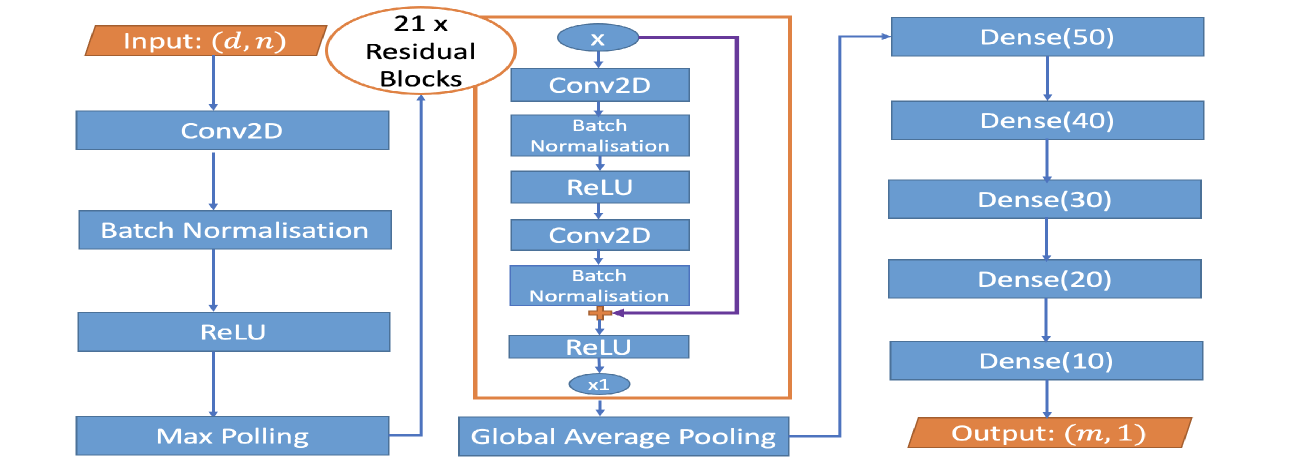

The diagram shows the architecture of a Convolutional Neural Network (CNN) built around residual blocks. Data flows through convolutional layers (Conv2D), batch normalisation, ReLU activations, max pooling, global average pooling, and a stack of dense layers. The network takes an input of size (d, n) and produces an output of size (m, 1).

### Components/Axes

* **Input:** Labeled "Input: (d, n)" in an orange box at the top-left.

* **Conv2D:** Convolutional Layer.

* **Batch Normalisation:** Batch Normalization Layer.

* **ReLU:** Rectified Linear Unit Activation Function.

* **Max Pooling:** Max Pooling Layer.

* **Global Average Pooling:** Global Average Pooling Layer.

* **Residual Blocks:** A brown rounded rectangle labeled "21 x Residual Blocks" encloses a repeating block of layers.

* **Dense(X):** Fully connected (Dense) layers with X neurons. The values of X are 50, 40, 30, 20, and 10.

* **Output:** Labeled "Output: (m, 1)" in an orange box at the bottom-right.

* **Skip Connection:** A purple line represents a skip connection within the residual block.

### Detailed Analysis

1. **Input Layer:** The network starts with an input layer denoted as "Input: (d, n)".

2. **Initial Layers:** The input is fed into a sequence of layers:

   * Conv2D

   * Batch Normalisation

   * ReLU

   * Max Pooling

3. **Residual Blocks:** The output of the Max Pooling layer is then fed into a series of 21 residual blocks. Each residual block consists of the following layers:

   * Conv2D

   * Batch Normalisation

   * ReLU

   * Conv2D

   * Batch Normalisation

   * ReLU

   * A skip connection (represented by a purple line) adds the input of the first Conv2D layer to the output of the second ReLU layer (element-wise addition, denoted by a plus sign inside a circle).

   * The output of the addition is multiplied by x1 (denoted by "x1" inside an oval).

4. **Global Average Pooling:** After the residual blocks, a Global Average Pooling layer is applied.

5. **Dense Layers:** The output of the Global Average Pooling layer is fed into a series of fully connected (Dense) layers:

   * Dense(50)

   * Dense(40)

   * Dense(30)

   * Dense(20)

   * Dense(10)

6. **Output Layer:** The final layer is an output layer denoted as "Output: (m, 1)".
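The layer order listed above can be written down as a small sketch. This is not the network itself, only an enumeration of the layer labels in the order the diagram is described. The names `STEM`, `RESIDUAL_BLOCK`, and `build_layer_sequence` are hypothetical, and the "x1" oval is left out because its role is not clear from the description.

```python
# Layer labels follow the description of the diagram; the constant and
# function names are illustrative assumptions, not taken from the source.
STEM = ["Conv2D", "BatchNormalisation", "ReLU", "MaxPooling"]
RESIDUAL_BLOCK = [
    "Conv2D", "BatchNormalisation", "ReLU",
    "Conv2D", "BatchNormalisation", "ReLU",
    "Add(skip)",  # element-wise addition with the block input
]
DENSE_UNITS = [50, 40, 30, 20, 10]
NUM_RESIDUAL_BLOCKS = 21


def build_layer_sequence(num_blocks=NUM_RESIDUAL_BLOCKS):
    """Return the ordered list of layer labels from input to output."""
    layers = ["Input(d, n)"]
    layers += STEM
    for _ in range(num_blocks):
        layers += RESIDUAL_BLOCK
    layers.append("GlobalAveragePooling")
    layers += [f"Dense({units})" for units in DENSE_UNITS]
    layers.append("Output(m, 1)")
    return layers


layers = build_layer_sequence()
conv_count = sum(1 for name in layers if name == "Conv2D")
print(len(layers), "entries,", conv_count, "Conv2D layers")
```

Counting this way, the description implies one Conv2D layer in the initial stage plus two in each of the 21 residual blocks.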

### Key Observations

* The diagram illustrates a deep CNN architecture with residual connections.

* The residual blocks are a key component of the network, allowing for the training of deeper networks by mitigating the vanishing gradient problem.

* The skip connection within the residual block adds the input of the block to its output, enabling the network to learn identity mappings.

* The network uses a combination of convolutional, pooling, and fully connected layers to extract features and make predictions.
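The skip-connection observation can be illustrated with a minimal sketch in plain Python. It assumes a flat list of numbers in place of a feature map, and `toy_branch` is a hypothetical stand-in for the Conv2D, Batch Normalisation, ReLU stack inside a block. The point it shows is that when the branch outputs zeros, the block passes its input through unchanged.

```python
def relu(values):
    """Element-wise max(0, v)."""
    return [max(0.0, v) for v in values]


def residual_block(x, branch):
    """Return x + branch(x), added element by element."""
    fx = branch(x)
    if len(fx) != len(x):
        raise ValueError("branch must preserve the shape for the addition")
    return [a + b for a, b in zip(x, fx)]


def zero_branch(x):
    # A branch that has learned to output nothing.
    return [0.0 for _ in x]


def toy_branch(x):
    # Hypothetical stand-in for the convolutional stack, ending in ReLU.
    return relu([0.5 * v - 1.0 for v in x])


x = [1.0, -2.0, 4.0]
identity_out = residual_block(x, zero_branch)  # equals x
toy_out = residual_block(x, toy_branch)        # x plus a non-negative correction
print(identity_out, toy_out)
```

Because the branch in the diagram ends in a ReLU, the correction added to the input is never negative in this sketch.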

### Interpretation

The diagram represents a deep CNN, likely intended for image or other feature-map input. The 21 residual blocks suggest that the task needs a deep network to capture complex patterns in the data. The architecture learns hierarchical representations: convolutional layers extract local features, pooling layers reduce the spatial size of the feature maps, and the fully connected layers combine the pooled features into a final prediction. The skip connections in the residual blocks ease the flow of information through the network, which is what makes such a deep stack trainable. The output label "Output: (m, 1)" indicates a column vector of m values, which reduces to a single value when m = 1.
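To make the pooling and dense stages concrete, here is a small sketch of global average pooling followed by a parameter count for the Dense(50) to Dense(10) stack. The channel count of 64 is an assumption chosen only for illustration, since the diagram description gives no channel sizes, and both function names are hypothetical.

```python
def global_average_pool(feature_maps):
    """Average each channel's 2-D map down to a single number."""
    pooled = []
    for channel in feature_maps:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


def dense_param_counts(input_size, units=(50, 40, 30, 20, 10)):
    """Weights plus biases for each fully connected layer in the stack."""
    counts = []
    fan_in = input_size
    for fan_out in units:
        counts.append(fan_in * fan_out + fan_out)
        fan_in = fan_out
    return counts


# Two channels of 2x2 feature maps, made up for the example.
feature_maps = [
    [[1.0, 3.0], [5.0, 7.0]],
    [[0.0, 0.0], [2.0, 2.0]],
]
pooled = global_average_pool(feature_maps)

# 64 input channels is an assumed value, not taken from the diagram.
params = dense_param_counts(input_size=64)
print(pooled, params, sum(params))
```

Global average pooling yields one value per channel regardless of the spatial size, so the dense stack's input width depends only on the number of channels leaving the last residual block.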