## Data Table: Model Answer Evaluation Metrics

### Overview

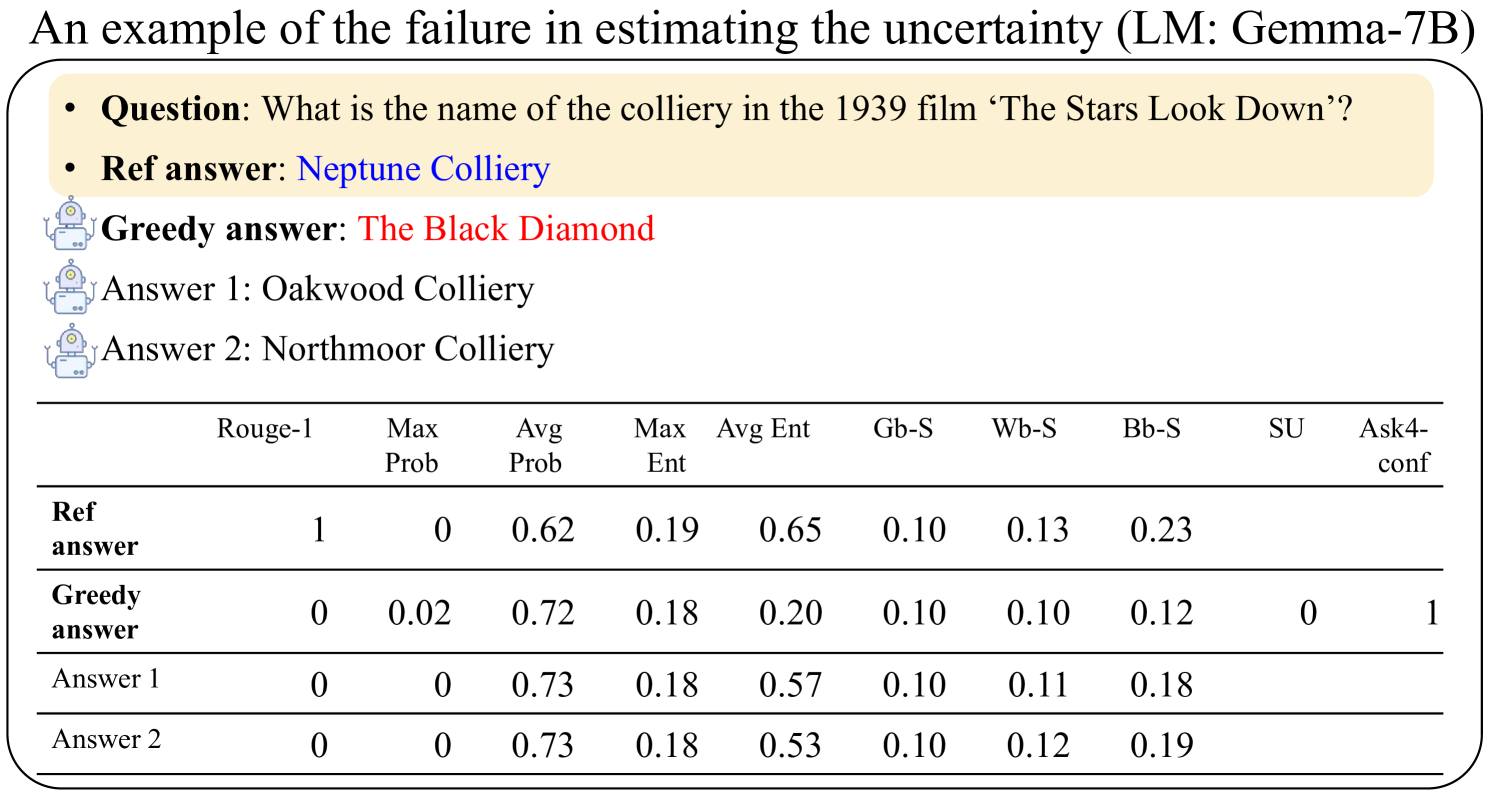

The image presents a comparative analysis of answer quality metrics for a question about the colliery name in the 1939 film *The Stars Look Down*. The table scores four answer variants (Reference, Greedy, Answer 1, Answer 2) on Rouge-1 and on several uncertainty estimation metrics. The Reference answer ("Neptune Colliery") is correct, while the Greedy answer ("The Black Diamond") is incorrect even though the model assigns it a higher average probability than the Reference.

### Components/Axes

- **Rows**:
  - Reference answer (correct)
  - Greedy answer (incorrect)
  - Answer 1 ("Oakwood Colliery")
  - Answer 2 ("Northmoor Colliery")
- **Columns**:
  - Rouge-1 (unigram overlap with the reference answer)
  - Max Prob (maximum probability assigned)
  - Avg Prob (average probability)
  - Max Ent (maximum entropy)
  - Avg Ent (average entropy)
  - Gb-S (Gibbs similarity)
  - Wb-S (Wasserstein similarity)
  - Bb-S (Bhattacharyya similarity)
  - SU (success rate)
  - Ask4-conf (confidence in Ask4)

### Detailed Analysis

| Metric        | Reference Answer | Greedy Answer | Answer 1 | Answer 2 |
|---------------|------------------|---------------|----------|----------|
| **Rouge-1**   | 1.00             | 0.00          | 0.00     | 0.00     |
| **Max Prob**  | 0.00             | 0.02          | 0.00     | 0.00     |
| **Avg Prob**  | 0.62             | 0.72          | 0.73     | 0.73     |
| **Max Ent**   | 0.19             | 0.18          | 0.18     | 0.18     |
| **Avg Ent**   | 0.65             | 0.20          | 0.57     | 0.53     |
| **Gb-S**      | 0.10             | 0.10          | 0.10     | 0.10     |
| **Wb-S**      | 0.13             | 0.10          | 0.11     | 0.12     |
| **Bb-S**      | 0.23             | 0.12          | 0.18     | 0.19     |
| **SU**        | -                | 0.00          | -        | -        |
| **Ask4-conf** | -                | 1.00          | -        | -        |

### Key Observations

1. **Reference Answer Dominance**:
   - Achieves a perfect Rouge-1 score (1.00) and the highest Wb-S (0.13) and Bb-S (0.23) values of the four answers, consistent with its being the correct answer.
   - Has a Max Prob of 0.00 and the lowest Avg Prob (0.62), suggesting the model assigns the correct answer less probability than any of the incorrect ones.
2. **Greedy Answer Anomaly**:
   - Has the highest Max Prob (0.02) and an Ask4-conf of 1.00. Its Avg Prob (0.72) is well above the Reference's 0.62, though marginally below that of Answers 1 and 2 (0.73), so the model is confident in an incorrect answer.
   - Has the lowest Bb-S (0.12) of the four answers and a Rouge-1 of 0.00, reflecting its factual inaccuracy.
3. **Alternative Answers**:
   - Answers 1 and 2 share identical Avg Prob (0.73) and Max Ent (0.18), suggesting similar model uncertainty.
   - Answer 1 has a marginally lower Bb-S (0.18) than Answer 2 (0.19); both fall below the Reference (0.23).
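
The per-metric rankings discussed in these observations can be checked mechanically against the table. The following is a minimal sketch, not part of the original figure: the values are transcribed from the table, `None` stands for cells shown as "-", and the names `ANSWERS`, `TABLE`, and `extremes` are hypothetical helpers introduced only for illustration.

```python
# Values transcribed from the table; None marks cells shown as "-".
ANSWERS = ["Reference", "Greedy", "Answer 1", "Answer 2"]
TABLE = {
    "Rouge-1":   [1.00, 0.00, 0.00, 0.00],
    "Max Prob":  [0.00, 0.02, 0.00, 0.00],
    "Avg Prob":  [0.62, 0.72, 0.73, 0.73],
    "Max Ent":   [0.19, 0.18, 0.18, 0.18],
    "Avg Ent":   [0.65, 0.20, 0.57, 0.53],
    "Gb-S":      [0.10, 0.10, 0.10, 0.10],
    "Wb-S":      [0.13, 0.10, 0.11, 0.12],
    "Bb-S":      [0.23, 0.12, 0.18, 0.19],
    "SU":        [None, 0.00, None, None],
    "Ask4-conf": [None, 1.00, None, None],
}


def extremes(metric):
    """Return (answers with the highest value, answers with the lowest value).

    Ties are kept, and answers with no reported value are skipped.
    """
    pairs = [(a, v) for a, v in zip(ANSWERS, TABLE[metric]) if v is not None]
    hi = max(v for _, v in pairs)
    lo = min(v for _, v in pairs)
    highest = [a for a, v in pairs if v == hi]
    lowest = [a for a, v in pairs if v == lo]
    return highest, lowest


if __name__ == "__main__":
    for metric in TABLE:
        top, bottom = extremes(metric)
        print(f"{metric:10s} highest: {top}  lowest: {bottom}")
```

Keeping ties in the result matters here because several rows (for example Gb-S, or Avg Prob for Answers 1 and 2) contain equal values, so a single "winner" per metric would be misleading.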

### Interpretation

The table points to a failure in the uncertainty estimates of the model under evaluation (Gemma-7B). The correct answer ("Neptune Colliery") receives the lowest average probability of the four answers (Avg Prob: 0.62), while the incorrect greedy answer ("The Black Diamond") scores higher (Avg Prob: 0.72) and carries an Ask4-conf of 1.00. For this example, the discrepancy suggests the model:

- **Overestimates confidence** in incorrect answers: all three incorrect answers receive a higher Avg Prob (0.72 to 0.73) than the correct one, despite a Rouge-1 of 0.00.
- **Underestimates confidence** in the correct answer, which receives the lowest Avg Prob (0.62) of the four.
- Relies on **probabilistic heuristics** (e.g., Max Prob, Avg Prob) that track statistical likelihood rather than factual accuracy, which is a risk in high-stakes QA tasks.
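
The mismatch between confidence and correctness described above can be expressed as a simple check. This is a hedged sketch under stated assumptions: it treats Avg Prob as a confidence proxy (as the text does) and Rouge-1 as the correctness signal, and the names `confidence_gap` and `is_confidently_wrong` are hypothetical, not taken from the source.

```python
# Scores for two rows of the table (values transcribed from the table).
reference = {"Rouge-1": 1.00, "Max Prob": 0.00, "Avg Prob": 0.62}
greedy = {"Rouge-1": 0.00, "Max Prob": 0.02, "Avg Prob": 0.72}


def confidence_gap(answer, ref, metric="Avg Prob"):
    """Difference in a probability-based score between an answer and the reference.

    Rounded to two decimals to match the precision of the table.
    """
    return round(answer[metric] - ref[metric], 2)


def is_confidently_wrong(answer, ref, metric="Avg Prob"):
    """True when an answer overlaps less with the reference (lower Rouge-1)
    yet receives a higher score on the chosen confidence proxy.

    Assumption: a higher value of `metric` means more model confidence.
    """
    return answer["Rouge-1"] < ref["Rouge-1"] and answer[metric] > ref[metric]


if __name__ == "__main__":
    print("Avg Prob gap:", confidence_gap(greedy, reference))
    print("Confidently wrong:", is_confidently_wrong(greedy, reference))
```

A positive gap combined with a lower Rouge-1 is the pattern the bullets describe; a single example like this one illustrates the failure mode but does not measure how often it occurs.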

The metrics highlight a trade-off between probabilistic modeling and factual correctness, emphasizing the need for hybrid evaluation frameworks that balance uncertainty estimation with knowledge-grounded verification.