TECHNICAL ASSET FINGERPRINT

980d60a880664979dbabfdc4


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Communication System Diagram

### Overview

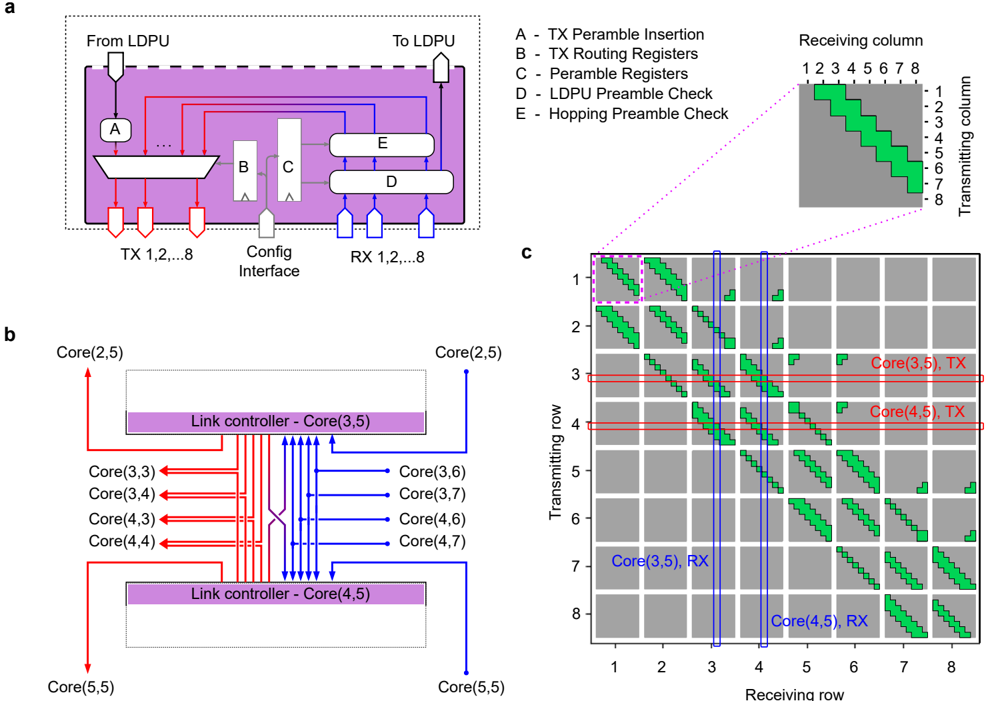

The image presents a communication system diagram, comprising three sub-figures (a, b, and c). Sub-figure (a) illustrates a high-level block diagram of a communication module, showing data flow and key components. Sub-figure (b) depicts a link controller setup with multiple cores and their interconnections. Sub-figure (c) displays a matrix representing the communication paths between transmitting and receiving columns/rows.

### Components/Axes

**Sub-figure a:**

* **Labels:**

* "From LDPU" (top-left)

* "To LDPU" (top-right)

* "TX 1,2,...8" (bottom-left)

* "Config Interface" (bottom-center)

* "RX 1,2,...8" (bottom-right)

* "A - TX Preamble Insertion" (top-right, outside the diagram)

* "B - TX Routing Registers" (top-right, outside the diagram)

* "C - Preamble Registers" (top-right, outside the diagram)

* "D - LDPU Preamble Check" (top-right, outside the diagram)

* "E - Hopping Preamble Check" (top-right, outside the diagram)

* **Components:**

* Block A (TX Preamble Insertion)

* Block B (TX Routing Registers)

* Block C (Preamble Registers)

* Block D (LDPU Preamble Check)

* Block E (Hopping Preamble Check)

* **Data Flow:**

* Red arrows indicate transmission (TX) paths.

* Blue arrows indicate reception (RX) paths.

**Sub-figure b:**

* **Labels:**

* "Core(2,5)" (top-left and top-right)

* "Core(3,3)", "Core(3,4)", "Core(4,3)", "Core(4,4)" (left side)

* "Core(3,6)", "Core(3,7)", "Core(4,6)", "Core(4,7)" (right side)

* "Core(5,5)" (bottom-left and bottom-right)

* "Link controller - Core(3,5)" (top center)

* "Link controller - Core(4,5)" (bottom center)

* **Data Flow:**

* Red arrows indicate transmission (TX) paths.

* Blue arrows indicate reception (RX) paths.

**Sub-figure c:**

* **Axes:**

* X-axis: "Receiving row" (labeled 1 to 8)

* Y-axis: "Transmitting row" (labeled 1 to 8)

* **Elements:**

* Green blocks indicate active communication paths.

* Gray blocks indicate inactive communication paths.

* **Annotations:**

* "Core(3,5), TX" (right of row 3)

* "Core(4,5), TX" (right of row 4)

* "Core(3,5), RX" (left of row 7)

* "Core(4,5), RX" (left of row 8)

* **Inset:**

* "Receiving column" (labeled 1 to 8)

* "Transmitting column" (labeled 1 to 8)

* Shows a zoomed-in view of the communication paths.

### Detailed Analysis

**Sub-figure a:**

* The diagram shows a module that handles both transmission (TX) and reception (RX) of data.

* The "Config Interface" likely allows for configuration of the module's parameters.

* The blocks A, B, C, D, and E represent different stages in the TX and RX processes, including preamble insertion, routing, and checks.

**Sub-figure b:**

* Two link controllers, "Core(3,5)" and "Core(4,5)", are shown.

* Each link controller is connected to multiple cores.

* The red arrows indicate data transmission from "Core(3,3)", "Core(3,4)", "Core(4,3)", "Core(4,4)" to "Core(3,5)" and "Core(4,5)".

* The blue arrows indicate data flowing out of the link controllers "Core(3,5)" and "Core(4,5)" to "Core(3,6)", "Core(3,7)", "Core(4,6)", and "Core(4,7)".

**Sub-figure c:**

* The matrix represents the communication paths between transmitting and receiving rows.

* Green blocks indicate active communication paths.

* The inset provides a zoomed-in view of the communication paths.

* The annotations "Core(3,5), TX", "Core(4,5), TX", "Core(3,5), RX", and "Core(4,5), RX" indicate the transmitting and receiving cores for specific rows.

* The green blocks form a diagonal pattern that shifts by one position from row to row.

### Key Observations

* Sub-figure (a) provides a high-level overview of the communication module.

* Sub-figure (b) illustrates the interconnection between link controllers and cores.

* Sub-figure (c) visualizes the communication paths between transmitting and receiving rows.

* The diagonal patterns in sub-figure (c) suggest a specific communication scheme or routing algorithm.

### Interpretation

The diagram illustrates a communication system in which cores arranged on a grid exchange data through dedicated link controllers. Sub-figure (a) shows the module that transmits and receives data, inserting preambles on the TX side and checking them on the RX side under the control of configurable routing and preamble registers. Sub-figure (b) shows how two link controllers, Core(3,5) and Core(4,5), collect traffic from neighboring cores and pass it on to others. The diagonal patterns in sub-figure (c) indicate that the communication paths follow a specific, regular scheme. Together, the preambles and routing registers appear intended to make data transfer between the cores both reliable and efficient.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Network on Chip (NoC) Architecture and Communication Flow

### Overview

The image presents a diagram illustrating the architecture and communication flow within a Network on Chip (NoC). It consists of three main parts: (a) a block diagram of a transceiver unit, (b) a schematic of the core interconnection network, and (c) a visualization of data transmission paths within the NoC.

### Components/Axes

**Part (a): Transceiver Unit**

* **Input:** From LDPU (Local Data Processing Unit)

* **Output:** To LDPU

* **Components:**

* A: TX Preamble Insertion

* B: TX Routing Registers

* C: Preamble Registers

* D: LDPU Preamble Check

* E: Hopping Preamble Check

* **Interfaces:** TX 1,2,...8 and RX 1,2,...8

* **Visual Elements:** Rectangular blocks representing functional units, arrows indicating data flow.

**Part (b): Core Interconnection Network**

* **Cores:** Core(2,5), Core(3,3), Core(3,4), Core(4,3), Core(4,4), Core(3,6), Core(3,7), Core(4,6), Core(4,7), Core(5,5)

* **Link Controllers:** Core(3,5) and Core(4,5)

* **Connections:** Bidirectional arrows representing data paths between cores and link controllers.

**Part (c): Data Transmission Visualization**

* **Axes:** Transmitting row (1-8) and Receiving row (1-8).

* **Visual Elements:** Grid of squares representing the NoC array. Green squares indicate active transmission paths, and a single blue square indicates a receiving core.

* **Labels:** Core(3,5), TX; Core(4,5), TX; Core(3,5), RX; Core(4,5), RX.

### Detailed Analysis

**Part (a): Transceiver Unit**

The transceiver unit has two paths. On the TX side, it takes data from the LDPU, inserts a preamble (A), and uses the TX Routing Registers (B) to send the data out on one of its 8 transmit (TX) interfaces. On the RX side, it accepts data on 8 receive (RX) interfaces, performs preamble checks (D and E) against the stored preamble information (C), and passes the data to the LDPU.
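The TX and RX preamble steps can be illustrated on a byte frame. This is a minimal sketch, assuming a fixed preamble that is simply prepended on transmit and compared on receive; the frame format, the preamble value, and the function names are hypothetical, since the figure does not specify them.

```python
from typing import Optional


def insert_preamble(payload: bytes, preamble: bytes) -> bytes:
    """TX side (block A): prepend the configured preamble to the payload."""
    return preamble + payload


def check_preamble(frame: bytes, expected: bytes) -> Optional[bytes]:
    """RX side (blocks D/E): strip and return the payload if the frame starts
    with the expected preamble; otherwise return None (frame rejected)."""
    if frame.startswith(expected):
        return frame[len(expected):]
    return None


if __name__ == "__main__":
    preamble_reg = b"\xa5\x5a"  # hypothetical contents of the Preamble Registers (C)
    frame = insert_preamble(b"data", preamble_reg)
    print(check_preamble(frame, preamble_reg))  # b'data'
    print(check_preamble(frame, b"\x00\x00"))   # None
```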

**Part (b): Core Interconnection Network**

The core interconnection network connects multiple cores through link controllers. Core(3,5) and Core(4,5) act as link controllers, managing data flow between the other cores. The connections are bidirectional, allowing for two-way communication.

**Part (c): Data Transmission Visualization**

The visualization shows data transmission paths within the NoC.

* **Core(3,5) TX:** Transmits data from row 3 to every receiving row, 1 through 8. The active cells form a diagonal that starts at row 3 and extends across all receiving rows.

* **Core(4,5) TX:** Transmits data from row 4 to every receiving row, 1 through 8, again along a diagonal that starts at row 4.

* **Core(3,5) RX:** Receives data in row 3.

* **Core(4,5) RX:** Receives data in row 4.

### Key Observations

* The transceiver unit (a) handles the physical layer aspects of communication, including preamble insertion and checking.

* The core interconnection network (b) provides a flexible and scalable way to connect multiple cores.

* The data transmission visualization (c) demonstrates the diagonal communication pattern within the NoC.

* The transmission paths from Core(3,5) and Core(4,5) reach every row of the NoC array, which suggests a broadcast or multicast communication scheme.

### Interpretation

The diagram illustrates a NoC architecture of the kind used in multi-core processors and systems-on-chip, where the NoC provides a scalable communication infrastructure for interconnecting many cores. The transceiver unit handles the physical-layer details, while the core interconnection network provides routing and switching. The regular diagonal pattern in part (c) suggests a relatively simple, deterministic routing scheme, potentially optimized for latency or throughput; the figure alone does not reveal the flow-control method, so wormhole switching is possible but not shown. Using dedicated link controllers likely simplifies routing and may improve performance. Because the transmission paths cover the entire array, the network may also support broadcast or multicast, which could be used for synchronization or data distribution.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram Set: Network-on-Chip Link Controller and Core Connectivity

### Overview

The image is a technical figure composed of three interconnected panels (a, b, c) illustrating the architecture and connectivity of a network-on-chip (NoC) communication system. Panel **a** details the internal block diagram of a single link controller. Panel **b** shows the interconnection of two specific link controllers with surrounding processing cores. Panel **c** presents a connectivity matrix (heatmap) showing active transmission paths between cores across the entire chip grid.

### Components/Axes

**Panel a: Link Controller Block Diagram**

* **Main Block:** A purple rectangle representing the link controller.

* **Inputs:**

* "From LDPU" (top-left, white arrow).

* "Config Interface" (bottom-center, white arrow).

* "RX 1,2,...8" (bottom-right, 8 white arrows).

* **Outputs:**

* "To LDPU" (top-right, white arrow).

* "TX 1,2,...8" (bottom-left, 8 white arrows).

* **Internal Components (Labeled A-E):**

* **A:** "TX Preamble Insertion" (connected to the TX multiplexer).

* **B:** "TX Routing Registers" (connected to the TX multiplexer and Config Interface).

* **C:** "Preamble Registers" (connected to Config Interface and components D/E).

* **D:** "LDPU Preamble Check" (connected to RX inputs and component C).

* **E:** "Hopping Preamble Check" (connected to RX inputs and component C).

* **Legend (Top-Right):** A list mapping letters to component names (A through E as listed above).

* **Inset Diagram (Top-Right):** A small 8x8 grid labeled "Receiving column" (1-8, top) and "Transmitting column" (1-8, right). A diagonal pattern of green squares runs from (1,1) to (8,8).

**Panel b: Core Interconnection Diagram**

* **Central Elements:** Two purple rectangles labeled "Link controller - Core(3,5)" (top) and "Link controller - Core(4,5)" (bottom).

* **Connected Cores (Red Arrows - Transmitting):**

* From Core(2,5) (top-left, arrow pointing down to top link controller).

* From Core(3,3), Core(3,4), Core(4,3), Core(4,4) (left side, arrows pointing right to both link controllers).

* From Core(5,5) (bottom-left, arrow pointing up to bottom link controller).

* **Connected Cores (Blue Arrows - Receiving):**

* To Core(2,5) (top-right, arrow pointing up from top link controller).

* To Core(3,6), Core(3,7), Core(4,6), Core(4,7) (right side, arrows pointing right from both link controllers).

* To Core(5,5) (bottom-right, arrow pointing down from bottom link controller).

* **Cross-Connection:** Red and blue lines cross between the two link controllers, indicating a direct link between Core(3,5) and Core(4,5).

**Panel c: Connectivity Matrix (Heatmap)**

* **Axes:**

* **X-axis (Bottom):** "Receiving row", numbered 1 through 8.

* **Y-axis (Left):** "Transmitting row", numbered 1 through 8.

* **Grid Structure:** An 8x8 grid of large gray squares. Each large square is subdivided into an 8x8 sub-grid of smaller cells.

* **Data Representation:** Green squares within the sub-grids indicate an active connection from a specific transmitting column to a specific receiving column within the given row-to-row link.

* **Highlighted Rows/Columns:**

* **Red Horizontal Lines:** Highlight rows 3 and 4 on the Y-axis. Labels: "Core(3,5), TX" (next to row 3) and "Core(4,5), TX" (next to row 4).

* **Blue Vertical Lines:** Highlight columns 3 and 4 on the X-axis. Labels: "Core(3,5), RX" (below column 3) and "Core(4,5), RX" (below column 4).

* **Pattern:** The green squares form a repeating diagonal block pattern across the matrix. The pattern is consistent for most row-to-row connections, but the highlighted rows/columns show the specific connectivity for the cores detailed in panel **b**.

### Detailed Analysis

**Panel a - Link Controller Flow:**

1. **Transmit Path (Red Lines):** Data "From LDPU" enters, goes through "TX Preamble Insertion" (A), and is routed by a multiplexer controlled by "TX Routing Registers" (B) to one of the eight TX outputs.

2. **Receive Path (Blue Lines):** Data from eight RX inputs passes through "LDPU Preamble Check" (D) and "Hopping Preamble Check" (E). These checks use configuration data from "Preamble Registers" (C). Validated data is sent "To LDPU".

3. **Configuration:** The "Config Interface" writes to registers B and C to set up routing and preamble checking rules.
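The three paths above can be modeled behaviorally. This sketch assumes, hypothetically, that every preamble has a fixed 2-byte width, that the Preamble Registers (C) hold the preamble addressed to the local LDPU, and that the TX Routing Registers (B) map a preamble to one of the TX ports 1 to 8. A frame arriving on RX is delivered to the LDPU if check D matches and re-sent on the routed TX port if check E matches. None of these register layouts is given in the figure, and the class and method names are illustrative.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

PREAMBLE_LEN = 2  # hypothetical fixed preamble width in bytes


@dataclass
class LinkController:
    """Hypothetical behavioral model of the panel (a) link controller."""
    ldpu_preamble: bytes                                        # register C entry checked by D
    tx_routes: Dict[bytes, int] = field(default_factory=dict)  # registers B: preamble -> TX port (1..8)
    to_ldpu: List[bytes] = field(default_factory=list)          # payloads delivered "To LDPU"

    def transmit(self, payload: bytes, preamble: bytes) -> Tuple[int, bytes]:
        """TX path: A prepends the preamble, B selects one of TX 1..8."""
        return self.tx_routes[preamble], preamble + payload

    def receive(self, frame: bytes) -> Optional[Tuple[int, bytes]]:
        """RX path: D delivers frames addressed to this LDPU; E forwards hopping frames."""
        preamble = frame[:PREAMBLE_LEN]
        if preamble == self.ldpu_preamble:           # LDPU Preamble Check (D)
            self.to_ldpu.append(frame[PREAMBLE_LEN:])
            return None
        if preamble in self.tx_routes:               # Hopping Preamble Check (E)
            return self.tx_routes[preamble], frame   # re-send unchanged on the routed TX port
        return None                                  # no check matched: frame is dropped


if __name__ == "__main__":
    lc = LinkController(ldpu_preamble=b"\x00\x01", tx_routes={b"\x00\x02": 3})
    print(lc.transmit(b"hi", b"\x00\x02"))         # (3, b'\x00\x02hi')
    print(lc.receive(b"\x00\x01abc"), lc.to_ldpu)  # None [b'abc']
    print(lc.receive(b"\x00\x02xyz"))              # (3, b'\x00\x02xyz')
```

Reusing one routing table for both locally originated and forwarded (hopping) frames is a simplification chosen to keep the sketch short; real hardware could keep these separate.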

**Panel b - Core Connectivity:**

* The link controller for Core(3,5) handles transmissions **from** cores (2,5), (3,3), (3,4), (4,3), (4,4) and sends data **to** cores (2,5), (3,6), (3,7), (4,6), (4,7).

* The link controller for Core(4,5) handles transmissions **from** cores (4,3), (4,4), (5,5) and sends data **to** cores (4,6), (4,7), (5,5).

* There is a direct, bidirectional connection between the two link controllers (Core(3,5) and Core(4,5)).
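The panel (b) connectivity described above fits naturally in a small adjacency structure. The sketch encodes only the connections listed in this description; `relay_path` is a hypothetical helper, not something shown in the figure, included to illustrate why the direct link between the two controllers matters: in this model, traffic from Core(2,5) can reach Core(5,5) only by crossing it.

```python
from typing import Dict, List, Optional, Set, Tuple

Core = Tuple[int, int]  # (row, column)

# Connections as read from panel (b) in the description above.
FAN_IN: Dict[Core, Set[Core]] = {   # red arrows: cores that transmit into each controller
    (3, 5): {(2, 5), (3, 3), (3, 4), (4, 3), (4, 4)},
    (4, 5): {(4, 3), (4, 4), (5, 5)},
}
FAN_OUT: Dict[Core, Set[Core]] = {  # blue arrows: cores each controller sends to
    (3, 5): {(2, 5), (3, 6), (3, 7), (4, 6), (4, 7)},
    (4, 5): {(4, 6), (4, 7), (5, 5)},
}
# Direct bidirectional link between the two controllers.
CONTROLLER_LINKS: Set[Tuple[Core, Core]] = {((3, 5), (4, 5)), ((4, 5), (3, 5))}


def controllers_receiving_from(core: Core) -> List[Core]:
    """Controllers that accept traffic from `core`."""
    return sorted(lc for lc, srcs in FAN_IN.items() if core in srcs)


def controllers_sending_to(core: Core) -> List[Core]:
    """Controllers that deliver traffic to `core`."""
    return sorted(lc for lc, dsts in FAN_OUT.items() if core in dsts)


def relay_path(src: Core, dst: Core) -> Optional[List[Core]]:
    """Prefer a single controller; otherwise use two controllers joined by the
    direct controller-to-controller link. Returns None if no relay exists."""
    ins, outs = controllers_receiving_from(src), controllers_sending_to(dst)
    for c in ins:
        if c in outs:
            return [src, c, dst]
    for a in ins:
        for b in outs:
            if (a, b) in CONTROLLER_LINKS:
                return [src, a, b, dst]
    return None


if __name__ == "__main__":
    print(relay_path((2, 5), (5, 5)))  # [(2, 5), (3, 5), (4, 5), (5, 5)]
```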

**Panel c - Connectivity Matrix Data:**

* The matrix shows that for any given transmitting row `i` and receiving row `j`, communication is possible only between specific column pairs. This creates the diagonal green blocks.

* **For Core(3,5) as Transmitter (Row 3):** It can send to Receiving Rows 1, 2, 3, 4, 5, 6, 7, and 8. The specific receiving columns vary per row, following the diagonal pattern.

* **For Core(3,5) as Receiver (Column 3):** It can receive from Transmitting Rows 1, 2, 3, 4, 5, 6, 7, and 8. The specific transmitting columns vary per row.

* The same logic applies to Core(4,5) (Row 4 / Column 4). The pattern indicates a structured, likely dimension-ordered routing network.
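Panel (c) can be read as a 64 x 64 boolean matrix indexed by flattened core coordinates, where a core's TX band is one matrix row and its RX band is one matrix column. This sketch assumes 1-based (row, column) coordinates, row-major flattening, and a hypothetical shifted-diagonal rule inside each row-to-row block; the figure does not give the actual rule, so the specific targets returned here are illustrative only.

```python
from typing import List, Tuple

N = 8                   # 8 rows x 8 columns of cores, matching the panel (c) axes
Core = Tuple[int, int]  # 1-based (row, column)


def index(core: Core) -> int:
    """Row-major flattening of a 1-based core coordinate into 0..N*N-1."""
    r, c = core
    return (r - 1) * N + (c - 1)


def core_at(k: int) -> Core:
    """Inverse of index()."""
    return (k // N + 1, k % N + 1)


def linked(tx: Core, rx: Core) -> bool:
    """HYPOTHETICAL rule: within the block for transmitting row i and receiving
    row j, column a talks to column b when b equals a shifted by (j - i), modulo N."""
    (i, a), (j, b) = tx, rx
    return (b - 1) == ((a - 1) + (j - i)) % N


def build_matrix() -> List[List[bool]]:
    """N*N x N*N connectivity matrix: rows are transmitters, columns are receivers."""
    cores = [core_at(k) for k in range(N * N)]
    return [[linked(t, r) for r in cores] for t in cores]


def tx_targets(m: List[List[bool]], core: Core) -> List[Core]:
    """Receivers reachable from `core`: the active cells in its matrix row (TX band)."""
    return [core_at(k) for k, on in enumerate(m[index(core)]) if on]


def rx_sources(m: List[List[bool]], core: Core) -> List[Core]:
    """Transmitters that reach `core`: the active cells in its matrix column (RX band)."""
    col = index(core)
    return [core_at(k) for k in range(N * N) if m[k][col]]


if __name__ == "__main__":
    m = build_matrix()
    print(tx_targets(m, (3, 5)))
    # [(1, 3), (2, 4), (3, 5), (4, 6), (5, 7), (6, 8), (7, 1), (8, 2)]
```

Under this assumed rule every transmitter reaches exactly one receiver in each receiving row, which matches the description's reading that Core(3,5) can send to rows 1 through 8 with the receiving column varying per row.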

### Key Observations

1. **Hierarchical Design:** The system is shown at three levels: internal controller logic (a), local core cluster interconnect (b), and global chip-wide connectivity (c).

2. **Structured Routing:** The strict diagonal pattern in the heatmap (c) suggests a deterministic routing algorithm (e.g., XY routing), where a packet's path is determined by its source and destination coordinates.

3. **Dedicated Link Controllers:** Cores (3,5) and (4,5) are not just processing elements but also act as communication hubs (routers) for a local cluster of cores spanning rows 2 to 5, as shown in panel **b**.

4. **Bidirectional Links:** Panel **b** shows each link controller handling both incoming (RX) and outgoing (TX) traffic for multiple neighboring cores, and the cross-connection indicates a direct link between the two controllers.

### Interpretation

This figure appears to describe a **2D mesh Network-on-Chip (NoC)** architecture, forming a scalable communication infrastructure for a multi-core processor.

* **Panel a** reveals the **microarchitecture** of the network interface/router, handling packet encapsulation (preamble insertion/checking) and routing decisions.

* **Panel b** illustrates the **local view**, showing how specific router nodes (Cores 3,5 and 4,5) manage traffic for their adjacent cores. The red/blue color coding effectively separates transmit and receive paths.

* **Panel c** provides the **global, systemic view**. The connectivity matrix can be read as a representation of the network's routing table. Its repeating diagonal pattern is consistent with a **dimension-ordered routing** scheme (such as XY routing), which is deadlock-free on a mesh and commonly used in mesh NoCs. The highlighted rows and columns for Cores (3,5) and (4,5) map the local connections from panel **b** onto this global table, consistent with their role as routers.
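Dimension-ordered (XY) routing, which this interpretation proposes but the figure does not confirm, can be sketched in a few lines: a packet first travels along the column dimension until its column matches the destination, then along the row dimension. Because no packet ever turns from the row dimension back into the column dimension, the cyclic channel dependencies that cause deadlock cannot form on a mesh. This is the textbook algorithm, not the figure authors' implementation; the (row, column) coordinates simply reuse the Core(r,c) labels from panel **b**.

```python
from typing import List, Tuple

Coord = Tuple[int, int]  # (row, column), as in the Core(r,c) labels


def xy_route(src: Coord, dst: Coord) -> List[Coord]:
    """Textbook XY routing on a 2D mesh: correct the column (X) first, then the row (Y).
    Returns every node visited, including src and dst."""
    r, c = src
    path = [src]
    while c != dst[1]:  # X phase: step along the current row toward the target column
        c += 1 if dst[1] > c else -1
        path.append((r, c))
    while r != dst[0]:  # Y phase: step along the target column toward the target row
        r += 1 if dst[0] > r else -1
        path.append((r, c))
    return path


if __name__ == "__main__":
    print(xy_route((3, 3), (5, 5)))  # [(3, 3), (3, 4), (3, 5), (4, 5), (5, 5)]
```

The hop count always equals the Manhattan distance between source and destination, so the route is minimal as well as deterministic.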

**Notable Anomaly/Insight:** The heatmap shows that a core in row `i` can communicate with cores in *all other rows* (1-8), not just adjacent ones. This indicates the network supports long-distance, multi-hop communication across the entire chip, with the link controllers in panel **a** handling the hop-by-hop forwarding. The system is designed for both local (neighbor) and global (chip-wide) data exchange.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Network Architecture Diagram: System Components and Data Flow

### Overview

The image presents three interconnected diagrams illustrating a network architecture with components for data transmission, routing, and preamble management. Key elements include the LDPU (Link Data Processing Unit), TX/RX units, link controllers, and core nodes. The diagrams emphasize data flow paths, preamble checks, and core-node interactions.

---

### Components/Axes

#### Diagram A (Top-Left)

- **Components**:

- **A**: TX Preamble Insertion (red arrows)

- **B**: TX Routing Registers (red arrows)

- **C**: Preamble Registers (gray arrows)

- **D**: LDPU Preamble Check (blue arrows)

- **E**: Hopping Preamble Check (blue arrows)

- **Flow**:

- Outgoing data originates from the LDPU (top-left) and leaves on TX 1-8 (red arrows); incoming data arrives on RX 1-8 (blue arrows) and is passed to the LDPU.

- **Receiving Column Chart** (Top-Right):

- **Axes**:

- X-axis: Transmitting column (1-8)

- Y-axis: Receiving column (1-8)

- **Legend**:

- Green bars: Active data paths

- **Key Feature**: Diagonal green bars may indicate hopping patterns.

#### Diagram B (Bottom-Left)

- **Components**:

- **Link Controllers**:

- Core(3,5) (purple box)

- Core(4,5) (purple box)

- **Cores**:

- Core(2,5), Core(3,3), Core(3,4), Core(3,6), Core(3,7), Core(4,3), Core(4,4), Core(4,6), Core(4,7), Core(5,5)

- **Arrows**:

- Red: Data transmission paths

- Blue: Data reception paths

- **Flow**:

- Data flows between cores via the link controllers: red arrows carry data into the controllers and blue arrows carry it out to the receiving cores.

#### Diagram C (Bottom-Right)

- **Grid Structure**:

- **Axes**:

- X-axis: Receiving row (1-8)

- Y-axis: Transmitting row (1-8)

- **Legend**:

- Green bars: Active data paths

- Red lines: Core(3,5) and Core(4,5) TX

- Blue lines: Core(3,5) and Core(4,5) RX

- **Key Features**:

- Red horizontal lines at rows 3 and 4 (Core(3,5) and Core(4,5) TX)

- Blue vertical lines at columns 3 and 4 (Core(3,5) and Core(4,5) RX)

---

### Detailed Analysis

#### Diagram A

- **TX/RX Flow**:

- Outgoing data from the LDPU passes through TX Preamble Insertion (A) and is steered by the TX Routing Registers (B) to the TX units.

- Incoming data is validated by LDPU Preamble Check (D) and Hopping Preamble Check (E), which compare against the Preamble Registers (C).

- **Preamble Checks**:

- Red and blue arrows differentiate preamble insertion (TX) from preamble checks (RX).

#### Diagram B

- **Link Controller Function**:

- Core(3,5) and Core(4,5) act as central hubs, managing data flow between adjacent cores.

- Red arrows show data transmission between cores (e.g., Core(3,3) → Core(3,5)).

- Blue arrows indicate reception paths leaving the link controllers (e.g., Core(4,5) → Core(5,5)).

#### Diagram C

- **Data Path Visualization**:

- Green bars form diagonal patterns, suggesting hopping sequences.

- Red lines (Core(3,5) and Core(4,5) TX) and blue lines (Core(3,5) and Core(4,5) RX) intersect at specific grid points, indicating targeted data paths.

---

### Key Observations

1. **Preamble Management**:

- Preamble insertion (A) and checks (D, E) are critical for data integrity.

2. **Core Connectivity**:

- Link controllers (Core(3,5), Core(4,5)) centralize data routing.

3. **Grid Patterns**:

- Diagonal green bars in Diagram C suggest dynamic routing or frequency hopping.

4. **Color Consistency**:

- Red (TX) and blue (RX) align with legend labels in all diagrams.

---

### Interpretation

The diagrams depict a distributed network architecture where:

- **LDPU** initiates data transmission, which is routed through TX units and validated via preamble checks.

- **Link controllers** (Core(3,5), Core(4,5)) serve as intermediaries, coordinating data flow between cores.

- The grid in Diagram C visualizes hopping patterns, with Core(3,5) and Core(4,5) acting as focal points for transmission and reception.

**Notable Trends**:

- Core(3,5) and Core(4,5) are central to both data transmission (red lines) and reception (blue lines), suggesting they are critical nodes.

- The diagonal green bars in Diagram C imply a structured hopping mechanism to avoid interference or optimize bandwidth.

This architecture emphasizes redundancy (multiple preamble checks) and efficiency (centralized link controllers), and is likely designed for high-reliability communication systems.
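If the diagonals in Diagram C are read as a hopping schedule, as this description suggests, the standard cyclic construction shows why such a pattern avoids interference: shifting each transmitter's channel by one per time slot yields a Latin square, so no two transmitters share a channel in any slot. The sketch assumes 8 transmitters, 8 channels, and 8 slots to mirror the 1 to 8 axes; the figure itself does not confirm frequency hopping, and the functions are illustrative.

```python
N = 8  # assumed: 8 transmitters, 8 channels, 8 time slots


def channel(tx: int, slot: int, n: int = N) -> int:
    """Cyclic hopping: transmitter `tx` uses channel (tx + slot) mod n in `slot`."""
    return (tx + slot) % n


def is_latin_square(n: int = N) -> bool:
    """True if, in every slot, all transmitters use distinct channels, and each
    transmitter visits every channel exactly once over n slots."""
    per_slot = all(len({channel(t, s, n) for t in range(n)}) == n for s in range(n))
    per_tx = all(len({channel(t, s, n) for s in range(n)}) == n for t in range(n))
    return per_slot and per_tx


if __name__ == "__main__":
    for slot in range(N):
        print(slot, [channel(tx, slot) for tx in range(N)])
    print("collision-free:", is_latin_square())
```

Plotting `channel(tx, slot)` as a grid reproduces shifted diagonals; any other permutation-based schedule would also be collision-free, so the cyclic shift is only the simplest choice.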
