


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Scatter Plot: Harmlessness vs. Helpfulness

### Overview

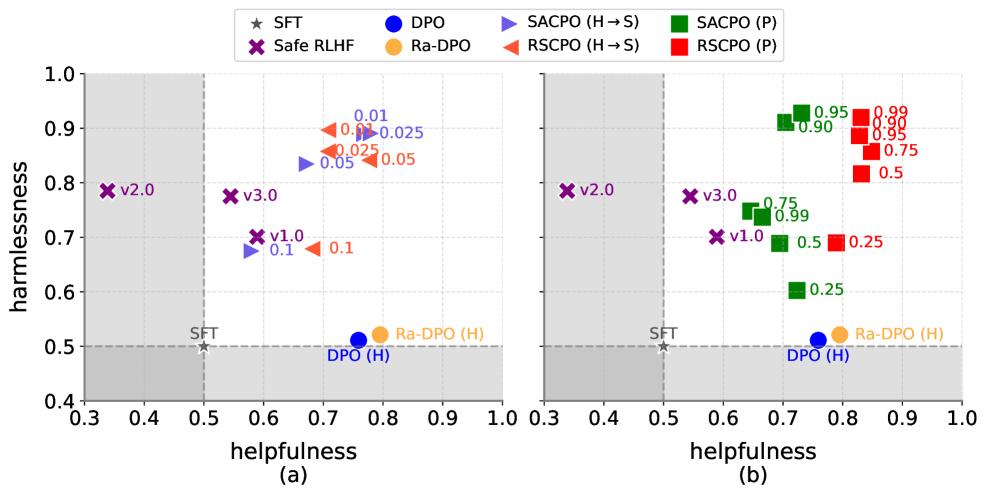

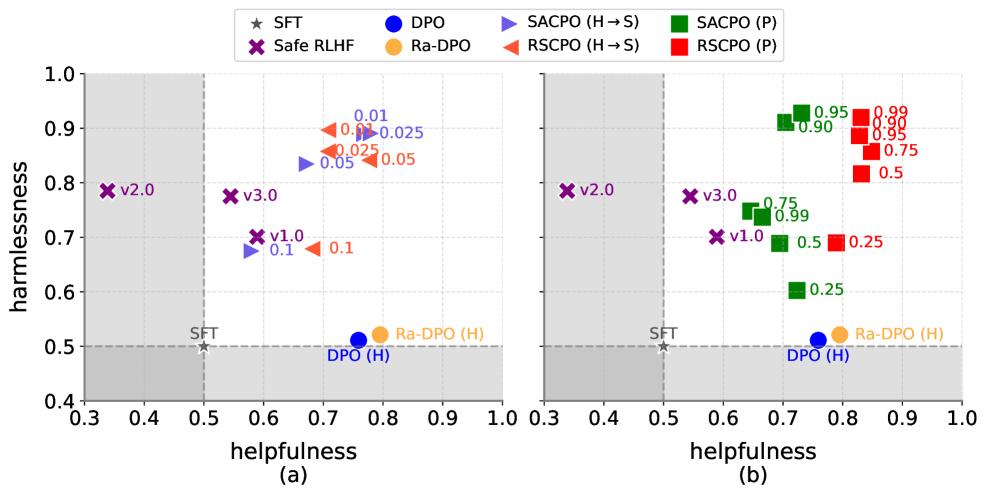

The image presents two scatter plots, (a) and (b), comparing the harmlessness and helpfulness of different models. The plots show the performance of various algorithms, including SFT, Safe RLHF, DPO, Ra-DPO, SACPO (H->S), SACPO (P), RSCPO (H->S), and RSCPO (P). The x-axis represents helpfulness, and the y-axis represents harmlessness. A gray shaded region indicates an area of lower performance.

### Components/Axes

* **Title:** Harmlessness vs. Helpfulness

* **X-axis (Helpfulness):** Ranges from 0.3 to 1.0 in both plots.

* **Y-axis (Harmlessness):** Ranges from 0.4 to 1.0 in both plots.

* **Legend (Top):**

* `★ SFT` (Gray Star)

* `X Safe RLHF` (Purple X)

* `DPO` (Blue Circle)

* `Ra-DPO` (Orange Circle)

* `SACPO (H->S)` (Blue Triangle pointing right)

* `SACPO (P)` (Green Square)

* `RSCPO (H->S)` (Orange Triangle pointing right)

* `RSCPO (P)` (Red Square)

* **Plot (a):** Labeled as "(a)" below the x-axis.

* **Plot (b):** Labeled as "(b)" below the x-axis.

* **Gray Shaded Region:** Located in the bottom-left corner of each plot, indicating a region of lower performance. The gray region extends to x=0.5 and y=0.5.

* **Axis Markers:** Both axes have grid lines at intervals of 0.1.

### Detailed Analysis or Content Details

#### Plot (a)

* **SFT (Gray Star):** Located at approximately (0.5, 0.5).

* **Safe RLHF (Purple X):**

* v2.0: Located at approximately (0.35, 0.8).

* v3.0: Located at approximately (0.55, 0.8).

* v1.0: Located at approximately (0.6, 0.7).

* **DPO (Blue Circle):** Located at approximately (0.7, 0.52). Labeled as DPO (H).

* **Ra-DPO (Orange Circle):** Located at approximately (0.8, 0.52). Labeled as Ra-DPO (H).

* **SACPO (H->S) (Blue Triangle):** Data points are spread between approximately (0.7, 0.7) and (0.7, 0.9). The points are annotated with the values 0.01, 0.025, and 0.05; these read as point labels rather than harmlessness values, since the y-axis starts at 0.4.

* **RSCPO (H->S) (Orange Triangle):** Data points are spread between approximately (0.75, 0.7) and (0.75, 0.9). The points are annotated with the values 0.025, 0.05, and 0.1; these likewise read as point labels rather than harmlessness values.

#### Plot (b)

* **SFT (Gray Star):** Located at approximately (0.5, 0.5).

* **Safe RLHF (Purple X):**

* v2.0: Located at approximately (0.35, 0.8).

* v3.0: Located at approximately (0.55, 0.8).

* v1.0: Located at approximately (0.6, 0.7).

* **DPO (Blue Circle):** Located at approximately (0.75, 0.52). Labeled as DPO (H).

* **Ra-DPO (Orange Circle):** Located at approximately (0.8, 0.52). Labeled as Ra-DPO (H).

* **SACPO (P) (Green Square):** Data points are clustered in the top-right quadrant. The points are annotated with the values 0.25, 0.5, 0.75, 0.90, 0.95, and 0.99, which read as point labels rather than harmlessness coordinates (0.25 lies below the y-axis minimum of 0.4). Helpfulness values are approximately 0.7.

* **RSCPO (P) (Red Square):** Data points are clustered in the top-right quadrant. The points carry the same annotations (0.25, 0.5, 0.75, 0.90, 0.95, and 0.99), again best read as point labels. Helpfulness values are approximately 0.8.

### Key Observations

* **SFT:** Serves as a baseline, consistently positioned at (0.5, 0.5) in both plots.

* **Safe RLHF:** The "Safe RLHF" data points (v1.0, v2.0, v3.0) remain in the same position in both plots.

* **DPO and Ra-DPO:** The DPO (H) and Ra-DPO (H) points are located near each other in both plots, indicating similar performance.

* **SACPO and RSCPO (H->S) in Plot (a):** These algorithms show points spread over harmlessness from roughly 0.7 to 0.9, at helpfulness of about 0.7 to 0.75.

* **SACPO and RSCPO (P) in Plot (b):** These algorithms show a cluster of points with high harmlessness and helpfulness values.

### Interpretation

The plots compare the harmlessness and helpfulness of different models. Plot (a) shows the performance of SACPO and RSCPO when trained using a specific method (H->S), while plot (b) shows the performance when trained using a different method (P). The results suggest that the training method significantly impacts the performance of SACPO and RSCPO. Specifically, the (P) method leads to much higher harmlessness and helpfulness compared to the (H->S) method. The Safe RLHF variants remain consistent across both plots, suggesting their performance is independent of the SACPO/RSCPO training variations. The DPO and Ra-DPO models show similar performance in terms of harmlessness and helpfulness. The gray shaded region highlights the area where models perform poorly in both harmlessness and helpfulness.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Model Performance Comparison on Helpfulness vs. Harmlessness

### Overview

The image displays two side-by-side scatter plots, labeled (a) and (b), comparing the performance of various AI alignment methods. The plots measure models on two axes: "helpfulness" (x-axis) and "harmlessness" (y-axis). A legend at the top center defines the methods and their corresponding markers. A gray shaded region in the bottom-left quadrant of both plots indicates a zone of lower performance on both metrics.

### Components/Axes

* **X-Axis:** Labeled "helpfulness". Scale ranges from 0.3 to 1.0, with major tick marks at 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Y-Axis:** Labeled "harmlessness". Scale ranges from 0.4 to 1.0, with major tick marks at 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Legend (Top Center):** Defines eight methods:

* `☆ SFT` (Gray Star)

* `✖ Safe RLHF` (Purple X)

* `● DPO` (Blue Circle)

* `● Ra-DPO` (Orange Circle)

* `▶ SACPO (H → S)` (Blue Right-Pointing Triangle)

* `◀ RSCPO (H → S)` (Orange Left-Pointing Triangle)

* `■ SACPO (P)` (Green Square)

* `■ RSCPO (P)` (Red Square)

* **Gray Shaded Region:** Covers the area where helpfulness ≤ 0.5 and harmlessness ≤ 0.5. A dashed line marks the boundary at helpfulness=0.5 and harmlessness=0.5.

* **Baseline Points:** A gray star labeled `SFT` is positioned at approximately (0.5, 0.5), at the corner of the shaded region.
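The shaded-region rule described above can be written as a small predicate. This is a minimal sketch, assuming the zone is exactly helpfulness ≤ 0.5 and harmlessness ≤ 0.5 with the boundary counted as inside; the function name `in_gray_region` and the `threshold` parameter are illustrative and do not come from the figure.

```python
def in_gray_region(helpfulness: float, harmlessness: float,
                   threshold: float = 0.5) -> bool:
    """Return True if a point lies in the shaded low-performance zone.

    Assumption: the zone is helpfulness <= threshold AND harmlessness <= threshold,
    and points on the dashed boundary count as inside.
    """
    return helpfulness <= threshold and harmlessness <= threshold


# Approximate positions taken from the figure description.
sft = (0.5, 0.5)            # baseline, on the corner of the zone
safe_rlhf_v2 = (0.35, 0.79)  # low helpfulness, but harmlessness above 0.5

print(in_gray_region(*sft))
print(in_gray_region(*safe_rlhf_v2))
```

Because both conditions must hold, a point with low helpfulness but harmlessness above 0.5 is not in the zone.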

### Detailed Analysis

**Subplot (a):**

* **Safe RLHF (Purple X):** Three versions are plotted.

* `v1.0`: Positioned at approximately (0.58, 0.70).

* `v2.0`: Positioned at approximately (0.35, 0.79).

* `v3.0`: Positioned at approximately (0.55, 0.78).

* **DPO (H) (Blue Circle):** Positioned at approximately (0.76, 0.51).

* **Ra-DPO (H) (Orange Circle):** Positioned at approximately (0.80, 0.52).

* **SACPO (H → S) (Blue Triangles):** Points are labeled with numerical values (likely hyperparameters).

* Point labeled `0.1`: Positioned at (0.60, 0.68).

* Point labeled `0.05`: Positioned at (0.70, 0.83).

* Point labeled `0.025`: Positioned at (0.78, 0.88).

* Point labeled `0.01`: Positioned at (0.76, 0.90).

* **RSCPO (H → S) (Orange Triangles):** Points are labeled with numerical values.

* Point labeled `0.1`: Positioned at (0.68, 0.70).

* Point labeled `0.05`: Positioned at (0.78, 0.84).

* Point labeled `0.025`: Positioned at (0.75, 0.87).

* Point labeled `0.01`: Positioned at (0.73, 0.90).

**Subplot (b):**

* **Safe RLHF (Purple X):** Same three versions as in (a), with identical positions.

* **DPO (H) (Blue Circle):** Same position as in (a).

* **Ra-DPO (H) (Orange Circle):** Same position as in (a).

* **SACPO (P) (Green Squares):** Points are labeled with numerical values.

* Point labeled `0.25`: Positioned at (0.75, 0.60).

* Point labeled `0.5`: Positioned at (0.73, 0.69).

* Point labeled `0.75`: Positioned at (0.72, 0.75).

* Point labeled `0.9`: Positioned at (0.70, 0.90).

* Point labeled `0.95`: Positioned at (0.75, 0.91).

* **RSCPO (P) (Red Squares):** Points are labeled with numerical values.

* Point labeled `0.25`: Positioned at (0.82, 0.69).

* Point labeled `0.5`: Positioned at (0.85, 0.80).

* Point labeled `0.75`: Positioned at (0.87, 0.86).

* Point labeled `0.9`: Positioned at (0.85, 0.89).

* Point labeled `0.95`: Positioned at (0.84, 0.90).
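The labeled points listed above can be collected into a small table for comparison. The sketch below transcribes the approximate (helpfulness, harmlessness) readings and computes per-method means; the dictionary layout and the `summarize` helper are assumptions made for illustration, and the numbers are only as accurate as the readings themselves.

```python
from statistics import mean

# {method: {point label: (helpfulness, harmlessness)}}, transcribed from the
# approximate positions listed above. The layout is an illustrative assumption.
POINTS = {
    "SACPO (H->S)": {0.1: (0.60, 0.68), 0.05: (0.70, 0.83),
                     0.025: (0.78, 0.88), 0.01: (0.76, 0.90)},
    "RSCPO (H->S)": {0.1: (0.68, 0.70), 0.05: (0.78, 0.84),
                     0.025: (0.75, 0.87), 0.01: (0.73, 0.90)},
    "SACPO (P)": {0.25: (0.75, 0.60), 0.5: (0.73, 0.69), 0.75: (0.72, 0.75),
                  0.9: (0.70, 0.90), 0.95: (0.75, 0.91)},
    "RSCPO (P)": {0.25: (0.82, 0.69), 0.5: (0.85, 0.80), 0.75: (0.87, 0.86),
                  0.9: (0.85, 0.89), 0.95: (0.84, 0.90)},
}


def summarize(points):
    """Return {method: (mean helpfulness, mean harmlessness)}, rounded to 3 places."""
    summary = {}
    for method, by_label in points.items():
        xs = [xy[0] for xy in by_label.values()]
        ys = [xy[1] for xy in by_label.values()]
        summary[method] = (round(mean(xs), 3), round(mean(ys), 3))
    return summary


SUMMARY = summarize(POINTS)
for method, (h, s) in SUMMARY.items():
    print(f"{method:13s} mean helpfulness={h:.3f} mean harmlessness={s:.3f}")
```

On these readings, RSCPO (P) has the highest mean helpfulness of the four groups, while the two SACPO variants are close to each other.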

### Key Observations

1. **Performance Clustering:** Methods cluster into distinct regions. Safe RLHF variants are in the mid-harmlessness, lower-helpfulness area. DPO and Ra-DPO are in the high-helpfulness, low-harmlessness area. The SACPO and RSCPO methods (both H→S and P variants) occupy the high-harmlessness region, with helpfulness varying based on the variant and hyperparameter.

2. **Hyperparameter Trend:** For both SACPO and RSCPO, the numerical labels (likely a hyperparameter such as a penalty weight) track harmlessness monotonically. In the H→S variants, harmlessness rises as the label decreases from 0.1 to 0.01; in the P variants, it rises as the label increases from 0.25 to 0.95. Helpfulness does not follow a consistent direction: it rises and then falls slightly for the H→S variants and stays within a narrow band for the P variants.

3. **Variant Comparison:** The `RSCPO (P)` points in subplot (b) reach clearly higher helpfulness (0.82 to 0.87) than the `RSCPO (H → S)` points in subplot (a) (0.68 to 0.78) at similar harmlessness levels. For SACPO the two variants overlap (0.70 to 0.75 for `(P)` versus 0.60 to 0.78 for `(H → S)`). The `RSCPO (P)` (red squares) points are the furthest to the top-right, indicating the best combined performance.

4. **Baseline:** The SFT model sits at the threshold of the low-performance gray zone (0.5, 0.5). All other methods shown improve upon this baseline in at least one dimension.
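The hyperparameter trend in observation 2 can be checked directly against the approximate readings from the Detailed Analysis. This is a sketch under the assumption that those readings are accurate; the helper names are illustrative.

```python
# {method: {point label: harmlessness}}, from the approximate positions above.
HARMLESSNESS = {
    "SACPO (H->S)": {0.1: 0.68, 0.05: 0.83, 0.025: 0.88, 0.01: 0.90},
    "RSCPO (H->S)": {0.1: 0.70, 0.05: 0.84, 0.025: 0.87, 0.01: 0.90},
    "SACPO (P)": {0.25: 0.60, 0.5: 0.69, 0.75: 0.75, 0.9: 0.90, 0.95: 0.91},
    "RSCPO (P)": {0.25: 0.69, 0.5: 0.80, 0.75: 0.86, 0.9: 0.89, 0.95: 0.90},
}


def trend(by_label):
    """Classify harmlessness as a function of the label, sorted ascending."""
    values = [by_label[label] for label in sorted(by_label)]
    pairs = list(zip(values, values[1:]))
    if all(a < b for a, b in pairs):
        return "increasing"
    if all(a > b for a, b in pairs):
        return "decreasing"
    return "mixed"


TRENDS = {method: trend(by_label) for method, by_label in HARMLESSNESS.items()}
for method, direction in TRENDS.items():
    print(f"{method:13s} harmlessness is {direction} in the label")
```

A "decreasing" result for the H→S variants means harmlessness goes up as the label gets smaller, which matches the direction described in the observation.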

### Interpretation

This visualization demonstrates the trade-off and potential synergy between helpfulness and harmlessness in AI alignment techniques. The data suggests that the **P variants of SACPO and RSCPO are the most effective** at simultaneously maximizing both metrics, pushing the Pareto frontier toward the ideal top-right corner (1.0, 1.0).
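The Pareto-frontier claim can be examined with a simple dominance check over the approximate readings in the Detailed Analysis. The sketch below treats a point as dominated when another point is at least as good on both axes and strictly better on one; the point names and the `pareto_front` helper are illustrative, and the result depends entirely on the accuracy of the readings.

```python
# (name, helpfulness, harmlessness), from the approximate positions above.
ALL_POINTS = [
    ("SFT", 0.50, 0.50),
    ("Safe RLHF v1.0", 0.58, 0.70), ("Safe RLHF v2.0", 0.35, 0.79),
    ("Safe RLHF v3.0", 0.55, 0.78),
    ("DPO (H)", 0.76, 0.51), ("Ra-DPO (H)", 0.80, 0.52),
    ("SACPO (H->S) 0.1", 0.60, 0.68), ("SACPO (H->S) 0.05", 0.70, 0.83),
    ("SACPO (H->S) 0.025", 0.78, 0.88), ("SACPO (H->S) 0.01", 0.76, 0.90),
    ("RSCPO (H->S) 0.1", 0.68, 0.70), ("RSCPO (H->S) 0.05", 0.78, 0.84),
    ("RSCPO (H->S) 0.025", 0.75, 0.87), ("RSCPO (H->S) 0.01", 0.73, 0.90),
    ("SACPO (P) 0.25", 0.75, 0.60), ("SACPO (P) 0.5", 0.73, 0.69),
    ("SACPO (P) 0.75", 0.72, 0.75), ("SACPO (P) 0.9", 0.70, 0.90),
    ("SACPO (P) 0.95", 0.75, 0.91),
    ("RSCPO (P) 0.25", 0.82, 0.69), ("RSCPO (P) 0.5", 0.85, 0.80),
    ("RSCPO (P) 0.75", 0.87, 0.86), ("RSCPO (P) 0.9", 0.85, 0.89),
    ("RSCPO (P) 0.95", 0.84, 0.90),
]


def pareto_front(points):
    """Return names of points not dominated on (helpfulness, harmlessness)."""
    front = []
    for name, x, y in points:
        dominated = any(
            ox >= x and oy >= y and (ox > x or oy > y)
            for _, ox, oy in points
        )
        if not dominated:
            front.append(name)
    return front


FRONT = pareto_front(ALL_POINTS)
print(FRONT)
```

With these readings, every non-dominated point belongs to a P variant, which is consistent with the interpretation above.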

The clear trend with the hyperparameter labels indicates these methods offer a **tunable knob** to navigate the helpfulness-harmlessness trade-off. Lower values in the H→S variants and higher values in the P variants appear to prioritize harmlessness without severely sacrificing helpfulness.

The positioning of Safe RLHF suggests it achieves moderate harmlessness but at a significant cost to helpfulness. Conversely, standard DPO and Ra-DPO achieve high helpfulness but remain near the harmlessness baseline. The SACPO/RSCPO methods, particularly the P variants, appear to resolve this tension more effectively, representing a significant advancement in balancing these two critical and often competing objectives for AI systems. The gray zone serves as a visual reminder of the undesirable state (low on both metrics) that these alignment techniques aim to move models away from.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Helpfulness vs. Harmlessness (Two Subplots)

### Overview

The image contains two scatter plots (subplots a and b) comparing **helpfulness** (x-axis) and **harmlessness** (y-axis) for various model configurations. Each subplot includes data points labeled with model names, versions, and numerical values. The plots use distinct colors and markers to differentiate categories, with shaded regions indicating thresholds or regions of interest.

---

### Components/Axes

- **X-axis (Helpfulness)**: Ranges from 0.3 to 1.0 in both subplots.

- **Y-axis (Harmlessness)**: Ranges from 0.4 to 1.0 in both subplots.

- **Legend**:

- **SFT**: Star symbol (gray).

- **DPO**: Blue circle.

- **SACPO (H→S)**: Blue triangle.

- **SACPO (P)**: Green square.

- **Safe RLHF**: Purple cross (v1.0, v2.0, v3.0).

- **Ra-DPO (H)**: Orange circle.

- **RSCPO (H→S)**: Red triangle.

- **RSCPO (P)**: Red square.

- **Shaded Regions**:

- Subplot (a): Gray area from x=0.3 to 0.5.

- Subplot (b): Gray area from x=0.3 to 0.6.

---

### Detailed Analysis

#### Subplot (a)

- **Safe RLHF (Purple Crosses)**:

- v1.0: (0.6, 0.7)

- v2.0: (0.55, 0.8)

- v3.0: (0.65, 0.75)

- **SACPO (H→S) (Blue Triangles)**:

- 0.05: (0.65, 0.9)

- 0.01: (0.7, 0.85)

- 0.025: (0.75, 0.8)

- **RSCPO (H→S) (Red Triangles)**:

- 0.1: (0.6, 0.7)

- 0.05: (0.65, 0.8)

- 0.025: (0.7, 0.75)

- **DPO (H) (Blue Circle)**: (0.7, 0.5)

- **Ra-DPO (H) (Orange Circle)**: (0.8, 0.5)

- **SFT (Star)**: (0.5, 0.5)

#### Subplot (b)

- **SACPO (P) (Green Squares)**:

- 0.95: (0.65, 0.95)

- 0.99: (0.7, 0.99)

- 0.90: (0.75, 0.90)

- 0.75: (0.6, 0.75)

- 0.5: (0.55, 0.5)

- **RSCPO (P) (Red Squares)**:

- 0.5: (0.65, 0.5)

- 0.75: (0.7, 0.75)

- 0.95: (0.75, 0.95)

- 0.25: (0.6, 0.25)

- **DPO (H) (Blue Circle)**: (0.7, 0.5)

- **Ra-DPO (H) (Orange Circle)**: (0.8, 0.5)

- **SFT (Star)**: (0.5, 0.5)
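Coordinates transcribed from a figure can be sanity-checked against the stated axis ranges. The sketch below applies two checks to the subplot (b) readings listed above: whether each point lies inside the axes (helpfulness 0.3 to 1.0, harmlessness 0.4 to 1.0), and whether a point's harmlessness coincides with its label, which may indicate that the label was mistaken for a coordinate. The helper names are illustrative.

```python
X_RANGE = (0.3, 1.0)  # helpfulness axis, as stated under Components/Axes
Y_RANGE = (0.4, 1.0)  # harmlessness axis

# (name, label or None, helpfulness, harmlessness) for subplot (b), as listed above.
READINGS = [
    ("SACPO (P)", 0.95, 0.65, 0.95), ("SACPO (P)", 0.99, 0.70, 0.99),
    ("SACPO (P)", 0.90, 0.75, 0.90), ("SACPO (P)", 0.75, 0.60, 0.75),
    ("SACPO (P)", 0.5, 0.55, 0.5),
    ("RSCPO (P)", 0.5, 0.65, 0.5), ("RSCPO (P)", 0.75, 0.70, 0.75),
    ("RSCPO (P)", 0.95, 0.75, 0.95), ("RSCPO (P)", 0.25, 0.60, 0.25),
    ("DPO (H)", None, 0.7, 0.5), ("Ra-DPO (H)", None, 0.8, 0.5),
    ("SFT", None, 0.5, 0.5),
]


def outside_axes(readings):
    """Return (name, label) for points that fall outside the stated axis ranges."""
    return [
        (name, label) for name, label, x, y in readings
        if not (X_RANGE[0] <= x <= X_RANGE[1] and Y_RANGE[0] <= y <= Y_RANGE[1])
    ]


def label_equals_y(readings):
    """Return (name, label) for labeled points whose harmlessness equals the label."""
    return [(name, label) for name, label, _, y in readings
            if label is not None and y == label]


OUTSIDE = outside_axes(READINGS)
SUSPECT = label_equals_y(READINGS)
print("outside axes:", OUTSIDE)
print("harmlessness equals label:", len(SUSPECT), "points")
```

A point flagged by either check is worth re-reading from the original figure before drawing conclusions from it.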

---

### Key Observations

1. **Safe RLHF Models**:

- Clustered on the left side of the plot at moderate-to-high harmlessness (helpfulness < 0.7, harmlessness ≥ 0.7).

- v2.0 has the highest harmlessness (0.8) but lower helpfulness (0.55).

2. **SACPO (H→S) and RSCPO (H→S)**:

- SACPO (H→S) (blue triangles) shows harmlessness of 0.8–0.9 at helpfulness of 0.65–0.75.

- RSCPO (H→S) (red triangles) sits slightly lower on both axes in the listed coordinates: helpfulness 0.6–0.7 and harmlessness 0.7–0.8.

3. **DPO (H) and Ra-DPO (H)**:

- Located at (0.7, 0.5) and (0.8, 0.5), respectively, indicating relatively high helpfulness and low harmlessness.

- Ra-DPO (H) has higher helpfulness but same harmlessness as DPO (H).

4. **SACPO (P) and RSCPO (P)**:

- SACPO (P) (green squares) spans harmlessness of 0.5–0.99 at helpfulness of 0.55–0.75 in the listed coordinates.

- RSCPO (P) (red squares) spans a wider and lower harmlessness range (0.25–0.95) at similar helpfulness (0.6–0.75).

5. **Shaded Regions**:

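- Subplot (a): the shaded band covers helpfulness below 0.5. SFT at (0.5, 0.5) sits on its boundary, and the listed Safe RLHF points (helpfulness 0.55–0.65) fall outside it.

- Subplot (b): the shaded band covers helpfulness below 0.6. Of the listed points, SFT at (0.5, 0.5) and the SACPO (P) point at (0.55, 0.5) fall inside it.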

---

### Interpretation

- **Trade-offs Between Helpfulness and Harmlessness**:

- Safe RLHF models prioritize harmlessness over helpfulness, placing them toward the upper left of the plot.

- SACPO (H→S) and RSCPO (H→S) represent intermediate trade-offs. In the coordinates listed above, SACPO (H→S) reaches higher harmlessness, while RSCPO (H→S) sits slightly lower on both axes.

- SACPO (P) and RSCPO (P) in subplot (b) show that SACPO (P) maintains high harmlessness even at high helpfulness, while RSCPO (P) sacrifices harmlessness for helpfulness.

- **Outliers**:

- Ra-DPO (H) (0.8, 0.5) and DPO (H) (0.7, 0.5) are outliers in subplot (a), showing high helpfulness but low harmlessness.

- SACPO (P) (0.7, 0.99) in subplot (b) is an outlier with near-perfect harmlessness.

- **Implications**:

- Models like SACPO (P) and Safe RLHF v2.0 may be preferable for safety-critical applications.

- RSCPO (P) and Ra-DPO (H) might be better for scenarios requiring higher helpfulness, even at the cost of increased risk.

- The shaded regions suggest thresholds for acceptable performance, with some models (e.g., SACPO (P)) exceeding these limits.
