TECHNICAL ASSET FINGERPRINT

985b63992da1162f1add441a

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Accuracy vs. Interactions for Different Models

### Overview

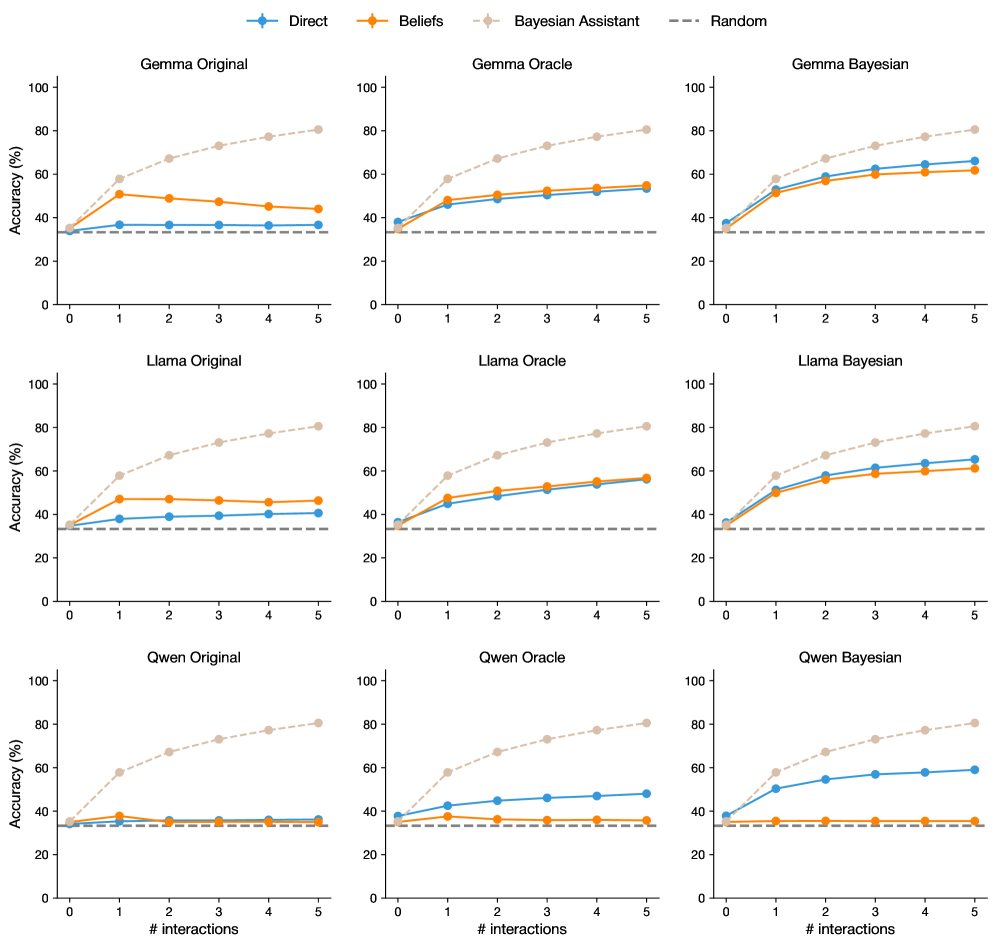

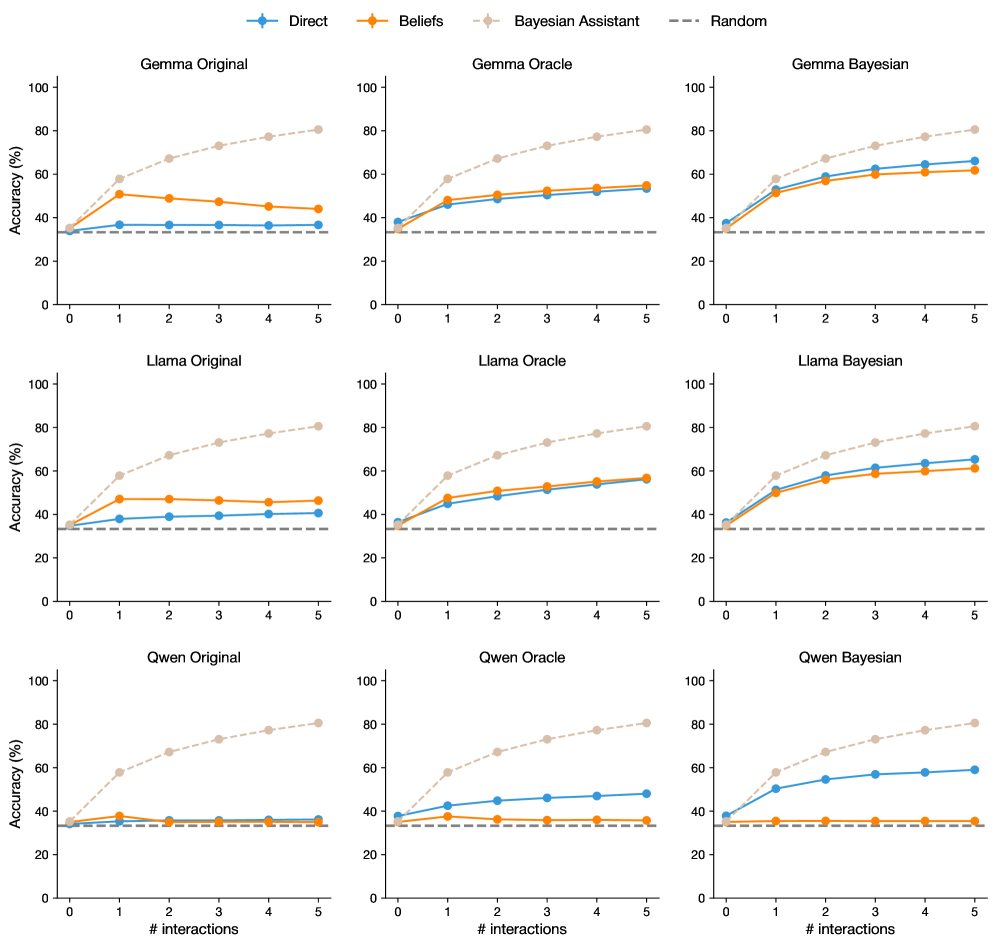

The image presents a 3x3 grid of line charts comparing the accuracy of three language models (Gemma, Llama, Qwen) under four interaction strategies (Direct, Beliefs, Bayesian Assistant, Random). Each row corresponds to a language model and each column to a configuration (Original, Oracle, Bayesian); the strategies appear as separate lines within each chart. The x-axis shows the number of interactions, and the y-axis shows accuracy as a percentage.

### Components/Axes

* **Title:** Accuracy vs. Interactions for Different Models

* **X-axis:** "# interactions" with markers at 0, 1, 2, 3, 4, and 5.

* **Y-axis:** "Accuracy (%)" with markers at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located at the top of the image.

    * **Direct:** Blue line with circular markers.

    * **Beliefs:** Orange line with circular markers.

    * **Bayesian Assistant:** Light brown dashed line with circular markers.

    * **Random:** Gray dashed line.

* **Chart Titles (Top Row):**

    * Gemma Original (Top-Left)

    * Gemma Oracle (Top-Center)

    * Gemma Bayesian (Top-Right)

* **Chart Titles (Middle Row):**

    * Llama Original (Middle-Left)

    * Llama Oracle (Middle-Center)

    * Llama Bayesian (Middle-Right)

* **Chart Titles (Bottom Row):**

    * Qwen Original (Bottom-Left)

    * Qwen Oracle (Bottom-Center)

    * Qwen Bayesian (Bottom-Right)

### Detailed Analysis

**Gemma Models:**

* **Gemma Original:**

* Direct (Blue): Starts at approximately 35% and decreases slightly to around 33% at 5 interactions.

* Beliefs (Orange): Starts at approximately 45% and decreases slightly to around 42% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Gemma Oracle:**

* Direct (Blue): Starts at approximately 35% and increases to approximately 65% at 5 interactions.

* Beliefs (Orange): Starts at approximately 45% and increases to approximately 55% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Gemma Bayesian:**

* Direct (Blue): Starts at approximately 45% and increases to approximately 65% at 5 interactions.

* Beliefs (Orange): Starts at approximately 50% and increases to approximately 60% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 45% and increases to approximately 75% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

**Llama Models:**

* **Llama Original:**

* Direct (Blue): Starts at approximately 35% and increases slightly to around 40% at 5 interactions.

* Beliefs (Orange): Starts at approximately 40% and increases slightly to around 45% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 85% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Llama Oracle:**

* Direct (Blue): Starts at approximately 35% and increases to approximately 60% at 5 interactions.

* Beliefs (Orange): Starts at approximately 45% and increases to approximately 55% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Llama Bayesian:**

* Direct (Blue): Starts at approximately 45% and increases to approximately 65% at 5 interactions.

* Beliefs (Orange): Starts at approximately 50% and increases to approximately 70% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 45% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

**Qwen Models:**

* **Qwen Original:**

* Direct (Blue): Starts at approximately 35% and increases slightly to around 38% at 5 interactions.

* Beliefs (Orange): Starts at approximately 38% and decreases slightly to around 36% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Qwen Oracle:**

* Direct (Blue): Starts at approximately 35% and increases to around 48% at 5 interactions.

* Beliefs (Orange): Starts at approximately 38% and increases slightly to around 40% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 35% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.

* **Qwen Bayesian:**

* Direct (Blue): Starts at approximately 45% and increases slightly to around 50% at 5 interactions.

* Beliefs (Orange): Starts at approximately 40% and decreases slightly to around 38% at 5 interactions.

* Bayesian Assistant (Light Brown): Starts at approximately 45% and increases to approximately 80% at 5 interactions.

* Random (Gray): Constant at approximately 33%.
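The readings above can be compared more easily as gains (end minus start). The following is a minimal sketch, assuming the approximate start and end values from the bullets are taken at face value; the `READINGS` layout and the `gains` helper are illustrative names, not part of the original figure or analysis.

```python
# Approximate (start, end) accuracies in percent, transcribed from the
# bullets above. The Random line is omitted because it is flat at ~33%.
READINGS = {
    "Gemma Original": {"Direct": (35, 33), "Beliefs": (45, 42), "Bayesian Assistant": (35, 80)},
    "Gemma Oracle": {"Direct": (35, 65), "Beliefs": (45, 55), "Bayesian Assistant": (35, 80)},
    "Gemma Bayesian": {"Direct": (45, 65), "Beliefs": (50, 60), "Bayesian Assistant": (45, 75)},
    "Llama Original": {"Direct": (35, 40), "Beliefs": (40, 45), "Bayesian Assistant": (35, 85)},
    "Llama Oracle": {"Direct": (35, 60), "Beliefs": (45, 55), "Bayesian Assistant": (35, 80)},
    "Llama Bayesian": {"Direct": (45, 65), "Beliefs": (50, 70), "Bayesian Assistant": (45, 80)},
    "Qwen Original": {"Direct": (35, 38), "Beliefs": (38, 36), "Bayesian Assistant": (35, 80)},
    "Qwen Oracle": {"Direct": (35, 48), "Beliefs": (38, 40), "Bayesian Assistant": (35, 80)},
    "Qwen Bayesian": {"Direct": (45, 50), "Beliefs": (40, 38), "Bayesian Assistant": (45, 80)},
}


def gains(readings):
    """Return end-minus-start accuracy (percentage points) per panel and series."""
    return {
        panel: {name: end - start for name, (start, end) in series.items()}
        for panel, series in readings.items()
    }


GAINS = gains(READINGS)
for panel, panel_gains in GAINS.items():
    best = max(panel_gains, key=panel_gains.get)
    print(f"{panel}: {panel_gains} -> largest gain: {best}")
```

Because the inputs are eyeballed chart readings, the resulting gains are only as precise as those estimates.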

### Key Observations

* The "Bayesian Assistant" strategy (light brown dashed line) consistently shows the most significant improvement in accuracy across all models (Gemma, Llama, Qwen) and configurations (Original, Oracle, Bayesian) as the number of interactions increases.

* The "Random" strategy (gray dashed line) remains constant across all models and configurations, serving as a baseline.

* The "Direct" and "Beliefs" strategies (blue and orange lines, respectively) show varying degrees of improvement or even slight decreases in accuracy depending on the model and configuration.

* The "Oracle" and "Bayesian" configurations generally result in higher accuracy compared to the "Original" configuration for the "Direct" and "Beliefs" strategies.

### Interpretation

The data suggests that the "Bayesian Assistant" interaction strategy is highly effective in improving the accuracy of language models as the number of interactions increases. This indicates that incorporating Bayesian methods into the interaction process can significantly enhance the model's performance. The "Random" strategy serves as a control, demonstrating the baseline accuracy without any specific interaction strategy. The varying performance of the "Direct" and "Beliefs" strategies highlights the importance of choosing an appropriate interaction strategy based on the specific language model and configuration. The "Oracle" and "Bayesian" configurations appear to provide additional information or context that aids the model in improving its accuracy, particularly when using the "Direct" and "Beliefs" strategies.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Charts: Accuracy vs. Interactions for Different Models & Belief Systems

### Overview

This image presents nine separate line charts, arranged in a 3x3 grid. Each chart displays the relationship between the number of interactions and accuracy (%) for different language models (Gemma, Llama, Qwen) under different belief systems (Original, Oracle, Bayesian) and compared to a random baseline. The x-axis represents the number of interactions, ranging from 0 to 5. The y-axis represents accuracy, ranging from 0% to 100%. Each chart includes four lines representing "Direct", "Beliefs", "Bayesian Assistant", and "Random" approaches.

### Components/Axes

* **X-axis Label (all charts):** "# interactions"

* **Y-axis Label (all charts):** "Accuracy (%)"

* **Legend (top-right of each chart):**

    * Blue Solid Line: "Direct"

    * Orange Dashed Line: "Beliefs"

    * Gray Solid Line: "Bayesian Assistant"

    * Black Dashed-Dotted Line: "Random"

* **Chart Titles (top-center of each chart):**

    * Gemma Original

    * Gemma Oracle

    * Gemma Bayesian

    * Llama Original

    * Llama Oracle

    * Llama Bayesian

    * Qwen Original

    * Qwen Oracle

    * Qwen Bayesian

### Detailed Analysis

**Gemma Original:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 50% at 5 interactions.

* Bayesian Assistant: Starts at approximately 35%, increases to approximately 45% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Gemma Oracle:**

* Direct: Starts at approximately 50%, increases to approximately 70% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 55% at 5 interactions.

* Bayesian Assistant: Starts at approximately 40%, remains around 45% throughout all interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Gemma Bayesian:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 55% at 5 interactions.

* Bayesian Assistant: Starts at approximately 40%, increases to approximately 50% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Llama Original:**

* Direct: Starts at approximately 40%, increases to approximately 60% at 5 interactions.

* Beliefs: Starts at approximately 35%, increases to approximately 45% at 5 interactions.

* Bayesian Assistant: Starts at approximately 30%, increases to approximately 40% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Llama Oracle:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 55% at 5 interactions.

* Bayesian Assistant: Starts at approximately 35%, remains around 40% throughout all interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Llama Bayesian:**

* Direct: Starts at approximately 40%, increases to approximately 60% at 5 interactions.

* Beliefs: Starts at approximately 35%, increases to approximately 50% at 5 interactions.

* Bayesian Assistant: Starts at approximately 30%, increases to approximately 40% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Qwen Original:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 50% at 5 interactions.

* Bayesian Assistant: Starts at approximately 35%, increases to approximately 45% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Qwen Oracle:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 55% at 5 interactions.

* Bayesian Assistant: Starts at approximately 35%, remains around 40% throughout all interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

**Qwen Bayesian:**

* Direct: Starts at approximately 45%, increases to approximately 65% at 5 interactions.

* Beliefs: Starts at approximately 40%, increases to approximately 55% at 5 interactions.

* Bayesian Assistant: Starts at approximately 35%, increases to approximately 45% at 5 interactions.

* Random: Remains relatively flat around 30% throughout all interactions.

### Key Observations

* The "Direct" approach consistently outperforms the other approaches across all models and belief systems.

* The "Random" approach consistently shows the lowest accuracy, serving as a baseline.

* The "Beliefs" and "Bayesian Assistant" approaches generally show similar trends, with moderate improvements in accuracy as the number of interactions increases.

* The "Oracle" belief system generally leads to slightly higher accuracy compared to the "Original" belief system for the "Direct" approach.

* The "Bayesian Assistant" approach plateaus for the "Oracle" belief system in all models, staying at roughly 40-45% across interactions instead of improving.

### Interpretation

The data suggests that a "Direct" approach to interacting with these language models yields the highest accuracy, and that accuracy generally increases with more interactions. The "Oracle" belief system seems to provide a slight advantage in accuracy, particularly when combined with the "Direct" approach. The "Beliefs" and "Bayesian Assistant" approaches offer some improvement over the "Random" baseline, but are significantly less effective than the "Direct" approach. The plateauing of the "Bayesian Assistant" approach with the "Oracle" belief system could indicate a limit to the benefits of Bayesian reasoning after a certain level of information is gathered. The consistent low performance of the "Random" approach highlights the importance of structured interaction for achieving higher accuracy. The similar performance across Gemma, Llama, and Qwen suggests that the underlying model architecture has less impact on the effectiveness of these interaction strategies than the interaction strategy itself.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Accuracy vs. Interactions for Various Models and Methods

### Overview

The image displays a 3x3 grid of line charts. Each chart plots "Accuracy (%)" on the y-axis against "# interactions" (from 0 to 5) on the x-axis. The charts compare the performance of three different methods ("Direct", "Beliefs", "Bayesian Assistant") across three different models ("Gemma", "Llama", "Qwen") under three different conditions ("Original", "Oracle", "Bayesian"). A "Random" baseline is also shown.

### Components/Axes

* **Legend (Top Center):** Located above the grid of charts.

    * `Direct`: Blue line with diamond markers.

    * `Beliefs`: Orange line with circle markers.

    * `Bayesian Assistant`: Beige/light brown dashed line with diamond markers.

    * `Random`: Gray dashed line (no markers).

* **Chart Titles (Top of each subplot):** The 3x3 grid is organized as follows:

    * **Top Row (Gemma):** "Gemma Original", "Gemma Oracle", "Gemma Bayesian"

    * **Middle Row (Llama):** "Llama Original", "Llama Oracle", "Llama Bayesian"

    * **Bottom Row (Qwen):** "Qwen Original", "Qwen Oracle", "Qwen Bayesian"

* **Axes:**

    * **Y-axis (All charts):** Label: "Accuracy (%)". Scale: 0 to 100, with major ticks at 0, 20, 40, 60, 80, 100.

    * **X-axis (All charts):** Label: "# interactions". Scale: 0 to 5, with integer ticks at 0, 1, 2, 3, 4, 5. The label is explicitly shown only on the bottom row of charts.

### Detailed Analysis

**General Trend Verification:** Across all charts, the "Bayesian Assistant" (beige dashed line) shows a strong, consistent upward trend, starting near the random baseline and rising steeply. The "Direct" (blue) and "Beliefs" (orange) lines show more varied trends depending on the model and condition. The "Random" baseline (gray dashed) is flat at approximately 33% accuracy.

**Chart-by-Chart Data Points (Approximate Values):**

1. **Gemma Original:**

* Bayesian Assistant: Starts ~35% (0), rises to ~80% (5).

* Beliefs: Starts ~35% (0), peaks at ~50% (1), then slowly declines to ~45% (5).

* Direct: Starts ~35% (0), rises slightly to ~38% (1), then plateaus near ~38% (5).

* Random: Flat at ~33%.

2. **Gemma Oracle:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises steadily to ~55% (5).

* Direct: Starts ~38% (0), rises steadily to ~53% (5).

* Random: Flat at ~33%.

3. **Gemma Bayesian:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises to ~62% (5).

* Direct: Starts ~38% (0), rises to ~65% (5).

* Random: Flat at ~33%.

4. **Llama Original:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises to ~48% (1), then plateaus near ~47% (5).

* Direct: Starts ~35% (0), rises slowly to ~40% (5).

* Random: Flat at ~33%.

5. **Llama Oracle:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises steadily to ~57% (5).

* Direct: Starts ~35% (0), rises steadily to ~56% (5).

* Random: Flat at ~33%.

6. **Llama Bayesian:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises to ~62% (5).

* Direct: Starts ~35% (0), rises to ~65% (5).

* Random: Flat at ~33%.

7. **Qwen Original:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), rises to ~38% (1), then declines to ~35% (5).

* Direct: Starts ~35% (0), rises very slightly to ~37% (5).

* Random: Flat at ~33%.

8. **Qwen Oracle:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), remains flat near ~36% (5).

* Direct: Starts ~38% (0), rises steadily to ~48% (5).

* Random: Flat at ~33%.

9. **Qwen Bayesian:**

* Bayesian Assistant: Similar steep rise to ~80% (5).

* Beliefs: Starts ~35% (0), remains flat near ~36% (5).

* Direct: Starts ~38% (0), rises to ~59% (5).

* Random: Flat at ~33%.

### Key Observations

1. **Dominant Performance:** The "Bayesian Assistant" method consistently and significantly outperforms all other methods across every model and condition, achieving ~80% accuracy by 5 interactions.

2. **Condition Impact:** The "Original" condition appears most challenging for the "Direct" and "Beliefs" methods, often leading to performance plateaus or declines after an initial rise. The "Oracle" and "Bayesian" conditions generally allow these methods to improve with more interactions.

3. **Model-Specific Behavior:** The "Qwen" model shows a distinct pattern where the "Beliefs" method performs poorly (near random) in the "Oracle" and "Bayesian" conditions, while the "Direct" method improves. In contrast, for "Gemma" and "Llama", "Beliefs" and "Direct" often perform similarly in the "Oracle" and "Bayesian" conditions.

4. **Baseline:** The "Random" baseline is consistently at ~33%, suggesting a 3-class classification problem where random guessing yields one-third accuracy.
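The link between a ~33% baseline and three answer options can be made concrete with a short calculation. This is a minimal sketch, assuming guesses are uniform over equally likely options; the function name `chance_accuracy` is illustrative and the number of options in the underlying task is not confirmed by the figure.

```python
def chance_accuracy(num_options: int) -> float:
    """Expected accuracy (%) of a uniform random guess over equally likely options."""
    if num_options < 1:
        raise ValueError("num_options must be at least 1")
    return 100.0 / num_options


# Compare a few candidate option counts with the ~33% line read from the charts.
for k in (2, 3, 4):
    print(k, round(chance_accuracy(k), 1))
```

Of these candidates, only three options gives a value close to the observed baseline, which is consistent with (but does not prove) the 3-class reading.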

### Interpretation

This data strongly suggests that the "Bayesian Assistant" method is highly effective at leveraging multiple interactions to improve accuracy, regardless of the underlying model (Gemma, Llama, Qwen) or the testing condition. Its steep, consistent learning curve indicates a robust mechanism for incorporating feedback.

The performance of the "Direct" and "Beliefs" methods is highly sensitive to the condition. The "Original" condition likely represents a standard or zero-shot setup where these methods struggle to improve beyond a low ceiling. The "Oracle" and "Bayesian" conditions probably provide additional information or a more favorable evaluation framework, enabling gradual learning. The stark underperformance of "Beliefs" with Qwen in these conditions is a notable anomaly, suggesting a potential incompatibility between that method and the Qwen model's architecture or output format in those specific settings.

Overall, the charts demonstrate a clear hierarchy: Bayesian Assistant >> (Direct ≈ Beliefs) > Random, with the gap between the top method and the others being substantial. The key takeaway is the superior sample efficiency and effectiveness of the Bayesian Assistant approach for this task.
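The stated hierarchy can be checked against the approximate final values (at 5 interactions) listed in the chart-by-chart data. The sketch below assumes those readings are accurate; `FINAL`, `RANDOM_BASELINE`, and `margin_over_runner_up` are illustrative names introduced here.

```python
# Approximate accuracy (%) at 5 interactions, in the order
# (Direct, Beliefs, Bayesian Assistant), from the chart-by-chart list.
FINAL = {
    "Gemma Original": (38, 45, 80),
    "Gemma Oracle": (53, 55, 80),
    "Gemma Bayesian": (65, 62, 80),
    "Llama Original": (40, 47, 80),
    "Llama Oracle": (56, 57, 80),
    "Llama Bayesian": (65, 62, 80),
    "Qwen Original": (37, 35, 80),
    "Qwen Oracle": (48, 36, 80),
    "Qwen Bayesian": (59, 36, 80),
}
RANDOM_BASELINE = 33  # flat Random line, in percent


def margin_over_runner_up(direct, beliefs, assistant):
    """Gap in percentage points between Bayesian Assistant and the better of the other two."""
    return assistant - max(direct, beliefs)


MARGINS = {panel: margin_over_runner_up(*vals) for panel, vals in FINAL.items()}
HIERARCHY_HOLDS = all(
    a > max(d, b) and min(d, b) > RANDOM_BASELINE for d, b, a in FINAL.values()
)

for panel, gap in MARGINS.items():
    print(f"{panel}: Bayesian Assistant leads by {gap} points")
print("Hierarchy holds in every panel:", HIERARCHY_HOLDS)
```

On these readings the lead is smallest in the "Bayesian" condition for Gemma and Llama and largest for "Qwen Original", though the exact gaps inherit the uncertainty of the chart estimates.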

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Model Performance Across Interaction Counts

### Overview

The image contains a 3x3 grid of line graphs comparing the accuracy of three AI models (Gemma, Llama, Qwen) across three configurations (Original, Oracle, Bayesian) over 0 to 5 interactions. Four series are plotted in each graph: Direct, Beliefs, Bayesian Assistant, and Random. The series follow distinct trends as the number of interactions increases.

### Components/Axes

- **X-axis**: "# interactions" (0–5, integer steps)

- **Y-axis**: "Accuracy (%)" (0–100, linear scale)

- **Legends**: Positioned at the top of each graph with four categories:

    - **Direct** (blue circles)

    - **Beliefs** (orange squares)

    - **Bayesian Assistant** (beige diamonds)

    - **Random** (gray dashed line)

- **Graph Titles**: Format = "[Model] [Configuration]" (e.g., "Gemma Original")

### Detailed Analysis

#### Gemma Original

- **Direct**: Starts at ~30%, rises to ~60% by interaction 5

- **Beliefs**: Peaks at ~50% (interaction 2), drops to ~45% by interaction 5

- **Bayesian Assistant**: Steady climb from ~30% to ~80%

- **Random**: Flat at ~30%

#### Gemma Oracle

- **Direct**: ~30% → ~50%

- **Beliefs**: Peaks at ~55% (interaction 3), drops to ~50%

- **Bayesian Assistant**: ~30% → ~75%

- **Random**: Flat at ~30%

#### Gemma Bayesian

- **Direct**: ~30% → ~65%

- **Beliefs**: Peaks at ~60% (interaction 3), drops to ~55%

- **Bayesian Assistant**: ~30% → ~85%

- **Random**: Flat at ~30%

#### Llama Original

- **Direct**: ~30% → ~50%

- **Beliefs**: Peaks at ~50% (interaction 2), drops to ~45%

- **Bayesian Assistant**: ~30% → ~80%

- **Random**: Flat at ~30%

#### Llama Oracle

- **Direct**: ~30% → ~55%

- **Beliefs**: Peaks at ~55% (interaction 3), drops to ~50%

- **Bayesian Assistant**: ~30% → ~75%

- **Random**: Flat at ~30%

#### Llama Bayesian

- **Direct**: ~30% → ~60%

- **Beliefs**: Peaks at ~60% (interaction 3), drops to ~55%

- **Bayesian Assistant**: ~30% → ~85%

- **Random**: Flat at ~30%

#### Qwen Original

- **Direct**: ~30% → ~50%

- **Beliefs**: Peaks at ~40% (interaction 1), drops to ~35%

- **Bayesian Assistant**: ~30% → ~80%

- **Random**: Flat at ~30%

#### Qwen Oracle

- **Direct**: ~30% → ~50%

- **Beliefs**: Peaks at ~40% (interaction 1), drops to ~35%

- **Bayesian Assistant**: ~30% → ~75%

- **Random**: Flat at ~30%

#### Qwen Bayesian

- **Direct**: ~30% → ~60%

- **Beliefs**: Peaks at ~50% (interaction 3), drops to ~45%

- **Bayesian Assistant**: ~30% → ~85%

- **Random**: Flat at ~30%

### Key Observations

1. **Bayesian Assistant Dominance**: Consistently achieves highest accuracy across all models and configurations, suggesting Bayesian methods significantly enhance performance.

2. **Direct vs. Beliefs**: Direct improves from ~30% to 50–65% by interaction 5, while Beliefs peaks between interactions 1 and 3 and then drops by about 5 points, indicating diminishing returns for Beliefs.

3. **Random Baseline**: Random stays flat at ~30%, and every other series ends above it by interaction 5.

4. **Model-Specific Trends**:

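- Qwen has the lowest Beliefs accuracy, peaking at ~40% as early as interaction 1 in the Original and Oracle configurations

- Llama and Gemma reach higher Beliefs peaks (~50–60%) before a similar drop of about 5 points

- Bayesian configurations end roughly 5–15 percentage points above Original/Oracle for the same model and method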
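One way to gauge how much the Bayesian configuration adds is to difference the final values listed in the Detailed Analysis. This is a minimal sketch, assuming those approximate endpoint readings; `FINAL`, `METHODS`, and `bayesian_gaps` are illustrative names, and Random is left out because it is flat.

```python
METHODS = ("Direct", "Beliefs", "Bayesian Assistant")

# Approximate final accuracy (%) per model and configuration, in METHODS order.
FINAL = {
    "Gemma": {"Original": (60, 45, 80), "Oracle": (50, 50, 75), "Bayesian": (65, 55, 85)},
    "Llama": {"Original": (50, 45, 80), "Oracle": (55, 50, 75), "Bayesian": (60, 55, 85)},
    "Qwen": {"Original": (50, 35, 80), "Oracle": (50, 35, 75), "Bayesian": (60, 45, 85)},
}


def bayesian_gaps(final):
    """List (model, other_config, method, gap) with gap = Bayesian minus other, in points."""
    gaps = []
    for model, configs in final.items():
        for other in ("Original", "Oracle"):
            for i, method in enumerate(METHODS):
                gap = configs["Bayesian"][i] - configs[other][i]
                gaps.append((model, other, method, gap))
    return gaps


GAPS = bayesian_gaps(FINAL)
gap_values = [gap for *_, gap in GAPS]
print("smallest gap:", min(gap_values), "largest gap:", max(gap_values))
```

The gaps are in absolute percentage points and depend entirely on the eyeballed endpoints, so they should be read as rough magnitudes rather than measured effects.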

### Interpretation

The data suggests the Bayesian Assistant provides the most reliable accuracy gains, plausibly through probabilistic reasoning and uncertainty quantification. Direct accuracy keeps improving through interaction 5, whereas Beliefs peaks early and then slips by about 5 points, so additional interactions do not always help that method. The flat Random baseline (~30%) marks chance-level accuracy; the final accuracies listed above sit roughly 5 to 55 percentage points above it. The Bayesian Assistant's accuracy rises with interaction count, suggesting it effectively uses the additional context. The variation in Beliefs accuracy across models may reflect differences in how each architecture handles uncertainty representation.
