## Diagram: AI Reasoning Process - Decision Making, Programming, and Reasoning

### Overview

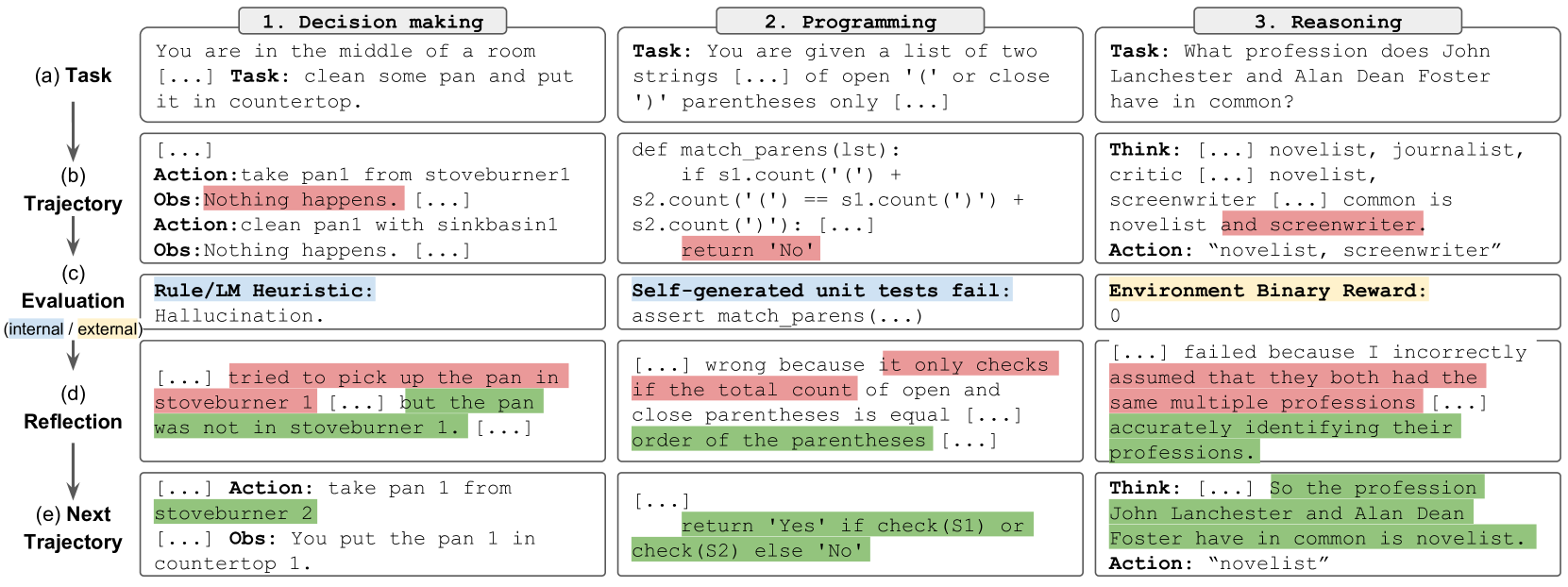

The diagram compares an AI agent's reasoning process across three tasks: Decision Making, Programming, and Reasoning. Each task is broken down into five stages: (a) Task, (b) Trajectory, (c) Evaluation, (d) Reflection, and (e) Next Trajectory. For each stage, the diagram shows the agent's thoughts, actions, observations, and self-evaluation.

### Components/Axes

The diagram is structured into three columns, each representing a different task. Each column is further divided into five rows, corresponding to the stages (a) through (e). The stages are labeled along the left side of the diagram. The diagram uses text boxes to represent the AI's internal monologue and actions. There are no explicit axes or scales.

### Detailed Analysis

**1. Decision Making (Left Column)**

* **(a) Task:** "You are in the middle of a room … Task: clean some pan and put it in countertop."

* **(b) Trajectory:** "Action: take pan from stoveburner1 Obs: Nothing happens. … Action: clean pan with sinkbasin1 Obs: Nothing happens."

* **(c) Evaluation:** "Rule/LM Heuristic: (internal) hallucination."

* **(d) Reflection:** "… tried to pick up the pan in stoveburner 1 … but the pan was not in stoveburner 1 …"

* **(e) Next Trajectory:** "… Action: take pan 1 from stoveburner 2 … Obs: You put the pan 1 in countertop 1."
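
The diagram does not spell out the rule behind the evaluation in this column. As a minimal sketch, assuming the heuristic simply flags a trajectory in which several actions return the observation "Nothing happens.", such a check might look like the following. The function names and the threshold are hypothetical and are not taken from the diagram; only the two steps visible in the diagram are used, and the elided steps are left out.

```python
from typing import List, Tuple

Step = Tuple[str, str]  # (action, observation)

NO_OP_OBSERVATION = "Nothing happens."  # observation text shown in the diagram


def count_no_op_steps(trajectory: List[Step]) -> int:
    """Count the steps whose observation says the action had no effect."""
    return sum(1 for _action, obs in trajectory if obs.strip() == NO_OP_OBSERVATION)


def looks_stuck(trajectory: List[Step], max_no_ops: int = 1) -> bool:
    """Hypothetical rule: flag the trajectory if more than max_no_ops actions had no effect."""
    return count_no_op_steps(trajectory) > max_no_ops


# The two steps visible in stage (b) of the diagram.
first_attempt = [
    ("take pan from stoveburner1", "Nothing happens."),
    ("clean pan with sinkbasin1", "Nothing happens."),
]
print(looks_stuck(first_attempt))
```

A rule like this only detects that the attempt went wrong. Working out why (the pan was not in stoveburner 1) is left to the reflection stage.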

**2. Programming (Middle Column)**

* **(a) Task:** "You are given a list of two strings … of open '(' or close ')' parentheses only …"

* **(b) Trajectory:** `def match_parens(lst): if s1.count('(') + s2.count('(') == s1.count(')') + s2.count(')'): return 'No' `

* **(c) Evaluation:** "Self-generated unit tests fail: assert match_parens(...) "

* **(d) Reflection:** "… wrong because it only checks if the total count of open and close parentheses is equal … order of the parentheses …"

* **(e) Next Trajectory:** `return 'Yes' if check(S1) or check(S2) else 'No'`
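
The reflection above can be made concrete with a short sketch. The diagram elides most of the task statement and of the corrected code, so the following rests on assumptions: that the task asks whether the two strings can be concatenated in some order to form a balanced string, that `S1` and `S2` are the two possible concatenations, and that `check` tests whether a string is balanced. Only the final `return` line is taken from the diagram.

```python
from typing import List


def check(s: str) -> bool:
    """Return True if s is a balanced parentheses string (assumed helper)."""
    depth = 0
    for ch in s:
        depth += 1 if ch == "(" else -1
        if depth < 0:  # a ')' appeared before its matching '('
            return False
    return depth == 0


def match_parens(lst: List[str]) -> str:
    # Assumption: S1 and S2 are the two concatenation orders.
    S1 = lst[0] + lst[1]
    S2 = lst[1] + lst[0]
    return 'Yes' if check(S1) or check(S2) else 'No'


# Equal counts of '(' and ')' but no valid order: a count-only check
# would wrongly accept this input.
print(match_parens([")(", ")("]))
```

The input `[")(", ")("]` has two open and two close parentheses, so a check based only on counts cannot reject it, while the order-aware `check` does.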

**3. Reasoning (Right Column)**

* **(a) Task:** "What profession does John Lanchester and Alan Dean Foster have in common?"

* **(b) Trajectory:** "Think: … novelist, journalist, critic … novelist, screenwriter … common is novelist and screenwriter. Action: “novelist, screenwriter”"

* **(c) Evaluation:** "Environment Binary Reward: 0"

* **(d) Reflection:** "… failed because I incorrectly assumed that they both had the same multiple professions … accurately identifying their professions."

* **(e) Next Trajectory:** "Think: … So the profession John Lanchester and Alan Dean Foster have in common is novelist. Action: “novelist”"

### Key Observations

* The "Evaluation" stage consistently highlights failures or shortcomings in the AI's initial approach.

* The "Reflection" stage demonstrates the AI's ability to identify the errors in its reasoning or code.

* The "Next Trajectory" stage shows the AI attempting to correct its approach based on the reflection.

* The Decision Making task involves actions and observations in a simulated household environment, the Programming task involves code generation and testing, and the Reasoning task involves answering a factual question by comparing what is known about two people.

* The "Environment Binary Reward" in the Reasoning task is 0, indicating that the first attempt failed.

### Interpretation

This diagram illustrates a simplified model of how an AI agent might solve problems through a cycle of task definition, action or code generation, evaluation, reflection, and refinement. In each column the agent does not arrive at the correct answer directly: it makes an attempt, receives a failure signal, states what went wrong, and adjusts its strategy on the next attempt.

The three columns show the same cycle applied to different domains, each with its own evaluation signal. In the Decision Making task, the "hallucination" label marks a case where the agent acted on a wrong belief about the environment: it tried to take the pan from stoveburner 1 when the pan was not there. In the Programming task, the agent generates code, runs self-generated unit tests, and revises the code after the tests fail. In the Reasoning task, the agent identifies what two people have in common but first overreaches by answering with more than one profession; the binary reward of 0 gives it a minimal signal that the answer was wrong.

Taken together, the diagram suggests that the agent's performance improves over repeated cycles of evaluation and reflection.
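
The five-stage cycle can be sketched as a short loop. This is an illustrative outline under stated assumptions, not the implementation behind the diagram: `run_with_reflection`, `act`, `evaluate`, `reflect`, and `max_trials` are hypothetical names, and the toy functions stand in for a model using the text of the Reasoning column. Treating "novelist" as the accepted answer is also an assumption, since the diagram does not show the reward for the second attempt.

```python
from typing import Callable, List, Tuple


def run_with_reflection(
    task: str,
    act: Callable[[str, List[str]], str],
    evaluate: Callable[[str, str], bool],
    reflect: Callable[[str, str], str],
    max_trials: int = 3,
) -> Tuple[str, bool, List[str]]:
    """Attempt the task, and after each failure store a reflection for the next attempt."""
    reflections: List[str] = []
    trajectory = ""
    for _ in range(max_trials):
        trajectory = act(task, reflections)                 # (b) / (e) trajectory
        if evaluate(task, trajectory):                      # (c) evaluation
            return trajectory, True, reflections
        reflections.append(reflect(task, trajectory))       # (d) reflection
    return trajectory, False, reflections


# Toy stand-ins built from the Reasoning column of the diagram.
def toy_act(task: str, reflections: List[str]) -> str:
    return "novelist" if reflections else "novelist, screenwriter"


def toy_evaluate(task: str, answer: str) -> bool:
    return answer == "novelist"  # assumed accepted answer


def toy_reflect(task: str, answer: str) -> str:
    return "I incorrectly assumed that they both had the same multiple professions."


question = "What profession does John Lanchester and Alan Dean Foster have in common?"
answer, success, notes = run_with_reflection(question, toy_act, toy_evaluate, toy_reflect)
print(answer, success, len(notes))
```

Passing the accumulated reflections back into `act` is the design choice that matters here: the evaluation only says that an attempt failed, while the stored reflection carries the reason into the next trajectory.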