## Diagram: Automated Model Card Population Process Flow

### Overview

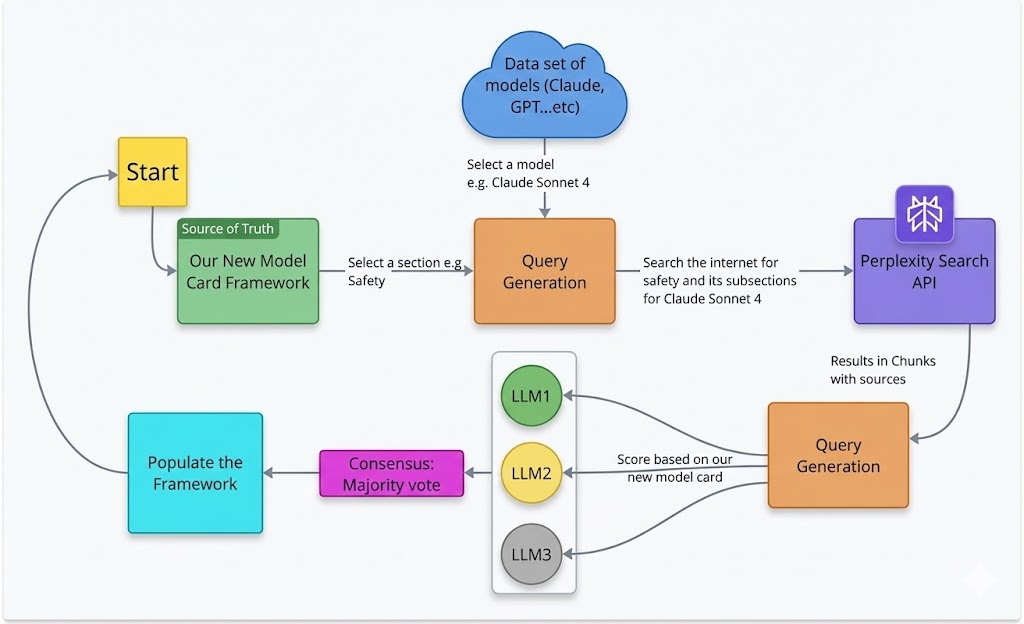

The flowchart shows a multi-step, automated process for populating a "New Model Card Framework" from external data sources, with several Large Language Models (LLMs) scoring the retrieved material. The process queries the internet, passes the results to three LLMs, and uses a majority vote over their outputs to finalize the framework's content.

### Components

The diagram is composed of colored rectangular and cloud-shaped nodes connected by directional arrows with descriptive labels. The components are spatially arranged in a roughly circular flow.

**Nodes (Components):**

1. **Start** (Yellow box, top-left): The initiation point of the process.

2. **Our New Model Card Framework** (Green box, left-center): Labeled as the "Source of Truth." This is the target document being populated.

3. **Query Generation** (Orange box, center): The first of two nodes with this label. It receives input from the framework and the model dataset.

4. **Data set of models (Claude, GPT...etc)** (Blue cloud, top-center): Represents an external repository of AI models.

5. **Perplexity Search API** (Purple box, right-center): An external search service used to gather information.

6. **Query Generation** (Orange box, bottom-right): The second node with this label. It processes search results.

7. **LLM1, LLM2, LLM3** (Green, Yellow, Grey circles, vertically stacked in a white container, bottom-center): Three distinct Large Language Models used for analysis and scoring.

8. **Consensus: Majority vote** (Pink box, bottom-left): The decision-making step based on LLM outputs.

9. **Populate the Framework** (Cyan box, bottom-left): The final action step that updates the source document.

**Flow Connections (Arrows & Labels):**

* **Start** → **Our New Model Card Framework**

* **Our New Model Card Framework** → **Query Generation (1st)**: Label: "Select a section e.g. Safety"

* **Data set of models** → **Query Generation (1st)**: Label: "Select a model e.g. Claude Sonnet 4"

* **Query Generation (1st)** → **Perplexity Search API**: Label: "Search the internet for safety and its subsections for Claude Sonnet 4"

* **Perplexity Search API** → **Query Generation (2nd)**: Label: "Results in Chunks with sources"

* **Query Generation (2nd)** → **LLM1, LLM2, LLM3**: Three separate arrows, one to each LLM. Label on the arrow to LLM2: "Score based on our new model card"

* **LLM1, LLM2, LLM3** → **Consensus: Majority vote**: Three separate arrows converging on the consensus node.

* **Consensus: Majority vote** → **Populate the Framework**

* **Populate the Framework** → **Start**: A long, curved arrow completing the loop, indicating the process can be iterative.
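
The connections listed above can be written down as a small directed graph. The sketch below is only an illustration: the node identifiers (`query_generation_1`, `populate_framework`, and so on) are names chosen here, not labels taken from the diagram. The traversal confirms that following the arrows from Start leads back to Start, and that the model dataset feeds the loop without being part of it.

```python
# Adjacency list for the arrows described above.
# Node keys are hypothetical identifiers; the diagram only gives display labels.
EDGES = {
    "start": ["framework"],
    "framework": ["query_generation_1"],
    "model_dataset": ["query_generation_1"],
    "query_generation_1": ["perplexity_search_api"],
    "perplexity_search_api": ["query_generation_2"],
    "query_generation_2": ["llm1", "llm2", "llm3"],
    "llm1": ["consensus"],
    "llm2": ["consensus"],
    "llm3": ["consensus"],
    "consensus": ["populate_framework"],
    "populate_framework": ["start"],
}


def reachable(source: str) -> set[str]:
    """Return every node that can be reached by following arrows from source."""
    seen: set[str] = set()
    stack = list(EDGES.get(source, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(EDGES.get(node, []))
    return seen


def has_loop_through(node: str) -> bool:
    """True if following arrows from node eventually leads back to node."""
    return node in reachable(node)
```

Calling `has_loop_through("start")` checks the feedback arrow, while `has_loop_through("model_dataset")` shows that the dataset is an input only.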

### Detailed Analysis / Content Details

The process flow is sequential with a feedback loop:

1. **Initiation & Scoping:** The process begins at "Start." It uses the existing "New Model Card Framework" as the source of truth. A specific section (e.g., "Safety") is selected from this framework.

2. **Model Selection & Query Formulation:** Concurrently, a specific AI model (e.g., "Claude Sonnet 4") is selected from an external dataset. These two inputs (section and model name) are fed into the first "Query Generation" step.

3. **Information Retrieval:** The generated query is sent to the "Perplexity Search API" to search the internet for relevant information (e.g., "safety and its subsections for Claude Sonnet 4").

4. **Result Processing:** The search API returns "Results in Chunks with sources." These results are processed by the second "Query Generation" node.

5. **Multi-Model Analysis & Scoring:** The processed information is sent to three separate LLMs (LLM1, LLM2, LLM3). The label "Score based on our new model card" appears only on the arrow to LLM2 but presumably applies to all three, meaning each LLM evaluates the retrieved information against the framework's criteria.

6. **Consensus Decision:** The scores or outputs from the three LLMs are aggregated in the "Consensus: Majority vote" step to determine the final, agreed-upon content.

7. **Action & Iteration:** The consensus result is used to "Populate the Framework." An arrow loops back from this step to "Start," indicating the entire process can be repeated for another section or model.
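
The seven steps above can be sketched as a loop with pluggable stages. This is a minimal sketch under stated assumptions, not the authors' implementation: every function name, type alias, and the shape of a result chunk is hypothetical, and the search call, the LLM scorers, and the consensus rule are passed in as plain callables rather than tied to any real API. The diagram does not say what the second "Query Generation" node does to the search results, so here the chunks are handed to the scorers unchanged.

```python
from typing import Callable, Dict, Iterable, List, Sequence, Tuple

# All names and types below are illustrative assumptions, not part of the diagram.
Chunk = Dict[str, str]                        # e.g. {"text": ..., "source": ...}
SearchFn = Callable[[str], List[Chunk]]       # stand-in for the search API call
ScoreFn = Callable[[str, List[Chunk]], str]   # stand-in for one LLM scorer
ConsensusFn = Callable[[Sequence[str]], str]  # stand-in for the voting rule


def build_query(section: str, model: str) -> str:
    """First "Query Generation" step: combine the chosen section and model."""
    return f"{section} and its subsections for {model}"


def run_pass(
    section: str,
    model: str,
    search: SearchFn,
    scorers: Sequence[ScoreFn],
    consensus: ConsensusFn,
) -> str:
    """One trip around the loop for a single (section, model) pair."""
    query = build_query(section, model)
    chunks = search(query)                                  # results in chunks with sources
    votes = [score(section, chunks) for score in scorers]   # one output per LLM
    return consensus(votes)                                 # aggregate the outputs


def populate_framework(
    sections: Iterable[str],
    models: Iterable[str],
    search: SearchFn,
    scorers: Sequence[ScoreFn],
    consensus: ConsensusFn,
) -> Dict[Tuple[str, str], str]:
    """Repeat the pass for every model and section, mirroring the feedback arrow."""
    sections = list(sections)
    card: Dict[Tuple[str, str], str] = {}
    for model in models:
        for section in sections:
            card[(model, section)] = run_pass(section, model, search, scorers, consensus)
    return card
```

Injecting the stages as callables keeps the loop testable with stub functions before any external service is wired in.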

### Key Observations

* **Redundancy for Accuracy:** The use of three separate LLMs (LLM1, LLM2, LLM3) and a majority vote consensus mechanism is a key design choice to improve reliability and reduce bias or error from any single model.

* **External Data Dependency:** The process critically depends on two external services: a "Data set of models" for selection and the "Perplexity Search API" for real-time information retrieval.

* **Iterative Design:** The feedback loop from "Populate the Framework" back to "Start" suggests this is designed as a continuous or batch process to fill out the entire model card section by section.

* **Clear Role Separation:** There is a clear separation between query formulation (orange boxes), information retrieval (purple box), analysis (LLM circles), and decision-making (pink box).
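
The majority-vote idea in the first observation can be made concrete with a few lines. The sketch below assumes each LLM returns a discrete label (the diagram does not specify the output format), and it adds one design choice of its own: when no label wins more than half of the votes, it returns `None` so the item can be flagged instead of being filled in arbitrarily.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_vote(votes: Sequence[str]) -> Optional[str]:
    """Return the label chosen by more than half of the voters, else None."""
    if not votes:
        return None
    label, count = Counter(votes).most_common(1)[0]
    # Strict majority: with three voters, at least two must agree.
    return label if count > len(votes) / 2 else None
```

With three voters, two matching outputs are enough to decide, and three different outputs produce no decision.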

### Interpretation

This diagram outlines an automated pipeline for creating or updating model cards, the technical documentation for AI systems. The problem it addresses is the manual, time-consuming effort required to gather and synthesize accurate, up-to-date information about AI model capabilities and safety from disparate internet sources.

The process can be read, loosely, as a **Peircean investigative approach**:

1. **Abduction (Hypothesis Generation):** The "Query Generation" steps form a hypothesis about what information is needed (e.g., "What are Claude Sonnet 4's safety subsections?").

2. **Deduction (Prediction):** If that information exists, it should be findable in public sources; the search API call acts on this prediction by retrieving candidate evidence from the internet.

3. **Induction (Testing & Generalization):** The three LLMs act as independent testers, evaluating the retrieved evidence against the model card framework. The majority vote is an inductive step to generalize the most reliable conclusion from multiple observations.

The **underlying principle** is using consensus among multiple LLMs to produce sourced content that is more trustworthy than any single model's output, thereby automating a complex research and synthesis task. The "Source of Truth" label on the framework implies it is the canonical structure, while the external search and LLM analysis supply the content that fills it. One input sits outside the main loop: the model dataset feeds directly into query generation, which shows that each pass is specific to one model rather than generic.