## Diagram: AI Pipeline with Security and Functional Trade-offs

### Overview

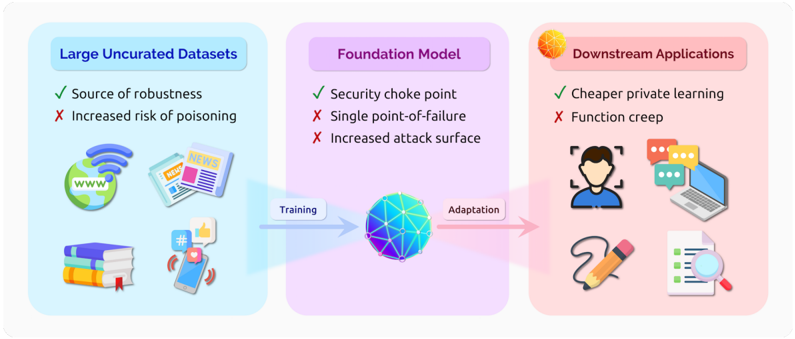

The image is a conceptual diagram illustrating a three-stage pipeline in modern AI systems, highlighting the benefits and risks at each stage. It flows from left to right, showing how data is used to train a central foundation model, which is then adapted for various downstream applications. The diagram uses color-coded sections, icons, and bullet points to convey its message.

### Components/Axes

The diagram is divided into three main rectangular sections, arranged horizontally from left to right:

1. **Left Section (Light Blue Background):**

   * **Header:** "Large Uncurated Datasets"

   * **Content:** Two bullet points and four illustrative icons.

   * **Icons:** A globe with "www" and Wi-Fi signal, a stack of newspapers/books, a stack of books, and a smartphone with social media icons (like, comment, share).

2. **Center Section (Light Purple Background):**

   * **Header:** "Foundation Model"

   * **Content:** Three bullet points and a central icon.

   * **Icon:** A stylized, multi-faceted geometric sphere (representing a complex AI model).

3. **Right Section (Light Pink Background):**

   * **Header:** "Downstream Applications"

   * **Content:** Two bullet points and four illustrative icons.

   * **Icons:** A person's silhouette within a facial recognition frame, a laptop with chat bubbles, a pencil drawing a line, and a document with a magnifying glass.

**Flow Arrows:**

* A blue arrow labeled **"Training"** points from the "Large Uncurated Datasets" section to the "Foundation Model" icon.

* A pink arrow labeled **"Adaptation"** points from the "Foundation Model" icon to the "Downstream Applications" section.

### Detailed Analysis

**Textual Content Transcription:**

* **Large Uncurated Datasets:**

  * ✅ Source of robustness

  * ❌ Increased risk of poisoning

* **Foundation Model:**

  * ✅ Security choke point

  * ❌ Single point-of-failure

  * ❌ Increased attack surface

* **Downstream Applications:**

  * ✅ Cheaper private learning

  * ❌ Function creep

**Visual Relationships:**

The diagram lays out a sequential, left-to-right pipeline. The "Large Uncurated Datasets" are the input for "Training" the "Foundation Model." This model then undergoes "Adaptation" to produce various "Downstream Applications." The checkmarks (✅) denote advantages, while the crosses (❌) denote disadvantages or risks at each stage.

### Key Observations

1. **Asymmetric Risk-Benefit Profile:** Each stage lists exactly one benefit. The first and last stages list one risk each, while the foundation model stage lists two, making it the only stage where risks outnumber benefits.

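2. **Centralized Vulnerability:** The "Foundation Model" is labeled as both a "Security choke point" (a benefit, marked ✅) and a "Single point-of-failure" (a risk, marked ❌). This pairing suggests that the same centrality that lets defenses be concentrated in one place also means a compromise there can affect everything downstream.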

3. **Source of Risk vs. Source of Robustness:** The same element ("Large Uncurated Datasets") is framed as both the foundational strength ("Source of robustness") and a primary vulnerability ("Increased risk of poisoning").

4. **Application-Level Risk:** The final stage introduces the concept of "Function creep," suggesting that applications may evolve beyond their original intended use, posing ethical or security concerns.
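
The pipeline structure and the benefit/risk tallies described above can be captured in a small data model. The following is a minimal sketch for illustration only: the names `Stage`, `PIPELINE`, `TRANSITIONS`, `describe`, and `risk_heaviest` are hypothetical and do not appear in the diagram; only the stage names, arrow labels, and bullet labels are transcribed from it.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Stage:
    """One section of the diagram (hypothetical structure for illustration)."""
    name: str
    benefits: Tuple[str, ...]  # labels marked with a checkmark
    risks: Tuple[str, ...]     # labels marked with a cross


# Stages in left-to-right order, with labels transcribed from the diagram.
PIPELINE = (
    Stage("Large Uncurated Datasets",
          ("Source of robustness",),
          ("Increased risk of poisoning",)),
    Stage("Foundation Model",
          ("Security choke point",),
          ("Single point-of-failure", "Increased attack surface")),
    Stage("Downstream Applications",
          ("Cheaper private learning",),
          ("Function creep",)),
)

# Arrow labels connecting each stage to the next one.
TRANSITIONS = ("Training", "Adaptation")


def describe(pipeline=PIPELINE, transitions=TRANSITIONS) -> str:
    """Render the flow as a single line of text."""
    parts = [pipeline[0].name]
    for label, stage in zip(transitions, pipeline[1:]):
        parts.append(f"--{label}--> {stage.name}")
    return " ".join(parts)


def risk_heaviest(pipeline=PIPELINE) -> Stage:
    """Return the stage where risks most outnumber benefits."""
    return max(pipeline, key=lambda s: len(s.risks) - len(s.benefits))


if __name__ == "__main__":
    print(describe())
    for stage in PIPELINE:
        print(f"{stage.name}: {len(stage.benefits)} benefit(s), "
              f"{len(stage.risks)} risk(s)")
    print("Most risk-heavy stage:", risk_heaviest().name)
```

Encoding the labels this way makes the first observation checkable: the tally is one benefit per stage, with the foundation model the only stage carrying two risks.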

### Interpretation

This diagram provides a critical, security-focused analysis of the modern AI development paradigm centered on large foundation models. It argues that while this paradigm offers efficiency gains (e.g., "Cheaper private learning" via adaptation), it introduces systemic vulnerabilities.

The core message is one of **trade-offs and concentrated risk**. The pursuit of model robustness through massive, uncurated data inherently increases the risk of data poisoning. The resulting foundation model offers a single place to apply security controls, but it also presents an increased attack surface and becomes a single point of failure for all downstream applications. Finally, the ease of adapting this model can lead to applications expanding in unforeseen and potentially harmful ways ("Function creep").

The diagram serves as a conceptual warning for AI system architects and security professionals, highlighting that the benefits of scale and adaptability come with the cost of creating centralized, attractive targets for attack and introducing new categories of operational and ethical risk. It frames the AI pipeline not just as a technical workflow, but as a system with inherent security and governance challenges at every step.