## Diagram: AI System Architecture and Risks

### Overview

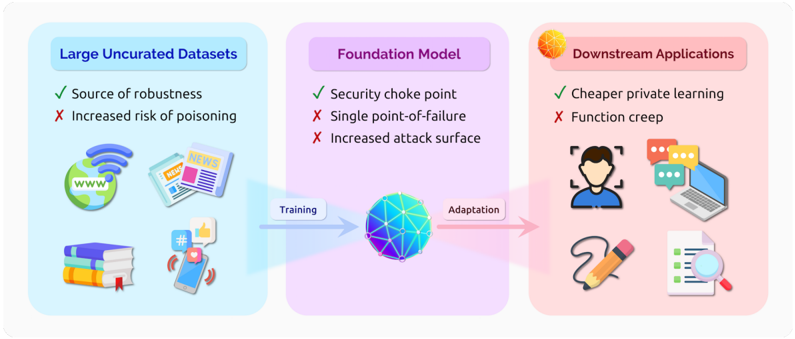

The diagram illustrates a three-stage pipeline for AI systems: **Large Uncurated Datasets** → **Foundation Model** → **Downstream Applications**. Each stage is illustrated with icons and annotated with pros (checkmarks) and cons (crosses), and labeled arrows (Training, Adaptation) connect the stages. The central Foundation Model acts as a security choke point but also as a single point of failure, while downstream applications trade cheaper private learning against the risk of function creep.

---

### Components

1. **Sections**:

   - **Large Uncurated Datasets** (Blue)

   - **Foundation Model** (Purple)

   - **Downstream Applications** (Pink)

2. **Textual Elements**:

   - **Pros/Cons**:

     - **Large Uncurated Datasets**:

       - ✅ "Source of robustness"

       - ❌ "Increased risk of poisoning"

     - **Foundation Model**:

       - ✅ "Security choke point"

       - ❌ "Single point-of-failure"

       - ❌ "Increased attack surface"

     - **Downstream Applications**:

       - ✅ "Cheaper private learning"

       - ❌ "Function creep"

3. **Icons**:

   - **Large Uncurated Datasets**: Globe (WWW), newspaper, smartphone with social media icons, stack of books.

   - **Foundation Model**: Abstract 3D network (neural network visualization).

   - **Downstream Applications**: Person with speech bubbles, laptop, pencil, document with magnifying glass.

4. **Flow Arrows**:

   - **Training**: Blue arrow from "Large Uncurated Datasets" to "Foundation Model".

   - **Adaptation**: Pink arrow from "Foundation Model" to "Downstream Applications".

---

### Detailed Analysis

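- **Large Uncurated Datasets**:

  - **Pros**: Robustness from diverse data sources (symbolized by global connectivity and varied media).

  - **Cons**: Increased risk of data poisoning (e.g., an attacker injecting malicious samples into scraped web data).

- **Foundation Model**:

  - **Pros**: A security choke point: because every application is adapted from the same model, defenses applied there (e.g., auditing or hardening) protect all downstream uses.

  - **Cons**: A single point of failure, since a compromised model affects every application built on it, and an increased attack surface, plausibly due to the model's scale and the many ways it is accessed and adapted.

- **Downstream Applications**:

  - **Pros**: Cheaper private learning: adapting a pretrained model (e.g., with differentially private fine-tuning) typically needs less sensitive data and computation than training privately from scratch.

  - **Cons**: "Function creep": the model or application being repurposed for uses beyond those originally intended, which raises both ethical and functional risks.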
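The diagram does not say how private learning is carried out. One common approach, assumed here purely for illustration, is DP-SGD (per-example gradient clipping plus Gaussian noise) applied only to a small classification head on top of frozen foundation-model features; training only a few parameters is one reason private adaptation can be cheaper than private training from scratch. The sketch below shows a single update step. The function names, the logistic-regression head, and the toy data are hypothetical, and privacy accounting (computing the resulting ε and δ) is omitted.

```python
import math
import random


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def clip(vec: list[float], max_norm: float) -> list[float]:
    # Scale a per-example gradient so its L2 norm is at most max_norm.
    norm = math.sqrt(sum(v * v for v in vec))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in vec]


def dp_sgd_step(weights, batch, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD step for a hypothetical logistic-regression head.

    `batch` holds (features, label) pairs; `features` are assumed to come
    from a frozen foundation-model encoder, and `label` is 0 or 1.
    """
    summed = [0.0] * len(weights)
    for features, label in batch:
        p = sigmoid(sum(w * x for w, x in zip(weights, features)))
        grad = [(p - label) * x for x in features]  # log-loss gradient
        for i, g in enumerate(clip(grad, max_norm)):
            summed[i] += g
    # Add Gaussian noise scaled to the clipping bound, then average.
    sigma = noise_multiplier * max_norm
    noisy = [(s + rng.gauss(0.0, sigma)) / len(batch) for s in summed]
    return [w - lr * g for w, g in zip(weights, noisy)]


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical private data: (frozen-encoder features, binary label).
    private_batch = [([0.2, 1.0, -0.5], 1), ([-0.3, 0.1, 0.9], 0)]
    weights = [0.0, 0.0, 0.0]
    for _ in range(100):
        weights = dp_sgd_step(weights, private_batch, lr=0.5,
                              max_norm=1.0, noise_multiplier=1.1, rng=rng)
    print(weights)
```

Because the noise scale is tied to the clipping bound `max_norm`, each example's influence on an update is bounded; a real deployment would also track the cumulative privacy loss across steps with a privacy accountant.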

---

### Key Observations

1. **Centralization Risks**: Because every downstream application is adapted from the same Foundation Model, the model is both a choke point for defenses and a single point of failure; a compromise there propagates to every application.

2. **Trade-offs in Applications**: Downstream applications gain cheaper private learning but are exposed to function creep.

3. **Data Quality Impact**: Uncurated datasets provide robustness but introduce poisoning risks that propagate through the model to all downstream applications.
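The centralization and cascade effects can be made concrete with a toy dependency graph. In this sketch, all node names are hypothetical and only loosely mirror the diagram's icons. `affected_by` finds everything downstream of a compromised node, and `choke_points` finds intermediate nodes whose removal cuts every data source off from every application. In this graph the same node, the foundation model, is both the choke point and a node whose compromise reaches every application.

```python
from collections import deque

# Hypothetical dependency graph mirroring the diagram: each key lists the
# artifacts derived from it (data -> model via training, model -> apps via
# adaptation). Names are illustrative only.
PIPELINE = {
    "web_pages": ["foundation_model"],
    "news_articles": ["foundation_model"],
    "social_media": ["foundation_model"],
    "books": ["foundation_model"],
    "foundation_model": ["chat_assistant", "writing_tool", "doc_search"],
    "chat_assistant": [],
    "writing_tool": [],
    "doc_search": [],
}
SOURCES = {"web_pages", "news_articles", "social_media", "books"}
APPLICATIONS = {"chat_assistant", "writing_tool", "doc_search"}


def affected_by(graph: dict[str, list[str]], compromised: str) -> set[str]:
    """Return every artifact downstream of `compromised` (breadth-first)."""
    seen: set[str] = set()
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def choke_points(graph, sources, apps):
    """Intermediate nodes whose removal disconnects all sources from all apps."""
    points = set()
    for node in graph:
        if node in sources or node in apps:
            continue
        # Drop the node and every edge pointing to it.
        pruned = {k: [c for c in v if c != node]
                  for k, v in graph.items() if k != node}
        reachable = set().union(*(affected_by(pruned, s) for s in sources))
        if not reachable & apps:
            points.add(node)
    return points


if __name__ == "__main__":
    # Poisoning one data source reaches every application.
    print(sorted(affected_by(PIPELINE, "social_media") & APPLICATIONS))
    # -> ['chat_assistant', 'doc_search', 'writing_tool']
    print(choke_points(PIPELINE, SOURCES, APPLICATIONS))
    # -> {'foundation_model'}
```

A real system would add edges that bypass the foundation model (for example, an application trained directly on a data source); `choke_points` would then return fewer nodes, which is exactly the trade-off between concentrating defenses and concentrating risk.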

---

### Interpretation

The diagram emphasizes the **interdependence** of AI components:

- **Data Quality → Model Integrity → Application Safety**: Poor data quality (poisoning) cascades through the pipeline, undermining model security and application reliability.

- **Centralization vs. Decentralization**: A centralized model offers a single place to concentrate defenses, but it also creates systemic risk. Downstream applications reduce costs by building on it, yet inherit any flaws it carries.

- **Ethical Implications**: "Function creep" in applications highlights the need for guardrails to prevent misuse, even when private learning reduces costs.
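One simple form of guardrail against function creep is purpose binding: each adapted application declares the purposes it was approved for and refuses anything else. This is a minimal sketch, not a complete safeguard; the class, method, and purpose names are hypothetical, and the check only helps if declared purposes are themselves reviewed and enforced outside the code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AdaptedApp:
    """Hypothetical downstream application bound to its approved purposes."""

    name: str
    declared_purposes: frozenset[str] = field(default_factory=frozenset)

    def handle(self, purpose: str, payload: str) -> str:
        # Refuse any use the application was not adapted and approved for.
        if purpose not in self.declared_purposes:
            raise PermissionError(
                f"{self.name}: purpose {purpose!r} was not declared"
            )
        return f"{self.name} handled {purpose}: {payload}"


if __name__ == "__main__":
    search = AdaptedApp("doc_search", frozenset({"document_retrieval"}))
    print(search.handle("document_retrieval", "quarterly report"))
    try:
        # An undeclared purpose, i.e. an attempt at function creep.
        search.handle("employee_monitoring", "badge logs")
    except PermissionError as err:
        print(err)
```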

This structure underscores the importance of balancing scalability, security, and ethics in AI development.