## Bar Chart: Project CodeNet Dataset Success Rates by GPT-4o Temperature

### Overview

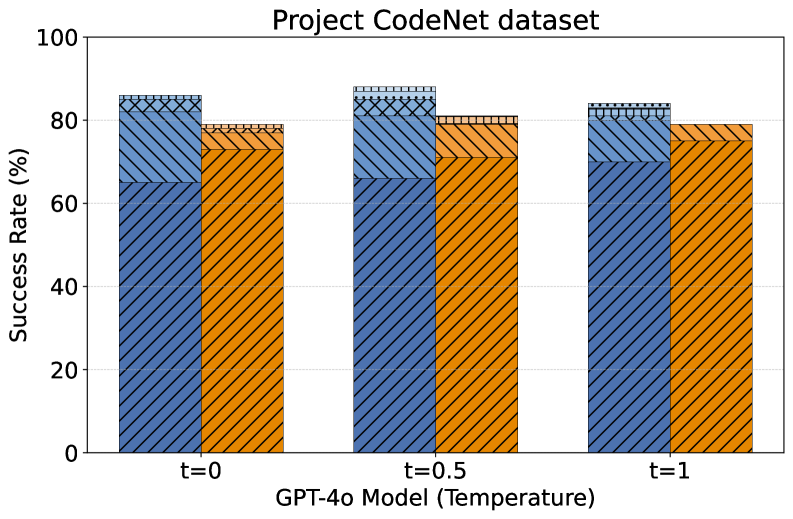

This grouped bar chart, titled "Project CodeNet dataset", shows the success rate (in percent) of GPT-4o at three temperature settings (t=0, t=0.5, t=1). Each temperature has two bars, distinguished by color and pattern, which represent two metrics or conditions that the chart does not name.

### Components/Axes

* **Title:** "Project CodeNet dataset" (centered at the top).

* **Y-Axis:**

    * **Label:** "Success Rate (%)"

    * **Scale:** Linear, from 0 to 100.

    * **Tick Marks:** 0, 20, 40, 60, 80, 100.

* **X-Axis:**

    * **Label:** "GPT-4o Model (Temperature)"

    * **Categories:** Three discrete temperature settings: "t=0", "t=0.5", "t=1".

* **Data Series (Bars):**

    * **Series 1 (Blue with diagonal stripes):** Positioned as the left bar in each group.

    * **Series 2 (Orange with cross-hatching):** Positioned as the right bar in each group.

* **Legend:** No explicit legend is present in the image. The two series are differentiated solely by color and pattern. The specific meaning of the blue vs. orange bars is not stated in the chart.

### Detailed Analysis

**Data Points (Approximate Values):**

* **At t=0:**

    * Blue Bar: ~85%

    * Orange Bar: ~78%

* **At t=0.5:**

    * Blue Bar: ~88% (appears to be the highest value in the chart)

    * Orange Bar: ~80%

* **At t=1:**

    * Blue Bar: ~83%

    * Orange Bar: ~79%

**Trend Verification:**

* **Blue Series Trend:** The success rate starts high at t=0 (~85%), increases slightly to a peak at t=0.5 (~88%), and then decreases at t=1 (~83%). The overall trend is a slight arch.

* **Orange Series Trend:** The success rate starts at ~78% at t=0, increases to ~80% at t=0.5, and remains nearly level at ~79% at t=1. The trend is relatively flat with a minor peak at t=0.5.

### Key Observations

1. **Consistent Performance Gap:** The blue series shows a higher success rate than the orange series at all three temperature settings. The gap is approximately 7 percentage points at t=0, 8 at t=0.5, and 4 at t=1.

2. **Optimal Temperature:** Both series achieve their highest observed success rate at the intermediate temperature setting of t=0.5.

3. **Stability:** The orange series varies less across temperatures (a range of about 2 percentage points, ~78% to ~80%) than the blue series (about 5 percentage points, ~83% to ~88%).

4. **High Baseline:** All success rates are relatively high, clustered between approximately 78% and 88%.
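
The observations above can be checked with a few lines of arithmetic. The following is a minimal sketch in Python, assuming the approximate values read off the chart; the series names `blue` and `orange` and the helper `summarize` are placeholders introduced here, since the chart has no legend.

```python
# Approximate success rates (%) read off the chart. The series names are
# placeholders: the chart does not say what the two bar colors represent.
rates = {
    "blue": {"t=0": 85, "t=0.5": 88, "t=1": 83},
    "orange": {"t=0": 78, "t=0.5": 80, "t=1": 79},
}


def summarize(rates):
    """Return per-temperature gaps, per-series peak settings, and per-series ranges."""
    temps = list(rates["blue"])
    # Blue minus orange at each temperature, in percentage points.
    gaps = {t: rates["blue"][t] - rates["orange"][t] for t in temps}
    # Temperature setting at which each series reaches its maximum.
    peaks = {name: max(vals, key=vals.get) for name, vals in rates.items()}
    # Max minus min across temperatures for each series.
    spreads = {
        name: max(vals.values()) - min(vals.values())
        for name, vals in rates.items()
    }
    return gaps, peaks, spreads


gaps, peaks, spreads = summarize(rates)
print("gap (blue - orange):", gaps)
print("peak temperature:", peaks)
print("range across temperatures:", spreads)
```

Because the inputs are visual estimates, the computed gaps and ranges carry the same uncertainty of a point or two.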

### Interpretation

The chart demonstrates the performance of the GPT-4o model on the Project CodeNet dataset under varying levels of randomness (temperature). The data suggests two key findings:

1. **Temperature Sensitivity:** Success rate varies only modestly with temperature. For the blue bars, the highest value is at t=0.5 (~88%), with lower values at t=0 (~85%) and t=1 (~83%), a spread of about 5 percentage points. This is consistent with a "sweet spot" between deterministic and more varied outputs for this task, but the values are approximate readings and the chart shows no error bars, so the difference may not be significant.

2. **Metric Disparity:** The consistent gap between the blue and orange bars indicates that the two measured outcomes or conditions differ in difficulty, with the blue one consistently easier for the model. If they are two evaluation metrics, they might be, for example, "Pass@1" vs. "Pass@10" or "exact match" vs. "functional correctness", but this is speculation. Both series peak at t=0.5, which suggests that a setting tuned for one would also suit the other, although the orange peak exceeds its neighbors by only 1 to 2 points.

**Note on Missing Information:** The critical absence of a legend means the specific definitions of the blue and orange data series are unknown. To fully interpret the results, one would need to know what these two bars represent (e.g., different evaluation metrics, different programming languages, different problem difficulties). The analysis above is based solely on the visual trends and numerical values presented.