TECHNICAL ASSET FINGERPRINT

99383fee17cc8abf6470b9aa

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: State Transition Diagrams

### Overview

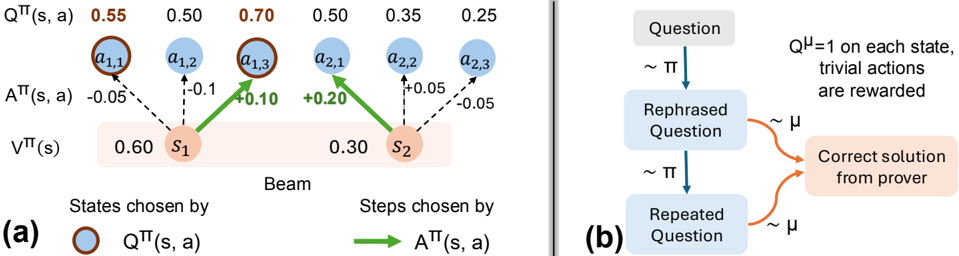

The image presents two diagrams illustrating state transitions. Diagram (a) depicts a beam search process with states, actions, and associated values. Diagram (b) shows a sequence of questions and solutions.

### Components/Axes

**Diagram (a): Beam Search**

* **Nodes:**

* `s1`: State 1, with a value `Vπ(s)` of 0.60. Located on the left side of the "Beam" label.

* `s2`: State 2, with a value `Vπ(s)` of 0.30. Located on the right side of the "Beam" label.

* `a1,1`, `a1,2`, `a1,3`: Actions associated with state `s1`.

* `a2,1`, `a2,2`, `a2,3`: Actions associated with state `s2`.

* **Edges:**

* Dashed black lines represent transitions with negative `Aπ(s, a)` values.

* Solid green lines represent transitions with positive `Aπ(s, a)` values.

* **Values:**

* `Qπ(s, a)`: Q-values for each action. The values are 0.55 for `a1,1`, 0.50 for `a1,2`, 0.70 for `a1,3`, 0.50 for `a2,1`, 0.35 for `a2,2`, and 0.25 for `a2,3`.

* `Aπ(s, a)`: Advantage values for each action. The values are -0.05 for `a1,1`, -0.1 for `a1,2`, +0.10 for `a1,3`, +0.20 for `a2,1`, +0.05 for `a2,2`, and -0.05 for `a2,3`.

* `Vπ(s)`: State values for `s1` (0.60) and `s2` (0.30).

* **Labels:**

* `Qπ(s, a)`: Q-value of state-action pair.

* `Aπ(s, a)`: Advantage of state-action pair.

* `Vπ(s)`: Value of state.

* "Beam": Indicates the beam search process.

* "States chosen by": Label for the states.

* "Steps chosen by": Label for the actions.

* **Legend:**

* Brown-outlined blue circle: `Qπ(s, a)`

* Green arrow: `Aπ(s, a)`

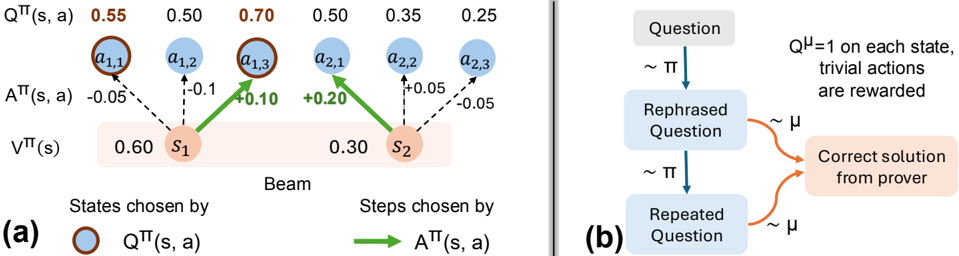

**Diagram (b): Question-Answering Process**

* **Nodes:**

* "Question": Initial question.

* "Rephrased Question": Rephrased version of the question.

* "Repeated Question": Repeated version of the question.

* "Correct solution from prover": The correct solution.

* **Edges:**

* Blue arrows labeled "~ π": Transitions between question states.

* Orange arrows labeled "~ μ": Transitions from question states to the correct solution.

* **Text:**

* "Qμ = 1 on each state, trivial actions are rewarded": Describes the reward structure.

### Detailed Analysis

**Diagram (a): Beam Search**

* The beam search starts with two states, `s1` and `s2`, having values 0.60 and 0.30, respectively.

* From `s1`, actions `a1,1`, `a1,2`, and `a1,3` are possible, with Q-values 0.55, 0.50, and 0.70, and advantages -0.05, -0.1, and +0.10, respectively.

* From `s2`, actions `a2,1`, `a2,2`, and `a2,3` are possible, with Q-values 0.50, 0.35, and 0.25, and advantages +0.20, +0.05, and -0.05, respectively.

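* Three actions have positive advantages: `a1,3` (+0.10) from `s1`, and `a2,1` (+0.20) and `a2,2` (+0.05) from `s2`. The green arrows mark `a1,3` and `a2,1`, the action with the largest advantage in each state.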
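The difference between ranking by Q-value and ranking by advantage can be sketched with the numbers from diagram (a). This is a minimal illustration, not the figure's source algorithm: the dictionary layout, the `top_k` helper, and the beam width of 2 are assumptions made for the example.

```python
# Values transcribed from diagram (a); names and layout are illustrative.
V = {"s1": 0.60, "s2": 0.30}
Q = {
    ("s1", "a1,1"): 0.55,
    ("s1", "a1,2"): 0.50,
    ("s1", "a1,3"): 0.70,
    ("s2", "a2,1"): 0.50,
    ("s2", "a2,2"): 0.35,
    ("s2", "a2,3"): 0.25,
}


def advantage(state, action):
    """A(s, a) = Q(s, a) - V(s), rounded to the precision shown in the figure."""
    return round(Q[(state, action)] - V[state], 2)


def top_k(score, k=2):
    """Return the k state-action pairs with the highest score (assumed beam width k)."""
    return sorted(Q, key=score, reverse=True)[:k]


by_q = top_k(lambda sa: Q[sa])
by_a = top_k(lambda sa: advantage(*sa))

print(by_q)  # both picks come from s1
print(by_a)  # one pick from each state
```

Under these assumptions, ranking by Q keeps `a1,3` and `a1,1`, both from the higher-valued state `s1`, while ranking by advantage keeps `a2,1` and `a1,3`, the two actions the green arrows point to.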

**Diagram (b): Question-Answering Process**

* The process starts with a "Question".

* The question is rephrased, leading to a "Rephrased Question".

* The rephrased question is repeated, leading to a "Repeated Question".

* From both the "Rephrased Question" and "Repeated Question", the process can transition to the "Correct solution from prover".

* The transitions between question states are governed by a policy π, while the transitions to the correct solution are governed by a policy μ.

* The text indicates that Qμ = 1 on each state, and trivial actions are rewarded.
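One way to read the annotation is sketched below. It assumes that the state value under μ is the expectation of Qμ over actions drawn from μ and that the advantage is defined as Q minus V; the diagram itself states only that Qμ = 1 on each state.

```latex
V^{\mu}(s) = \mathbb{E}_{a \sim \mu}\left[ Q^{\mu}(s, a) \right] = 1,
\qquad
A^{\mu}(s, a) = Q^{\mu}(s, a) - V^{\mu}(s) = 1 - 1 = 0 .
```

Under that reading, rephrasing or repeating the question scores the full Q-value of 1 while having zero advantage, which is consistent with the note that trivial actions are rewarded when Qμ alone is used as the score.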

### Key Observations

* In diagram (a), the Q-values and advantage values vary for different actions from each state.

* In diagram (b), the question-answering process involves rephrasing and repeating the question before arriving at the correct solution.

### Interpretation

The diagrams illustrate two different processes. Diagram (a) shows a beam search algorithm, where the algorithm explores different actions from different states, guided by Q-values and advantage values. Diagram (b) depicts a question-answering process, where the question is iteratively refined before a solution is obtained. The use of policies π and μ suggests a reinforcement learning framework for question answering. The statement "Qμ = 1 on each state, trivial actions are rewarded" implies that the reward structure favors actions that lead to the correct solution, even if they are trivial.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reinforcement Learning Process & Question Rephrasing

### Overview

The image presents two diagrams, labeled (a) and (b), illustrating components of a reinforcement learning process and a question rephrasing mechanism. Diagram (a) depicts a state transition diagram with Q-values and policy values, while diagram (b) shows a flow chart for rephrasing questions and obtaining solutions.

### Components/Axes

**Diagram (a):**

* **States:** s1, s2

* **Actions:** a1,1, a1,2, a1,3, a2,1, a2,2, a2,3

* **Q-values:** Q<sup>π</sup>(s, a) – values ranging from 0.25 to 0.70.

* **State Values:** V<sup>π</sup>(s) – 0.60 for s1 and 0.30 for s2.

* **Advantage Values:** A<sup>π</sup>(s, a) – values ranging from -0.10 to +0.20.

* **Legend:**

* Blue circles: Q<sup>π</sup>(s, a)

* Green arrows: A<sup>π</sup>(s, a)

* **Label:** "Beam" – positioned below states s1 and s2.

**Diagram (b):**

* **Input:** "Question"

* **Process:** "Rephrased Question", "Repeated Question"

* **Output:** "Correct solution from prover"

* **Distribution:** ~π (sampling from a policy) and ~μ (sampling from a distribution)

* **Reward:** Q<sup>μ</sup> = 1 on each state, trivial actions are rewarded.

* **Arrows:** Indicate flow of information.

### Detailed Analysis or Content Details

**Diagram (a):**

* **State s1:**

* Q<sup>π</sup>(s1, a1,1) = 0.55

* Q<sup>π</sup>(s1, a1,2) = 0.50

* Q<sup>π</sup>(s1, a1,3) = 0.70

* V<sup>π</sup>(s1) = 0.60

* A<sup>π</sup>(s1, a1,1) = -0.05

* A<sup>π</sup>(s1, a1,2) = -0.10

* A<sup>π</sup>(s1, a1,3) = +0.10

* **State s2:**

* Q<sup>π</sup>(s2, a2,1) = 0.50

* Q<sup>π</sup>(s2, a2,2) = 0.35

* Q<sup>π</sup>(s2, a2,3) = 0.25

* V<sup>π</sup>(s2) = 0.30

* A<sup>π</sup>(s2, a2,1) = +0.20

* A<sup>π</sup>(s2, a2,2) = +0.05

* A<sup>π</sup>(s2, a2,3) = -0.05

**Diagram (b):**

* A "Question" is fed into a process that generates a "Rephrased Question" sampled from a policy π.

* The "Rephrased Question" leads to a "Correct solution from prover".

* The process is repeated with a "Repeated Question" also sampled from policy π.

* From the "Repeated Question", the "Correct solution from prover" is likewise reached by sampling from μ.

* The annotation states that Q<sup>μ</sup> = 1 on each state, so trivial actions are rewarded.

### Key Observations

* In diagram (a), the Q-values for state s1 are generally higher than those for state s2, suggesting s1 is a more desirable state.

* The advantage values (A<sup>π</sup>(s, a)) measure how much better or worse an action is than the value of its state, A<sup>π</sup>(s, a) = Q<sup>π</sup>(s, a) - V<sup>π</sup>(s). Positive values mark actions that improve on the state's value, while negative values mark actions that fall below it.

* Diagram (b) illustrates an iterative process of question rephrasing and solution seeking, potentially aiming to improve the quality or accuracy of the solutions obtained.

### Interpretation

Diagram (a) represents a simplified reinforcement learning environment. The Q-values represent the expected cumulative reward for taking a specific action in a given state, following a particular policy (π). The state values (V<sup>π</sup>(s)) represent the expected cumulative reward for being in a given state, following the same policy. The advantage values (A<sup>π</sup>(s, a)) give the difference between the two, showing how much each action improves on or falls short of its state's value. The "Beam" label suggests a beam search algorithm might be used to explore the state space.

Diagram (b) depicts a mechanism for refining questions to obtain better solutions. The rephrasing process, guided by a policy π, aims to generate questions that are more likely to elicit correct answers. The iterative nature of the process, with repeated questioning and sampling from distribution μ, suggests a search for optimal question formulations. Because Q<sup>μ</sup> = 1 on each state, trivial actions such as rephrasing or repeating the question are rewarded, which the annotation flags explicitly.

The two diagrams together suggest a system where reinforcement learning is used to guide the rephrasing of questions, ultimately leading to improved solution quality. The system appears to be designed to explore different question formulations and learn which ones are most effective in eliciting correct answers.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: Beam Search Visualization and Question Rephrasing Flowchart

### Overview

The image contains two distinct technical diagrams, labeled (a) and (b), presented side-by-side. Diagram (a) is a visualization of a beam search process in a reinforcement learning or decision-making context, showing states, actions, and associated values. Diagram (b) is a flowchart illustrating a process involving question rephrasing and solution generation, likely for a language model or reasoning system.

### Components/Axes

**Diagram (a) - Left Side:**

* **Title/Label:** "(a)" at the bottom-left corner.

* **Main Structure:** A "Beam" search diagram with two primary states, `s₁` and `s₂`, represented as orange circles within a light orange rectangular background.

* **States:** `s₁` (left) and `s₂` (right).

* **Actions:** Six blue circular nodes representing actions, arranged in two rows above the states.

* Top row (from left to right): `a₁,₁`, `a₁,₂`, `a₁,₃`, `a₂,₁`, `a₂,₂`, `a₂,₃`.

* **Value Labels (Top Row):** Above each action node, a numerical value labeled `Q^π(s, a)`:

* Above `a₁,₁`: `0.55` (in orange text)

* Above `a₁,₂`: `0.50`

* Above `a₁,₃`: `0.70` (in orange text)

* Above `a₂,₁`: `0.50`

* Above `a₂,₂`: `0.35`

* Above `a₂,₃`: `0.25`

* **Advantage Labels (Middle):** Between the action nodes and states, values labeled `A^π(s, a)`:

* Between `a₁,₁` and `s₁`: `-0.05`

* Between `a₁,₂` and `s₁`: `-0.1`

* Between `a₁,₃` and `s₁`: `+0.10` (in green text)

* Between `a₂,₁` and `s₂`: `+0.20` (in green text)

* Between `a₂,₂` and `s₂`: `+0.05`

* Between `a₂,₃` and `s₂`: `-0.05`

* **State Values (Bottom):** Below the states, values labeled `V^π(s)`:

* Below `s₁`: `0.60`

* Below `s₂`: `0.30`

* **Legend (Bottom of Diagram a):**

* Left side: An orange circle icon followed by the text "States chosen by `Q^π(s, a)`".

* Right side: A green arrow icon followed by the text "Steps chosen by `A^π(s, a)`".

* **Visual Flow:** Solid green arrows point from `s₁` to `a₁,₃` and from `s₂` to `a₂,₁`. Dashed black arrows connect states to all their respective action nodes.

**Diagram (b) - Right Side:**

* **Title/Label:** "(b)" at the bottom-left corner.

* **Main Structure:** A vertical flowchart with three main rectangular boxes connected by arrows.

* **Flowchart Boxes (from top to bottom):**

1. Top box: "Question"

2. Middle box: "Rephrased Question"

3. Bottom box: "Repeated Question"

* **Flow Arrows & Annotations:**

* A blue arrow points from "Question" to "Rephrased Question", labeled with "~ π".

* A blue arrow points from "Rephrased Question" to "Repeated Question", labeled with "~ π".

* Two orange curved arrows point from the right side of both the "Rephrased Question" and "Repeated Question" boxes to a final box on the right. These arrows are labeled with "~ μ".

* **Final Output Box:** A light orange box on the right containing the text: "Correct solution from prover".

* **Annotation Text (Top-Right):** "Q^μ=1 on each state, trivial actions are rewarded".

### Detailed Analysis

**Diagram (a) Analysis:**

* **Spatial Grounding:** The legend is positioned at the bottom of the diagram. The orange "States chosen" icon corresponds to the orange circles for `s₁` and `s₂`. The green "Steps chosen" arrow corresponds to the two solid green arrows in the main diagram.

* **Trend Verification & Data Points:**

* For state `s₁`: The chosen action (green arrow) is `a₁,₃`, which has the highest `Q^π(s, a)` value (0.70) and a positive advantage `A^π(s, a)` (+0.10). The other actions from `s₁` (`a₁,₁` and `a₁,₂`) have lower Q-values and negative advantages.

* For state `s₂`: The chosen action (green arrow) is `a₂,₁`, which has the highest `Q^π(s, a)` value (0.50) among actions from `s₂` and the highest positive advantage `A^π(s, a)` (+0.20).

* The state value `V^π(s)` for `s₁` (0.60) is higher than for `s₂` (0.30).

* **Component Isolation:** The diagram is segmented into a top layer (Q-values), a middle layer (actions and advantages), and a bottom layer (states and state values). The legend is a separate explanatory component.

**Diagram (b) Analysis:**

* **Flow:** The primary flow is vertical: `Question` → `Rephrased Question` → `Repeated Question`. A secondary, convergent flow comes from both the rephrased and repeated questions to generate the final "Correct solution".

* **Process Labels:** The "~ π" and "~ μ" labels likely denote different policies, models, or processes applied at each step. The annotation clarifies that under policy `μ`, the Q-value is 1 for each state, and trivial actions receive rewards.

### Key Observations

1. **Diagram (a):** The beam search selects actions based on a combination of high Q-value (`Q^π(s, a)`) and positive advantage (`A^π(s, a)`). The chosen actions (`a₁,₃` and `a₂,₁`) are not necessarily the ones with the absolute highest Q-value in the entire set (e.g., `a₁,₁` has Q=0.55, which is higher than `a₂,₁`'s Q=0.50), but they are the best *for their respective parent states*.

2. **Diagram (a):** The advantage function `A^π(s, a)` appears to be calculated as `Q^π(s, a) - V^π(s)`. For example, for `s₁` and `a₁,₃`: 0.70 - 0.60 = +0.10, which matches the labeled advantage.

3. **Diagram (b):** The process suggests that rephrasing a question (using process `π`) and then potentially repeating it can lead to a correct solution when processed by a different system or policy (`μ`). The note implies that under `μ`, the task is simplified (Q^μ=1, trivial actions rewarded).
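The relation noted in observation 2 can be checked against all six labeled pairs, not only `a₁,₃`. The snippet below is a small sanity check on the transcribed numbers; the table layout and the `mismatches` helper are hypothetical names introduced for illustration.

```python
import math

# State values and (Q, labeled advantage) pairs as transcribed from diagram (a).
V = {"s1": 0.60, "s2": 0.30}
LABELS = {
    ("s1", "a1,1"): (0.55, -0.05),
    ("s1", "a1,2"): (0.50, -0.10),
    ("s1", "a1,3"): (0.70, +0.10),
    ("s2", "a2,1"): (0.50, +0.20),
    ("s2", "a2,2"): (0.35, +0.05),
    ("s2", "a2,3"): (0.25, -0.05),
}


def mismatches(tol=1e-9):
    """List the pairs whose labeled advantage differs from Q(s, a) - V(s)."""
    return [
        key
        for key, (q, a_label) in LABELS.items()
        if not math.isclose(q - V[key[0]], a_label, abs_tol=tol)
    ]


print(mismatches())  # an empty list means every label equals Q - V
```

With the values above, the list comes back empty, so every labeled advantage agrees with `Q^π(s, a) - V^π(s)`.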

### Interpretation

These diagrams together likely illustrate components of a system for improving reasoning or problem-solving, possibly in the context of large language models or reinforcement learning from human feedback.

* **Diagram (a)** demonstrates a principled method for selecting the most promising partial solutions (actions) during a search process. It shows that the selection isn't greedy based on a single metric but considers both the expected return (`Q`) and how much better an action is compared to the average for that state (`A`). This is a core concept in advanced reinforcement learning algorithms.

* **Diagram (b)** proposes a meta-strategy: transforming the input (the question) through rephrasing and repetition can make the underlying problem more tractable for a solver (the "prover"). The different policies (`π` for transformation, `μ` for solving) suggest a separation of concerns—one system prepares the problem, and another, perhaps more specialized or reward-sensitive system, solves it.

* **Connection:** The "beam search" in (a) could be the mechanism used by the "prover" in (b) to explore possible solution paths after receiving a rephrased or repeated question. The reward structure mentioned in (b) (`Q^μ=1, trivial actions are rewarded`) would directly influence the `Q` and `A` values calculated in a process like (a).

**Language Declaration:** All text in the image is in English, with standard mathematical notation (Greek letters π, μ).

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Reinforcement Learning Process with Question Refinement

### Overview

The image contains two interconnected diagrams:

**(a)** A reinforcement learning (RL) process modeling state-action dynamics with numerical values for Q-values, advantage functions, and state values.

**(b)** A flowchart illustrating a question refinement process leading to a correct solution, with probabilistic transitions and rewards.

---

### Components/Axes

#### Diagram (a): RL Process

- **States**:

- `S1` (value: 0.60)

- `S2` (value: 0.30)

- **Actions**:

- `a1,1`, `a1,2`, `a1,3` (associated with `S1`)

- `a2,1`, `a2,2`, `a2,3` (associated with `S2`)

- **Key Labels**:

- `Qπ(s, a)`: Action-value function (blue circles)

- `Aπ(s, a)`: Advantage function (green arrows)

- `Vπ(s)`: State-value function (orange boxes)

- `Beam`: Mechanism selecting states/actions

- **Legend**:

- Blue circles: `Qπ(s, a)`

- Brown circles: `Aπ(s, a)`

- Green arrows: `Aπ(s, a)` transitions

#### Diagram (b): Question Refinement

- **Nodes**:

- `Question` → `Rephrased Question` → `Repeated Question` → `Correct solution from prover`

- **Transitions**:

- `~π`: Probabilistic transition (e.g., rephrasing)

- `~μ`: Sampling from μ, the transition to the prover's correct solution (trivial actions rewarded)

- **Legend**:

- `Qμ=1`: Reward for trivial actions

---

### Detailed Analysis

#### Diagram (a): RL Process

- **Q-values (`Qπ(s, a)`)**:

- `S1` actions: 0.55, 0.50, 0.70

- `S2` actions: 0.50, 0.35, 0.25

- **Trend**: Q-values decrease from `a1,3` (0.70) to `a2,3` (0.25).

- **Advantage values (`Aπ(s, a)`)**:

- `S1` transitions: -0.05, -0.10, +0.10

- `S2` transitions: +0.20, +0.05, -0.05

- **Trend**: Mixed positive/negative advantages; `S2` has a larger positive shift (+0.20).

- **State values (`Vπ(s)`)**:

- `S1`: 0.60

- `S2`: 0.30

- **Trend**: State values decrease from `S1` to `S2`.

#### Diagram (b): Question Refinement

- **Flow**:

- `Question` → `Rephrased Question` (via `~π`) → `Repeated Question` (via `~π`) → `Correct solution` (via `~μ`).

- **Rewards**:

- `Qμ=1` ensures trivial actions (e.g., rephrasing) are rewarded.

---

### Key Observations

1. **RL Process (a)**:

- `S1` has higher Q-values and state value than `S2`, suggesting it is a more favorable state.

- `a1,3` (Q=0.70) is the optimal action in `S1`, while `a2,3` (Q=0.25) is suboptimal in `S2`.

- Advantage values measure how much an action improves on the value of its state (e.g., +0.20 for `a2,1`).

2. **Question Refinement (b)**:

- Iterative rephrasing (`~π`) and repetition (`~π`) lead to a correct solution, with rewards (`~μ`) guiding the process.

---

### Interpretation

- **RL Process (a)**:

The beam selects states (`S1`, `S2`) based on `Qπ` and `Aπ` values. Higher Q-values in `S1` suggest it is prioritized, while `Aπ` values guide action selection within states. The decline in `Vπ` from `S1` to `S2` implies diminishing returns in later states.

- **Question Refinement (b)**:

The process mirrors RL exploration: rephrasing (`~π`) and repetition (`~π`) refine the question, while rewards (`~μ`) reinforce progress toward the correct solution. The `Qμ=1` condition ensures trivial steps are incentivized, aligning with the RL framework’s reward structure.

- **Integration**:

Diagram (a) models the agent’s decision-making, while (b) abstracts the cognitive process of refining questions. Both emphasize iterative improvement guided by value functions and rewards.

---

### Uncertainties

- Numerical values in (a) are approximate (e.g., 0.55 ±0.05).

- The exact relationship between `~π` and `~μ` in (b) is not quantified.
