## Scatter Plots: Category Distribution Across Transformer Layers and Heads

### Overview

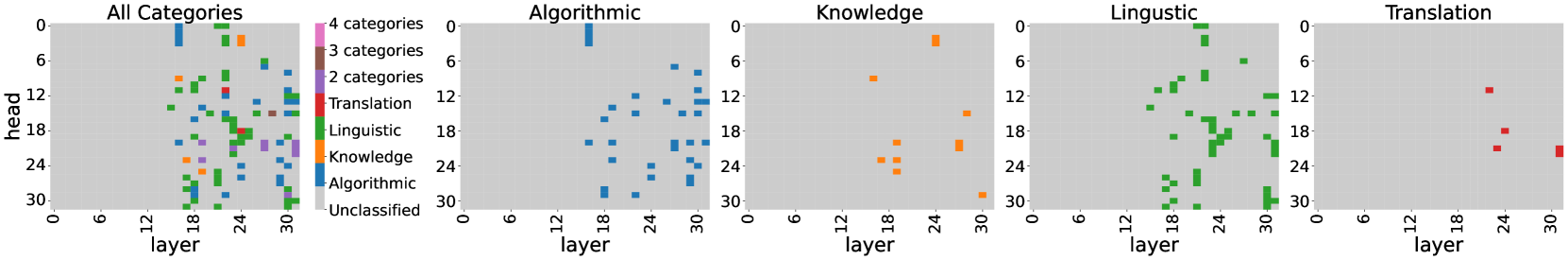

The image presents five scatter plots showing how linguistic and cognitive categories are distributed across transformer layers (x-axis) and attention heads (y-axis). Markers are color-coded. The legend distinguishes markers by the number of categories they belong to (4, 3, or 2 categories), by single category (Translation, Linguistic, Knowledge, Algorithmic), and as Unclassified.

### Components/Axes

- **X-axis**: Layer (0-30, integer increments)

- **Y-axis**: Head (0-30, integer increments)

- **Legend**:

  - Color gradient from pink (4 categories) to gray (Unclassified)

  - Specific categories:

    - Red = Translation

    - Green = Linguistic

    - Orange = Knowledge

    - Blue = Algorithmic

- **Subplot Titles**:

  - All Categories (composite)

  - Algorithmic

  - Knowledge

  - Linguistic

  - Translation
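
The composite panel's legend can be read as a per-head count of category memberships. The following is a minimal sketch of that mapping, assuming each category is available as a set of `(layer, head)` pairs. The names `composite_label` and `CATEGORY_COLORS` and the example coordinates are hypothetical and are not taken from the figure's underlying data.

```python
# Hypothetical sketch: derive the composite-panel legend entry for one
# (layer, head) position from per-category membership sets.

# Colors for the single-category case, as listed in the legend.
CATEGORY_COLORS = {
    "Translation": "red",
    "Linguistic": "green",
    "Knowledge": "orange",
    "Algorithmic": "blue",
}


def composite_label(position, memberships):
    """Return the legend entry for a (layer, head) position.

    memberships maps a category name to a set of (layer, head) pairs.
    """
    hits = sorted(name for name, heads in memberships.items() if position in heads)
    if not hits:
        return "Unclassified"          # gray marker
    if len(hits) == 1:
        return hits[0]                 # single-category color
    return f"{len(hits)} categories"   # multi-category shade


# Made-up coordinates for illustration only.
example = {
    "Translation": {(26, 5)},
    "Linguistic": {(20, 19), (26, 5)},
    "Knowledge": {(20, 19)},
    "Algorithmic": {(22, 8)},
}

print(composite_label((20, 19), example))  # 2 categories
print(composite_label((22, 8), example))   # Algorithmic
print(composite_label((3, 3), example))    # Unclassified
```

In this sketch any position outside every set is labeled Unclassified; in the figure, gray markers presumably cover only positions that were plotted but not assigned to a category.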

### Detailed Analysis

1. **All Categories Plot**:

   - Dense distribution of markers across all layers and heads

   - Highest concentration in layers 18-30 and heads 12-30

   - Mix of all colors with gray unclassified points scattered throughout

2. **Algorithmic Subplot**:

   - Blue squares dominate layers 18-30

   - Vertical clustering in heads 6-12 and 18-24

   - Sparse points in early layers (0-12)

3. **Knowledge Subplot**:

   - Orange squares concentrated in layers 18-24

   - Vertical banding in heads 12-24

   - Fewer points in early/mid layers (0-18)

4. **Linguistic Subplot**:

   - Green squares concentrated in layers 18-30

   - Head distribution peaks at 18-24

   - Gradual increase in density toward later layers

5. **Translation Subplot**:

   - Red squares limited to layers 24-30

   - Head concentration at 4-12

   - Minimal presence in early layers
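
The layer ranges quoted above amount to per-band counts of markers. As a hedged illustration, the sketch below bins a category's `(layer, head)` pairs into 6-layer bands and computes the share of markers at or beyond layer 18. The helper names, the band width, and the sample coordinates are assumptions chosen for illustration, not values read from the plots.

```python
from collections import Counter


def layer_band_counts(heads, band_width=6):
    """Count markers per layer band, keyed by the band's first layer."""
    counts = Counter((layer // band_width) * band_width for layer, _ in heads)
    return dict(sorted(counts.items()))


def late_layer_share(heads, threshold=18):
    """Fraction of markers whose layer index is at or above threshold."""
    if not heads:
        return 0.0
    late = sum(1 for layer, _ in heads if layer >= threshold)
    return late / len(heads)


# Made-up (layer, head) pairs standing in for one category's markers.
translation = {(24, 4), (26, 8), (28, 11), (30, 6), (10, 9)}

print(layer_band_counts(translation))  # {6: 1, 24: 3, 30: 1}
print(late_layer_share(translation))   # 0.8
```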

### Key Observations

- **Layer Dependency**: All categories are more prevalent in later layers (18 and above), suggesting that the categorized functions are concentrated in deeper transformer blocks

- **Head Specialization**:

  - Algorithmic: Clusters in two head bands (6-12 and 18-24)

  - Translation: Concentrated in lower heads (4-12)

  - Knowledge: Spread across middle heads (12-24)

  - Linguistic: Peaks at heads 18-24

- **Category Co-occurrence**:

  - Green (Linguistic) and orange (Knowledge) markers frequently overlap in layers 18-24

  - Blue (Algorithmic) appears independently in deeper layers

- **Unclassified Points**:

  - 12 gray markers in the All Categories plot

  - Mostly in layers 12-24, heads 6-18
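
Co-occurrence between two categories can be quantified as set overlap. The sketch below is one possible way to do so, assuming each category is a set of `(layer, head)` pairs. The functions `overlap_in_layers` and `jaccard` and all coordinates are hypothetical illustrations rather than the method used to produce the figure.

```python
def overlap_in_layers(a, b, first_layer, last_layer):
    """Positions present in both sets with layer in [first_layer, last_layer]."""
    return {(layer, head) for (layer, head) in a & b
            if first_layer <= layer <= last_layer}


def jaccard(a, b):
    """Jaccard similarity: size of the intersection over size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Made-up (layer, head) pairs for illustration only.
linguistic = {(19, 20), (21, 22), (27, 18)}
knowledge = {(19, 20), (21, 22), (23, 14)}
algorithmic = {(28, 7)}

print(sorted(overlap_in_layers(linguistic, knowledge, 18, 24)))  # [(19, 20), (21, 22)]
print(jaccard(linguistic, knowledge))    # 0.5
print(jaccard(linguistic, algorithmic))  # 0.0
```

A Jaccard value near zero for a pair of categories corresponds to the "appears independently" pattern noted for Algorithmic, while higher values correspond to the Linguistic/Knowledge overlap.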

### Interpretation

The plots suggest systematic patterns in how cognitive and linguistic functions are distributed across the transformer architecture:

1. **Hierarchical Processing**:

   - Early layers (0-18) contain few categorized markers of any type

   - Middle layers (18-24) hold the main concentration of knowledge markers (orange), overlapping with linguistic markers (green)

   - Late layers (24-30) contain the translation markers (red), while linguistic markers grow denser

2. **Head Specialization**:

   - Lower heads (4-12) carry most translation markers

   - Middle heads (12-24) carry most knowledge markers

   - Algorithmic markers fall into two head bands (6-12 and 18-24) rather than a single range

3. **Unclassified Activity**:

   - The presence of gray points suggests residual processing not captured by current categorization

   - Concentration in middle layers/heads may indicate transitional processing stages

4. **Architectural Implications**:

   - The clear layer progression suggests effective hierarchical feature learning

   - Head specialization patterns align with the transformer's parallel processing capabilities

   - Knowledge/linguistic overlap in middle layers may reflect semantic integration mechanisms

This visualization is consistent with a modular organization of transformer models, in which functional specialization varies across both layers and attention heads.