# Technical Document Extraction: RAG / Iterative RAG Prompt Structure

## 1. Overview

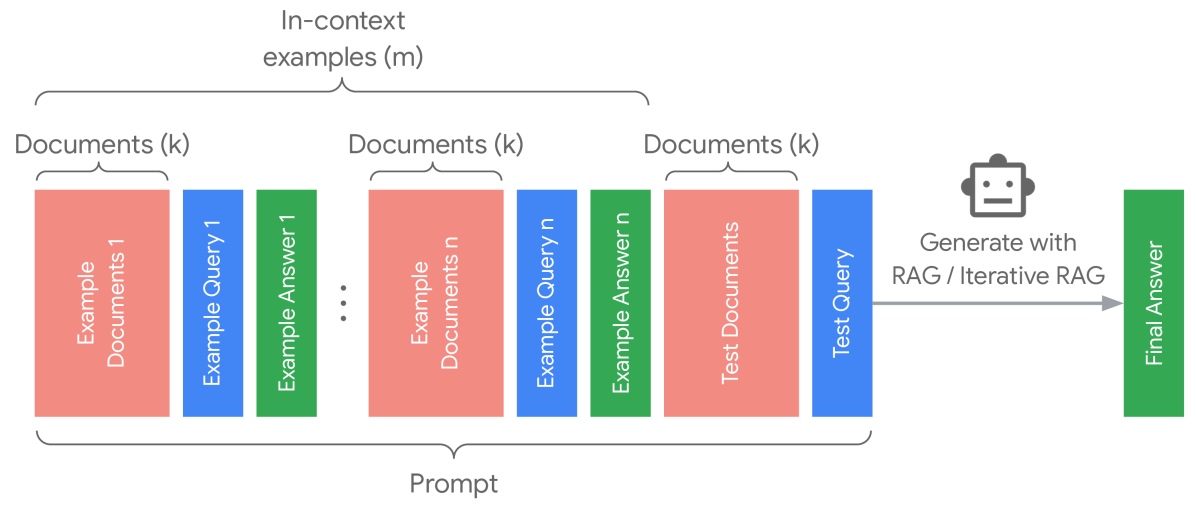

This diagram shows how a prompt for Retrieval-Augmented Generation (RAG) or Iterative RAG is assembled. A sequence of in-context examples, each pairing retrieved documents with a query and its answer, is followed by the test documents and the test query. The model then generates a final answer from that prompt.

## 2. Component Isolation

### Region A: Header / Global Brackets

* **Top Bracket:** Spans all in-context example sets shown, from Example Set 1 through Example Set n. Label: `In-context examples (m)`.

* **Bottom Bracket:** Spans the entire sequence from the first example to the test query. Label: `Prompt`.

### Region B: Main Sequence (Input Data)

The input is composed of repeating blocks and a final test block, color-coded by function:

* **Red Blocks:** Represent source material. Label: `Documents (k)`.

* **Blue Blocks:** Represent queries.

* **Green Blocks:** Represent answers.

#### Sequence Breakdown:

1. **Example Set 1:**
   * **Red Block:** `Example Documents 1` (sub-labeled `Documents (k)`)
   * **Blue Block:** `Example Query 1`
   * **Green Block:** `Example Answer 1`

2. **Ellipsis (`...`):** Indicates that the same pattern repeats for the intermediate examples. The last example is indexed $n$, so $n$ equals the $m$ in-context examples.

3. **Example Set n:**
   * **Red Block:** `Example Documents n` (sub-labeled `Documents (k)`)
   * **Blue Block:** `Example Query n`
   * **Green Block:** `Example Answer n`

4. **Test Set (no answer block):**
   * **Red Block:** `Test Documents` (sub-labeled `Documents (k)`)
   * **Blue Block:** `Test Query`
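
The left-to-right order of the blocks can be sketched in Python. This is a minimal illustration only: the helper name `block_sequence` and the `(label, color)` tuple per block are hypothetical choices, not part of the diagram; the labels and colors are taken from the breakdown above.

```python
from typing import List, Tuple

# Color coding taken from the diagram's legend.
ROLE_COLORS = {"documents": "red", "query": "blue", "answer": "green"}


def block_sequence(n: int) -> List[Tuple[str, str]]:
    """Hypothetical helper: return (label, color) pairs in diagram order.

    n is the number of in-context example sets (n >= 0).
    """
    blocks: List[Tuple[str, str]] = []
    for i in range(1, n + 1):
        # Each example set: documents, then query, then answer.
        blocks.append((f"Example Documents {i}", ROLE_COLORS["documents"]))
        blocks.append((f"Example Query {i}", ROLE_COLORS["query"]))
        blocks.append((f"Example Answer {i}", ROLE_COLORS["answer"]))
    # The test set has documents and a query, but no answer block.
    blocks.append(("Test Documents", ROLE_COLORS["documents"]))
    blocks.append(("Test Query", ROLE_COLORS["query"]))
    return blocks


for label, color in block_sequence(2):
    print(f"{color:>5}  {label}")
```

For $n$ example sets the sequence always has $3n + 2$ blocks: three per example plus the two test blocks.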

### Region C: Processing and Output

* **Icon:** A stylized robot head representing an AI model/LLM.

* **Transition Arrow:** A grey arrow pointing from the `Prompt` sequence to the `Final Answer`.

* **Process Label:** `Generate with RAG / Iterative RAG`.

* **Output Block (Green):** `Final Answer`.

## 3. Data Structure and Logic

The diagram implies the following variables and relationships for prompt construction:

| Component | Variable | Description |
| :--- | :--- | :--- |
| **In-context examples** | $m$ | The total number of few-shot examples provided in the prompt. |
| **Documents** | $k$ | The number of retrieved documents provided for each query, whether an example query or the test query. |
| **Prompt** | N/A | The concatenation of the $m$ examples (Documents + Query + Answer), followed by the Test Documents and the Test Query. |
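
The same relationship can be written as a formula. The notation is ours, not the diagram's: $D_i$ is the set of $k$ documents retrieved for example query $q_i$ with answer $a_i$, and $\Vert$ denotes concatenation.

$$
P = \left(D_1 \Vert q_1 \Vert a_1\right) \Vert \cdots \Vert \left(D_m \Vert q_m \Vert a_m\right) \Vert \left(D_{\text{test}} \Vert q_{\text{test}}\right), \qquad |D_1| = \cdots = |D_m| = |D_{\text{test}}| = k
$$

The prompt therefore carries $mk + k = (m+1)k$ documents in total; for example, $m = 2$ and $k = 5$ give $15$ documents.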

## 4. Flow Description

1. **Contextualization:** The system retrieves $k$ documents for each of the $m$ example queries. Each example consists of its documents, its query, and the correct answer.

2. **Test Input:** The system retrieves $k$ documents for the current `Test Query`.

3. **Prompt Assembly:** The $m$ examples and the current test data are concatenated, in that order, into a single `Prompt`.

4. **Generation:** The AI model processes the `Prompt` using a `RAG` or `Iterative RAG` methodology.

5. **Termination:** The process results in a single `Final Answer`.
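
The five steps can be sketched end to end. This is a minimal single-pass sketch under stated assumptions: the retriever and generator are hypothetical functions passed in as arguments, and the plain-text layout (`Document j:`, `Question:`, `Answer:`) is an assumed format, not one given by the diagram. A real system would call an actual retriever and LLM in their place.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical function types (assumptions, not a real library API):
# a retriever maps (query, k) to k document strings,
# and a generator maps a prompt string to an answer string.
RetrieveFn = Callable[[str, int], List[str]]
GenerateFn = Callable[[str], str]


def format_block(docs: Sequence[str], query: str, answer: str = "") -> str:
    """Render one Documents -> Query -> Answer block in an assumed text layout."""
    doc_lines = "\n".join(f"Document {j}: {d}" for j, d in enumerate(docs, 1))
    # With an empty answer the block ends in "Answer:" for the model to complete.
    return f"{doc_lines}\nQuestion: {query}\nAnswer: {answer}".rstrip()


def build_prompt(examples: Sequence[Tuple[str, str]], test_query: str,
                 retrieve: RetrieveFn, k: int) -> str:
    """Steps 1-3: retrieve k docs per example and for the test query, then concatenate."""
    blocks = [format_block(retrieve(q, k), q, a) for q, a in examples]  # m example blocks
    blocks.append(format_block(retrieve(test_query, k), test_query))    # test block, no answer
    return "\n\n".join(blocks)


def answer(examples: Sequence[Tuple[str, str]], test_query: str,
           retrieve: RetrieveFn, generate: GenerateFn, k: int = 3) -> str:
    """Steps 4-5: one generation call over the assembled prompt yields the final answer."""
    return generate(build_prompt(examples, test_query, retrieve, k))


def toy_retrieve(query: str, k: int) -> List[str]:
    # Stand-in retriever: returns k placeholder passages for the query.
    return [f"passage {i} for '{query}'" for i in range(1, k + 1)]


def toy_generate(prompt: str) -> str:
    # Stand-in model: reports how many documents it was shown.
    return f"(model saw {prompt.count('Document ')} documents)"


examples = [("Q1?", "A1"), ("Q2?", "A2")]
print(answer(examples, "Test question?", toy_retrieve, toy_generate, k=3))
```

With $m = 2$ examples and $k = 3$, the toy generator reports 9 documents, i.e. $(m + 1)k$. The sketch models only a single generation pass; the Iterative RAG variant named in the diagram would add further retrieval and generation rounds, whose details the diagram does not specify.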

## 5. Text Transcription (Precise)

* **Top Labels:** "In-context examples (m)", "Documents (k)" (repeated 3 times).

* **Internal Block Text (Vertical):**
  * "Example Documents 1"
  * "Example Query 1"
  * "Example Answer 1"
  * "Example Documents n"
  * "Example Query n"
  * "Example Answer n"
  * "Test Documents"
  * "Test Query"
  * "Final Answer"

* **Action Text:** "Generate with RAG / Iterative RAG"

* **Bottom Label:** "Prompt"