TECHNICAL ASSET FINGERPRINT

9ac6425bba1a5395b6d342a6

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Comparison of Machine Learning Paradigms

### Overview

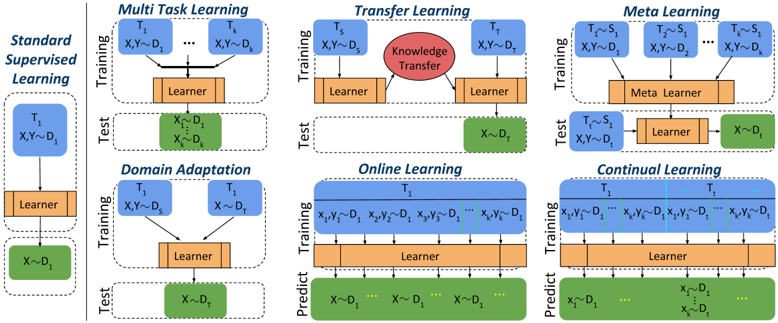

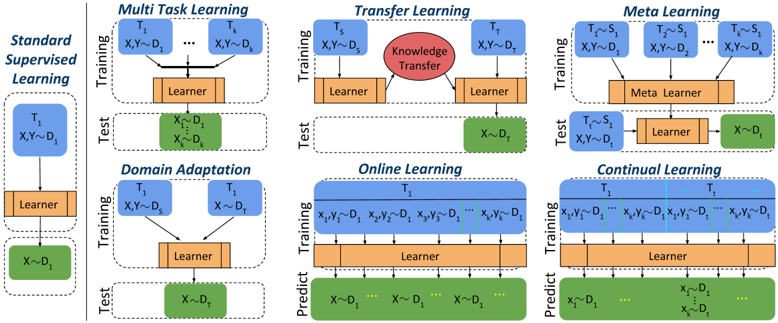

The image presents a comparative diagram illustrating different machine learning paradigms: Standard Supervised Learning, Multi-Task Learning, Domain Adaptation, Transfer Learning, Online Learning, Meta Learning, and Continual Learning. Each paradigm is depicted with a simplified flow diagram showing the training and testing/prediction phases, highlighting the data used in each phase and the role of the learner.

### Components/Axes

Each paradigm is represented by a diagram consisting of the following components:

* **Training Phase:** Represented by a blue rounded rectangle labeled "Training". Inside, the data used for training is specified in the format "T<sub>i</sub>, X, Y ~ D<sub>i</sub>", where T represents the task, X represents the input data, Y represents the labels, and D represents the data distribution.

* **Learner:** Represented by an orange rectangle labeled "Learner" or "Meta Learner". This represents the model being trained.

* **Testing/Prediction Phase:** Represented by a green rounded rectangle labeled "Test" or "Predict". Inside, the data used for testing/prediction is specified in the format "X ~ D<sub>i</sub>", where X represents the input data and D represents the data distribution.

* **Arrows:** Arrows indicate the flow of data and the learning process.

* **Knowledge Transfer (Transfer Learning):** Represented by a red circle with the text "Knowledge Transfer" inside.

### Detailed Analysis

**1. Standard Supervised Learning:**

* **Training:** T<sub>1</sub>, X, Y ~ D<sub>1</sub>

* **Process:** Data flows from the training set to the learner.

* **Testing:** X ~ D<sub>1</sub>

* **Process:** Trained learner is used to predict on data from the same distribution.
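
The train-then-test flow above can be sketched in a few lines. This is only an illustrative toy, not something taken from the diagram: the distribution `sample_d1` (a noisy line), the least-squares `fit_line` learner, and the `mse` score are all assumed names and choices made for the example.

```python
import random

def sample_d1(rng, n):
    """Draw n pairs (x, y) from a toy distribution D1: y = 2x + 1 plus small noise (assumed)."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.05)) for x in xs]

def fit_line(pairs):
    """Closed-form least squares for y = w*x + b."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    w = cov_xy / var_x
    return w, mean_y - w * mean_x

def mse(params, pairs):
    """Mean squared error of the line (w, b) on the given pairs."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in pairs) / len(pairs)

rng = random.Random(0)
train = sample_d1(rng, 200)  # Training: T1, X, Y ~ D1
test = sample_d1(rng, 200)   # Testing: X ~ D1 (labels used only for scoring)
learner = fit_line(train)
test_error = mse(learner, test)
```

Because training and test data come from the same `sample_d1`, the test error should stay close to the noise level; the other paradigms relax exactly this assumption.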

**2. Multi-Task Learning:**

* **Training:** T<sub>1</sub>, X, Y ~ D<sub>1</sub> ... T<sub>k</sub>, X, Y ~ D<sub>k</sub>

* **Process:** Multiple tasks are learned simultaneously by a single learner.

* **Testing:** X<sub>1</sub> ~ D<sub>1</sub> ... X<sub>k</sub> ~ D<sub>k</sub>

* **Process:** The trained learner is tested on data from each of the learned distributions.
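
One way to picture a single learner shared across tasks is a model with some shared parameters and some per-task parameters. The sketch below is an assumption-laden toy (the diagram does not specify any architecture): `train_multitask` fits one shared slope `w` and one bias per task, minimizing the sum of per-task mean squared errors.

```python
def train_multitask(tasks, steps=500, lr=0.1):
    """Jointly fit y = w*x + b[k]: one shared slope w, one bias b[k] per task."""
    w = 0.0
    b = [0.0] * len(tasks)
    for _ in range(steps):
        gw = 0.0
        gb = [0.0] * len(tasks)
        for k, pairs in enumerate(tasks):
            for x, y in pairs:
                err = w * x + b[k] - y
                gw += 2.0 * err * x / len(pairs)   # every task contributes to the shared slope
                gb[k] += 2.0 * err / len(pairs)    # each bias sees only its own task
        w -= lr * gw
        b = [bk - lr * g for bk, g in zip(b, gb)]
    return w, b

xs = [-1.0, -0.5, 0.0, 0.5, 1.0]
tasks = [
    [(x, 2.0 * x + 1.0) for x in xs],  # T1, X, Y ~ D1 (toy data)
    [(x, 2.0 * x - 3.0) for x in xs],  # T2, X, Y ~ D2 (toy data)
]
w, b = train_multitask(tasks)
```

At test time, inputs from task `k` would be scored with `w * x + b[k]`, which mirrors the per-distribution testing shown in the diagram.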

**3. Domain Adaptation:**

* **Training:** T<sub>1</sub>, X, Y ~ D<sub>S</sub> and T<sub>1</sub>, X ~ D<sub>T</sub>

* **Process:** The learner is trained on data from a source domain (D<sub>S</sub>) and a target domain (D<sub>T</sub>).

* **Testing:** X ~ D<sub>T</sub>

* **Process:** The trained learner is tested on data from the target domain.
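
A minimal sketch of how unlabeled target data can help, assuming the only difference between domains is a constant shift of the inputs. The technique used here, subtracting each domain's own feature mean before applying a threshold classifier, is a simple stand-in chosen for illustration; the diagram does not name a specific adaptation method, and all function names are hypothetical.

```python
import random

def mean(values):
    return sum(values) / len(values)

def fit_threshold(xs, ys):
    """Use the midpoint between the two class means as a 1-D decision threshold."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (mean(pos) + mean(neg)) / 2.0

def accuracy(threshold, xs, ys):
    return mean([1.0 if (x > threshold) == (y == 1) else 0.0 for x, y in zip(xs, ys)])

rng = random.Random(3)
latent_s = [rng.gauss(0.0, 1.0) for _ in range(500)]
latent_t = [rng.gauss(0.0, 1.0) for _ in range(500)]
source_x = latent_s                                  # X ~ D_S
source_y = [1 if z > 0 else 0 for z in latent_s]     # Y available for the source only
target_x = [z + 3.0 for z in latent_t]               # X ~ D_T: same task, shifted inputs
target_y = [1 if z > 0 else 0 for z in latent_t]     # held out, used only for scoring

# Baseline: train on the source, apply to the target unchanged.
acc_plain = accuracy(fit_threshold(source_x, source_y), target_x, target_y)

# Adaptation: center each domain with its own (label-free) feature mean.
src_mean, tgt_mean = mean(source_x), mean(target_x)
t_aligned = fit_threshold([x - src_mean for x in source_x], source_y)
acc_aligned = accuracy(t_aligned, [x - tgt_mean for x in target_x], target_y)
```

The target labels never enter training; only the target inputs are used, matching the `T1, X ~ D_T` entry in the training box.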

**4. Transfer Learning:**

* **Training:** T<sub>S</sub>, X, Y ~ D<sub>S</sub>

* **Process:** The learner is trained on data from a source domain (D<sub>S</sub>).

* **Knowledge Transfer:** Knowledge is transferred from the source domain to the target domain.

* **Training:** T<sub>T</sub>, X, Y ~ D<sub>T</sub>

* **Process:** The learner is further trained on data from the target domain (D<sub>T</sub>).

* **Testing:** X ~ D<sub>T</sub>

* **Process:** The trained learner is tested on data from the target domain.
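
The source-then-target sequence can be sketched as pretraining followed by a short fine-tuning run. This is a toy under stated assumptions: the tasks are two related lines, "knowledge transfer" is modeled simply as reusing the pretrained parameters as the starting point, and `gd` and `mse` are hypothetical helper names.

```python
def gd(params, pairs, steps, lr=0.1):
    """Full-batch gradient descent on mean squared error for y = w*x + b."""
    w, b = params
    n = len(pairs)
    for _ in range(steps):
        gw = sum(2.0 * (w * x + b - y) * x for x, y in pairs) / n
        gb = sum(2.0 * (w * x + b - y) for x, y in pairs) / n
        w, b = w - lr * gw, b - lr * gb
    return w, b

def mse(params, pairs):
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in pairs) / len(pairs)

source = [(x, 2.0 * x + 1.0) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]  # T_S, X, Y ~ D_S
target = [(x, 2.0 * x + 1.5) for x in (-1.0, 0.0, 1.0)]             # T_T, X, Y ~ D_T (scarce)
target_test = [(x, 2.0 * x + 1.5) for x in (-0.75, 0.25, 0.8)]      # X ~ D_T, held out

pretrained = gd((0.0, 0.0), source, steps=500)    # train on the source task
fine_tuned = gd(pretrained, target, steps=5)      # transfer: start from source parameters
from_scratch = gd((0.0, 0.0), target, steps=5)    # same budget without transfer
```

With the same five-step budget on the target data, the fine-tuned model ends up much closer to the target line than the one trained from scratch, which is the benefit the red "Knowledge Transfer" node is meant to convey.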

**5. Online Learning:**

* **Training:** T<sub>1</sub>, x<sub>1</sub>, y<sub>1</sub> ~ D<sub>1</sub>, x<sub>2</sub>, y<sub>2</sub> ~ D<sub>1</sub>, x<sub>3</sub>, y<sub>3</sub> ~ D<sub>1</sub> ... x<sub>k</sub>, y<sub>k</sub> ~ D<sub>1</sub>

* **Process:** The learner is trained sequentially on data points.

* **Prediction:** X ~ D<sub>1</sub> ... X ~ D<sub>1</sub> ...

* **Process:** The trained learner is used to predict on data from the same distribution.
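
Sequential training on individual data points can be sketched as a per-example update loop. The example below assumes a linear model updated by stochastic gradient descent on squared error; the stream generator `d1_stream` and its parameters are made up for illustration.

```python
import random

def online_sgd(stream, lr=0.1):
    """Update y = w*x + b one example at a time; each example is seen once."""
    w, b = 0.0, 0.0
    for x, y in stream:
        err = (w * x + b) - y  # error of the current model on the incoming example
        w -= lr * err * x
        b -= lr * err
    return w, b

def d1_stream(rng, k):
    """Yield k pairs (x_i, y_i) ~ D1, here a noisy line y = 3x - 0.5 (assumed)."""
    for _ in range(k):
        x = rng.uniform(-1.0, 1.0)
        yield x, 3.0 * x - 0.5 + rng.gauss(0.0, 0.05)

rng = random.Random(1)
w, b = online_sgd(d1_stream(rng, 5000))
```

Nothing is stored between updates, so the learner can interleave prediction and training as new points arrive.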

**6. Meta Learning:**

* **Training:** T<sub>1</sub> ~ S<sub>1</sub>, X, Y ~ D<sub>1</sub>, T<sub>2</sub> ~ S<sub>1</sub>, X, Y ~ D<sub>2</sub> ... T<sub>k</sub> ~ S<sub>1</sub>, X, Y ~ D<sub>k</sub>

* **Process:** A meta-learner learns from a distribution of tasks.

* **Testing:** T ~ S<sub>1</sub>, X, Y ~ D<sub>t</sub>

* **Process:** The meta-learner is tested on a new task from the same distribution.

* **Learner:** A learner is trained on the new task.

* **Testing:** X ~ D<sub>t</sub>

* **Process:** The trained learner is tested on data from the new task's distribution.
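
The two-level structure (a meta-learner trained over tasks, then a learner adapted to a new task) can be sketched with a Reptile-style first-order update. The choice of Reptile is an assumption made for illustration, since the diagram does not name an algorithm; tasks here are toy lines `y = a*x` whose slope `a` is drawn from a task distribution, and all function names are hypothetical.

```python
import random

def adapt(w, pairs, steps=3, lr=0.1):
    """Inner loop: a few gradient steps on one task's data for y = w*x."""
    for _ in range(steps):
        grad = sum(2.0 * (w * x - y) * x for x, y in pairs) / len(pairs)
        w -= lr * grad
    return w

def sample_task(rng):
    """Draw a task T ~ S: a slope a plus a sampler for (x, y) pairs from that task."""
    a = rng.uniform(1.5, 2.5)
    def draw(n):
        return [(x, a * x) for x in (rng.uniform(-1.0, 1.0) for _ in range(n))]
    return a, draw

def meta_train(rng, meta_steps=2000, meta_lr=0.1):
    """Outer loop: move the shared initialization toward each task's adapted weight."""
    w0 = 0.0
    for _ in range(meta_steps):
        _, draw = sample_task(rng)
        w_task = adapt(w0, draw(10))
        w0 += meta_lr * (w_task - w0)
    return w0

rng = random.Random(2)
w0 = meta_train(rng)                 # meta-learned starting point

a_new, draw_new = sample_task(rng)   # unseen task T ~ S
support = draw_new(5)                # a handful of labeled examples for the new task
w_meta = adapt(w0, support)          # learner initialized by the meta-learner
w_scratch = adapt(0.0, support)      # same adaptation budget from a naive start
```

After the same three adaptation steps, `w_meta` lands closer to the new task's slope than `w_scratch`, which is the "fast adaptation" the meta-learner is meant to provide.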

**7. Continual Learning:**

* **Training:** T<sub>1</sub>, x<sub>1</sub>, y<sub>1</sub> ~ D<sub>1</sub> ... x<sub>k</sub>, y<sub>k</sub> ~ D<sub>1</sub>, T<sub>t</sub>, x<sub>1</sub>, y<sub>1</sub> ~ D<sub>1</sub> ... x<sub>k</sub>, y<sub>k</sub> ~ D<sub>1</sub>

* **Process:** The learner is trained sequentially on data points from different tasks.

* **Prediction:** X ~ D<sub>1</sub> ... X ~ D<sub>1</sub> ...

* **Process:** The trained learner is used to predict on data from the same distribution.
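
Sequential training across tasks raises the risk that later tasks overwrite what was learned earlier. The sketch below illustrates this with two tiny tasks and contrasts naive sequential training with replaying stored examples from the first task. Replay is one common mitigation chosen here as an assumption; the diagram does not prescribe a method, and the data and function names are invented for the example.

```python
def sgd(w, examples, epochs=500, lr=0.1):
    """Cycle through examples, updating a 2-weight linear model on squared error."""
    w = list(w)
    for _ in range(epochs):
        for x, y in examples:
            err = w[0] * x[0] + w[1] * x[1] - y
            w[0] -= lr * err * x[0]
            w[1] -= lr * err * x[1]
    return w

def loss(w, examples):
    return sum((w[0] * x[0] + w[1] * x[1] - y) ** 2 for x, y in examples) / len(examples)

task_a = [((1.0, 0.5), 1.0)]    # first task (toy data)
task_b = [((0.5, 1.0), -1.0)]   # second task, overlapping input features

w_a = sgd([0.0, 0.0], task_a)            # learn task A
w_naive = sgd(w_a, task_b)               # then train on B alone
w_replay = sgd(w_a, task_b + task_a)     # train on B while replaying stored A examples
```

Here `loss(w_naive, task_a)` becomes large after training on B alone, while `w_replay` fits both tasks, since a weight vector consistent with both exists and the replayed example keeps the model from drifting away from it.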

### Key Observations

* **Data Distribution:** The diagrams highlight the importance of data distribution (D) in different learning paradigms. Standard supervised learning assumes the training and testing data come from the same distribution. Domain adaptation and transfer learning deal with different distributions.

* **Task Variation:** Multi-task learning and meta-learning involve multiple tasks (T), while standard supervised learning focuses on a single task.

* **Learning Process:** The diagrams illustrate the different learning processes involved in each paradigm, such as simultaneous learning (multi-task), knowledge transfer (transfer learning), and sequential learning (online and continual learning).

### Interpretation

The diagram provides a high-level overview of different machine learning paradigms and their key characteristics. It emphasizes the importance of data distribution, task variation, and the learning process in each paradigm. The diagrams are useful for understanding the differences between these paradigms and for choosing the appropriate paradigm for a given problem.

* **Standard Supervised Learning:** Serves as the baseline, where the model learns from labeled data and is tested on data from the same distribution.

* **Multi-Task Learning:** Improves generalization by learning multiple related tasks simultaneously.

* **Domain Adaptation:** Addresses the problem of distribution shift between training and testing data.

* **Transfer Learning:** Leverages knowledge gained from a source domain to improve learning in a target domain.

* **Online Learning:** Adapts to new data as it becomes available, making it suitable for dynamic environments.

* **Meta Learning:** Learns how to learn, enabling fast adaptation to new tasks.

* **Continual Learning:** Learns new tasks without forgetting previously learned ones.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Machine Learning Paradigms

### Overview

The image presents a comparative diagram illustrating seven machine learning paradigms: Standard Supervised Learning, Multi-Task Learning, Transfer Learning, Meta Learning, Domain Adaptation, Online Learning, and Continual Learning. Each paradigm is depicted as a two-stage process (Training, then Test or Predict) with visual representations of data flow and model components. The diagram emphasizes how each approach handles data and learning processes differently.

### Components/Axes

The diagram is structured into seven sections, one per learning paradigm. Each section is further divided into two parts: "Training" (top) and "Test" (bottom), separated by a horizontal line.

Common elements across paradigms include:

* **Input Data:** Represented as `X` (input features) and `Y` (labels) or `D` (domain). Subscripts indicate different tasks or datasets (e.g., `X₁`, `Y₁`, `D₁`).

* **Learner:** A rectangular orange block representing the machine learning model.

* **Meta Learner:** A rectangular orange block representing the meta-learning model.

* **Knowledge Transfer:** A red circle with the text "Knowledge Transfer" inside, representing the transfer of knowledge from one task to another.

* **Prediction:** The "Predict" label is used in Online and Continual Learning.

Specific labels for each paradigm are:

* **Standard Supervised Learning:** No additional labels.

* **Multi-Task Learning:** `T₁` to `Tₖ` (representing multiple tasks).

* **Transfer Learning:** `Tₛ` (source task), `Tₜ` (target task).

* **Meta Learning:** `Tₛ, Sᵢ` (source tasks and samples).

* **Domain Adaptation:** `T₁`, `T₂` (representing different domains).

* **Online Learning:** `X₁, Y₁`, `X₂, Y₂`, `Xₙ, Yₙ` (representing sequential data).

* **Continual Learning:** `T₁`, `T₂`, `Tₙ` (representing sequential tasks).

### Detailed Analysis

Let's analyze each paradigm individually:

1. **Standard Supervised Learning:**

* **Training:** Input `X₁Y₁` flows into the "Learner".

* **Test:** Input `X ~ D₁` flows into the "Learner".

2. **Multi-Task Learning:**

* **Training:** Multiple inputs `X₁Y₁` to `XₖYₖ` flow into the "Learner".

* **Test:** Input `X ~ D₁` flows into the "Learner".

3. **Transfer Learning:**

* **Training:** Input `X₁Y₁` flows into the "Learner", with a "Knowledge Transfer" connection to the next stage.

* **Test:** Input `X ~ D₁` flows into the "Learner".

4. **Meta Learning:**

* **Training:** Multiple inputs `Tₛ, Sᵢ` and `XᵢYᵢ` flow into the "Meta Learner".

* **Test:** Input `X ~ D₁` flows into the "Learner".

5. **Domain Adaptation:**

* **Training:** Inputs `X₁Y₁` and `X₂Y₂` flow into the "Learner".

* **Test:** Input `X ~ D₁` flows into the "Learner".

6. **Online Learning:**

* **Training:** Sequential inputs `X₁, Y₁`, `X₂, Y₂`, ..., `Xₙ, Yₙ` flow into the "Learner".

* **Predict:** Sequential inputs `X ~ D₁`, `X ~ D₂`, ..., `X ~ Dₙ` flow into the "Learner".

7. **Continual Learning:**

* **Training:** Sequential inputs `X₁, Y₁`, `X₂, Y₂`, ..., `Xₙ, Yₙ` flow into the "Learner".

* **Predict:** Sequential inputs `X ~ D₁`, `X ~ D₂`, ..., `X ~ Dₙ` flow into the "Learner".

### Key Observations

* All paradigms share a common structure of training and testing phases.

* The key difference lies in how the data is presented and utilized during training.

* Multi-Task Learning uses multiple tasks simultaneously.

* Transfer Learning explicitly highlights the transfer of knowledge.

* Meta Learning employs a "Meta Learner" to learn how to learn.

* Domain Adaptation focuses on adapting to different data domains.

* Online and Continual Learning handle sequential data streams.

### Interpretation

The diagram effectively illustrates the diverse approaches within machine learning, each designed to address specific challenges. Standard Supervised Learning serves as a baseline, while the other paradigms represent advancements aimed at improving generalization, efficiency, and adaptability.

* **Multi-Task Learning** aims to leverage shared representations across tasks, potentially improving performance on each individual task.

* **Transfer Learning** seeks to apply knowledge gained from one task to another, reducing the need for extensive training data.

* **Meta Learning** focuses on learning the learning procedure itself, enabling faster adaptation to new tasks.

* **Domain Adaptation** addresses the issue of domain shift, where the training and testing data distributions differ.

* **Online Learning** and **Continual Learning** are designed for dynamic environments where data arrives sequentially, requiring models to adapt continuously.

The diagram highlights the evolution of machine learning from static, task-specific models to more flexible and adaptive systems. The use of visual cues like arrows and different block types effectively conveys the flow of information and the core concepts of each paradigm. The diagram does not provide quantitative data, but rather a qualitative comparison of different learning strategies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Machine Learning Paradigms Comparison

### Overview

The image is a technical diagram comparing seven machine learning paradigms. It visually contrasts their training and testing/prediction workflows using a consistent color-coded schematic. The primary language is English. The diagram is laid out with one column on the left for the baseline and a 2x3 grid for the other paradigms.

### Components/Axes

The diagram is segmented into seven distinct boxes, each representing a learning paradigm. Each box is divided into two horizontal sections: **Training** (top) and **Test** or **Predict** (bottom).

**Color Legend (Implicit):**

* **Blue Boxes:** Represent Tasks (labeled T₁, T₂, etc.) and their associated data distributions (D₁, D₂, etc.).

* **Orange Boxes:** Represent a "Learner" model.

* **Green Boxes:** Represent data used for testing or prediction.

* **Red Oval:** Represents "Knowledge Transfer" (only in Transfer Learning).

* **Light Orange Box:** Represents a "Meta Learner" (only in Meta Learning).

**Spatial Layout:**

* **Left Column:** Contains the baseline paradigm, "Standard Supervised Learning."

* **Right 2x3 Grid:** Contains the other six paradigms. The top row (left to right): Multi Task Learning, Transfer Learning, Meta Learning. The bottom row (left to right): Domain Adaptation, Online Learning, Continual Learning.

### Detailed Analysis

**1. Standard Supervised Learning (Left Column)**

* **Training:** A single task `T₁` with data `(X,Y) ~ D₁` feeds into a `Learner`.

* **Test:** The trained `Learner` is applied to new data `X ~ D₁`.

**2. Multi Task Learning (Top-Left of Grid)**

* **Training:** Multiple tasks `T₁` to `Tₙ`, each with data `(X,Y) ~ D₁` to `(X,Y) ~ Dₙ`, all feed into a single shared `Learner`.

* **Test:** The shared `Learner` is applied to data `X ~ D₂` (a different distribution than any training distribution).

**3. Transfer Learning (Top-Center of Grid)**

* **Training:** A source task `Tₛ` with data `(X,Y) ~ D₁` feeds into a `Learner`. A red oval labeled `Knowledge Transfer` connects this to a second `Learner` for a target task `Tₜ` with data `(X,Y) ~ D₂`.

* **Test:** The target task's `Learner` is applied to data `X ~ D₂`.

**4. Meta Learning (Top-Right of Grid)**

* **Training:** Multiple tasks `T₁` to `Tₙ`, each with data `(X,Y) ~ D₁`, feed into a `Meta Learner`.

* **Test:** A new task `Tₜ ~ Sₜ` (where `Sₜ` likely represents a task distribution) with data `(X,Y) ~ D₂` is given to the `Meta Learner`, which produces a new task-specific `Learner`. This new `Learner` is then applied to data `X ~ D₂`.

**5. Domain Adaptation (Bottom-Left of Grid)**

* **Training:** Two tasks, `T₁` and `T₂`, both with data `(X,Y) ~ D₁`, feed into a single `Learner`.

* **Test:** The `Learner` is applied to data `X ~ D₂`.

**6. Online Learning (Bottom-Center of Grid)**

* **Training:** A single task `T₁` receives a sequential stream of data points: `(x₁,y₁) ~ D₁, (x₂,y₂) ~ D₁, ..., (xₙ,yₙ) ~ D₁`. All points feed into the `Learner`.

* **Predict:** The `Learner` makes predictions on a sequential stream of new data: `X ~ D₂, X ~ D₂, X ~ D₂, ...`.

**7. Continual Learning (Bottom-Right of Grid)**

* **Training:** A sequence of tasks `T₁` to `Tₙ`, each with its own data stream `(x₁,y₁) ~ D₁, ..., (xₙ,yₙ) ~ D₁`, all feed into a single `Learner`.

* **Predict:** The `Learner` must handle a mixed stream of data: `X ~ D₂, ...` (new domain) and also `x₁ ~ D₁, ..., xₙ ~ D₁, ...` (data from previous tasks/domains).

### Key Observations

* **Consistent Visual Language:** The diagram uses identical shapes and colors for analogous components (tasks, learners, data) across all paradigms, enabling direct visual comparison.

* **Increasing Complexity:** The paradigms progress from a simple single-task setup (Standard) to more complex scenarios involving multiple tasks, sequential data, or knowledge transfer.

* **Key Differentiators:** The critical differences lie in the *number of tasks* during training, the *relationship between training and test data distributions* (same D₁ vs. different D₂), and the *structure of the learning process* (single learner, meta-learner, sequential updates).

* **Data Flow:** Arrows clearly indicate the direction of information flow from data/tasks, through the learner(s), to the output (test/predict).

### Interpretation

This diagram serves as a conceptual map for understanding the landscape of modern machine learning approaches beyond basic supervised learning. It highlights how each paradigm addresses a specific challenge:

* **Multi Task Learning** aims to improve generalization by learning related tasks jointly.

* **Transfer Learning** leverages knowledge from a rich source domain to perform better on a target domain with potentially less data.

* **Meta Learning** ("learning to learn") focuses on creating models that can rapidly adapt to new tasks with minimal data.

* **Domain Adaptation** specifically tackles the problem where training and test data come from different but related distributions.

* **Online and Continual Learning** deal with non-stationary environments where data arrives sequentially, and the model must update or remember without catastrophic forgetting.

The visual juxtaposition emphasizes that the choice of paradigm depends fundamentally on the structure of the available data (single vs. multiple tasks, static vs. sequential) and the ultimate goal (generalization, adaptation, or lifelong learning). The consistent use of `D₁` for training and `D₂` for testing in most paradigms (except Standard) underscores the common challenge of distributional shift that these advanced methods are designed to overcome.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Machine Learning Paradigms Comparison

### Overview

The diagram illustrates six machine learning paradigms (Standard Supervised Learning, Domain Adaptation, Online Learning, Multi-Task Learning, Transfer Learning, and Meta Learning) through a structured layout of training, test, and learner components. Arrows indicate data flow, knowledge transfer, and task relationships.

### Components/Axes

- **Left Column**:

  - **Standard Supervised Learning**: Single task `T₁` with training data `X,Y ~ D₁` and test data `X ~ D₁`.

  - **Domain Adaptation**: Training on `T₁` with source domain `X,Y ~ Dₛ` and target domain `X ~ Dₜ`.

  - **Online Learning**: Continuous training on `T₁` with sequential data `X₁,Y₁ ~ D₁` to `Xₖ,Yₖ ~ D₁`, predicting `X ~ D₁`.

- **Right Column**:

  - **Multi-Task Learning**: Shared learner for tasks `T₁` to `Tₖ` with data `X,Y ~ D₁` to `X,Y ~ Dₖ`.

  - **Transfer Learning**: Knowledge transfer (red oval) from source task `Tₛ` (`X,Y ~ Dₛ`) to target task `Tₜ` (`X,Y ~ Dₜ`).

  - **Meta Learning**: Meta learner handling tasks `T₁` to `Tₖ` with shared structure `X,Y ~ D₁` to `X,Y ~ Dₖ`, testing on `X ~ Dₜ`.

- **Color Coding**:

  - Blue: Training data (`X,Y ~ D`).

  - Green: Test data (`X ~ D`).

  - Orange: Learner component.

  - Red: Knowledge transfer (Transfer Learning only).

### Detailed Analysis

1. **Standard Supervised Learning**:

   - Simple pipeline: Training (`T₁`) → Learner → Test (`X ~ D₁`).

   - No adaptation or transfer; assumes static, task-specific data.

2. **Domain Adaptation**:

   - Addresses domain shift: Training on source domain `Dₛ` → Learner → Test on target domain `Dₜ`.

3. **Online Learning**:

   - Sequential data ingestion: `X₁,Y₁ ~ D₁` to `Xₖ,Yₖ ~ D₁` → Learner → Predictions on `X ~ D₁`.

   - Emphasizes real-time adaptation to streaming data.

4. **Multi-Task Learning**:

   - Shared learner across tasks `T₁` to `Tₖ` with distinct datasets `D₁` to `Dₖ`.

   - Leverages cross-task knowledge for improved generalization.

5. **Transfer Learning**:

   - Explicit knowledge transfer (red oval) from source task `Tₛ` to target task `Tₜ`.

   - Source task `Tₛ` trains on `Dₛ`; target task `Tₜ` tests on `Dₜ`.

6. **Meta Learning**:

   - Meta learner generalizes across tasks `T₁` to `Tₖ` with shared structure.

   - Tests on unseen task `Tₜ` with data `X ~ Dₜ`.

### Key Observations

- **Hierarchy of Complexity**: Paradigms progress from simple (Standard Supervised) to complex (Meta Learning).

- **Knowledge Reuse**: Transfer Learning and Meta Learning explicitly model knowledge reuse across tasks/domains.

- **Dynamic Data Handling**: Online Learning and Domain Adaptation address non-stationary or domain-shifted data.

- **Shared Learners**: Multi-Task and Meta Learning use shared architectures for efficiency.

### Interpretation

The diagram highlights the evolution of machine learning from task-specific models to adaptive, generalizable frameworks. Transfer Learning and Meta Learning emphasize leveraging prior knowledge, while Domain Adaptation and Online Learning focus on real-world data challenges. The red "Knowledge Transfer" oval in Transfer Learning underscores its role as a bridge between source and target tasks. Meta Learning’s "Meta Learner" abstracts task-specific patterns, enabling rapid adaptation to new tasks. This progression reflects the field’s shift toward robustness, efficiency, and scalability in dynamic environments.
