
## Diagram: Machine Learning Paradigms

### Overview

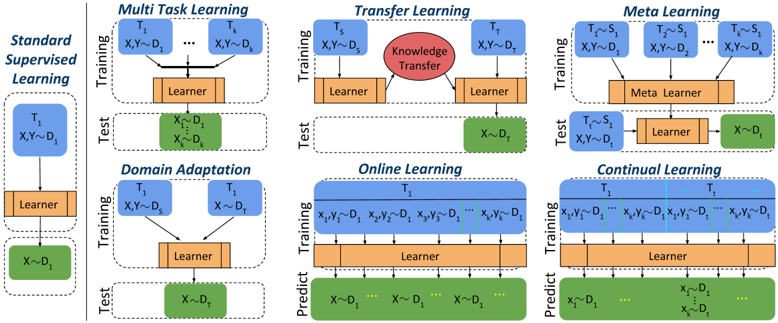

The image presents a comparative diagram illustrating seven machine learning paradigms: Standard Supervised Learning, Multi-Task Learning, Transfer Learning, Meta Learning, Domain Adaptation, Online Learning, and Continual Learning. Each paradigm is depicted as a two-stage process (Training and Test) with visual representations of data flow and model components. The diagram emphasizes how each approach handles data and learning differently.

### Components/Axes

The diagram is structured into seven vertical columns, one per learning paradigm. Each column is divided into two sections, "Training" (top) and "Test" (bottom), separated by a horizontal line.

Common elements across paradigms include:

* **Input Data:** Represented as `X` (input features) and `Y` (labels) or `D` (domain). Subscripts indicate different tasks or datasets (e.g., `X₁`, `Y₁`, `D₁`).

* **Learner:** A rectangular orange block representing the machine learning model.

* **Meta Learner:** A rectangular orange block representing the meta-learning model.

* **Knowledge Transfer:** A red circle with the text "Knowledge Transfer" inside, representing the transfer of knowledge from one task to another.

* **Prediction:** The "Predict" label is used in Online and Continual Learning.

Specific labels for each paradigm are:

* **Standard Supervised Learning:** No additional labels.

* **Multi-Task Learning:** `T₁` to `Tₖ` (representing multiple tasks).

* **Transfer Learning:** `Tₛ` (source task), `Tₜ` (target task).

* **Meta Learning:** `Tₛ, Sᵢ` (source tasks and samples).

* **Domain Adaptation:** `T₁`, `T₂` (representing different domains).

* **Online Learning:** `X₁, Y₁`, `X₂, Y₂`, `Xₙ, Yₙ` (representing sequential data).

* **Continual Learning:** `T₁`, `T₂`, `Tₙ` (representing sequential tasks).

### Detailed Analysis or Content Details

Let's analyze each paradigm individually:

1. **Standard Supervised Learning:**

* **Training:** Input `X₁Y₁` flows into the "Learner".

* **Test:** Input `X~₁D₁` flows into the "Learner".

2. **Multi-Task Learning:**

* **Training:** Multiple inputs `X₁Y₁` to `XₖYₖ` flow into the "Learner".

* **Test:** Input `X~₁D₁` flows into the "Learner".

3. **Transfer Learning:**

* **Training:** Input `X₁Y₁` flows into the "Learner", with a "Knowledge Transfer" connection to the next stage.

* **Test:** Input `X~₁D₁` flows into the "Learner".

4. **Meta Learning:**

* **Training:** Multiple inputs `Tₛ, Sᵢ` and `XᵢYᵢ` flow into the "Meta Learner".

* **Test:** Input `X~₁D₁` flows into the "Learner".

5. **Domain Adaptation:**

* **Training:** Inputs `X₁Y₁` and `X₂Y₂` flow into the "Learner".

* **Test:** Input `X~₁D₁` flows into the "Learner".

6. **Online Learning:**

* **Training:** Sequential inputs `X₁, Y₁`, `X₂, Y₂`, ..., `Xₙ, Yₙ` flow into the "Learner".

* **Predict:** Sequential inputs `X~₁, D₁`, `X~₂, D₂`, ..., `X~ₙ, Dₙ` flow into the "Learner".

7. **Continual Learning:**

* **Training:** Sequential inputs `X₁, Y₁`, `X₂, Y₂`, ..., `Xₙ, Yₙ` flow into the "Learner".

* **Predict:** Sequential inputs `X~₁, D₁`, `X~₂, D₂`, ..., `X~ₙ, Dₙ` flow into the "Learner".
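The sequential flow described for Online Learning can be illustrated with a minimal sketch. It is not taken from the diagram: the class `OnlineLinearLearner`, the helper `run_stream`, the learning rate, and the synthetic stream drawn from `y = 2x + 1` are all assumptions made for illustration. The learner predicts on each sample as it arrives and is then updated with that sample's label.

```python
from typing import List, Tuple


class OnlineLinearLearner:
    """Hypothetical 1-D linear model y ~ w*x + b, updated one sample at a time."""

    def __init__(self, lr: float = 0.05) -> None:
        self.w = 0.0
        self.b = 0.0
        self.lr = lr

    def predict(self, x: float) -> float:
        return self.w * x + self.b

    def update(self, x: float, y: float) -> None:
        # One stochastic gradient step on the squared error of a single pair.
        err = self.predict(x) - y
        self.w -= self.lr * err * x
        self.b -= self.lr * err


def run_stream(learner: OnlineLinearLearner,
               stream: List[Tuple[float, float]]) -> List[float]:
    """Predict on each sample first, then learn from its label."""
    abs_errors = []
    for x, y in stream:
        abs_errors.append(abs(learner.predict(x) - y))
        learner.update(x, y)
    return abs_errors


# Synthetic, noise-free stream (an assumption made for this example).
stream = [(i / 10.0, 2.0 * (i / 10.0) + 1.0) for i in range(-10, 11)] * 50
learner = OnlineLinearLearner()
errors = run_stream(learner, stream)
```

Because the model is updated after every prediction, the per-sample error in `errors` shrinks as the stream progresses, which is the behavior the "Predict" stage of the column is meant to convey.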

### Key Observations

* All paradigms are drawn with two phases: a training phase followed by a test phase (labeled "Predict" for Online and Continual Learning).

* The key difference lies in how the data is presented and utilized during training.

* Multi-Task Learning uses multiple tasks simultaneously.

* Transfer Learning explicitly highlights the transfer of knowledge.

* Meta Learning employs a "Meta Learner" to learn how to learn.

* Domain Adaptation focuses on adapting to different data domains.

* Online Learning handles a sequential stream of samples, and Continual Learning handles a sequence of tasks.
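To make the Multi-Task Learning observation concrete, here is a small sketch of joint training on several tasks at once. Everything in it is an assumption for illustration rather than something shown in the diagram: the function `fit_multitask`, the choice of a single shared slope `w` with one bias per task, and the three synthetic tasks.

```python
from typing import List, Tuple

Sample = Tuple[float, float]


def fit_multitask(tasks: List[List[Sample]],
                  lr: float = 0.1,
                  epochs: int = 200) -> Tuple[float, List[float]]:
    """Jointly fit y ~ w*x + b_k, with w shared and b_k specific to task k."""
    w = 0.0
    biases = [0.0] * len(tasks)
    for _ in range(epochs):
        for k, data in enumerate(tasks):
            for x, y in data:
                err = (w * x + biases[k]) - y
                w -= lr * err * x        # shared parameter: updated by every task
                biases[k] -= lr * err    # task-specific parameter
    return w, biases


# Three synthetic tasks that share a slope of 3.0 but differ in offset.
xs = [i / 10.0 for i in range(-10, 11)]
tasks = [
    [(x, 3.0 * x + 0.0) for x in xs],
    [(x, 3.0 * x + 2.0) for x in xs],
    [(x, 3.0 * x - 1.0) for x in xs],
]
w, biases = fit_multitask(tasks)
```

The shared parameter receives gradient updates from all tasks, while each bias only sees its own task. This is one simple way to realize "multiple tasks simultaneously"; practical systems typically share a larger representation.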

### Interpretation

The diagram effectively illustrates the diverse approaches within machine learning, each designed to address specific challenges. Standard Supervised Learning serves as a baseline, while the other paradigms represent advancements aimed at improving generalization, efficiency, and adaptability.

* **Multi-Task Learning** aims to leverage shared representations across tasks, potentially improving performance on each individual task.

* **Transfer Learning** seeks to apply knowledge gained from one task to another, reducing the need for extensive training data.

* **Meta Learning** focuses on learning how to learn, enabling faster adaptation to new tasks.

* **Domain Adaptation** addresses the issue of domain shift, where the training and testing data distributions differ.

* **Online Learning** and **Continual Learning** are designed for dynamic environments where samples (Online Learning) or tasks (Continual Learning) arrive sequentially, requiring models to adapt continuously.
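The Transfer Learning claim above (reusing knowledge to reduce the need for target data) can be sketched with a warm start. This is an illustrative setup under stated assumptions, not the diagram's method: the helpers `fit` and `mse`, the related source and target tasks, and the two-sample target training set are all hypothetical.

```python
from typing import List, Tuple

Sample = Tuple[float, float]


def fit(data: List[Sample], w: float = 0.0, b: float = 0.0,
        lr: float = 0.1, epochs: int = 5) -> Tuple[float, float]:
    """Fit y ~ w*x + b by per-sample gradient steps, starting from (w, b)."""
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return w, b


def mse(data: List[Sample], w: float, b: float) -> float:
    return sum(((w * x + b) - y) ** 2 for x, y in data) / len(data)


xs = [i / 10.0 for i in range(-10, 11)]
source = [(x, 2.0 * x + 1.0) for x in xs]                   # plentiful source data
target_train = [(x, 2.2 * x + 1.2) for x in (-0.5, 0.5)]    # only two target samples
target_test = [(x, 2.2 * x + 1.2) for x in xs]

# Learn on the source task, then fine-tune briefly on the target task.
w_src, b_src = fit(source, epochs=200)
w_tr, b_tr = fit(target_train, w=w_src, b=b_src, epochs=3)

# Baseline: the same brief training on the target task, starting from zero.
w_sc, b_sc = fit(target_train, epochs=3)

transfer_error = mse(target_test, w_tr, b_tr)
scratch_error = mse(target_test, w_sc, b_sc)
```

With these related tasks, `transfer_error` comes out lower than `scratch_error` because the fine-tuned model starts close to the target solution. The benefit depends on how similar the source and target tasks are.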

The diagram highlights the evolution of machine learning from static, task-specific models to more flexible and adaptive systems. The use of visual cues like arrows and different block types effectively conveys the flow of information and the core concepts of each paradigm. The diagram does not provide quantitative data, but rather a qualitative comparison of different learning strategies.