## Diagram: Machine Learning Paradigms Comparison

### Overview

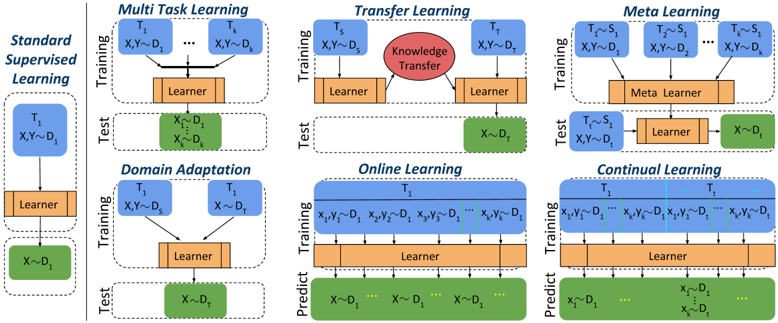

The diagram illustrates six machine learning paradigms (Standard Supervised Learning, Domain Adaptation, Online Learning, Multi-Task Learning, Transfer Learning, and Meta Learning) through a structured layout of training, test, and learner components. Arrows indicate data flow, knowledge transfer, and task relationships.

### Components/Axes

- **Left Column**:

  - **Standard Supervised Learning**: Single task `T₁` with training data `X,Y ~ D₁` and test data `X ~ D₁`.

  - **Domain Adaptation**: Training on `T₁` with source domain `X,Y ~ Dₛ` and target domain `X ~ Dₜ`.

  - **Online Learning**: Continuous training on `T₁` with sequential data `X₁,Y₁ ~ D₁` to `Xₖ,Yₖ ~ D₁`, predicting `X ~ D₁`.

- **Right Column**:

  - **Multi-Task Learning**: Shared learner for tasks `T₁` to `Tₖ` with data `X,Y ~ D₁` to `X,Y ~ Dₖ`.

  - **Transfer Learning**: Knowledge transfer (red oval) from source task `Tₛ` (`X,Y ~ Dₛ`) to target task `Tₜ` (`X,Y ~ Dₜ`).

  - **Meta Learning**: Meta learner handling tasks `T₁` to `Tₖ` with shared structure `X,Y ~ D₁` to `X,Y ~ Dₖ`, testing on `X ~ Dₜ`.

- **Color Coding**:

  - Blue: Training data (`X,Y ~ D`).

  - Green: Test data (`X ~ D`).

  - Orange: Learner component.

  - Red: Knowledge transfer (Transfer Learning only).
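The six configurations listed above can be written down as plain data, which makes it easy to see which paradigms test on a distribution that never supplied labeled training data. The following is a minimal sketch, not something taken from the diagram: the names `Paradigm`, `PARADIGMS`, and `has_distribution_shift` are hypothetical, `k = 3` is an arbitrary choice for illustration, and Multi-Task Learning gets `test_dist=None` only because the description above does not state a test distribution for it.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

K = 3  # assumed number of tasks, chosen only for illustration


@dataclass(frozen=True)
class Paradigm:
    name: str
    train_dists: Tuple[str, ...]  # distributions supplying labeled (X, Y) pairs
    test_dist: Optional[str]      # distribution supplying unlabeled X, if stated
    sequential: bool = False      # True when training data arrives one batch at a time


# D1..Dk as strings, e.g. ("D1", "D2", "D3") for K = 3
TASK_DISTS = tuple(f"D{i}" for i in range(1, K + 1))

PARADIGMS = (
    Paradigm("Standard Supervised Learning", ("D1",), "D1"),
    Paradigm("Domain Adaptation", ("Ds",), "Dt"),
    Paradigm("Online Learning", ("D1",), "D1", sequential=True),
    Paradigm("Multi-Task Learning", TASK_DISTS, None),
    Paradigm("Transfer Learning", ("Ds", "Dt"), "Dt"),
    Paradigm("Meta Learning", TASK_DISTS, "Dt"),
)


def has_distribution_shift(p: Paradigm) -> bool:
    """True when the test distribution provided no labeled training data."""
    return p.test_dist is not None and p.test_dist not in p.train_dists


shifted = [p.name for p in PARADIGMS if has_distribution_shift(p)]
print(shifted)
```

Under these assumptions, only Domain Adaptation and Meta Learning are flagged, since Transfer Learning is described as having labeled target data `X,Y ~ Dₜ`.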

### Detailed Analysis

1. **Standard Supervised Learning**:

   - Simple pipeline: Training (`T₁`) → Learner → Test (`X ~ D₁`).

   - No adaptation or transfer; training and test data are assumed to come from the same distribution `D₁`.

2. **Domain Adaptation**:

   - Addresses domain shift: Training on source domain `Dₛ` → Learner → Test on target domain `Dₜ`.

3. **Online Learning**:

   - Sequential data ingestion: `X₁,Y₁ ~ D₁` to `Xₖ,Yₖ ~ D₁` → Learner → Predictions on `X ~ D₁`.

   - Emphasizes updating the learner incrementally as streaming data arrives.

4. **Multi-Task Learning**:

   - Shared learner across tasks `T₁` to `Tₖ` with distinct datasets `D₁` to `Dₖ`.

   - Leverages cross-task knowledge for improved generalization.

5. **Transfer Learning**:

   - Explicit knowledge transfer (red oval) from source task `Tₛ` to target task `Tₜ`.

   - The source task `Tₛ` is trained on `Dₛ`; the target task `Tₜ` is evaluated on `Dₜ`.

6. **Meta Learning**:

   - Meta learner generalizes across tasks `T₁` to `Tₖ` with shared structure.

   - Tests on unseen task `Tₜ` with data `X ~ Dₜ`.
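To make the Online Learning pipeline concrete, the sketch below updates a learner one example at a time instead of fitting on a full dataset. It is an illustration under stated assumptions rather than the method behind the diagram: the learner is a 1-D linear model trained with stochastic gradient descent, the stream is synthetic and noise-free (`y = 2x + 1`), and the names `online_sgd` and `make_stream` are hypothetical.

```python
import random


def make_stream(k, seed=0):
    """Yield k pairs (x_i, y_i) from one fixed distribution (stands in for D1)."""
    rng = random.Random(seed)
    for _ in range(k):
        x = rng.uniform(-1.0, 1.0)
        yield x, 2.0 * x + 1.0  # assumed ground truth: y = 2x + 1


def online_sgd(stream, lr=0.1):
    """Update weight w and bias b after every example; nothing is stored."""
    w, b = 0.0, 0.0
    for x, y in stream:
        err = (w * x + b) - y  # prediction error on the newest example
        w -= lr * err * x      # gradient step for squared error
        b -= lr * err
    return w, b


w, b = online_sgd(make_stream(2000))
print(f"w={w:.3f}, b={b:.3f}")
```

Because every pair is drawn from the same distribution, the estimates settle near the assumed ground truth; the point of the sketch is only that the learner sees `X₁,Y₁` through `Xₖ,Yₖ` in order and never revisits earlier examples.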

### Key Observations

- **Hierarchy of Complexity**: Paradigms progress from a single task with one distribution (Standard Supervised Learning) to many training tasks plus an unseen test task (Meta Learning).

- **Knowledge Reuse**: Transfer Learning and Meta Learning explicitly model knowledge reuse across tasks/domains.

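- **Dynamic Data Handling**: Online Learning handles data that arrives sequentially (all drawn from `D₁` in the diagram), while Domain Adaptation handles a shift between the source distribution `Dₛ` and the target distribution `Dₜ`.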

- **Shared Learners**: Multi-Task Learning and Meta Learning each use a single learner shared across tasks `T₁` to `Tₖ`.
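The domain-shift observation can be illustrated with a toy experiment: fit a classifier on a source distribution, then score it on both source and shifted target data. Everything here is an assumption made for illustration, including the Gaussian class-conditional data, the shift of `2.0`, the midpoint-threshold classifier, and the helper names `sample`, `fit_threshold`, and `accuracy`. No adaptation method is applied; the sketch only shows the gap that Domain Adaptation is meant to close.

```python
import random


def sample(n, shift, rng):
    """Draw n labeled points; class c is centered at 2*c + shift."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        x = rng.gauss(2.0 * label + shift, 1.0)
        data.append((x, label))
    return data


def fit_threshold(data):
    """Place the decision threshold midway between the two class means."""
    xs0 = [x for x, y in data if y == 0]
    xs1 = [x for x, y in data if y == 1]
    return (sum(xs0) / len(xs0) + sum(xs1) / len(xs1)) / 2.0


def accuracy(threshold, data):
    """Fraction of points where 'x above threshold' matches the label."""
    return sum((x > threshold) == bool(y) for x, y in data) / len(data)


rng = random.Random(0)
source_train = sample(2000, 0.0, rng)  # stands in for X,Y ~ Ds
source_test = sample(2000, 0.0, rng)   # held-out data from Ds
target_test = sample(2000, 2.0, rng)   # stands in for Dt (inputs shifted)

threshold = fit_threshold(source_train)
acc_source = accuracy(threshold, source_test)
acc_target = accuracy(threshold, target_test)
print(f"source accuracy={acc_source:.2f}, target accuracy={acc_target:.2f}")
```

In this setup the threshold learned on the source data misclassifies most of the shifted class-0 points, so accuracy on the target set should come out noticeably lower than on the source set.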

### Interpretation

The diagram highlights the evolution of machine learning from task-specific models to adaptive, generalizable frameworks. Transfer Learning and Meta Learning emphasize leveraging prior knowledge, while Domain Adaptation and Online Learning focus on real-world data challenges. The red "Knowledge Transfer" oval in Transfer Learning underscores its role as a bridge between source and target tasks. Meta Learning’s "Meta Learner" abstracts task-specific patterns, enabling rapid adaptation to new tasks. This progression reflects the field’s shift toward robustness, efficiency, and scalability in dynamic environments.