\n

## Diagram: Interactive Theorem Prover Architecture

### Overview

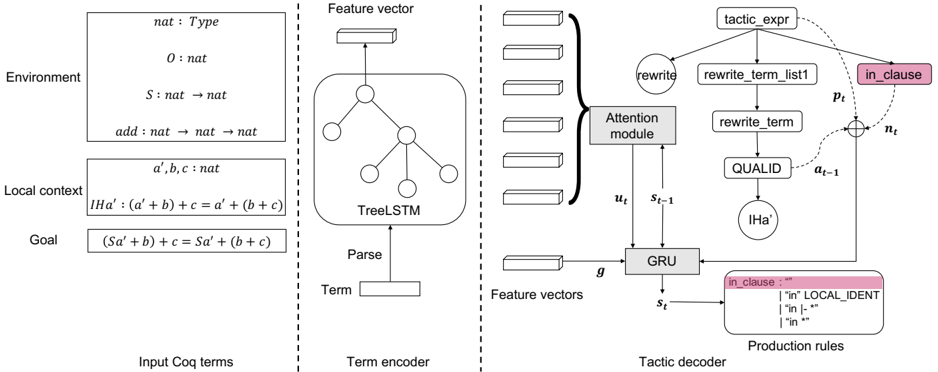

The image depicts the architecture of an interactive theorem prover, likely a system for formal verification or automated reasoning. It illustrates the flow of information from input Coq terms through a term encoder, and then to a tactic decoder, ultimately influencing the proof state. The diagram is segmented into three main sections: Input Coq terms, Term encoder, and Tactic decoder.

### Components/Axes

The diagram contains the following labeled components:

* **Environment:** Contains definitions like `nat : Type`, `O : nat`, `S : nat -> nat`, `add : nat -> nat -> nat`.

* **Local context:** Contains variables `a, b, c : nat` and a hypothesis `[Ha' : (a + b) + c = a' + (b + c)]`.

* **Goal:** Contains the goal `(Sa' + b) + c = Sa' + (b + c)`.

* **Input Coq terms:** The section containing the Environment, Local context, and Goal.

* **Parse:** A box indicating the parsing process.

* **TreeLSTM:** A component within the Term encoder, representing a Tree-structured Long Short-Term Memory network.

* **Term encoder:** The section responsible for encoding the Coq terms into feature vectors.

* **Feature vector:** Represents the output of the Term encoder.

* **Feature vectors:** Represents the output of the GRU.

* **GRU:** A Gated Recurrent Unit, part of the Tactic decoder.

* **Attention module:** A component within the Tactic decoder, used to focus on relevant parts of the input.

* **Tactic decoder:** The section responsible for generating tactics.

* **rewrite:** A label indicating a rewrite operation.

* **rewrite\_term\_list:** A list of terms to be rewritten.

* **rewrite\_term:** A single term to be rewritten.

* **QUALID:** A component within the Tactic decoder.

* **IHa:** A component within the Tactic decoder.

* **Production rules:** A section listing production rules like `"in clause :"` and `"in |-"`.

* **in\_clause:** A component within the Tactic decoder.

* **pt:** A variable representing a point.

* **nt:** A variable representing a new point.

* **u<sub>t</sub>:** Output of the attention module.

* **s<sub>t-1</sub>:** Previous state of the GRU.

* **s<sub>t</sub>:** Current state of the GRU.

* **g:** A function within the GRU.

* **tactic\_expr:** The output of the Tactic decoder.

### Detailed Analysis or Content Details

The diagram illustrates a sequential process:

1. **Input:** Coq terms (Environment, Local context, Goal) are provided as input.

2. **Parsing:** The input terms are parsed.

3. **Term Encoding:** The parsed terms are fed into a TreeLSTM network within the Term encoder, which transforms them into a feature vector.

4. **Feature Vector Processing:** The feature vector is then processed by a GRU, generating a sequence of feature vectors.

5. **Attention Mechanism:** The GRU's output is fed into an Attention module, which produces a context vector `u<sub>t</sub>`.

6. **Tactic Decoding:** The context vector `u<sub>t</sub>` and the previous state `s<sub>t-1</sub>` are used by the GRU to generate the current state `s<sub>t</sub>`.

7. **Tactic Generation:** The GRU's output is used to generate a tactic expression `tactic_expr` through components like `rewrite`, `rewrite_term_list`, `rewrite_term`, and `QUALID`.

8. **Application:** The tactic is applied to the proof state, potentially modifying the Local context and Goal.

The diagram also shows a feedback loop from the Tactic decoder back to the Attention module, indicated by the arrow from `a<sub>t-1</sub>` to the Attention module. The `in_clause` section lists production rules used in the tactic generation process, including `"in clause :"` and `"in |-"`.

### Key Observations

The diagram highlights the use of deep learning techniques (TreeLSTM, GRU, Attention) within an interactive theorem prover. The attention mechanism suggests that the system can focus on relevant parts of the input when generating tactics. The feedback loop indicates an iterative refinement process. The diagram is highly abstract and does not provide specific numerical values or data points.

### Interpretation

This diagram represents a modern approach to interactive theorem proving, leveraging the power of deep learning to automate parts of the proof process. The system aims to learn how to generate effective tactics based on the current proof state (Environment, Local context, Goal). The use of a TreeLSTM suggests that the system is capable of understanding the hierarchical structure of mathematical expressions. The attention mechanism allows the system to focus on the most relevant parts of the input, improving the efficiency and accuracy of tactic generation. The overall architecture suggests a system that can assist human mathematicians in the process of formal verification, potentially automating tedious or repetitive steps and helping to discover new proofs. The diagram does not provide information about the performance of the system or the types of theorems it can prove. It is a high-level architectural overview, focusing on the flow of information and the key components involved.