## Diagram: Neural Network Architecture for Tactic Prediction

### Overview

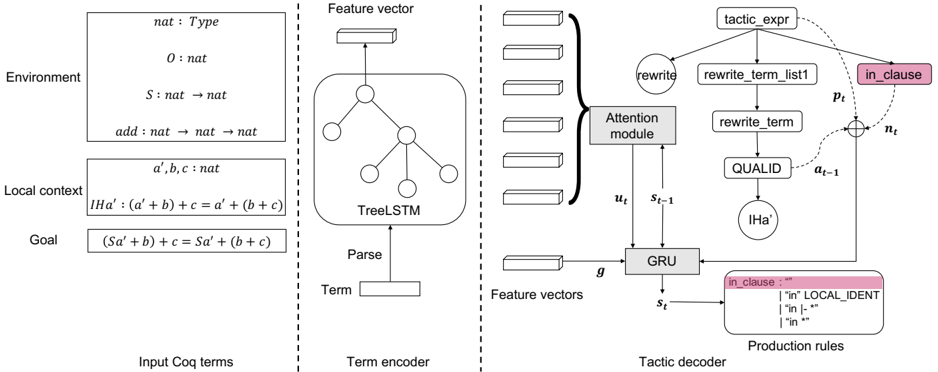

The diagram shows a neural network architecture for predicting tactics in the Coq proof assistant (its inputs are labeled as Coq terms). It has three main parts: an input section showing Coq terms, a term encoder based on a TreeLSTM, and a tactic decoder based on a GRU with an attention mechanism.
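
The term encoder begins by parsing a Coq term into a tree. The diagram does not show how parsing is done, so the following is only a minimal, hypothetical sketch: a recursive-descent parser for the small fragment used in the example (identifiers, function application, left-associative `+`, and `=`) that produces `(label, children)` tuples. The node labels `eq`, `add`, and `app` and the names `Parser` and `to_sexp` are illustrative assumptions; a real pipeline would more plausibly take the syntax tree from Coq itself than re-parse pretty-printed text.

```python
import re

# Hypothetical token set for the example fragment: identifiers (Coq allows
# primes, e.g. a'), parentheses, and the infix operators + and =.
TOKEN_RE = re.compile(r"[A-Za-z_][A-Za-z0-9_']*|[()+=]")
STOP = (None, "+", "=", ")")  # tokens that end an application

class Parser:
    def __init__(self, text):
        self.tokens = TOKEN_RE.findall(text)
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def expect(self, tok):
        if self.peek() != tok:
            raise SyntaxError(f"expected {tok!r}, got {self.peek()!r}")
        self.pos += 1

    def parse(self):
        tree = self.equality()
        if self.peek() is not None:
            raise SyntaxError(f"unexpected token {self.peek()!r}")
        return tree

    def equality(self):
        lhs = self.addition()
        if self.peek() == "=":
            self.pos += 1
            return ("eq", [lhs, self.addition()])
        return lhs

    def addition(self):
        # '+' is left-associative: a + b + c parses as (a + b) + c.
        node = self.application()
        while self.peek() == "+":
            self.pos += 1
            node = ("add", [node, self.application()])
        return node

    def application(self):
        # Application binds tighter than '+': S a' + b parses as (S a') + b.
        node = self.atom()
        while self.peek() not in STOP:
            node = ("app", [node, self.atom()])
        return node

    def atom(self):
        tok = self.peek()
        if tok == "(":
            self.pos += 1
            node = self.equality()
            self.expect(")")
            return node
        if tok in STOP:
            raise SyntaxError(f"unexpected token {tok!r}")
        self.pos += 1
        return (tok, [])  # leaf: an identifier with no children

def to_sexp(node):
    """Render a (label, children) tree as an S-expression string."""
    label, children = node
    if not children:
        return label
    return "(" + " ".join([label] + [to_sexp(c) for c in children]) + ")"

goal = Parser("(S a' + b) + c = S a' + (b + c)").parse()
print(to_sexp(goal))
# → (eq (add (add (app S a') b) c) (add (app S a') (add b c)))
```

The tree makes explicit the structure that the TreeLSTM exploits: both sides of the goal share the subterm `(app S a')`, which a sequence model would only see as a token pattern.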

### Components

* **Environment:** Type and function declarations:
  * `nat : Type`
  * `0 : nat`
  * `S : nat -> nat`
  * `add : nat -> nat -> nat`

* **Local context:** Variable declarations and the induction hypothesis:
  * `a', b, c : nat`
  * `IHa' : (a' + b) + c = a' + (b + c)`

* **Goal:** The current goal to be proven, which is the inductive step of a proof that addition is associative:
  * `(S a' + b) + c = S a' + (b + c)`

* **Input Coq terms:** Label for the left-most section containing the environment, local context, and goal.

* **Term encoder:** The middle section of the diagram, which encodes the input term.
  * **Term:** Input to the term encoder.
  * **Parse:** The parsing step, which converts the term into a tree.
  * **TreeLSTM:** A tree-structured LSTM that encodes the parsed term.
  * **Feature vector:** Output of the TreeLSTM, representing the encoded term.

* **Tactic decoder:** The right-most section of the diagram, which predicts the next tactic.
  * **Feature vectors:** The encoded terms, used as input to the attention module.
  * **Attention module:** Attends over the feature vectors using the previous decoder state `s_{t-1}` and passes its output `u_t` to the GRU.
  * **GRU:** Gated Recurrent Unit, the core of the tactic decoder. It receives `g` from the feature vectors and `u_t` from the attention module, and outputs the new state `s_t`.
  * **`tactic_expr`:** The predicted tactic expression, generated as a tree.
    * **`rewrite_term_list1`, `rewrite_term`, `QUALID`:** Nodes of the tactic expression.
    * **`IHa'`:** A leaf of the tactic expression that names the induction hypothesis from the local context, so the tactic being generated appears to be a `rewrite` using `IHa'`.
    * **`in_clause`:** A node of the tactic expression, highlighted in pink; it is the nonterminal whose production rules are listed next, and likely the node currently being expanded.
  * **Production rules:** The possible expansions of `in_clause`:
    * `"in" LOCAL_IDENT`
    * `"in |- *"`
    * `"in *"`
  * **`p_t`:** An input to the GRU at step `t`; the diagram does not define it, but in tree-structured decoders of this kind it most plausibly carries information about the parent of the node being expanded.
  * **`n_t`:** An input to the GRU at step `t`; most plausibly an embedding of the nonterminal being expanded (here `in_clause`).
  * **`a_{t-1}`:** The previous action, i.e. the decoding step taken at `t-1` (likely the production rule applied).
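
To make the TreeLSTM component concrete, here is a minimal sketch of how such an encoder turns a parsed term into a feature vector. It assumes the Child-Sum TreeLSTM variant, random untrained weights with bias terms omitted, a lazily built table of random label embeddings, and 4-dimensional vectors; none of these choices comes from the diagram, and `ChildSumTreeLSTM` is a hypothetical name. Each node's state is computed from its own label embedding and the states of its children, so the root's hidden state `h` summarizes the whole term.

```python
import math
import random

# Small pure-Python vector helpers (a real model would use a tensor library).
def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def vadd(*vs):
    return [sum(xs) for xs in zip(*vs)]

def vmul(a, b):
    return [x * y for x, y in zip(a, b)]

def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-x)) for x in v]

def vtanh(v):
    return [math.tanh(x) for x in v]

class ChildSumTreeLSTM:
    """Child-Sum TreeLSTM with random, untrained weights (illustration only)."""

    def __init__(self, dim_in, dim_h, seed=0):
        self.rng = random.Random(seed)
        self.dim_in, self.dim_h = dim_in, dim_h
        # One (W, U) pair per gate: input i, forget f, output o, candidate u.
        self.W = {g: self._mat(dim_h, dim_in) for g in "ifou"}
        self.U = {g: self._mat(dim_h, dim_h) for g in "ifou"}
        self.embeddings = {}  # hypothetical table: node label -> input vector

    def _mat(self, rows, cols):
        return [[self.rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    def embed(self, label):
        # Assign each distinct node label a random embedding on first use.
        if label not in self.embeddings:
            self.embeddings[label] = [self.rng.uniform(-1.0, 1.0) for _ in range(self.dim_in)]
        return self.embeddings[label]

    def _pre(self, gate, x, h):
        return vadd(matvec(self.W[gate], x), matvec(self.U[gate], h))

    def encode(self, node):
        """Return (h, c) for a (label, children) tree, computed bottom-up."""
        label, children = node
        states = [self.encode(child) for child in children]  # children first
        x = self.embed(label)
        h_sum = vadd([0.0] * self.dim_h, *[h for h, _ in states])  # sum of child states
        i = sigmoid(self._pre("i", x, h_sum))
        o = sigmoid(self._pre("o", x, h_sum))
        u = vtanh(self._pre("u", x, h_sum))
        c = vmul(i, u)
        for h_k, c_k in states:
            f_k = sigmoid(self._pre("f", x, h_k))  # a separate forget gate per child
            c = vadd(c, vmul(f_k, c_k))
        h = vmul(o, vtanh(c))
        return h, c

# `S a' + b`, written by hand as a (label, children) tree.
tree = ("add", [("app", [("S", []), ("a'", [])]), ("b", [])])
encoder = ChildSumTreeLSTM(dim_in=4, dim_h=4)
feature_vector, _ = encoder.encode(tree)
print(len(feature_vector))  # → 4
```

The per-child forget gates let the cell keep or discard each subterm's memory independently, which is one reason tree-structured LSTMs suit nested expressions.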

### Detailed Analysis

The diagram traces the flow of information from the input Coq terms through the term encoder to the tactic decoder. A term is parsed into a tree, and the TreeLSTM encodes that tree bottom-up into a feature vector, capturing the term's structure. The decoder then builds the tactic step by step: at step `t`, the attention module uses the previous state `s_{t-1}` to weight the feature vectors and produces `u_t`, and the GRU combines its inputs into the new state `s_t`. Attention thus lets each decoding step focus on the most relevant encoded terms. The production rules show the possible expansions of the `in_clause` node of the tactic expression.
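
One decoding step as described above can be sketched as follows. This is a hedged illustration under assumptions the diagram does not state: dot-product attention (which requires `s_{t-1}` and the feature vectors to have the same dimension), a standard GRU cell with random untrained weights and no bias terms, and a GRU input formed by concatenating caller-supplied vectors (standing in for inputs such as `g` and `a_{t-1}`) with `u_t`. The names `attention`, `GRUCell`, and `decoder_step` are hypothetical.

```python
import math
import random

def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def softmax(xs):
    mx = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(features, s_prev):
    """Dot-product attention: weight each feature vector by its score against s_prev."""
    weights = softmax([sum(f * s for f, s in zip(feat, s_prev)) for feat in features])
    dim = len(features[0])
    u_t = [sum(w * feat[d] for w, feat in zip(weights, features)) for d in range(dim)]
    return u_t, weights

class GRUCell:
    """Standard GRU cell with random, untrained weights; bias terms omitted."""

    def __init__(self, dim_in, dim_h, seed=0):
        rng = random.Random(seed)
        def mat(rows, cols):
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
        self.W = {g: mat(dim_h, dim_in) for g in "zrn"}  # input weights per gate
        self.U = {g: mat(dim_h, dim_h) for g in "zrn"}   # recurrent weights per gate

    def __call__(self, x, h):
        def pre(gate, hv):
            return [a + b for a, b in zip(matvec(self.W[gate], x), matvec(self.U[gate], hv))]
        z = [sigmoid(v) for v in pre("z", h)]  # update gate
        r = [sigmoid(v) for v in pre("r", h)]  # reset gate
        n = [math.tanh(v) for v in pre("n", [ri * hi for ri, hi in zip(r, h)])]  # candidate
        return [(1.0 - zi) * ni + zi * hi for zi, ni, hi in zip(z, n, h)]

def decoder_step(cell, features, s_prev, other_inputs):
    """One step: attend with s_{t-1}, then feed [other_inputs..., u_t] to the GRU."""
    u_t, weights = attention(features, s_prev)
    x_t = [v for vec in other_inputs for v in vec] + u_t
    s_t = cell(x_t, s_prev)
    return s_t, u_t, weights

features = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
g = [0.5, 0.5, 0.0, 0.0]  # hypothetical goal feature vector
a_prev = [1.0, 0.0]       # hypothetical embedding of the previous action
cell = GRUCell(dim_in=len(a_prev) + len(g) + 4, dim_h=4)
s_t, u_t, weights = decoder_step(cell, features, [0.0] * 4, [a_prev, g])
print(weights)  # uniform (each 1/3), because s_{t-1} is all zeros
```

With a zero initial state every feature vector scores equally; once `s_t` becomes nonzero, later steps weight the feature vectors unevenly, which is how attention lets the decoder focus on particular terms.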

### Key Observations

* The architecture uses a TreeLSTM to encode the input term, which is suitable for capturing the hierarchical structure of formal expressions.

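* The attention mechanism lets each decoding step weight the encoded feature vectors by relevance instead of relying on a single fixed summary of the input.

* Because each node of the tactic expression is expanded only with a production rule of the tactic grammar, every generated tactic is syntactically well-formed.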
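
The grammar constraint can be illustrated with a minimal sketch of masked rule selection. It assumes the decoder outputs one score per rule in a global rule list and that only rules whose left-hand side matches the current nonterminal are eligible; the three `in_clause` alternatives are the ones shown in the diagram, while the other two entries in `RULES` and the names `masked_softmax` and `choose_rule` are hypothetical.

```python
import math

# Global list of (nonterminal, expansion) pairs. The in_clause rules come from
# the diagram; the first two entries are hypothetical placeholders.
RULES = [
    ("tactic_expr", '"rewrite" rewrite_term_list1 in_clause'),  # hypothetical
    ("rewrite_term", "QUALID"),                                 # hypothetical
    ("in_clause", '"in" LOCAL_IDENT'),
    ("in_clause", '"in |- *"'),
    ("in_clause", '"in *"'),
]

def masked_softmax(scores, nonterminal):
    """Probabilities over rule indices, restricted to rules that expand `nonterminal`."""
    valid = [i for i, (lhs, _) in enumerate(RULES) if lhs == nonterminal]
    if not valid:
        raise ValueError(f"no production rules for {nonterminal!r}")
    mx = max(scores[i] for i in valid)  # max over valid rules only, for stability
    exps = {i: math.exp(scores[i] - mx) for i in valid}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def choose_rule(scores, nonterminal):
    """Greedily pick the most probable rule that is valid for `nonterminal`."""
    probs = masked_softmax(scores, nonterminal)
    best = max(probs, key=probs.get)
    return RULES[best][1], probs[best]

# Raw scores favor the tactic_expr rule, but it cannot expand in_clause.
scores = [9.0, 8.0, 0.5, 2.0, 1.0]
rule, p = choose_rule(scores, "in_clause")
print(rule)  # → "in |- *"
```

Masking, rather than sampling freely and rejecting invalid output afterward, guarantees well-formedness by construction; it does not guarantee that the resulting tactic succeeds when applied to the goal.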

### Interpretation

The diagram presents a neural network architecture that predicts the next tactic from the current goal and its context. The TreeLSTM encoder captures the syntactic structure of the input terms, and the attention-equipped GRU decoder generates the tactic as a tree. Restricting generation to grammar production rules guarantees that predicted tactics are syntactically well-formed, although a well-formed tactic can still fail when applied to the goal. An architecture like this could automate steps of interactive theorem proving and so reduce the manual effort of formal proofs, such as those used in software verification.