## Diagram: Neural Theorem Proving Architecture for Coq

### Overview

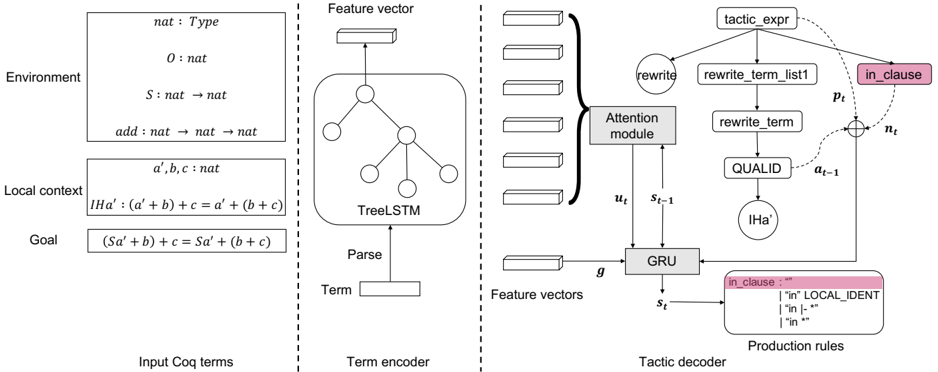

The image is a technical system architecture diagram illustrating a neural network model designed for automated tactic generation in the Coq proof assistant. It is divided into three distinct vertical sections separated by dashed lines, representing the pipeline from input proof state to output tactic prediction. The diagram details the processing of Coq proof terms through encoding and decoding stages.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Left Section: Input Coq terms**

* **Environment**: Contains foundational type and function definitions.

* `nat : Type`

* `O : nat`

* `S : nat → nat`

* `add : nat → nat → nat`

* **Local context**: Lists hypotheses and variables.

* `a', b, c : nat`

* `IHa' : (a' + b) + c = a' + (b + c)`

* **Goal**: States the proof goal.

* `(Sa' + b) + c = Sa' + (b + c)`

2. **Middle Section: Term encoder**

* **Input**: A box labeled `Term`.

* **Process**: An arrow labeled `Parse` points to a `TreeLSTM` (Tree-structured Long Short-Term Memory) network, depicted as a network graph inside a rounded rectangle.

* **Output**: An arrow points upward to a box labeled `Feature vector`.

3. **Right Section: Tactic decoder**

* **Input**: A vertical stack of five boxes representing `Feature vectors`.

* **Core Components**:

* **GRU**: A Gated Recurrent Unit (GRU) cell, receiving input `g` and previous state `s_{t-1}`.

* **Attention module**: Connected to the GRU, taking inputs `u_t` and `s_{t-1}`.

* **Tactic Prediction Flow**: A sequence of nodes connected by arrows:

* `tactic_expr` (top node)

* `rewrite` (connected to `tactic_expr`)

* `rewrite_term_list1` (connected to `tactic_expr`)

* `rewrite_term` (connected to `rewrite_term_list1`)

* `QUALID` (connected to `rewrite_term`)

* `IHa'` (connected to `QUALID`)

* **State Update**: A circle with a plus sign `⊕` combines `p_t` (from `tactic_expr`) and `n_t` (from `in_clause`), with an arrow labeled `a_{t-1}` pointing to it.

* **Production Rules**: A pink-shaded box in the bottom-right corner defining a grammar rule.

* Header: `in_clause : “”`

* Rules:

* `| “in” LOCAL_IDENT`

* `| “in” |- “”`

* `| “in” “”`

* **Output State**: An arrow from the GRU points to a box labeled `s_t`.

### Detailed Analysis

The diagram illustrates a two-stage neural architecture:

1. **Encoding Stage (Left & Middle)**: The proof state (Environment, Local context, Goal) is parsed into a tree structure. This tree is processed by a TreeLSTM to generate a fixed-size `Feature vector` that represents the semantic meaning of the proof term.

2. **Decoding Stage (Right)**: The sequence of feature vectors (likely from multiple proof steps or components) is fed into a GRU-based decoder. This decoder uses an attention mechanism (`Attention module`) to focus on relevant parts of the input. It generates a tactic expression (`tactic_expr`) step-by-step. The diagram shows a specific prediction path for a `rewrite` tactic, where it must identify a qualified identifier (`QUALID`), such as the inductive hypothesis `IHa'`. The `in_clause` component, governed by the production rules, appears to be a sub-component for specifying locations in a rewrite tactic (e.g., "in H", "in |-"). The pink highlighting on `in_clause` and its production rules box suggests it is a key or specialized part of the grammar.

### Key Observations

* **Color Coding**: The `in_clause` node and its corresponding production rules box are shaded pink, indicating they are a focal point or a specific example within the broader system.

* **Flow Direction**: The overall data flow is from left to right: from raw Coq terms, through encoding, to tactic generation. Within the decoder, the flow is cyclical, with the GRU state `s_t` being updated and used for the next step.

* **Mathematical Notation**: The input section uses precise Coq syntax for types (`nat : Type`), constructors (`O`, `S`), functions (`add`), and hypotheses (`IHa'`).

* **Hybrid Architecture**: The model combines symbolic AI (the structured Coq terms and production rules) with neural networks (TreeLSTM, GRU, Attention).

### Interpretation

This diagram represents a **neural theorem prover** specialized for the Coq proof assistant. The system's purpose is to learn the "tactics" (proof steps) that a human would use to transform a given proof state (the Goal) into a solved state.

* **How it works**: It translates the symbolic, tree-structured proof state into a neural representation (feature vector). A recurrent decoder then generates a sequence of tokens that form a valid tactic command, guided by attention to the input and constrained by a grammar (the production rules).

* **Significance**: This architecture bridges the gap between formal, symbolic reasoning (Coq) and statistical machine learning. It aims to automate the labor-intensive process of writing proofs by learning from a corpus of existing proofs.

* **Notable Detail**: The specific example shown involves using an inductive hypothesis (`IHa'`) in a `rewrite` tactic, a very common pattern in mathematical proofs. The model must learn to identify and apply the correct hypothesis from the local context to the correct subterm in the goal. The `in_clause` rules suggest the model is also learning the syntactic variations for specifying where to apply the rewrite.