## Diagram: Coq Term Parsing and Tactic Generation System

### Overview

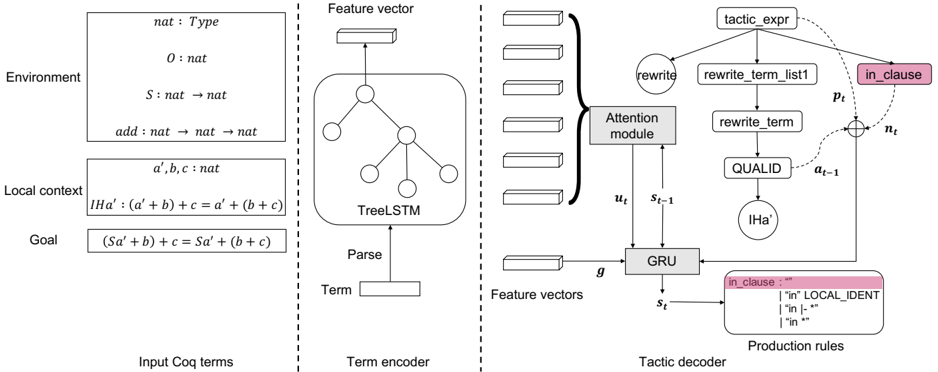

The diagram illustrates an architecture that parses Coq terms and generates tactics by expanding production rules. It combines syntactic parsing, a TreeLSTM term encoder, an attention module, and a GRU-based decoder constrained by production rules to turn input terms into structured tactic clauses.

### Components/Axes

1. **Left Panel (Environment & Local Context)**:

- **Environment**: Declares the type `nat : Type`, its constructors `O : nat` and `S : nat → nat`, and the function `add : nat → nat → nat`.

- **Local Context**: Variables (`a', b, c : nat`), the inductive hypothesis `IHa' : (a' + b) + c = a' + (b + c)`, and the goal `Goal : (S a' + b) + c = S a' + (b + c)`.

- **Input**: `Input Coq terms` (e.g., `IHa'`, `Goal`).

2. **Middle Panel (Term Encoder)**:

- **Parse**: Converts each input Coq term into a syntax tree.

- **TreeLSTM**: Encodes each syntax tree into a feature vector that reflects the tree's hierarchy.

3. **Right Panel (Tactic Decoder)**:

- **Attention Module**: Focuses on relevant features from the TreeLSTM output.

- **GRU (Gated Recurrent Unit)**: Updates the decoder's hidden state at each step using the output of the attention module.

- **Production Rules**: Includes highlighted rules like `in_clause : "" | "in" LOCAL_IDENT | "in" |- * | "in" *`.
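To make the highlighted rule concrete, here is a minimal Python sketch of how `in_clause` could be stored as data and expanded into text. The four alternatives are copied from the diagram; the dictionary layout, the `render` helper, and the choice of `IHa'` as the substituted identifier are assumptions made for illustration, not something the diagram specifies.

```python
# Hypothetical encoding of the highlighted rule. The alternatives come from
# the diagram; the data layout is an assumption for illustration only.
IN_CLAUSE = {
    "in_clause": [
        [],                      # empty alternative: ""
        ["in", "LOCAL_IDENT"],   # "in" followed by a hypothesis name
        ["in", "|-", "*"],       # "in |- *"
        ["in", "*"],             # "in *"
    ]
}


def render(alternative, local_ident):
    """Turn one alternative into text, filling LOCAL_IDENT with a name."""
    tokens = [local_ident if tok == "LOCAL_IDENT" else tok for tok in alternative]
    return " ".join(tokens)


# Expand every alternative, using the hypothesis name from the local context.
clauses = [render(alt, "IHa'") for alt in IN_CLAUSE["in_clause"]]
print(clauses)
```

Only the `LOCAL_IDENT` alternative depends on the local context; the other three expand to fixed text.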

### Detailed Analysis

- **TreeLSTM**: Encodes input terms into a feature vector, capturing syntactic dependencies.

- **Attention Mechanism**: Weights the encoder features using the previous decoder state `s_{t-1}` and passes the resulting vector `u_t` to the GRU.

- **GRU**: Generates hidden states (`s_t`) that inform production rule selection.

- **Production Rules**: Define output clause templates (e.g., `in_clause`, whose alternatives are the empty string, `in` followed by a local identifier, `in |- *`, and `in *`).
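The attention step can be sketched in a few lines of plain Python. This sketch assumes that the previous decoder state `s_{t-1}` acts as the query and that `u_t` is the attention-weighted sum of the encoder feature vectors. The diagram does not state the scoring function, so the dot-product score used here is an assumption, and the feature values are made up.

```python
import math


def attend(features, s_prev):
    """Return attention weights and the weighted sum u_t.

    features: list of feature vectors, one per encoded term
    s_prev:   previous decoder state s_{t-1}, same dimension as each feature
    """
    # Score each feature vector against the previous state.
    # Dot-product scoring is an assumption, not taken from the diagram.
    scores = [sum(f_d * s_d for f_d, s_d in zip(f, s_prev)) for f in features]
    # Softmax over the scores, shifted by the maximum for numerical stability.
    top = max(scores)
    exps = [math.exp(score - top) for score in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # u_t is the weighted sum of the feature vectors.
    dim = len(features[0])
    u_t = [sum(w * f[d] for w, f in zip(weights, features)) for d in range(dim)]
    return weights, u_t


# Two made-up feature vectors standing in for the encodings of IHa' and Goal.
features = [[1.0, 0.0], [0.0, 1.0]]
weights, u_t = attend(features, s_prev=[2.0, 0.0])
print(weights, u_t)
```

In this toy run the first feature vector aligns with `s_prev`, so it receives the larger weight and dominates `u_t`.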

### Key Observations

- **Hierarchical Parsing**: Input terms (`IHa'`, `Goal`) are parsed into a tree structure before being processed by the attention-GRU-decoder pipeline.

- **Rule-Based Output**: The system generates formal clauses using predefined templates (e.g., `in_clause` with variable substitutions).

- **Attention Focus**: The attention module prioritizes specific input terms, for example when a rule requires a `LOCAL_IDENT` such as `IHa'`.
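As an illustration of the hierarchical parsing step, the following Python sketch turns the `IHa'` equation into a nested tuple tree. It is a toy recursive-descent parser that handles only identifiers, parentheses, `+`, and `=`. It is not Coq's parser, and the tuple representation is an assumption chosen for readability.

```python
import re

# Identifiers (which may contain apostrophes, as in a') or one of ( ) + =
TOKEN = re.compile(r"\s*([A-Za-z_][A-Za-z0-9_']*|[()+=])")


def tokenize(text):
    """Split a term into identifier and symbol tokens."""
    text = text.rstrip()
    tokens, pos = [], 0
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise ValueError(f"unexpected character at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens


def parse_atom(tokens):
    """Parse an identifier or a parenthesized sum."""
    if not tokens:
        raise ValueError("unexpected end of input")
    head, rest = tokens[0], tokens[1:]
    if head == "(":
        node, rest = parse_sum(rest)
        if not rest or rest[0] != ")":
            raise ValueError("expected ')'")
        return node, rest[1:]
    if head in (")", "+", "="):
        raise ValueError(f"unexpected token {head!r}")
    return head, rest


def parse_sum(tokens):
    """Parse a left-associative chain of '+' applications."""
    node, rest = parse_atom(tokens)
    while rest and rest[0] == "+":
        right, rest = parse_atom(rest[1:])
        node = ("+", node, right)
    return node, rest


def parse_equation(tokens):
    """Parse 'sum = sum' into a tree rooted at '='."""
    left, rest = parse_sum(tokens)
    if not rest or rest[0] != "=":
        raise ValueError("expected '='")
    right, rest = parse_sum(rest[1:])
    if rest:
        raise ValueError("trailing tokens after equation")
    return ("=", left, right)


tree = parse_equation(tokenize("(a' + b) + c = a' + (b + c)"))
print(tree)
```

A tree encoder such as a TreeLSTM would then walk a structure like this from the leaves to the root; that step is not shown here.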

### Interpretation

This architecture demonstrates a hybrid approach to tactic generation, combining neural networks (TreeLSTM, GRU) with a symbolic, rule-based grammar. The attention mechanism links the learned term representations to the rule-based decoder, enabling the system to:

1. Parse input terms into structured representations.

2. Dynamically select relevant features for rule application.

3. Generate precise formal clauses (e.g., `in_clause`) that align with the input context.

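The use of `IHa'` (an inductive hypothesis) and a `Goal` equation indicates that the system is designed for theorem proving or formal verification in a proof assistant such as Coq. The highlighted `in_clause` rule is part of the tactic grammar: in Coq, an `in` clause specifies where a tactic applies, namely a named hypothesis (`in` followed by a local identifier), the goal only (`in |- *`), or all hypotheses and the goal (`in *`).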