## Line Chart: Language Model Loss vs. Compute Scale (Projected)

### Overview

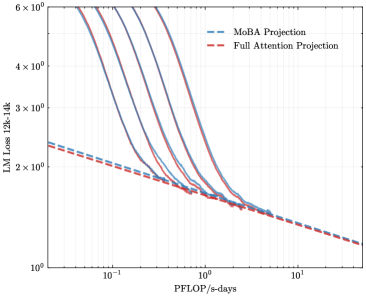

The image is a log-log line chart projecting the relationship between language model (LM) loss and computational scale, measured in PFLOP/s-days. It compares two projections: "MoBA Projection" and "Full Attention Projection." The chart shows model loss decreasing as compute increases. The several MoBA curves, which may correspond to different starting conditions or model configurations, converge toward a common scaling trend.

### Components/Axes

* **Chart Type:** Log-Log Line Chart.

* **X-Axis:**

* **Label:** `PFLOP/s-days`

* **Scale:** Logarithmic, ranging from `10^-1` (0.1) to `10^1` (10).

* **Major Ticks:** `10^-1`, `10^0`, `10^1`.

* **Y-Axis:**

* **Label:** `LM Loss 128k-16k`

* **Scale:** Logarithmic, ranging from `10^0` (1) to `6 x 10^0` (6).

* **Major Ticks:** `10^0`, `2 x 10^0`, `3 x 10^0`, `4 x 10^0`, `6 x 10^0`.

* **Legend:**

* **Position:** Top-right corner of the plot area.

* **Entry 1:** `MoBA Projection` - Represented by a blue dashed line (`--`).

* **Entry 2:** `Full Attention Projection` - Represented by a red dashed line (`--`).

* **Data Series:**

* **Full Attention Projection (Red Dashed Line):** A single, straight line sloping downward from left to right. It originates near `(x=0.1, y≈2.2)` and terminates near `(x=10, y≈1.2)`. Its linearity on a log-log plot indicates a power-law relationship.

* **MoBA Projection (Blue Solid Lines):** A family of roughly six or seven solid blue curves. **Note:** The legend shows a dashed blue line for this entry, but the plotted curves are solid. Each curve starts at a different loss value above the red line on the left side of the chart (between y≈2.5 and y≈6 at x=0.1) and slopes downward steeply. As compute increases, the curves flatten and approach the red "Full Attention Projection" line, coming close to it around `x=1` to `x=2`.
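
The power-law reading of the red line can be made concrete with a minimal sketch. It assumes the two endpoints quoted above, which are approximate values read off the chart rather than measured data, and the helper name `power_law_through` is hypothetical.

```python
import math

def power_law_through(p1, p2):
    """Return (a, alpha) so that loss = a * compute ** -alpha passes through both points."""
    (c1, l1), (c2, l2) = p1, p2
    # On log-log axes a power law is a straight line with slope -alpha.
    alpha = -(math.log(l2) - math.log(l1)) / (math.log(c2) - math.log(c1))
    # Solve l1 = a * c1 ** -alpha for the prefactor a (the loss at compute = 1).
    a = l1 * c1 ** alpha
    return a, alpha

# Assumption: approximate endpoints of the red line as read off the chart.
a, alpha = power_law_through((0.1, 2.2), (10.0, 1.2))
print(f"alpha ~ {alpha:.3f}, loss at 1 PFLOP/s-day ~ {a:.2f}")
```

Under these readings the exponent comes out near 0.13. Because the inputs are eyeballed from the plot, the result is only a rough estimate of the slope.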

### Detailed Analysis

* **Trend Verification:**

* **Full Attention (Red):** Exhibits a constant downward slope across the entire compute range on the log-log axes. This represents a stable, predictable scaling law in which each tenfold increase in compute lowers the loss by roughly the same factor.

* **MoBA (Blue):** Each curve shows a steep initial decline in loss for small increases in compute (left side of chart). The rate of loss reduction (slope) is much steeper than the red line initially. As compute increases, the slope of each blue curve flattens, and they all converge onto the trajectory defined by the red line.

* **Data Points & Convergence:**

* At `x = 0.1 PFLOP/s-days`: Blue curves are spread between `y ≈ 2.5` and `y ≈ 6.0`. The red line is at `y ≈ 2.2`.

* At `x = 1 PFLOP/s-days`: Most blue curves have descended to between `y ≈ 1.6` and `y ≈ 1.8`, closely approaching the red line at `y ≈ 1.6`.

* At `x = 10 PFLOP/s-days`: All lines (blue and red) appear to converge at approximately `y ≈ 1.2`.

* **Spatial Grounding:** The legend is placed in the top-right, avoiding overlap with the data. The convergence zone where blue lines meet the red line is in the center-right portion of the plot area, between `x=1` and `x=2`.
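
The flattening described above can be checked with a small sketch that computes the log-log slope over each decade of compute. It uses only the approximate readings listed in this section. Pairing the highest starting value with the highest value at `x = 1` is an assumption, since individual curves are not traced here, and the names `READINGS` and `local_slopes` are hypothetical.

```python
import math

# Assumption: approximate losses read off the chart at 0.1, 1 and 10 PFLOP/s-days.
COMPUTE = [0.1, 1.0, 10.0]
READINGS = {
    "full_attention": [2.2, 1.6, 1.2],
    "moba_highest_curve": [6.0, 1.8, 1.2],
    "moba_lowest_curve": [2.5, 1.6, 1.2],
}

def local_slopes(xs, ys):
    """Slope d(log10 loss) / d(log10 compute) on each interval between readings."""
    points = list(zip(xs, ys))
    return [
        (math.log10(y1) - math.log10(y0)) / (math.log10(x1) - math.log10(x0))
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]

slopes = {name: local_slopes(COMPUTE, ys) for name, ys in READINGS.items()}
for name, values in slopes.items():
    print(name, [round(v, 2) for v in values])
```

With these readings, the highest MoBA curve is several times steeper than the red line over the first decade and much closer to it over the second. This matches the qualitative description, within the precision of values read from a plot.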

### Key Observations

1. **Convergence to a Scaling Law:** The most prominent feature is the convergence of all "MoBA Projection" curves onto the single "Full Attention Projection" line at higher compute scales (`>1 PFLOP/s-days`).

2. **Diminishing Returns:** The steep initial slope of the blue curves indicates high efficiency (large loss reduction per unit of compute) at lower scales. The flattening slope demonstrates diminishing returns as compute increases.

3. **Power-Law Behavior:** The red line is straight on the log-log plot, which means the projected loss follows a power law in compute (`Loss ∝ Compute^(-α)`).

4. **Initial Condition Variance:** The multiple blue curves starting at different loss values suggest that the "MoBA" method's performance at low compute is sensitive to some initial parameter (e.g., model size, data mixture, or training recipe), but this variance becomes irrelevant at high compute.
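
The shrinking variance among the MoBA curves can be quantified with a short sketch that takes the ratio of the highest to the lowest blue-curve loss at each compute value. The ranges are the approximate readings given under "Data Points & Convergence", not measured data, and the names `MOBA_RANGE` and `spread_ratio` are hypothetical.

```python
# Assumption: approximate (lowest, highest) MoBA loss at each compute value,
# read off the chart. At 10 PFLOP/s-days all curves appear to coincide.
MOBA_RANGE = {0.1: (2.5, 6.0), 1.0: (1.6, 1.8), 10.0: (1.2, 1.2)}

def spread_ratio(low, high):
    """Ratio of highest to lowest loss; 1.0 means the curves coincide."""
    return high / low

spreads = {c: spread_ratio(lo, hi) for c, (lo, hi) in MOBA_RANGE.items()}
for compute, ratio in spreads.items():
    print(f"{compute:>5} PFLOP/s-days: highest/lowest loss ~ {ratio:.2f}")
```

A ratio that falls toward 1 as compute grows is what convergence looks like numerically. The exact figures depend on how the chart is read.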

### Interpretation

This chart presents a technical argument about the scalability of two different methods ("MoBA" and "Full Attention") for training large language models.

* **What the data suggests:** The "MoBA" method shows variable and often worse (higher) loss at low computational budgets, but its projected scaling trajectory ultimately matches that of the "Full Attention" method. The "Full Attention" line acts as the reference trend that the MoBA curves approach.

* **Relationship between elements:** The "Full Attention Projection" serves as a benchmark or theoretical baseline. The "MoBA Projection" curves illustrate a practical method that, despite initial inefficiencies, is predicted to achieve the same optimal scaling behavior when given sufficient compute resources.

* **Notable Implication:** The choice of method ("MoBA" vs. "Full Attention") appears to have little effect on the loss projected at very large scale (high PFLOP/s-days), but it significantly affects the loss at lower compute budgets. This has implications for cost and resource allocation in model development. The convergence suggests that both methods follow the same scaling trend at high compute, regardless of where each curve starts.