TECHNICAL ASSET FINGERPRINT

9b6e0941ba58de6dac26d9f1



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

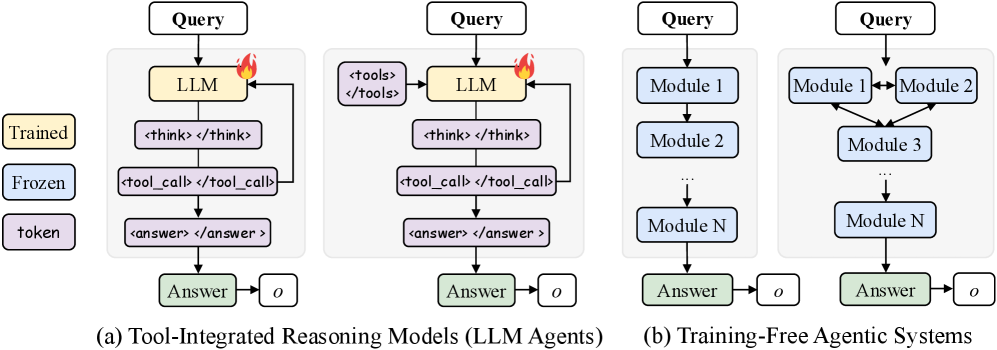

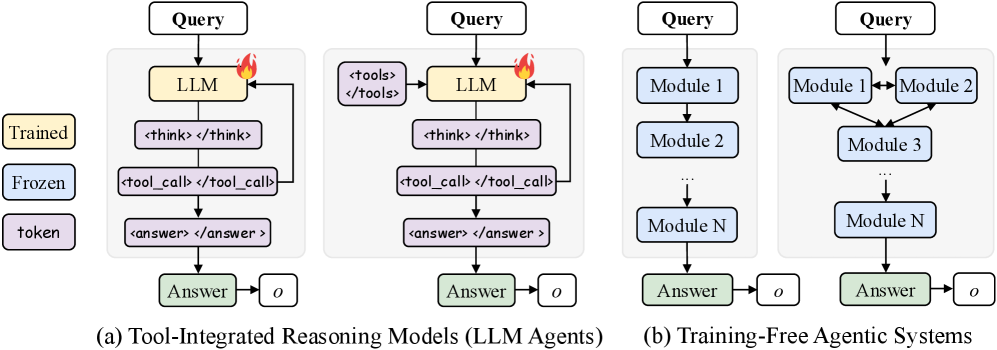

## Diagram: Tool-Integrated Reasoning Models vs. Training-Free Agentic Systems

### Overview

The image presents two diagrams illustrating different approaches to reasoning models: Tool-Integrated Reasoning Models (LLM Agents) and Training-Free Agentic Systems. The diagrams depict the flow of information and processing steps within each system, highlighting the use of Large Language Models (LLMs) in the former and modular components in the latter.

### Components/Axes

* **Legend (Left Side):**

* Trained: Yellow box

* Frozen: Blue box

* token: Purple box

* **Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents):**

* Input: Query (white box)

* LLM: Yellow box (Trained)

* `<think> </think>`: Purple box (token)

* `<tool_call> </tool_call>`: Purple box (token)

* `<answer> </answer>`: Purple box (token)

* Answer: Green box

* o: White box

* A flame icon is present next to the LLM box.

* A loop connects the `<tool_call> </tool_call>` box back to the LLM box.

* **Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents) - ALTERNATIVE FLOW:**

* Input: Query (white box)

* LLM: Yellow box (Trained)

* `<tools> </tools>`: Purple box (token)

* `<think> </think>`: Purple box (token)

* `<tool_call> </tool_call>`: Purple box (token)

* `<answer> </answer>`: Purple box (token)

* Answer: Green box

* o: White box

* A flame icon is present next to the LLM box.

* A loop connects the `<tool_call> </tool_call>` box back to the LLM box.

* **Diagram (b) - Training-Free Agentic Systems:**

* Input: Query (white box)

* Module 1: Blue box (Frozen)

* Module 2: Blue box (Frozen)

* Module 3: Blue box (Frozen)

* Module N: Blue box (Frozen)

* Answer: Green box

* o: White box

* Ellipsis (...) indicates a continuation of modules.

### Detailed Analysis

**Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents):**

1. **Query Input:** The process begins with a "Query" input.

2. **LLM Processing:** The query is fed into an "LLM" (Large Language Model), which is marked as "Trained" (yellow).

3. **Reasoning Steps:** The LLM then goes through a series of steps represented by tokens: `<think> </think>`, `<tool_call> </tool_call>`, and `<answer> </answer>`.

4. **Tool Integration:** The `<tool_call> </tool_call>` step indicates the use of external tools. A loop from this step back to the LLM suggests that the LLM can iteratively call tools and refine its reasoning.

5. **Answer Output:** Finally, the system produces an "Answer" (green) and an output "o".
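The loop described in the steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method from the source figure: `run_agent`, `fake_llm`, the `add` tool, the `name argument` call syntax, and the `<tool_result>` tag are all hypothetical; only the `<think>`, `<tool_call>`, and `<answer>` tags come from the diagram.

```python
import re
from typing import Callable, Dict

TOOL_CALL = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)
ANSWER = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)


def run_agent(query: str,
              llm: Callable[[str], str],
              tools: Dict[str, Callable[[str], str]],
              max_turns: int = 5) -> str:
    """Call the LLM repeatedly; feed each tool result back into the context."""
    context = query
    for _ in range(max_turns):
        output = llm(context)
        answer = ANSWER.search(output)
        if answer:
            return answer.group(1).strip()
        call = TOOL_CALL.search(output)
        if call is None:
            break
        # Assumed call syntax: "<tool name> <argument string>".
        name, _, arg = call.group(1).strip().partition(" ")
        result = tools[name](arg)
        # <tool_result> is an assumed tag; the diagram only shows the loop arrow.
        context += "\n" + output + "\n<tool_result>" + result + "</tool_result>"
    raise RuntimeError("no <answer> produced within max_turns")


def fake_llm(context: str) -> str:
    """Hypothetical stand-in for a trained LLM."""
    if "<tool_result>" not in context:
        return "<think>I need the sum.</think><tool_call>add 2,3</tool_call>"
    result = re.search(r"<tool_result>(.*?)</tool_result>", context).group(1)
    return f"<think>Tool returned {result}.</think><answer>{result}</answer>"


def add(arg: str) -> str:
    """Hypothetical tool: adds two comma-separated integers."""
    a, b = arg.split(",")
    return str(int(a) + int(b))


final = run_agent("What is 2 + 3?", fake_llm, {"add": add})
```

The `max_turns` cap is a design choice of this sketch: it keeps the feedback loop from running indefinitely when the model never emits an `<answer>` tag.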

**Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents) - ALTERNATIVE FLOW:**

1. **Query Input:** The process begins with a "Query" input.

2. **LLM Processing:** The query is fed into an "LLM" (Large Language Model), which is marked as "Trained" (yellow).

3. **Tool Selection:** The LLM then selects a tool from `<tools> </tools>`.

4. **Reasoning Steps:** The LLM then goes through a series of steps represented by tokens: `<think> </think>`, `<tool_call> </tool_call>`, and `<answer> </answer>`.

5. **Tool Integration:** The `<tool_call> </tool_call>` step indicates the use of external tools. A loop from this step back to the LLM suggests that the LLM can iteratively call tools and refine its reasoning.

6. **Answer Output:** Finally, the system produces an "Answer" (green) and an output "o".

**Diagram (b) - Training-Free Agentic Systems:**

1. **Query Input:** The process starts with a "Query".

2. **Modular Processing:** The query is processed through a series of "Frozen" (blue) modules: "Module 1", "Module 2", and so on, up to "Module N".

3. **Inter-Module Communication:** "Module 1" and "Module 2" have bidirectional arrows between them, indicating communication and interaction. Both modules feed into "Module 3".

4. **Sequential Processing:** The modules are arranged in a sequence, suggesting a flow of information from one module to the next.

5. **Answer Output:** The system generates an "Answer" (green) and an output "o".
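The sequential flow through frozen modules can be sketched as plain function composition. This is only an illustration: the three module functions below (`normalize`, `retrieve`, `respond`) are hypothetical placeholders, and "frozen" is modeled simply as functions whose behavior never changes between calls.

```python
from functools import reduce
from typing import Callable, List

Module = Callable[[str], str]


def run_pipeline(query: str, modules: List[Module]) -> str:
    """Pass the query through Module 1 ... Module N in order."""
    return reduce(lambda state, module: module(state), modules, query)


def normalize(text: str) -> str:
    """Hypothetical Module 1: clean up the raw query."""
    return text.strip().lower()


def retrieve(text: str) -> str:
    """Hypothetical Module 2: attach a fixed piece of context."""
    return text + " | context: paris is the capital of france"


def respond(text: str) -> str:
    """Hypothetical Module N: turn the context into an answer string."""
    return "Answer: " + text.split("context: ")[1]


answer = run_pipeline("  Capital of France?  ", [normalize, retrieve, respond])
```

Because no module holds trainable state, changing the system means swapping or reordering entries in the list rather than updating weights.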

### Key Observations

* **LLM-Centric vs. Modular:** Diagram (a) emphasizes the role of a central LLM, while diagram (b) highlights a modular approach.

* **Tool Integration:** Diagram (a) explicitly shows the integration of external tools into the reasoning process.

* **Training Requirement:** Diagram (a) involves a "Trained" LLM, while diagram (b) uses "Frozen" modules, implying no further training is required.

* **Iterative Reasoning:** The loop in diagram (a) suggests an iterative reasoning process, where the LLM can refine its reasoning based on tool outputs.

### Interpretation

The diagrams illustrate two distinct paradigms for building reasoning systems. Tool-Integrated Reasoning Models leverage the power of pre-trained LLMs and augment them with external tools to perform complex tasks. The iterative nature of tool calls allows the LLM to refine its reasoning and improve its accuracy. In contrast, Training-Free Agentic Systems rely on a network of pre-built, "Frozen" modules that work together to process information. This approach offers the advantage of not requiring further training but may be less flexible than the LLM-based approach. The choice between these two approaches depends on the specific requirements of the task, the availability of pre-trained models and tools, and the desired level of flexibility and adaptability.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: Architectures of Agentic Systems

### Overview

This image presents a technical diagram illustrating two primary categories of agentic systems: (a) Tool-Integrated Reasoning Models (LLM Agents) and (b) Training-Free Agentic Systems. Each category is further broken down into two distinct architectural examples, showcasing different internal flows and component interactions. The diagram uses color-coding to denote the state or type of each component (Trained, Frozen, or token) and includes a legend to clarify these distinctions.

### Components/Axes

The diagram is structured with a legend on the top-left and two main sections labeled (a) and (b) at the bottom.

**Legend (top-left):**

* A yellow rounded rectangle labeled "Trained"

* A light blue rounded rectangle labeled "Frozen"

* A purple rounded rectangle labeled "token"

**Common Elements across all diagrams:**

* **Input:** A white rounded rectangle at the top of each flow, labeled "Query".

* **Output:** A green rounded rectangle at the bottom of each flow, labeled "Answer".

* **Final Output Object:** A white square with slightly rounded corners, labeled "o", connected by an arrow from the "Answer" box.

* **System Boundary:** Each set of internal components for a system is enclosed within a light grey shaded background.

### Detailed Analysis

The image is divided into two main sections, (a) and (b), each containing two sub-diagrams.

**Section (a): Tool-Integrated Reasoning Models (LLM Agents)**

This section is located on the left side of the image.

* **Sub-diagram (a.1) - Leftmost LLM Agent:**

* **Flow:**

1. An arrow points from the "Query" (white) input to an "LLM" (yellow) component.

2. The "LLM" box has a small red flame icon at its top-right, indicating it is trainable or actively being used in a dynamic, adaptable manner.

3. An arrow points from the "LLM" to a purple box labeled `<think> </think>`.

4. An arrow points from `<think> </think>` to a purple box labeled `<tool_call> </tool_call>`.

5. A feedback loop arrow points from the bottom of `<tool_call> </tool_call>` back to the right side of the "LLM" box.

6. An arrow points from `<tool_call> </tool_call>` to a purple box labeled `<answer> </answer>`.

7. An arrow points from `<answer> </answer>` to the "Answer" (green) output.

8. An arrow points from "Answer" to the final output object "o" (white).

* **Component Colors:** "LLM" is yellow (Trained). `<think>`, `<tool_call>`, and `<answer>` are purple (token). "Answer" is green.

* **Sub-diagram (a.2) - Rightmost LLM Agent (within section a):**

* **Flow:**

1. An arrow points from the "Query" (white) input to an "LLM" (yellow) component.

2. An arrow points from `<tools>` (purple) to the "LLM" (yellow) component.

3. The "LLM" box has a small red flame icon at its top-right.

4. An arrow points from the "LLM" to a purple box labeled `<think> </think>`.

5. An arrow points from `<think> </think>` to a purple box labeled `<tool_call> </tool_call>`.

6. A feedback loop arrow points from the bottom of `<tool_call> </tool_call>` back to the right side of the "LLM" box.

7. An arrow points from `<tool_call> </tool_call>` to a purple box labeled `<answer> </answer>`.

8. An arrow points from `<answer> </answer>` to the "Answer" (green) output.

9. An arrow points from "Answer" to the final output object "o" (white).

* **Component Colors:** "LLM" is yellow (Trained). `<tools>`, `<think>`, `<tool_call>`, and `<answer>` are purple (token). "Answer" is green.
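One way to picture the `<tools>` input in sub-diagram (a.2) is as a block of tool descriptions placed in the prompt ahead of the query. The sketch below assumes a JSON-per-line layout and an instruction sentence that are not shown in the figure; `build_prompt` and the `search` tool spec are hypothetical names used only for illustration.

```python
import json
from typing import Dict, List


def build_prompt(query: str, tool_specs: List[Dict[str, str]]) -> str:
    """Wrap tool descriptions in <tools> tags and append the query."""
    # Assumed layout: one JSON object per line inside the <tools> block.
    tools_block = "\n".join(json.dumps(spec, sort_keys=True) for spec in tool_specs)
    return (
        "<tools>\n" + tools_block + "\n</tools>\n"
        "Reason inside <think> </think>, call a tool inside "
        "<tool_call> </tool_call>, and give the result inside <answer> </answer>.\n"
        "Query: " + query
    )


specs = [{"name": "search", "description": "look up a fact"}]
prompt = build_prompt("Who wrote Dune?", specs)
```

Listing the tools in the prompt lets the same model be pointed at a different tool set without changing the surrounding loop.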

**Section (b): Training-Free Agentic Systems**

This section is located on the right side of the image.

* **Sub-diagram (b.1) - Leftmost Training-Free System:**

* **Flow:**

1. An arrow points from the "Query" (white) input to "Module 1" (light blue).

2. An arrow points from "Module 1" to "Module 2" (light blue).

3. A vertical ellipsis "..." with arrows above and below indicates a sequence of intermediate modules.

4. An arrow points from the ellipsis to "Module N" (light blue).

5. An arrow points from "Module N" to the "Answer" (green) output.

6. An arrow points from "Answer" to the final output object "o" (white).

* **Component Colors:** "Module 1", "Module 2", and "Module N" are light blue (Frozen). "Answer" is green. No flame icon is present.

* **Sub-diagram (b.2) - Rightmost Training-Free System (within section b):**

* **Flow:**

1. An arrow points from the "Query" (white) input to "Module 1" (light blue) and also to "Module 2" (light blue).

2. A double-headed arrow connects "Module 1" and "Module 2", indicating bidirectional communication.

3. Arrows point from both "Module 1" and "Module 2" downwards to "Module 3" (light blue).

4. A vertical ellipsis "..." with arrows above and below indicates a sequence of intermediate modules.

5. An arrow points from the ellipsis to "Module N" (light blue).

6. An arrow points from "Module N" to the "Answer" (green) output.

7. An arrow points from "Answer" to the final output object "o" (white).

* **Component Colors:** "Module 1", "Module 2", "Module 3", and "Module N" are light blue (Frozen). "Answer" is green. No flame icon is present.
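The bidirectional exchange between "Module 1" and "Module 2" followed by convergence on "Module 3" can be sketched as a short message-passing loop. Everything here is an assumption made for illustration: the roles given to the modules (drafting, reviewing, summarizing), the number of rounds, and the function names are hypothetical, and the chain from the ellipsis to "Module N" is omitted.

```python
from typing import List


def module_1(query: str, feedback: List[str]) -> List[str]:
    """Hypothetical drafting module: proposes one new draft per round."""
    return [f"draft-{len(feedback) + 1} for '{query}'"]


def module_2(query: str, drafts: List[str]) -> List[str]:
    """Hypothetical reviewing module: comments on every draft seen so far."""
    return [f"reviewed {draft}" for draft in drafts]


def module_3(drafts: List[str], reviews: List[str]) -> str:
    """Hypothetical merging module: receives output from both peers."""
    return f"{len(drafts)} draft(s), {len(reviews)} review(s)"


def run_graph(query: str, rounds: int = 2) -> str:
    """Both modules see the query, exchange messages, then feed Module 3."""
    drafts: List[str] = []
    reviews: List[str] = []
    for _ in range(rounds):
        drafts += module_1(query, reviews)   # Module 2 -> Module 1 direction
        reviews = module_2(query, drafts)    # Module 1 -> Module 2 direction
    return module_3(drafts, reviews)


summary = run_graph("q")
```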

### Key Observations

* **Color-Coding Significance:** The legend clearly defines the state of components: "Trained" (yellow) for the core LLM, "Frozen" (light blue) for fixed modules, and "token" (purple) for intermediate outputs or structured prompts within LLM agents.

* **Trainability vs. Fixed Modules:** LLM Agents (a) feature a "Trained" LLM with a flame icon, implying adaptability or fine-tuning. Training-Free Agentic Systems (b) use "Frozen" modules, indicating pre-defined, unchangeable components.

* **LLM Agent Internal Process:** LLM Agents demonstrate an iterative reasoning process involving explicit "tokens" for thinking (`<think>`), tool invocation (`<tool_call>`), and answer formulation (`<answer>`), with a feedback loop from tool calls back to the LLM.

* **Tool Integration:** Sub-diagram (a.2) explicitly shows `<tools>` as an input to the LLM, highlighting a mechanism for providing external capabilities to the LLM's reasoning.

* **Training-Free System Modularity:** Training-Free systems (b) emphasize modularity, with flows ranging from simple sequential execution (b.1) to more complex, interconnected module interactions (b.2).

* **Consistent Output:** All four architectures ultimately produce an "Answer" and an associated output object "o", suggesting a common goal despite diverse internal mechanisms.

### Interpretation

The diagram provides a clear conceptual distinction between two major paradigms for designing intelligent agents.

**Tool-Integrated Reasoning Models (LLM Agents)** represent a paradigm where a central, adaptable Large Language Model (LLM) acts as the primary orchestrator and reasoner. The "Trained" (yellow) LLM with the flame icon signifies its dynamic nature, capable of learning, adapting, or being fine-tuned. The use of "token" (purple) tags like `<think>`, `<tool_call>`, and `<answer>` suggests that the LLM generates structured internal thoughts or prompts to guide its own reasoning process. The feedback loop from `<tool_call>` back to the LLM is crucial, enabling iterative refinement: the LLM can call a tool, observe its output, and then use that information to further refine its thinking or make subsequent tool calls. This architecture is highly flexible and can handle complex, open-ended tasks by leveraging the LLM's emergent reasoning capabilities and its ability to interact with external tools. The explicit `<tools>` input in (a.2) further emphasizes the LLM's role in integrating and utilizing external functionalities.

**Training-Free Agentic Systems**, in contrast, represent a more traditional, modular approach. The "Frozen" (light blue) modules indicate that these components have fixed functionalities and are not subject to runtime training or adaptation. This paradigm is suitable for tasks where the sub-problems are well-defined and can be encapsulated within specialized, pre-built modules. Sub-diagram (b.1) illustrates a straightforward sequential pipeline, where information flows linearly through a series of modules, each performing a specific step. Sub-diagram (b.2) demonstrates a more sophisticated modular design, allowing for parallel processing or bidirectional communication between modules (e.g., "Module 1" and "Module 2") before converging into a subsequent processing chain. This approach offers greater control, transparency, and potentially higher reliability for specific tasks, as the behavior of each module is predictable.

In essence, the diagram highlights a trade-off: LLM Agents offer adaptability and emergent intelligence through a central, trainable model, often at the cost of full transparency and predictability. Training-Free Agentic Systems offer predictability and control through a composition of fixed, specialized modules, potentially at the cost of adaptability to novel situations. Both approaches aim to process a "Query" and yield an "Answer" with an associated output "o", indicating that the choice of architecture depends on the specific requirements of the agent's task, including the need for flexibility, interpretability, and performance.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: LLM Agent Architectures

### Overview

The image presents a comparative diagram illustrating two distinct architectures for Large Language Model (LLM) agents: Tool-Integrated Reasoning Models (LLM Agents) and Training-Free Agentic Systems. Both architectures take a "Query" as input and produce an "Answer" as output. The diagram highlights the internal processes and components within each architecture.

### Components/Axes

The diagram consists of two main sections, labeled (a) and (b), each representing a different type of system. Each section includes the following components:

* **Query:** The initial input to the system.

* **LLM (or Modules):** The core processing unit. In (a), it's a single LLM; in (b), it's a series of Modules.

* **Intermediate Steps:** Represented by boxes containing text like `<tools>`, `<think>`, `<tool_call>`, `<answer>`.

* **Answer:** The final output of the system.

* **"o":** A small circle placed near the "Answer" box, potentially indicating an output signal or completion marker.

* **Trained, Frozen, Token:** Labels associated with the LLM in section (a).

### Detailed Analysis

**Section (a): Tool-Integrated Reasoning Models (LLM Agents)**

* **Input:** "Query" enters the system.

* **LLM:** The "Query" is fed into an LLM, which is labeled as "Trained," "Frozen," and "Token."

* **Process Flow:**

1. The LLM generates `<think>`.

2. `<think>` leads to `<tool_call>`.

3. `<tool_call>` results in `<answer>`.

4. `<answer>` produces the final "Answer."

* **Connections:** Arrows indicate a sequential flow of information from the "Query" through the LLM and intermediate steps to the "Answer."

**Section (b): Training-Free Agentic Systems**

* **Input:** "Query" enters the system.

* **Modules:** The "Query" is distributed to multiple "Module" components (Module 1, Module 2, ... Module N).

* **Process Flow:**

1. The "Query" is processed by multiple Modules in parallel.

2. The outputs of the Modules converge to produce the final "Answer."

* **Connections:** Arrows indicate the flow of information from the "Query" to the Modules and then to the "Answer." The Modules are connected in a cascading manner, with Module 1 feeding into Module 2, and so on, until Module N.

### Key Observations

* **Sequential vs. Parallel Processing:** The key difference between the two architectures is the processing approach. (a) uses a sequential, single-LLM approach, while (b) employs a parallel, multi-module approach.

* **Tool Integration:** Architecture (a) explicitly highlights the use of "tools" through the `<tools>` and `<tool_call>` steps, suggesting the LLM can interact with external resources.

* **Training Status:** The labels "Trained," "Frozen," and "Token" associated with the LLM in (a) indicate the LLM's training state.

* **Scalability:** Architecture (b) suggests scalability through the use of "Module N," implying the system can be expanded by adding more modules.

### Interpretation

The diagram illustrates two contrasting approaches to building LLM agents. The Tool-Integrated Reasoning Model (a) relies on a single, pre-trained LLM that leverages external tools to enhance its reasoning capabilities. The Training-Free Agentic System (b) utilizes a network of specialized modules, potentially allowing for more flexible and scalable agent behavior without requiring further training of the core LLM.

The use of `<think>`, `<tool_call>`, and `<answer>` tags in (a) suggests a structured reasoning process where the LLM explicitly considers its actions and utilizes tools before generating a final response. The parallel processing in (b) could lead to faster response times and improved robustness, as the system can leverage the strengths of multiple modules.

The "Trained, Frozen, Token" labels in (a) indicate that the LLM is likely a pre-trained model that has been fine-tuned or adapted for specific tasks. The "Training-Free" designation in (b) suggests that the modules are designed to operate without requiring additional training, potentially making the system more adaptable to new tasks and environments. The "o" symbol near the answer may indicate a completion signal or a flag indicating the answer is ready.

The diagram effectively communicates the core architectural differences between these two approaches, highlighting their respective strengths and weaknesses. It suggests a trade-off between the complexity of managing a single, powerful LLM versus the scalability and flexibility of a modular system.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram Type: Comparative Flowchart of AI System Architectures

### Overview

The image is a technical diagram comparing two paradigms for building AI systems that can reason and use tools. It is divided into two main sections, labeled (a) and (b), each containing two flowchart variations. A legend on the far left defines the color-coding for component types.

### Components/Axes

* **Legend (Far Left):**

* **Yellow Box:** Labeled "Trained". Indicates components that are trained or fine-tuned.

* **Blue Box:** Labeled "Frozen". Indicates pre-trained components that are not updated during this process.

* **Purple Box:** Labeled "token". Indicates specific token sequences or prompts used for communication.

* **Section (a) Title (Bottom Left):** "(a) Tool-Integrated Reasoning Models (LLM Agents)"

* **Section (b) Title (Bottom Right):** "(b) Training-Free Agentic Systems"

### Detailed Analysis

#### **Section (a): Tool-Integrated Reasoning Models (LLM Agents)**

This section depicts two similar architectures where a central Large Language Model (LLM) is actively involved in reasoning and tool use.

* **Left Flowchart in (a):**

1. **Input:** A "Query" box at the top.

2. **Core Processing:** The query flows into a yellow "Trained" **LLM** box, which has a small flame icon (🔥) in its top-right corner, suggesting active computation or generation.

3. **Internal Reasoning Loop:** The LLM outputs a purple "token" sequence: `<think> </think>`. An arrow loops back from this sequence to the LLM, indicating iterative reasoning.

4. **Tool Invocation:** The next purple "token" sequence is `<tool_call> </tool_call>`.

5. **Output Generation:** This leads to a green "Answer" box (color not in legend), which outputs a final result denoted by a small circle "o".

* **Right Flowchart in (a):**

1. **Input:** A "Query" box at the top.

2. **Tool-Augmented Core:** The query flows into a yellow "Trained" **LLM** box (with flame icon 🔥). Above this LLM box is a purple "token" box containing `<tools> </tools>`, indicating the LLM has access to a defined set of tools.

3. **Internal Reasoning Loop:** Identical to the left flowchart: the LLM outputs `<think> </think>`, which loops back.

4. **Tool Invocation & Output:** Identical to the left flowchart: `<tool_call> </tool_call>` leads to a green "Answer" box and output "o".

**Spatial Grounding for (a):** Both flowcharts are vertically oriented. The legend is to their left. The "Query" boxes are centered at the top of their respective columns. The LLM boxes are central, with the reasoning loops (`<think>`) positioned directly below them. The tool call and answer sequences follow in a linear, downward flow.

#### **Section (b): Training-Free Agentic Systems**

This section depicts two architectures that rely on composing multiple, potentially frozen, modules without central LLM training.

* **Left Flowchart in (b):**

1. **Input:** A "Query" box at the top.

2. **Linear Pipeline:** The query flows into a blue "Frozen" **Module 1**.

3. **Sequential Processing:** Module 1 connects to **Module 2**, which connects to a vertical ellipsis "..." indicating a sequence, leading to **Module N**.

4. **Output:** Module N connects to a green "Answer" box, which outputs "o".

* **Right Flowchart in (b):**

1. **Input:** A "Query" box at the top.

2. **Interconnected Network:** The query flows into a blue "Frozen" **Module 1**.

3. **Complex Flow:** Module 1 has a bidirectional arrow connecting to **Module 2**. Both Module 1 and Module 2 have arrows pointing to **Module 3**. Module 3 connects to a vertical ellipsis "...", leading to **Module N**.

4. **Output:** Module N connects to a green "Answer" box, which outputs "o".

**Spatial Grounding for (b):** These flowcharts are also vertically oriented and positioned to the right of section (a). The linear pipeline (left) has a strict top-to-bottom flow. The network diagram (right) shows more complex, non-linear connections between the early modules (1, 2, 3) before converging into a linear sequence.

### Key Observations

1. **Architectural Dichotomy:** The diagram contrasts a centralized, LLM-driven approach (a) with a decentralized, modular approach (b).

2. **Role of Training:** In (a), the core LLM is "Trained" (yellow). In (b), all modules are "Frozen" (blue), implying the system's capability comes from orchestration, not module updates.

3. **Communication Protocol:** The LLM Agents in (a) use a specific, structured token-based protocol (`<think>`, `<tool_call>`) for internal reasoning and external action. The Agentic Systems in (b) use direct module-to-module connections.

4. **Complexity vs. Simplicity:** The right-hand flowchart in each section shows a more complex variant: (a)-right includes explicit tool definitions, and (b)-right shows a networked module graph versus a simple pipeline.

5. **Common Output Structure:** All four architectures culminate in an identical "Answer" -> "o" output stage, suggesting a unified interface for the final result despite different internal processes.

### Interpretation

This diagram illustrates a fundamental design choice in building capable AI systems. The **Tool-Integrated Reasoning Models (LLM Agents)** represent an "emergent" approach, where a single, powerful, trained model learns to reason and use tools through its internal mechanisms and prompted protocols. The flame icon emphasizes its active, generative nature. This approach is flexible but relies heavily on the capabilities and alignment of the core LLM.

In contrast, the **Training-Free Agentic Systems** represent a "compositional" or "engineered" approach. Here, intelligence arises from connecting pre-existing, specialized modules (which could be other models, APIs, or algorithms) in a pipeline or network. This is potentially more interpretable, controllable, and efficient as it avoids retraining, but requires careful system design to orchestrate the modules effectively.

The progression from left to right within each section (adding tool definitions or moving from pipeline to network) suggests increasing sophistication within each paradigm. The diagram serves as a conceptual map for understanding different paths toward creating AI agents, highlighting the trade-offs between training a monolithic model versus composing frozen modules.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED


## Diagram: Tool-Integrated vs. Training-Free Agentic Systems

### Overview

The image compares two system architectures for processing queries:

1. **Tool-Integrated Reasoning Models (LLM Agents)** (Section a)

2. **Training-Free Agentic Systems** (Section b)

Both sections depict workflows from "Query" to "Answer," with distinct component structures and feedback mechanisms.

---

### Components/Axes

#### Section (a): Tool-Integrated Reasoning Models

- **Key Components**:

- **Query** (Top box, black text)

- **LLM** (Central yellow box with flame icon, labeled "LLM")

- **Answer** (Bottom green box, labeled "Answer")

- **Process Flow**:

- Arrows show sequential steps:

1. `Query` → `LLM`

2. `LLM` → `
