## Diagram: Tool-Integrated Reasoning Models vs. Training-Free Agentic Systems

### Overview

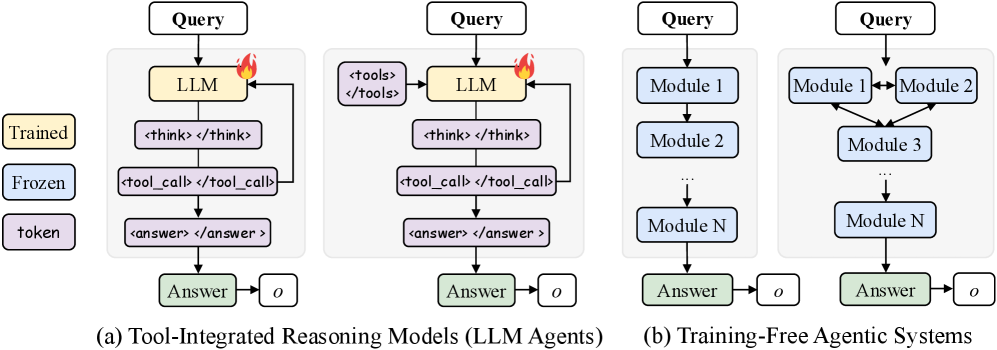

The image presents two diagrams illustrating different approaches to reasoning models: Tool-Integrated Reasoning Models (LLM Agents) and Training-Free Agentic Systems. The diagrams depict the flow of information and processing steps within each system, highlighting the use of Large Language Models (LLMs) in the former and modular components in the latter.

### Components/Axes

* **Legend (Left Side):**
  * Trained: Yellow box
  * Frozen: Blue box
  * token: Purple box

* **Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents):**
  * Input: Query (white box)
  * LLM: Yellow box (Trained)
  * `<think> </think>`: Purple box (token)
  * `<tool_call> </tool_call>`: Purple box (token)
  * `<answer> </answer>`: Purple box (token)
  * Answer: Green box
  * o: White box
  * A flame icon is present next to the LLM box.
  * A loop connects the `<tool_call> </tool_call>` box back to the LLM box.

* **Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents) - ALTERNATIVE FLOW:**
  * Input: Query (white box)
  * LLM: Yellow box (Trained)
  * `<tools> </tools>`: Purple box (token)
  * `<think> </think>`: Purple box (token)
  * `<tool_call> </tool_call>`: Purple box (token)
  * `<answer> </answer>`: Purple box (token)
  * Answer: Green box
  * o: White box
  * A flame icon is present next to the LLM box.
  * A loop connects the `<tool_call> </tool_call>` box back to the LLM box.

* **Diagram (b) - Training-Free Agentic Systems:**
  * Input: Query (white box)
  * Module 1: Blue box (Frozen)
  * Module 2: Blue box (Frozen)
  * Module 3: Blue box (Frozen)
  * Module N: Blue box (Frozen)
  * Answer: Green box
  * o: White box
  * Ellipsis (...) indicates a continuation of modules.

### Detailed Analysis

**Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents):**

1. **Query Input:** The process begins with a "Query" input.

2. **LLM Processing:** The query is fed into an "LLM" (Large Language Model), which is marked as "Trained" (yellow).

3. **Reasoning Steps:** The LLM then generates a sequence of segments delimited by special token pairs: `<think> </think>`, `<tool_call> </tool_call>`, and `<answer> </answer>`.

4. **Tool Integration:** The `<tool_call> </tool_call>` step indicates the use of external tools. A loop from this step back to the LLM suggests that the LLM can iteratively call tools and refine its reasoning.

5. **Answer Output:** Finally, the system produces an "Answer" (green) and an output "o".
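The loop in diagram (a) can be sketched in Python as an alternation between LLM generation and tool execution. This is a minimal illustration under stated assumptions, not the method behind the figure: the `<tool_call>name: argument</tool_call>` syntax, the `<result>` tag used to hand tool output back to the model, the `add` tool, and the scripted stand-in for the trained LLM are all hypothetical.

```python
import re
from typing import Callable, Dict

# Hypothetical tool registry: each tool maps a string argument to a string result.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split(","))),
}

# Assumed tag formats for a tool call ("name: argument") and a final answer.
TOOL_CALL = re.compile(r"<tool_call>\s*(\w+)\s*:\s*(.*?)\s*</tool_call>", re.S)
ANSWER = re.compile(r"<answer>\s*(.*?)\s*</answer>", re.S)


def run_agent(query: str, llm: Callable[[str], str], max_turns: int = 5) -> str:
    """Alternate between LLM generation and tool execution until an <answer> appears."""
    context = f"Query: {query}\n"
    for _ in range(max_turns):
        # The LLM emits the next segment: <think>, <tool_call>, and/or <answer>.
        segment = llm(context)
        context += segment
        answer = ANSWER.search(segment)
        if answer:
            return answer.group(1)
        call = TOOL_CALL.search(segment)
        if call:
            result = TOOLS[call.group(1)](call.group(2))
            # Feed the tool output back into the context: the loop in diagram (a).
            context += f"<result>{result}</result>\n"
    raise RuntimeError("no <answer> produced within max_turns")


def scripted_llm(context: str) -> str:
    """Hypothetical stand-in for the trained LLM: a fixed two-step policy."""
    if "<result>" not in context:
        return "<think>I should add the numbers.</think>\n<tool_call>add: 2,3,4</tool_call>\n"
    result = re.search(r"<result>(.*?)</result>", context).group(1)
    return f"<think>The tool returned {result}.</think>\n<answer>{result}</answer>\n"


print(run_agent("What is 2 + 3 + 4?", scripted_llm))  # prints 9
```

Capping the loop with `max_turns` guards against a model that keeps thinking or calling tools without ever emitting `<answer>`.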

**Diagram (a) - Tool-Integrated Reasoning Models (LLM Agents) - ALTERNATIVE FLOW:**

1. **Query Input:** The process begins with a "Query" input.

2. **LLM Processing:** The query is fed into an "LLM" (Large Language Model), which is marked as "Trained" (yellow).

3. **Tool Selection:** The LLM then selects a tool from those listed within `<tools> </tools>`.

4. **Reasoning Steps:** The LLM then generates a sequence of segments delimited by special token pairs: `<think> </think>`, `<tool_call> </tool_call>`, and `<answer> </answer>`.

5. **Tool Integration:** The `<tool_call> </tool_call>` step indicates the use of external tools. A loop from this step back to the LLM suggests that the LLM can iteratively call tools and refine its reasoning.

6. **Answer Output:** Finally, the system produces an "Answer" (green) and an output "o".
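In the alternative flow, the available tools are advertised to the LLM in a `<tools>` block, and the model's `<tool_call>` names one of them. The sketch below assumes, hypothetically, that each tool is described as one JSON object per line and that a tool-call body is JSON of the form `{"name": ..., "arguments": {...}}`; the `search` and `add` tools are illustrative placeholders, not tools from the figure.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tool descriptions advertised to the LLM inside <tools></tools>.
TOOL_SPECS = [
    {"name": "search", "description": "Look up a term", "parameters": {"query": "string"}},
    {"name": "add", "description": "Add two integers", "parameters": {"a": "integer", "b": "integer"}},
]

# Hypothetical implementations keyed by the same tool names.
TOOL_IMPLS: Dict[str, Callable[..., Any]] = {
    "search": lambda query: f"(no results for {query!r})",
    "add": lambda a, b: a + b,
}


def build_prompt(query: str) -> str:
    """Place the tool list in a <tools> block ahead of the user query."""
    tools_block = "\n".join(json.dumps(spec) for spec in TOOL_SPECS)
    return f"<tools>\n{tools_block}\n</tools>\nQuery: {query}\n"


def dispatch(tool_call_body: str) -> Any:
    """Run the tool the model selected; the body is assumed to be JSON."""
    call = json.loads(tool_call_body)
    name = call["name"]
    if name not in TOOL_IMPLS:
        raise ValueError(f"model selected an unknown tool: {name}")
    return TOOL_IMPLS[name](**call["arguments"])


prompt = build_prompt("What is 7 + 5?")
# Suppose the LLM, having read the <tools> block, emits this tool-call body:
print(dispatch('{"name": "add", "arguments": {"a": 7, "b": 5}}'))  # prints 12
```

Rejecting unknown tool names in `dispatch` keeps the model restricted to the tools actually listed in `<tools>`.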

**Diagram (b) - Training-Free Agentic Systems:**

1. **Query Input:** The process starts with a "Query".

2. **Modular Processing:** The query is processed through a series of "Frozen" (blue) modules: "Module 1", "Module 2", and so on, up to "Module N".

3. **Inter-Module Communication:** "Module 1" and "Module 2" have bidirectional arrows between them, indicating communication and interaction. Both modules feed into "Module 3".

4. **Sequential Processing:** The modules are arranged in a sequence, suggesting a flow of information from one module to the next.

5. **Answer Output:** The system generates an "Answer" (green) and an output "o".
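The topology of diagram (b) can be sketched as a fixed composition of frozen modules: Module 1 and Module 2 exchange messages for a few rounds, both outputs feed Module 3, and the result then passes sequentially through the remaining modules up to Module N. This is a minimal sketch: the `planner` and `critic` roles, the number of exchange `rounds`, and the toy string-based modules are hypothetical stand-ins for what would, in practice, be frozen models called through prompts.

```python
from typing import Callable, List

# A "frozen" module here is just a fixed function with no trainable state.
Module = Callable[[str], str]


def run_pipeline(
    query: str,
    module_1: Callable[[str, str], str],
    module_2: Callable[[str, str], str],
    module_3: Callable[[str, str], str],
    later_modules: List[Module],
    rounds: int = 2,
) -> str:
    """Modules 1 and 2 exchange messages, both feed Module 3, then Modules 4..N run in order."""
    msg_1, msg_2 = query, ""
    for _ in range(rounds):
        # Bidirectional exchange between Module 1 and Module 2.
        msg_1 = module_1(query, msg_2)
        msg_2 = module_2(query, msg_1)
    state = module_3(msg_1, msg_2)  # Module 3 receives both outputs.
    for module in later_modules:  # ... up to Module N.
        state = module(state)
    return state


# Hypothetical toy modules: a planner that revises once it gets feedback,
# and a critic that approves only the revised plan.
def planner(query: str, feedback: str) -> str:
    return "plan v2" if feedback else "plan v1"


def critic(query: str, plan: str) -> str:
    return "ok" if plan == "plan v2" else "revise"


answer = run_pipeline(
    "some query", planner, critic, lambda plan, verdict: f"{plan} [{verdict}]", [str.upper]
)
print(answer)  # prints PLAN V2 [OK]
```

No module's behavior changes between calls; any adaptation comes only from the messages passed along the fixed wiring, which is what distinguishes this design from the trained LLM in diagram (a).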

### Key Observations

* **LLM-Centric vs. Modular:** Diagram (a) emphasizes the role of a central LLM, while diagram (b) highlights a modular approach.

* **Tool Integration:** Diagram (a) explicitly shows the integration of external tools into the reasoning process.

* **Training Requirement:** Diagram (a) involves a "Trained" LLM, while diagram (b) uses "Frozen" modules, implying no further training is required.

* **Iterative Reasoning:** The loop in diagram (a) suggests an iterative reasoning process, where the LLM can refine its reasoning based on tool outputs.

### Interpretation

The diagrams illustrate two distinct paradigms for building reasoning systems. Tool-Integrated Reasoning Models train a single LLM (marked "Trained", with a flame icon) to interleave reasoning, tool calls, and a final answer within one generated sequence; the tool-call loop lets the model revise its reasoning using tool outputs. In contrast, Training-Free Agentic Systems compose several pre-built, "Frozen" modules that work together to process information, so the system's behavior is set by how the modules are connected and communicate rather than by weight updates. This approach avoids further training, but because its components cannot adapt through learning, it may be less flexible than the trained LLM approach. The choice between the two depends on the requirements of the task, the availability of pre-trained models and tools, and the desired level of flexibility and adaptability.