## Diagram: LLM Agent Architectures

### Overview

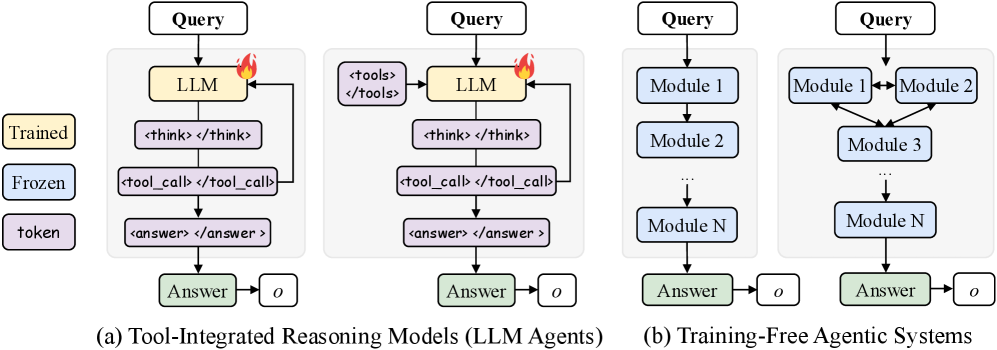

The image presents a comparative diagram illustrating two distinct architectures for Large Language Model (LLM) agents: Tool-Integrated Reasoning Models (LLM Agents) and Training-Free Agentic Systems. Both architectures take a "Query" as input and produce an "Answer" as output. The diagram highlights the internal processes and components within each architecture.

### Components

The diagram consists of two main sections, labeled (a) and (b), each representing a different system. Between them, the sections include the following components:

* **Query:** The initial input to the system.

* **LLM (or Modules):** The core processing unit. In (a), it's a single LLM; in (b), it's a series of Modules.

* **Intermediate Steps:** Represented by boxes containing text like `<tools>`, `<think>`, `<tool_call>`, `<answer>`.

* **Answer:** The final output of the system.

* **"o":** A small circle placed near the "Answer" box, potentially indicating an output signal or completion marker.

* **Trained, Frozen, Token:** Labels associated with the LLM in section (a).

### Detailed Analysis

**Section (a): Tool-Integrated Reasoning Models (LLM Agents)**

* **Input:** "Query" enters the system.

* **LLM:** The "Query" is fed into an LLM, which is labeled as "Trained," "Frozen," and "Token."

* **Process Flow:**

1. The LLM generates `<think>`.

2. `<think>` leads to `<tool_call>`.

3. `<tool_call>` results in `<answer>`.

4. `<answer>` produces the final "Answer."

* **Connections:** Arrows indicate a sequential flow of information from the "Query" through the LLM and intermediate steps to the "Answer."
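The flow in (a) can be sketched as a small loop. This is a minimal illustration, not the method behind the diagram: `stub_llm`, the `calculator` tool, and the `<tool_result>` tag are hypothetical names introduced here, and a real system would replace `stub_llm` with an actual model call.

```python
import re
from typing import Callable, Dict

# Hypothetical tool registry; the tool name and its behavior are illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
}


def stub_llm(transcript: str) -> str:
    """Stand-in for a real model: requests a tool first, then answers."""
    if "<tool_result>" not in transcript:
        return ("<think>I need to add the numbers.</think>"
                "<tool_call>calculator: 2+3</tool_call>")
    result = re.search(r"<tool_result>(.*?)</tool_result>", transcript).group(1)
    return f"<think>The tool returned {result}.</think><answer>{result}</answer>"


def run_agent(query: str, llm: Callable[[str], str] = stub_llm,
              max_turns: int = 4) -> str:
    """Alternate between model output and tool execution until <answer> appears."""
    # The <tools> block advertises the available tools to the model.
    transcript = f"<tools>{', '.join(TOOLS)}</tools>\nQuery: {query}\n"
    for _ in range(max_turns):
        output = llm(transcript)
        transcript += output
        answer = re.search(r"<answer>(.*?)</answer>", output, re.S)
        if answer:
            return answer.group(1).strip()
        call = re.search(r"<tool_call>(.*?)</tool_call>", output, re.S)
        if call:
            # Assumed call format "name: argument"; the diagram does not specify one.
            name, _, arg = call.group(1).partition(":")
            result = TOOLS[name.strip()](arg.strip())
            transcript += f"<tool_result>{result}</tool_result>\n"
    raise RuntimeError("no <answer> produced within max_turns")


print(run_agent("What is 2+3?"))
```

Everything happens inside one growing transcript, which matches the single-LLM, sequential reading of section (a).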

**Section (b): Training-Free Agentic Systems**

* **Input:** "Query" enters the system.

* **Modules:** The "Query" is distributed to multiple "Module" components (Module 1, Module 2, ... Module N).

* **Process Flow:**

1. The "Query" is processed by Modules 1 through N. The arrows between the modules suggest a cascade rather than strictly parallel execution.

2. The outputs of the Modules converge to produce the final "Answer."

* **Connections:** Arrows indicate the flow of information from the "Query" to the Modules and then to the "Answer." The Modules are connected in a cascading manner, with Module 1 feeding into Module 2, and so on, until Module N.
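Since it is not fully clear from the diagram whether the modules in (b) run as a cascade or side by side, the sketch below shows both readings. The module names (`planner`, `executor`, `verifier`) and the `combine` parameter are hypothetical; each module stands in for a call to a fixed, untrained component.

```python
from typing import Callable, List

Module = Callable[[str], str]


def run_cascade(query: str, modules: List[Module]) -> str:
    """Cascade reading: Module 1 feeds Module 2, and so on up to Module N."""
    state = query
    for module in modules:
        state = module(state)
    return state


def run_fan_out(query: str, modules: List[Module],
                combine: Callable[[List[str]], str]) -> str:
    """Fan-out reading: every module sees the query; outputs are then merged."""
    return combine([module(query) for module in modules])


# Hypothetical modules that only wrap their input, to make the data flow visible.
def planner(text: str) -> str:
    return f"plan({text})"


def executor(text: str) -> str:
    return f"run({text})"


def verifier(text: str) -> str:
    return f"check({text})"


modules = [planner, executor, verifier]
print(run_cascade("q", modules))              # check(run(plan(q)))
print(run_fan_out("q", modules, " | ".join))  # plan(q) | run(q) | check(q)
```

In either reading, adding a module means appending to the list. No component is retrained, which is the sense in which such a system is training-free.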

### Key Observations

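* **Single Model vs. Multiple Modules:** The key difference between the two architectures is how the work is organized. (a) routes everything through a single LLM in one sequential stream, while (b) splits the work across several modules. Whether those modules run in parallel or as a chain is ambiguous: the arrows between them suggest a cascade from Module 1 to Module N.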

* **Tool Integration:** Architecture (a) explicitly highlights the use of "tools" through the `<tools>` and `<tool_call>` steps, suggesting the LLM can interact with external resources.

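* **Training Status:** The labels "Trained," "Frozen," and "Token" associated with the LLM in (a) relate to its training state. Because a model's weights cannot be both trained and frozen at the same time, these labels may be legend entries rather than three properties of one model.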

* **Scalability:** Architecture (b) suggests scalability through the use of "Module N," implying the system can be expanded by adding more modules.

### Interpretation

The diagram illustrates two contrasting approaches to building LLM agents. The Tool-Integrated Reasoning Model (a) relies on a single, pre-trained LLM that leverages external tools to enhance its reasoning capabilities. The Training-Free Agentic System (b) utilizes a network of specialized modules, potentially allowing for more flexible and scalable agent behavior without requiring further training of the core LLM.

The use of `<think>`, `<tool_call>`, and `<answer>` tags in (a) suggests a structured reasoning process where the LLM explicitly considers its actions and utilizes tools before generating a final response. Splitting the work across modules in (b) could improve robustness, as the system can leverage the strengths of multiple modules. Any latency benefit would depend on whether the modules actually run in parallel.

The "Trained, Frozen, Token" labels in (a) indicate that the LLM is likely a pre-trained model that has been fine-tuned or adapted for specific tasks. The "Training-Free" designation in (b) suggests that the modules are designed to operate without requiring additional training, potentially making the system more adaptable to new tasks and environments. The "o" symbol near the answer may indicate a completion signal or a flag indicating the answer is ready.

The diagram communicates the core architectural differences between the two approaches but does not itself show their strengths or weaknesses. It suggests a trade-off between the complexity of managing a single, powerful LLM and the scalability and flexibility of a modular system.