# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

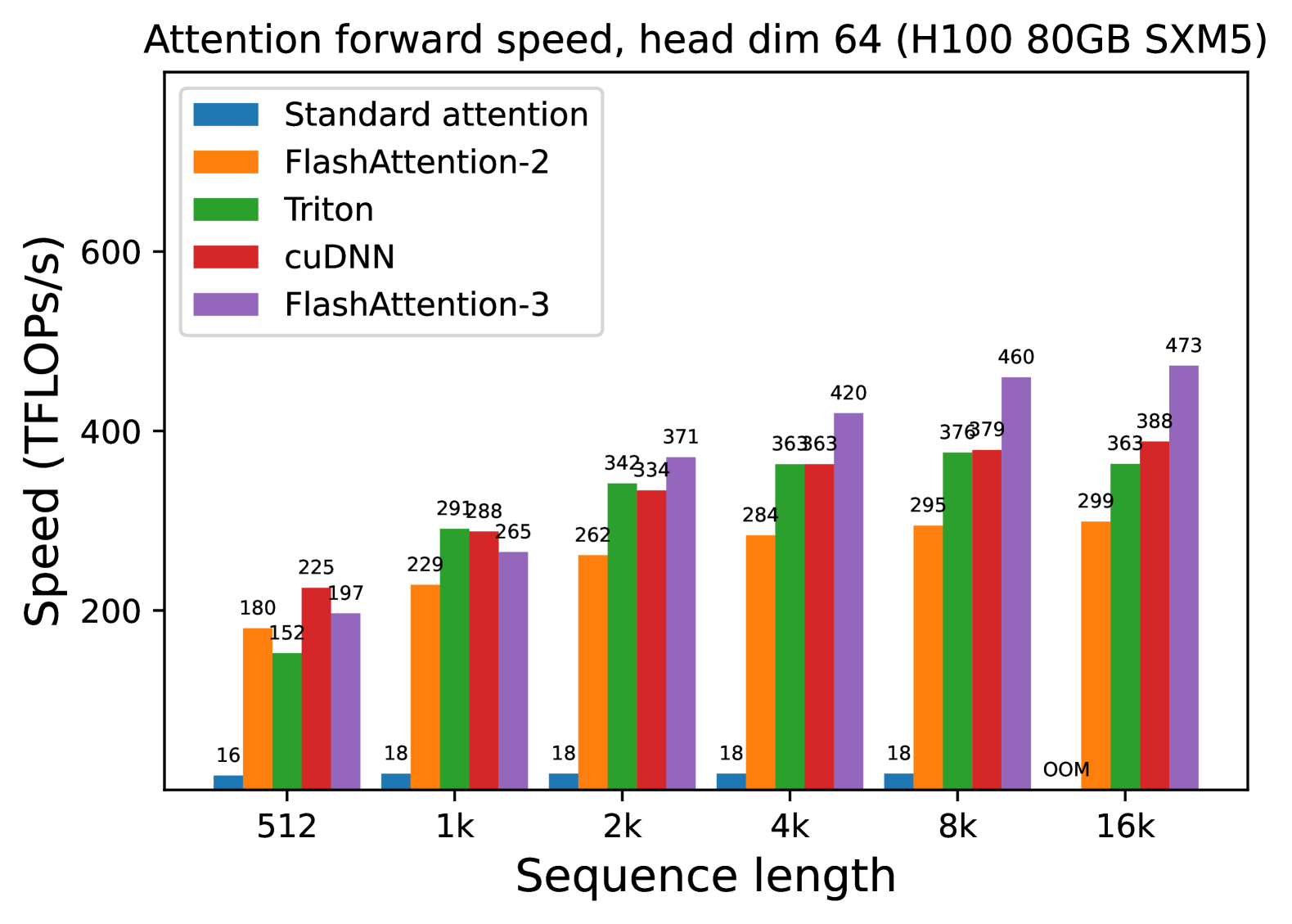

* **Title:** Attention forward speed, head dim 64 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Configuration:** Head dimension is fixed at 64.

## 2. Chart Metadata

* **Type:** Grouped Bar Chart.

* **X-Axis Label:** Sequence length

* **X-Axis Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Y-Axis Label:** Speed (TFLOPS/s)

* **Y-Axis Scale:** 0 to 600 (increments of 200 labeled).

* **Legend Location:** Top-left [x: ~0.15, y: ~0.85].
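The chart does not state how the y-axis values were derived. A common convention for attention forward benchmarks counts the two matrix multiplications (QK^T and PV) at 2 FLOPs per multiply-add, then divides by the measured wall time. The sketch below follows that convention; the function names, the batch size, and the head count are illustrative assumptions, since none of them appear in the chart.

```python
def attention_forward_flops(batch: int, nheads: int, seqlen: int, head_dim: int) -> int:
    """FLOPs for one non-causal attention forward pass.

    Counts only the two matmuls (QK^T and PV), each costing
    2 * seqlen * seqlen * head_dim FLOPs per head. Softmax is ignored.
    """
    return 4 * batch * nheads * seqlen * seqlen * head_dim


def speed_tflops(flops: int, seconds: float) -> float:
    """Convert a FLOP count and a measured wall time into tera-FLOPs per second."""
    return flops / seconds / 1e12


# Hypothetical configuration: batch=1 and nheads=32 are NOT given in the chart.
flops_16k = attention_forward_flops(batch=1, nheads=32, seqlen=16384, head_dim=64)

# Under these assumptions, the wall time implied by a 473 TFLOPS/s reading:
implied_seconds = flops_16k / (473 * 1e12)
print(f"{flops_16k:.3e} FLOPs, implied time {implied_seconds * 1e3:.2f} ms")
```

Because the FLOP count grows with the square of the sequence length, a flat throughput curve still means the run time quadruples each time the sequence length doubles.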

## 3. Legend and Series Identification

The chart compares five different attention implementations, color-coded as follows:

1. **Standard attention** (Blue): Represents the baseline implementation.

2. **FlashAttention-2** (Orange): An optimized attention mechanism.

3. **Triton** (Green): Implementation using the Triton language/compiler.

4. **cuDNN** (Red): NVIDIA's deep neural network library implementation.

5. **FlashAttention-3** (Purple): The latest iteration of the FlashAttention algorithm.

## 4. Data Table Reconstruction

The following table transcribes the numerical values (TFLOPS/s) displayed above each bar in the chart.

| Sequence Length | Standard attention (Blue) | FlashAttention-2 (Orange) | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **512** | 16 | 180 | 152 | 225 | 197 |
| **1k** | 18 | 229 | 291 | 288 | 265 |
| **2k** | 18 | 262 | 342 | 334 | 371 |
| **4k** | 18 | 284 | 363 | 363 | 420 |
| **8k** | 18 | 295 | 376 | 379 | 460 |
| **16k** | OOM* | 299 | 363 | 388 | 473 |

*\*OOM: Out of Memory*
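For downstream analysis, the table can be held in a plain dictionary. The sketch below is one possible encoding, with the OOM cell stored as `None`; the names `SEQ_LENGTHS`, `SPEED`, and `fastest_per_length` are illustrative and not part of the source.

```python
# Values transcribed from the table above (TFLOPS/s); None marks the OOM cell.
SEQ_LENGTHS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEED = {
    "Standard attention": [16, 18, 18, 18, 18, None],
    "FlashAttention-2": [180, 229, 262, 284, 295, 299],
    "Triton": [152, 291, 342, 363, 376, 363],
    "cuDNN": [225, 288, 334, 363, 379, 388],
    "FlashAttention-3": [197, 265, 371, 420, 460, 473],
}


def fastest_per_length(speed, seq_lengths):
    """Return {sequence length: fastest implementation}, skipping OOM entries."""
    result = {}
    for i, seq in enumerate(seq_lengths):
        measured = {name: vals[i] for name, vals in speed.items() if vals[i] is not None}
        result[seq] = max(measured, key=measured.get)
    return result


for seq, name in fastest_per_length(SPEED, SEQ_LENGTHS).items():
    print(f"{seq:>4}: {name}")
```

Storing OOM as `None` rather than `0` keeps a failed run from being mistaken for a measured zero when computing minima or averages.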

## 5. Trend Analysis and Component Observations

### Standard attention (Blue)

* **Trend:** Extremely low and flat performance.

* **Observation:** Maintains a near-constant speed of 16-18 TFLOPS/s until it fails at 16k sequence length due to memory constraints (OOM).

### FlashAttention-2 (Orange)

* **Trend:** Steady upward slope that plateaus as sequence length increases.

* **Observation:** Performance grows from 180 to 299 TFLOPS/s, a large improvement over standard attention. It beats Triton at 512 (180 vs. 152 TFLOPS/s) but is the slowest of the four optimized methods from 1k onward.

### Triton (Green)

* **Trend:** Rapid initial growth, leveling off from 4k onward.

* **Observation:** Performance more than doubles between 512 (152 TFLOPS/s) and 2k (342 TFLOPS/s), then stays within 363-376 TFLOPS/s, peaking at 8k and dipping back to 363 TFLOPS/s at 16k.

### cuDNN (Red)

* **Trend:** Consistent upward slope.

* **Observation:** Starts as the fastest method at the shortest sequence length (225 TFLOPS/s at 512). It stays within 8 TFLOPS/s of Triton from 1k to 8k, then pulls ahead at 16k (388 vs. 363 TFLOPS/s).

### FlashAttention-3 (Purple)

* **Trend:** Strong, continuous upward slope with the highest ceiling.

* **Observation:** At 512 it is second only to cuDNN (197 vs. 225 TFLOPS/s), and at 1k it trails both Triton and cuDNN (265 vs. 291 and 288 TFLOPS/s). From 2k onward it is the clear leader, and its lead widens with sequence length, reaching a peak of 473 TFLOPS/s at 16k.
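One way to put a number on how each optimized method scales is the ratio of its 16k throughput to its 512 throughput. The sketch below uses only the endpoint values from the table; the names `ENDPOINTS` and `scaling_ratio` are illustrative. Standard attention is left out because it has no 16k measurement.

```python
# (speed at 512, speed at 16k) in TFLOPS/s, transcribed from the data table.
ENDPOINTS = {
    "FlashAttention-2": (180, 299),
    "Triton": (152, 363),
    "cuDNN": (225, 388),
    "FlashAttention-3": (197, 473),
}


def scaling_ratio(start: float, end: float) -> float:
    """Throughput at the longest sequence divided by throughput at the shortest."""
    return end / start


ratios = {name: scaling_ratio(lo, hi) for name, (lo, hi) in ENDPOINTS.items()}
gains = {name: hi - lo for name, (lo, hi) in ENDPOINTS.items()}

for name in sorted(ratios, key=ratios.get, reverse=True):
    print(f"{name:<17} x{ratios[name]:.2f}  (+{gains[name]} TFLOPS/s)")
```

By ratio, FlashAttention-3 and Triton are nearly tied (about 2.4x each), largely because Triton starts from the lowest value at 512. The absolute gain separates them more clearly: +276 TFLOPS/s for FlashAttention-3 versus +211 TFLOPS/s for Triton.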

## 6. Summary of Findings

FlashAttention-3 demonstrates superior scaling for long sequences on H100 hardware, outperforming FlashAttention-2 by approximately 58% and cuDNN by approximately 22% at a sequence length of 16k. Standard attention is non-viable for these workloads: its throughput never exceeds 18 TFLOPS/s, and it runs out of memory at 16k.
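The percentages quoted above follow directly from the 16k row of the data table. A minimal check, with `percent_faster` as an illustrative helper name:

```python
def percent_faster(speed_a: float, speed_b: float) -> float:
    """How much faster A is than B, in percent, given two throughputs."""
    return (speed_a / speed_b - 1.0) * 100.0


# 16k-sequence throughputs (TFLOPS/s) from the data table.
FA3_16K = 473
FA2_16K = 299
CUDNN_16K = 388

print(f"vs FlashAttention-2: {percent_faster(FA3_16K, FA2_16K):.1f}%")
print(f"vs cuDNN:            {percent_faster(FA3_16K, CUDNN_16K):.1f}%")
```

The same helper can be applied to any other pair of cells, for example to compare FlashAttention-3 against Triton at 16k.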