## Diagram Type: Document Structure Flowchart

### Overview

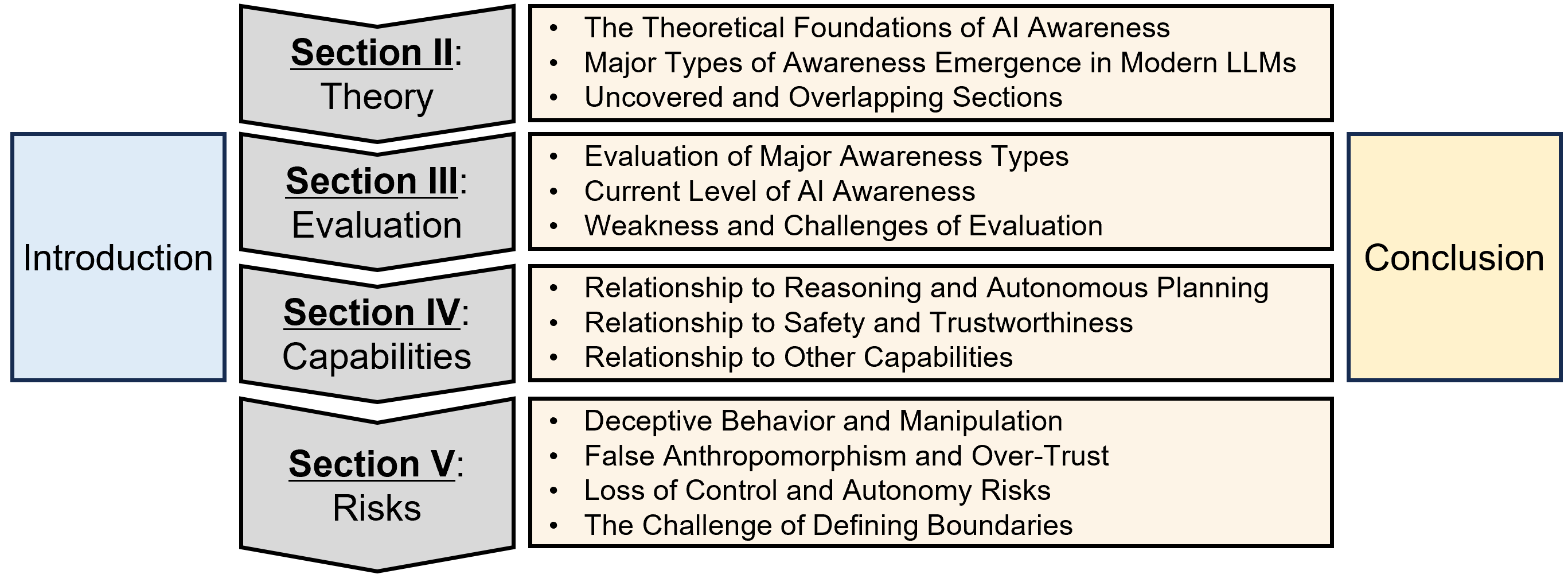

The image is a flowchart diagram illustrating the high-level structure and content outline of a technical document or presentation focused on "AI Awareness." It visually organizes the document into a logical sequence from an introduction, through four core thematic sections, to a conclusion.

### Components

The diagram is composed of rectangular and chevron-shaped boxes connected by implied flow (left to right, top to bottom within the central column). The elements are color-coded and spatially arranged as follows:

* **Left Column (Blue Box):**
    * **Position:** Far left, vertically centered.
    * **Label:** `Introduction`
* **Central Column (Four Gray Chevron Boxes & Associated Beige Content Boxes):**
    * **Position:** Center of the image, stacked vertically.
    * **Structure:** Each gray chevron box (pointing right) contains a section title. To its right is a beige rectangle containing bullet points detailing the section's content.
    * **Section II: Theory**
        * **Content Bullets:**
            * The Theoretical Foundations of AI Awareness
            * Major Types of Awareness Emergence in Modern LLMs
            * Uncovered and Overlapping Sections
    * **Section III: Evaluation**
        * **Content Bullets:**
            * Evaluation of Major Awareness Types
            * Current Level of AI Awareness
            * Weakness and Challenges of Evaluation
    * **Section IV: Capabilities**
        * **Content Bullets:**
            * Relationship to Reasoning and Autonomous Planning
            * Relationship to Safety and Trustworthiness
            * Relationship to Other Capabilities
    * **Section V: Risks**
        * **Content Bullets:**
            * Deceptive Behavior and Manipulation
            * False Anthropomorphism and Over-Trust
            * Loss of Control and Autonomy Risks
            * The Challenge of Defining Boundaries
* **Right Column (Yellow Box):**
    * **Position:** Far right, vertically centered.
    * **Label:** `Conclusion`

### Detailed Analysis

The diagram contains the following textual information, paraphrased here by section:

**Main Flow:**

`Introduction` -> `[Sections II-V]` -> `Conclusion`

**Section Details:**

1. **Section II: Theory**
    * Focuses on foundational concepts: theoretical bases, types of awareness that emerge in Large Language Models (LLMs), and "Uncovered and Overlapping Sections," which presumably refers to areas that are either not covered or overlap.

2. **Section III: Evaluation**
    * Concerns the assessment of awareness: how the major types are evaluated, the current level of AI awareness, and the weaknesses and challenges of evaluation.

3. **Section IV: Capabilities**
    * Explores how AI awareness relates to other system attributes: reasoning and autonomous planning, safety and trustworthiness, and other capabilities that the diagram does not name.

4. **Section V: Risks**
    * Details potential dangers and ethical concerns: deceptive behavior and manipulation, the human tendency to anthropomorphize or over-trust the AI, loss of control and autonomy risks, and the difficulty of defining boundaries.
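The structure described above can be captured as a small data structure, which makes the main flow and the per-section bullet counts easy to check. The following is a minimal sketch in Python, not part of the source diagram. The names `OUTLINE`, `main_flow`, and `render` are hypothetical and chosen only for illustration; the section titles and bullets are transcribed from the diagram text.

```python
# Hypothetical representation of the diagram's outline.
# Section titles and bullets are transcribed from the diagram text;
# the variable and function names are illustrative assumptions.
OUTLINE = {
    "Section II: Theory": [
        "The Theoretical Foundations of AI Awareness",
        "Major Types of Awareness Emergence in Modern LLMs",
        "Uncovered and Overlapping Sections",
    ],
    "Section III: Evaluation": [
        "Evaluation of Major Awareness Types",
        "Current Level of AI Awareness",
        "Weakness and Challenges of Evaluation",
    ],
    "Section IV: Capabilities": [
        "Relationship to Reasoning and Autonomous Planning",
        "Relationship to Safety and Trustworthiness",
        "Relationship to Other Capabilities",
    ],
    "Section V: Risks": [
        "Deceptive Behavior and Manipulation",
        "False Anthropomorphism and Over-Trust",
        "Loss of Control and Autonomy Risks",
        "The Challenge of Defining Boundaries",
    ],
}


def main_flow(outline):
    """Return the top-level flow: Introduction, core sections, Conclusion."""
    # Dicts preserve insertion order (Python 3.7+), so sections stay in sequence.
    return ["Introduction", *outline, "Conclusion"]


def render(outline):
    """Render the flow as indented text, one bullet per line under each section."""
    lines = []
    for step in main_flow(outline):
        lines.append(step)
        # Introduction and Conclusion have no bullets in the diagram.
        for bullet in outline.get(step, []):
            lines.append(f"  - {bullet}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render(OUTLINE))
```

Representing the outline as an ordered mapping keeps the linear top-level flow separate from the per-section detail, which mirrors the diagram's split between chevron titles and beige content boxes.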

### Key Observations

* **Two-Level Organization:** At the top level, the diagram presents a clear, linear progression from introduction to conclusion. Beneath it, the core analysis is divided into four distinct but logically connected pillars (Theory, Evaluation, Capabilities, Risks), each with its own bullet list.

* **Content Scope:** The bullet points reveal the document's comprehensive approach, moving from definition and theory (Section II) to measurement (Section III), practical implications (Section IV), and finally to ethical and safety considerations (Section V).

* **Visual Coding:** Color is used functionally: blue for the starting point, gray for the main analytical sections, beige for detailed content, and yellow for the endpoint. The chevron shape of the section titles implies forward progression or a step-by-step process.

### Interpretation

This diagram serves as a conceptual map for a deep-dive investigation into AI awareness. It suggests the document argues that understanding AI awareness is not a single question but a multi-stage problem:

1. **Foundation First:** One must first establish what AI awareness *is* (Theory) before anything else.

2. **Measurement Challenge:** Once defined, the immediate next challenge is figuring out how to *measure* it (Evaluation), acknowledging the current limitations in doing so.

3. **Practical Integration:** The analysis then shifts to why awareness matters in practice, linking it to core AI functions (Capabilities) like planning and safety. This implies awareness isn't an isolated trait but is intertwined with system performance and reliability.

4. **Critical Examination of Perils:** Finally, the structure closes with risks. Section V is the only section with four bullets rather than three, which suggests that the potential negative consequences of AI awareness (or of our perception of it) are a major, concluding concern. The inclusion of "False Anthropomorphism and Over-Trust" and "The Challenge of Defining Boundaries" suggests a focus on human-AI interaction pitfalls and governance challenges.

The flow implies that a responsible discourse on AI awareness must progress from theoretical grounding through empirical assessment to an analysis of real-world impacts and dangers. The separation of "Capabilities" and "Risks" is particularly noteworthy, framing awareness as a double-edged sword that can enhance functionality but also introduce new vulnerabilities.