## Timeline and Bar Chart: Evolution of Computational Models and LLM Architectures

### Overview

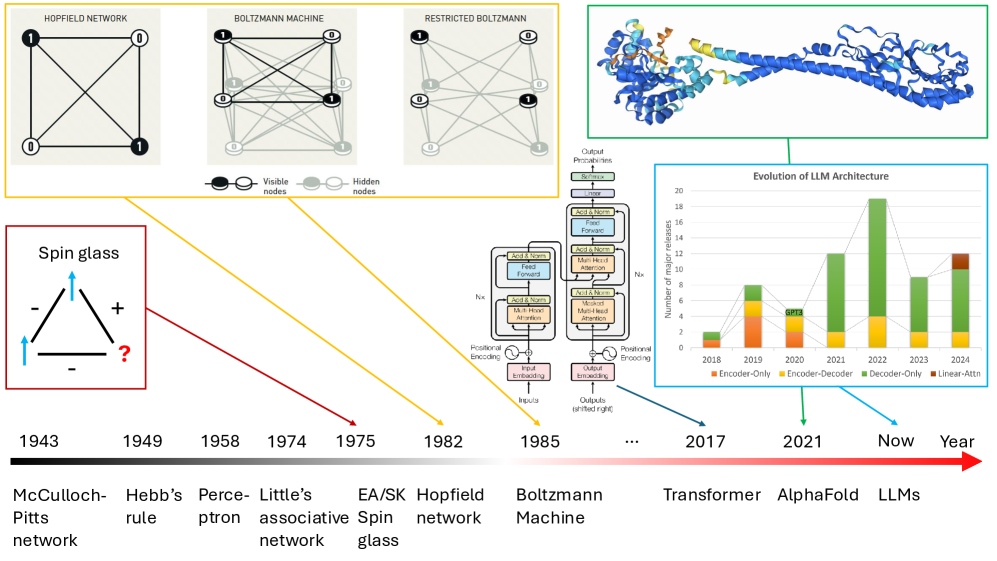

The image presents a dual visualization: a historical timeline of computational models (left) and a bar chart showing the evolution of LLM architectures (right). The timeline traces key milestones from 1943 to 2024, while the bar chart quantifies publication trends in LLM research from 2018 to 2024. A Spin Glass diagram (bottom-left) connects to the Hopfield Network (1982) via a red arrow.

---

### Components/Axes

#### Timeline (Left)

- **X-axis**: Years (1943–2024), labeled at irregularly spaced milestone years (1943, 1949, 1958, 1974, 1975, 1982, 1985, 2017, 2021) and ending at "Now"; "Year" appears to be the axis title rather than a tick.

- **Y-axis**: Unlabeled; it only sets the vertical placement of model names and diagrams.

- **Key Elements**:

  - **Spin Glass Diagram**: Bottom-left, triangular structure with "+", "-", and "?" labels, connected by arrows.

  - **Model Diagrams**:

    - **Hopfield Network** (1982): Grid of nodes with visible (black) and hidden (gray) nodes.

    - **Boltzmann Machine** (1985): Dense network with visible (black) and hidden (gray) nodes.

    - **Restricted Boltzmann Machine** (1985): Sparse connections between visible and hidden nodes.

  - **Text Labels**: Model names and years (e.g., "McCulloch-Pitts network" at 1943, "Transformer" at 2017).
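The "+", "-" and "?" labels on the Spin Glass triangle match the standard textbook picture of frustration. The figure does not say whether its signs mark spins or couplings, so the sketch below assumes they are couplings J = ±1 on the three bonds of a triangle of Ising spins. It checks by brute force the usual loop criterion: a loop is frustrated exactly when the product of its couplings is negative, and then at most two of the three bonds can be satisfied at once. The names `BONDS`, `max_satisfied` and `is_frustrated` are illustrative, not taken from the figure.

```python
from itertools import product
from math import prod

BONDS = [(0, 1), (1, 2), (0, 2)]  # the three edges of the triangle


def max_satisfied(couplings):
    """Brute-force the largest number of bonds any spin configuration satisfies.

    A bond (i, j) with coupling J is satisfied when J * s_i * s_j > 0:
    J = +1 (ferromagnetic) favors aligned spins, J = -1 (antiferromagnetic) opposite spins.
    """
    best = 0
    for spins in product((-1, 1), repeat=3):
        ok = sum(1 for (i, j), J in couplings.items() if J * spins[i] * spins[j] > 0)
        best = max(best, ok)
    return best


def is_frustrated(couplings):
    """Loop criterion: frustrated exactly when the product of couplings is negative."""
    return prod(couplings.values()) < 0


# Enumerate all 8 sign patterns for the three couplings.
for signs in product((-1, 1), repeat=3):
    couplings = dict(zip(BONDS, signs))
    label = "frustrated" if is_frustrated(couplings) else "unfrustrated"
    print(signs, label, "max satisfied bonds:", max_satisfied(couplings))
```

In the all-antiferromagnetic case, two spins can be made opposite, but the third cannot be opposite to both, which is one reading of the diagram's "?".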

#### Bar Chart (Right)

- **X-axis**: Years (2018–2024), labeled sequentially.

- **Y-axis**: "Number of major publications" (0–20), with gridlines.

- **Legend**: Right-side, color-coded categories:

  - **Encoder-Only**: Orange

  - **Encoder-Decoder**: Yellow

  - **Decoder-Only**: Green

  - **Linear-Attention**: Brown

- **Data**: Stacked bars, one per year; each colored segment is one category's publication count, so a bar's height is that year's total.

---

### Detailed Analysis

#### Timeline

1. **1943**: McCulloch-Pitts network (first neural network model).

2. **1949**: Hebb’s rule (learning mechanism for synaptic weights).

3. **1958**: Perceptron (early single-layer neural network).

4. **1974**: Little’s associative network (early associative memory model).

5. **1975**: EA/SK Spin Glass (the Edwards-Anderson and Sherrington-Kirkpatrick models; theoretical models linking physics and computation).

6. **1982**: Hopfield Network (energy-based associative memory).

7. **1985**: Boltzmann Machine (stochastic neural network with hidden units).

8. **2017**: Transformer (self-attention architecture).

9. **2020**: AlphaFold (protein structure prediction).

10. **2021–2024**: LLMs (large language models). Early representatives such as GPT and BERT date from 2018, the first year covered by the bar chart.
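Two milestones above connect directly: in its common outer-product form, Hebb's rule (1949) sets the weights of a Hopfield Network (1982), and asynchronous sign updates then lower the network's energy until a stored pattern is recalled. The sketch below is a minimal pure-Python illustration under textbook assumptions (±1 units, symmetric weights, zero self-connections, no biases); the stored pattern and the function names are hypothetical and not taken from the figure.

```python
def hebbian_weights(patterns):
    """Outer-product Hebb rule: W[i][j] = (1/n) * sum_p p[i] * p[j], with W[i][i] = 0."""
    n = len(patterns[0])
    W = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    W[i][j] += p[i] * p[j] / n
    return W


def energy(W, s):
    """Hopfield energy E(s) = -1/2 * sum_ij W[i][j] * s[i] * s[j] (no bias terms)."""
    n = len(s)
    return -0.5 * sum(W[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(W, s, sweeps=5):
    """Asynchronous updates: each unit takes the sign of its local field.

    With symmetric W and a zero diagonal, no single update increases the energy.
    """
    s = list(s)
    n = len(s)
    for _ in range(sweeps):
        for i in range(n):
            field = sum(W[i][j] * s[j] for j in range(n))
            s[i] = 1 if field >= 0 else -1
    return s


# Hypothetical example: store one 8-unit pattern, corrupt one bit, recall it.
stored = [1, -1, 1, 1, -1, -1, 1, -1]
W = hebbian_weights([stored])
noisy = list(stored)
noisy[0] = -noisy[0]
restored = recall(W, noisy)
print(restored == stored, energy(W, noisy), energy(W, restored))  # True -1.75 -3.5
```

The stored pattern sits at an energy minimum: flipping one bit raises the energy from -3.5 to -1.75, and a single sweep of updates returns the state to the minimum. This energy function has the same form as an Ising spin-glass Hamiltonian, which is the physics link the figure's red arrow draws.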

#### Bar Chart

Number of major publications per year and category:

| Year | Encoder-Only | Encoder-Decoder | Decoder-Only | Linear-Attention |
|------|--------------|-----------------|--------------|------------------|
| 2018 | 2 | 5 | 3 | 0 |
| 2019 | 4 | 6 | 2 | 0 |
| 2020 | 3 | 4 | 5 | 0 |
| 2021 | 2 | 3 | 8 | 0 |
| 2022 | 1 | 2 | 12 | 0 |
| 2023 | 0 | 1 | 9 | 3 |
| 2024 | 0 | 0 | 6 | 4 |
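The per-category counts can be checked for internal consistency, for example that 2022 has the largest yearly total and that no stacked bar exceeds the chart's 0–20 y-axis. The sketch below transcribes the counts as read from the figure; stacking segments in legend order is an assumption, since the figure's segment order is not stated, and the helper names are hypothetical.

```python
# Per-year counts read off the stacked bar chart (figure readings, not an external dataset).
CATEGORIES = ["Encoder-Only", "Encoder-Decoder", "Decoder-Only", "Linear-Attention"]
COUNTS = {
    2018: [2, 5, 3, 0],
    2019: [4, 6, 2, 0],
    2020: [3, 4, 5, 0],
    2021: [2, 3, 8, 0],
    2022: [1, 2, 12, 0],
    2023: [0, 1, 9, 3],
    2024: [0, 0, 6, 4],
}


def yearly_totals(counts):
    """Bar height per year = sum of its stacked segments."""
    return {year: sum(row) for year, row in counts.items()}


def stack_bottoms(row):
    """Starting height of each segment: running sum of the segments below it.

    Assumes segments are stacked in CATEGORIES (legend) order.
    """
    bottoms, running = [], 0
    for value in row:
        bottoms.append(running)
        running += value
    return bottoms


def share(counts, category):
    """Fraction of each year's publications that fall in one category."""
    idx = CATEGORIES.index(category)
    return {year: row[idx] / sum(row) for year, row in counts.items()}


totals = yearly_totals(COUNTS)
peak_year = max(totals, key=totals.get)
lin = CATEGORIES.index("Linear-Attention")
first_linear = min(year for year, row in COUNTS.items() if row[lin] > 0)

print(totals)  # {2018: 10, 2019: 12, 2020: 12, 2021: 13, 2022: 15, 2023: 13, 2024: 10}
print(peak_year, first_linear)  # 2022 2023
print(round(share(COUNTS, "Decoder-Only")[2022], 2))  # 0.8
```

Storing one row per year in a fixed category order keeps the stacked-bar arithmetic (totals, segment offsets, shares) to simple list operations.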

---

### Key Observations

1. **Timeline**:

   - The Spin Glass (1975) is explicitly linked to the Hopfield Network (1982) via a red arrow, suggesting a conceptual bridge between physics-inspired models and neural networks.

   - The "?" in the Spin Glass diagram most likely marks a frustrated spin: when the couplings around the triangle cannot all be satisfied at once, the third spin has no preferred orientation.

2. **Bar Chart**:

   - **Peak in 2022**: Decoder-Only models dominate (12 of the year's 15 publications, the highest yearly total in the chart), following the release of GPT-3 (2020).

   - **Linear-Attention Emergence**: First appears in 2023 (3 publications) and grows to 4 by 2024.

   - **Encoder-Decoder Decline**: Drops from a high of 6 (2019) to 1 (2023) and 0 (2024), indicating a shift in research focus.

---

### Interpretation

1. **Historical Progression**:

   - Early models (1943–1985) laid foundational principles (e.g., Hebb’s rule, Boltzmann Machines) that influenced later architectures.

   - The Spin Glass (1975) represents an interdisciplinary link between physics and computation, later formalized in the Hopfield Network, whose energy function has the same form as a spin-glass Hamiltonian.

2. **LLM Evolution**:

   - The 2022 peak follows GPT-3 (2020), which popularized large Decoder-Only models.

   - Linear-Attention’s rise (2023–2024) suggests growing interest in efficiency improvements for long-sequence tasks.

3. **Unresolved Questions**:

   - How directly spin-glass theory shaped modern architectures remains open; the "?" in the diagram itself most likely denotes frustration rather than an open question.

   - The timeline ends at "Now" (2024), so future trends remain speculative.
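The figure does not say which linear-attention methods its bars count. As one common formulation, assumed here, kernelized linear attention replaces the softmax weight exp(q·k) with φ(q)·φ(k) for a positive feature map φ, here elu(x) + 1. Because the sum over keys can be reassociated, a key/value summary is built once and reused for every query, so cost grows linearly rather than quadratically in sequence length N. The sketch below computes the same non-causal attention both ways; the toy inputs and function names are hypothetical.

```python
import math


def phi(x):
    """Positive feature map elu(x) + 1 (an assumed, commonly used choice)."""
    return [v + 1.0 if v > 0 else math.exp(v) for v in x]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def quadratic_attention(Q, K, V):
    """Kernelized attention evaluated pair by pair: O(N^2) in sequence length N."""
    out = []
    for q in Q:
        fq = phi(q)
        weights = [dot(fq, phi(k)) for k in K]
        total = sum(weights)
        out.append([sum(w * v[b] for w, v in zip(weights, V)) / total for b in range(len(V[0]))])
    return out


def linear_attention(Q, K, V):
    """Same result via associativity: summarize all keys/values once, O(N) in N."""
    d_k, d_v = len(K[0]), len(V[0])
    S = [[0.0] * d_v for _ in range(d_k)]  # S = sum_j phi(k_j) v_j^T
    z = [0.0] * d_k                        # z = sum_j phi(k_j)
    for k, v in zip(K, V):
        fk = phi(k)
        for a in range(d_k):
            z[a] += fk[a]
            for b in range(d_v):
                S[a][b] += fk[a] * v[b]
    out = []
    for q in Q:
        fq = phi(q)
        denom = dot(fq, z)
        out.append([sum(fq[a] * S[a][b] for a in range(d_k)) / denom for b in range(d_v)])
    return out


# Hypothetical toy inputs: 3 tokens, key dimension 2, value dimension 3.
Q = [[0.5, -1.0], [1.5, 0.2], [-0.3, 0.8]]
K = [[1.0, 0.0], [-0.5, 2.0], [0.3, -0.7]]
V = [[1.0, 2.0, 0.0], [0.0, -1.0, 3.0], [2.0, 0.5, 1.0]]

slow = quadratic_attention(Q, K, V)
fast = linear_attention(Q, K, V)
print(max(abs(a - b) for rs, rf in zip(slow, fast) for a, b in zip(rs, rf)))
```

The two results agree up to floating-point rounding. The efficiency comes from giving up the exact softmax weighting for a kernel approximation; a causal (autoregressive) variant would keep running prefix sums of `S` and `z` instead of totals.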

---

### Spatial Grounding

- **Legend**: Right-aligned; colors match the bar chart categories (orange = Encoder-Only, yellow = Encoder-Decoder, green = Decoder-Only, brown = Linear-Attention).

- **Spin Glass Diagram**: Bottom-left, isolated from the timeline but connected via the red arrow to the Hopfield Network.

- **Bar Chart**: Right-side, stacked bars with clear color coding; legend positioned adjacent to data.

---

### Conclusion

The image illustrates the trajectory from early computational models to modern LLMs, emphasizing the Spin Glass’s theoretical role and the dominance of Decoder-Only architectures in recent years. The 2022 publication peak underscores rapid innovation, while Linear-Attention’s emergence hints at future directions. How far spin-glass ideas carry into modern architectures remains an open question that invites further interdisciplinary exploration.