
## Chart: Generalization Error vs. Training Parameters

### Overview

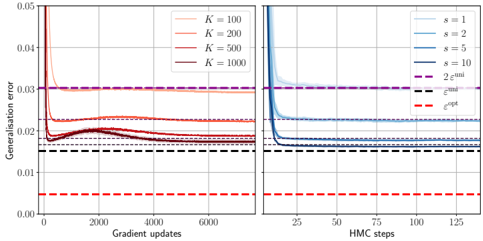

The image presents two charts side by side, both showing how generalization error changes over training. The left chart plots generalization error against gradient updates, with one curve per value of the parameter *K*. The right chart plots generalization error against HMC steps, with one curve per value of the parameter *s*. Both charts use a logarithmic y-axis (generalization error).

### Components/Axes

**Left Chart:**

* **X-axis:** Gradient updates (range approximately 0 to 6000).

* **Y-axis:** Generalization error (logarithmic; upper limit approximately 0.05).

* **Legend:**

  * *K* = 100 (Red)

  * *K* = 200 (Orange)

  * *K* = 500 (Brown)

  * *K* = 1000 (Gray)

**Right Chart:**

* **X-axis:** HMC steps (range approximately 0 to 125).

* **Y-axis:** Generalization error (logarithmic; upper limit approximately 0.05).

* **Legend:**

  * *s* = 1 (Light Blue)

  * *s* = 2 (Blue)

  * *s* = 5 (Dark Blue)

  * *s* = 10 (Purple)

  * 2 e<sup>-uni</sup> (Magenta, dashed)

  * e<sup>-opt</sup> (Red, dashed)

Both charts share a horizontal dashed black line at approximately y = 0.023.

### Detailed Analysis

**Left Chart:**

* **K = 100 (Red):** Starts at approximately 0.048, rapidly decreases to around 0.025 by 1000 gradient updates, then plateaus around 0.026-0.028.

* **K = 200 (Orange):** Starts at approximately 0.048, decreases to around 0.024 by 1000 gradient updates, then plateaus around 0.025-0.027.

* **K = 500 (Brown):** Starts at approximately 0.048, decreases to around 0.023 by 1000 gradient updates, then plateaus around 0.023-0.025.

* **K = 1000 (Gray):** Starts at approximately 0.048, decreases to around 0.022 by 1000 gradient updates, then plateaus around 0.022-0.024.

* All curves approach the dashed black line (approximately 0.023) by about 6000 gradient updates, although the smaller-*K* curves level off slightly above it.
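To gauge how much each increase in *K* buys, the short Python sketch below takes the midpoint of each approximate plateau range listed above and computes the drop between successive *K* values. The numbers are readings from this description, not the underlying data, and `midpoint` and `successive_gains` are hypothetical helper names chosen for illustration.

```python
# Approximate plateau ranges (low, high) for the left chart, taken from the
# figure description above; these are visual readings, not raw data.
plateaus = {
    100: (0.026, 0.028),
    200: (0.025, 0.027),
    500: (0.023, 0.025),
    1000: (0.022, 0.024),
}


def midpoint(lo_hi):
    """Return the centre of an approximate (low, high) plateau range."""
    lo, hi = lo_hi
    return (lo + hi) / 2


def successive_gains(ranges):
    """Return the drop in plateau midpoint between consecutive K values."""
    ks = sorted(ranges)
    return {
        (a, b): midpoint(ranges[a]) - midpoint(ranges[b])
        for a, b in zip(ks, ks[1:])
    }


if __name__ == "__main__":
    for (a, b), gain in successive_gains(plateaus).items():
        print(f"K={a} -> K={b}: plateau drops by about {gain:.4f}")
```

With these readings the drops are about 0.001, 0.002 and 0.001, which is small compared with the overall fall from roughly 0.048 at the start of training.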

**Right Chart:**

* **s = 1 (Light Blue):** Starts at approximately 0.048, rapidly decreases to around 0.025 by 25 HMC steps, then slowly decreases to around 0.022 by 125 HMC steps.

* **s = 2 (Blue):** Starts at approximately 0.048, rapidly decreases to around 0.024 by 25 HMC steps, then slowly decreases to around 0.021 by 125 HMC steps.

* **s = 5 (Dark Blue):** Starts at approximately 0.048, rapidly decreases to around 0.023 by 25 HMC steps, then slowly decreases to around 0.020 by 125 HMC steps.

* **s = 10 (Purple):** Starts at approximately 0.048, rapidly decreases to around 0.022 by 25 HMC steps, then slowly decreases to around 0.019 by 125 HMC steps.

* **2 e<sup>-uni</sup> (Magenta, dashed):** Horizontal line at approximately 0.025.

* **e<sup>-opt</sup> (Red, dashed):** Horizontal line at approximately 0.018.
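A similar sketch for the right chart compares each curve's approximate error at 125 HMC steps with the two dashed reference lines and expresses the excess over e<sup>-opt</sup> as a fraction of it. `E_OPT`, `TWO_E_UNI`, `final_error` and `gap_to_reference` are illustrative names; the numbers are the approximate readings listed above, not the plotted data.

```python
# Approximate readings from the right-chart description (not raw data).
E_OPT = 0.018       # e^-opt dashed reference line
TWO_E_UNI = 0.025   # 2 e^-uni dashed reference line

# Approximate generalization error of each s curve at 125 HMC steps.
final_error = {1: 0.022, 2: 0.021, 5: 0.020, 10: 0.019}


def gap_to_reference(errors, reference):
    """Return the relative excess error (e - reference) / reference per curve."""
    return {s: (e - reference) / reference for s, e in errors.items()}


if __name__ == "__main__":
    for s, gap in gap_to_reference(final_error, E_OPT).items():
        below_uni = final_error[s] < TWO_E_UNI
        print(f"s={s}: {gap:.1%} above e^-opt, below 2 e^-uni: {below_uni}")
```

With these readings the excess over e<sup>-opt</sup> shrinks from about 22% for *s* = 1 to about 6% for *s* = 10, and every curve ends below the 2 e<sup>-uni</sup> line.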

### Key Observations

* In the left chart, larger *K* yields a lower plateau in generalization error.

* In the right chart, increasing *s* leads to a lower generalization error.

* The dashed lines on the right chart most likely mark theoretical reference levels (bounds or benchmarks) for the generalization error.

* Both charts show a rapid initial decrease in generalization error, followed by a plateau (left chart) or a much slower decline (right chart).

### Interpretation

The charts show how two training parameters affect the generalization error of a model. The figure itself does not define *K* or *s*, so the roles given to them below are interpretations.

In the left chart, increasing *K* (interpreted here as a number of components) lowers the error at which training plateaus. The plateaus for successive *K* values differ by only about 0.001 to 0.002, so the returns from further increasing *K* appear modest.

In the right chart, increasing *s* (interpreted here as the step size of the HMC algorithm) also lowers the error. By 125 HMC steps the *s* = 10 curve (approximately 0.019) lies close to the e<sup>-opt</sup> line (approximately 0.018), which suggests that HMC approaches optimal performance for larger *s*.

The initial rapid decrease in error likely reflects the model fitting the training data, while the subsequent plateau or slow decline indicates convergence; its height measures how well the model generalizes to unseen data. The common starting point of all curves (approximately 0.048) suggests a shared initial state before training begins. Because the y-axis is logarithmic, equal vertical distances correspond to equal ratios of error, so the plots emphasize relative rather than absolute improvements.
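The effect of the logarithmic y-axis can be made concrete: on a log axis, the vertical distance between two values depends only on their ratio. The sketch below uses the approximate values quoted in this description and a hypothetical `decades` helper to compare the initial halving from 0.048 to 0.024 with the later drop from 0.024 to 0.019.

```python
import math


def decades(high, low):
    """Vertical distance between two positive values on a log10 axis, in decades."""
    return math.log10(high / low)


# Approximate readings from the figure description (not raw data).
start = 0.048    # common starting error of all curves
halfway = 0.024  # roughly where several curves sit after the initial drop
end = 0.019      # lowest final value reported (s = 10, right chart)

if __name__ == "__main__":
    print(f"{decades(start, halfway):.3f} decades for 0.048 -> 0.024")
    print(f"{decades(halfway, end):.3f} decades for 0.024 -> 0.019")
```

The halving spans about 0.301 decades and the later drop about 0.101, so on the plot the later drop takes up roughly a third of the height of the first, even though its absolute size (0.005) is only about a fifth of 0.024.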