TECHNICAL ASSET FINGERPRINT

9bfc0d5703337883bfe236bb

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Latent Analysis and Classifier Training and Jailbreak Mitigation at Inference

### Overview

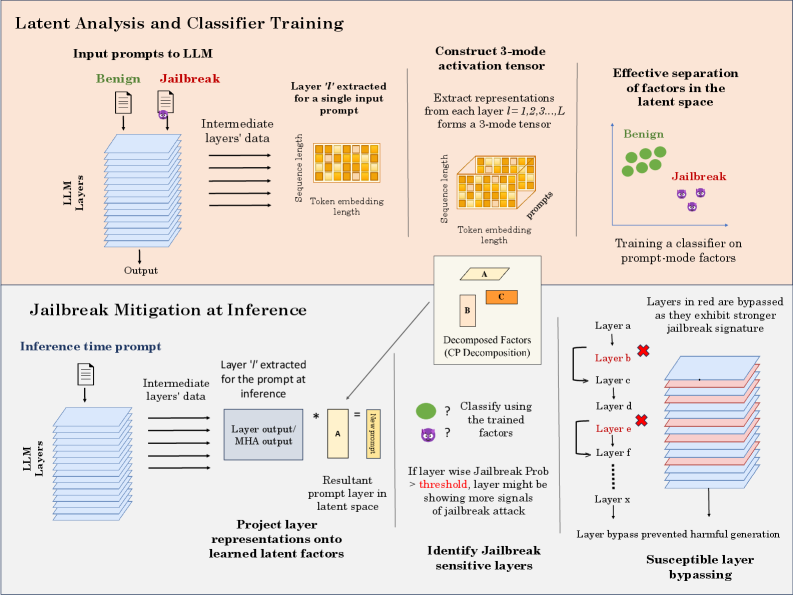

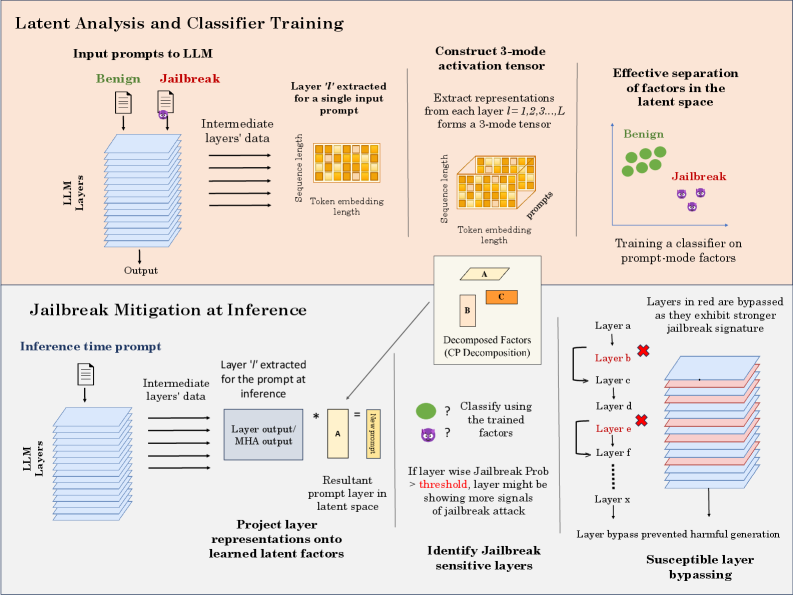

The image presents a diagram illustrating two main processes: "Latent Analysis and Classifier Training" and "Jailbreak Mitigation at Inference." The first process describes how a classifier is trained to distinguish between benign and jailbreak prompts based on latent factors extracted from an LLM. The second process outlines a method for mitigating jailbreak attempts during inference by identifying and bypassing sensitive layers.

### Components/Axes

**Latent Analysis and Classifier Training (Top Section):**

* **Input prompts to LLM:** Shows two input prompts, one labeled "Benign" (represented by a green document icon) and the other "Jailbreak" (represented by a purple document icon with a lock).

* **LLM Layers:** A stack of blue layers representing the layers of the Large Language Model (LLM).

* **Intermediate layers' data:** Arrows indicate data flow from the input prompts through the LLM layers.

* **Layer 'l' extracted for a single input prompt:** A yellow rectangle representing the extracted layer, labeled with "Sequence length" and "Token embedding length."

* **Construct 3-mode activation tensor:** A 3D cube composed of smaller yellow cubes, representing the activation tensor. Labeled with "Sequence length," "prompts," and "Token embedding length."

* **Effective separation of factors in the latent space:** A scatter plot showing two clusters: "Benign" (green circles) and "Jailbreak" (purple figures).

* **Decomposed Factors (CP Decomposition):** Three rectangles labeled A, B, and C. A is yellow, B is light red, and C is orange.

* **Training a classifier on prompt-mode factors:** Text indicating the purpose of the scatter plot.

**Jailbreak Mitigation at Inference (Bottom Section):**

* **Inference time prompt:** A document icon representing the input prompt.

* **LLM Layers:** A stack of blue layers representing the LLM layers.

* **Intermediate layers' data:** Arrows indicate data flow from the input prompt through the LLM layers.

* **Layer 'l' extracted for the prompt at inference:** A rectangle labeled "Layer output/MHA output."

* **Project layer representations onto learned latent factors:** Text describing the process.

* **Resultant prompt layer in latent space:** A rectangle labeled "New prompt."

* **Identify Jailbreak sensitive layers:** Text describing the goal of this section.

* **Classify using the trained factors:** Question marks next to a green circle and a purple figure, indicating classification.

* **If layer wise Jailbreak Prob > threshold, layer might be showing more signals of jailbreak attack:** Text describing the condition for identifying jailbreak-sensitive layers.

* **Susceptible layer bypassing:** Text describing the outcome of the process.

* **Layers in red are bypassed as they exhibit stronger jailbreak signature:** Text explaining the bypassing mechanism.

* **Layer bypass prevented harmful generation:** Text describing the benefit of layer bypassing.

* **Layer B, Layer E:** Layers marked in red with a cross, indicating they are bypassed.

* **Layer a, Layer c, Layer d, Layer f, Layer x:** Layers in blue.

### Detailed Analysis or Content Details

**Latent Analysis and Classifier Training:**

1. **Input Prompts:** The diagram starts with two types of input prompts: "Benign" and "Jailbreak." These prompts are fed into the LLM.

2. **LLM Layers:** The prompts pass through multiple layers of the LLM, represented by a stack of blue rectangles.

3. **Layer Extraction:** For a single input prompt, a specific layer 'l' is extracted. This layer is represented as a yellow rectangle, characterized by "Sequence length" and "Token embedding length."

4. **3-Mode Activation Tensor:** For each layer (l = 1, 2, 3, ..., L), the representations extracted across prompts are stacked into a 3-mode tensor with modes "Sequence length," "prompts," and "Token embedding length." This tensor is visualized as a 3D cube.

5. **Factor Separation:** The process aims to achieve effective separation of factors in the latent space. This is visualized as a scatter plot where "Benign" prompts cluster separately from "Jailbreak" prompts.

6. **Classifier Training:** A classifier is trained on these prompt-mode factors to distinguish between benign and jailbreak attempts.

7. **Decomposed Factors:** The activation tensor is decomposed via CP decomposition into the factor matrices A, B, and C.
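The tensor construction in steps 3 and 4 can be sketched as follows. This is a minimal illustration, not the method behind the diagram: the function name `build_activation_tensor`, the zero-padding/truncation to a fixed `seq_len`, and the mode order (prompts, sequence, embedding) are all assumptions made here for the example.

```python
import numpy as np

def build_activation_tensor(layer_outputs, seq_len):
    """Stack per-prompt outputs of one layer into a 3-mode tensor.

    Each entry of layer_outputs is a (tokens x embed_dim) array for one prompt.
    Prompts are truncated or zero-padded to seq_len (an assumption of this sketch).
    Returns an array of shape (num_prompts, seq_len, embed_dim).
    """
    embed_dim = layer_outputs[0].shape[1]
    tensor = np.zeros((len(layer_outputs), seq_len, embed_dim))
    for i, acts in enumerate(layer_outputs):
        n = min(seq_len, acts.shape[0])
        tensor[i, :n, :] = acts[:n]
    return tensor

# Hypothetical activations for three prompts of different lengths.
rng = np.random.default_rng(0)
prompts = [rng.normal(size=(t, 8)) for t in (5, 3, 7)]
X = build_activation_tensor(prompts, seq_len=6)
print(X.shape)
```

In practice one such tensor would presumably be built per layer, since the diagram refers to extracting representations from each layer l = 1, ..., L.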

**Jailbreak Mitigation at Inference:**

1. **Inference Time Prompt:** An input prompt is provided to the LLM during inference.

2. **LLM Layers:** The prompt passes through the LLM layers.

3. **Layer Extraction:** A specific layer 'l' is extracted for the prompt at inference. This layer's output is labeled "Layer output/MHA output."

4. **Projection onto Latent Factors:** The layer representations are projected onto learned latent factors.

5. **Resultant Prompt Layer:** This projection results in a "New prompt" layer in latent space.

6. **Jailbreak Detection:** The system classifies whether the prompt is a jailbreak attempt using the trained factors.

7. **Layer Bypassing:** If the layer-wise jailbreak probability exceeds a threshold, the layer is identified as jailbreak-sensitive and bypassed. Layers in red (Layer B, Layer E) are bypassed because they exhibit a stronger jailbreak signature.

8. **Harmful Generation Prevention:** Layer bypassing prevents harmful generation by avoiding the use of jailbreak-sensitive layers.
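The threshold rule in step 7 amounts to a simple filter over layer-wise probabilities. The sketch below assumes those probabilities are already available; the function name `sensitive_layers`, the probability values, and the threshold of 0.5 are hypothetical and chosen only so that layers b and e are flagged, as in the diagram.

```python
def sensitive_layers(layer_probs, threshold=0.5):
    """Return the names of layers whose jailbreak probability exceeds threshold."""
    return [name for name, prob in layer_probs.items() if prob > threshold]

# Illustrative layer-wise jailbreak probabilities (made up for this example).
probs = {"a": 0.12, "b": 0.91, "c": 0.30, "d": 0.08, "e": 0.77, "f": 0.25}
flagged = sensitive_layers(probs, threshold=0.5)
print(flagged)
```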

### Key Observations

* The diagram illustrates a two-stage process: training a classifier to detect jailbreak attempts and then using this classifier to mitigate jailbreak attempts during inference.

* The key idea is to identify and bypass layers that are highly sensitive to jailbreak attacks.

* The use of latent factor analysis allows for the separation of benign and jailbreak prompts in the latent space.

### Interpretation

The diagram presents a method for enhancing the safety and reliability of Large Language Models (LLMs) by mitigating jailbreak attempts. The approach involves training a classifier to distinguish between benign and malicious prompts based on latent factors extracted from the LLM's layers. During inference, the system identifies layers that are highly sensitive to jailbreak attacks and bypasses them, preventing the generation of harmful or inappropriate content.

The diagram highlights the importance of understanding the internal representations of LLMs and using this knowledge to improve their robustness. By identifying and bypassing jailbreak-sensitive layers, the system aims to reduce the risk of malicious use. The use of CP decomposition to decompose the activation tensor into factor matrices A, B, and C suggests a way to further analyze the underlying factors that contribute to jailbreak vulnerability.
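For reference, the standard rank-R CP model of a 3-mode tensor can be written as below. The notation is introduced here and is not taken from the diagram: the symbols for the tensor, the rank R, and the columns of A, B, and C are assumptions of this note, and which factor matrix belongs to which mode is not stated in the diagram.

$$
\mathcal{X} \approx \sum_{r=1}^{R} \mathbf{a}_r \circ \mathbf{b}_r \circ \mathbf{c}_r,
\qquad
x_{ijk} \approx \sum_{r=1}^{R} a_{ir}\, b_{jr}\, c_{kr}
$$

Here $\mathbf{a}_r$, $\mathbf{b}_r$, and $\mathbf{c}_r$ are the r-th columns of A, B, and C, and $\circ$ denotes the outer product.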


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Latent Analysis and Classifier Training & Jailbreak Mitigation at Inference

### Overview

This diagram illustrates a two-part process: first, the latent analysis and training of a classifier to distinguish between benign and jailbreak prompts to a Large Language Model (LLM); and second, the application of this classifier during inference to mitigate jailbreak attacks. The diagram uses a series of stacked blocks representing LLM layers, and visual representations of data transformations.

### Components/Axes

The diagram is divided into two main sections: "Latent Analysis and Classifier Training" (top) and "Jailbreak Mitigation at Inference" (bottom). Each section contains several components:

* **LLM Layers:** Represented as stacked rectangular blocks in teal.

* **Input Prompts:** "Benign" and "Jailbreak" prompts are shown as input to the LLM.

* **Intermediate Layers' Data:** Represented as stacked rectangular blocks in grey.

* **Layer 'l' extracted for a single input prompt:** A rectangular block representing a specific layer's output.

* **3-mode activation tensor:** A 3D representation of the extracted layer data.

* **Decomposed Factors (CP Decomposition):** Represented as blocks labeled A, B, and C.

* **Resultant prompt layer in latent space:** A rectangular block representing the projected prompt.

* **Classifier:** Represented as a question mark within a circle.

* **Layers in red are bypassed:** Layers highlighted in red, indicating they are bypassed during inference.

* **Susceptible layer bypassing:** A visual representation of bypassed layers.

The diagram also includes labels for key concepts like "Sequence length", "Token embedding length", "Effective separation of factors in the latent space", "Training a classifier on prompt-mode factors", "Identify Jailbreak sensitive layers", and "Layer bypass prevented harmful generation".

### Detailed Analysis or Content Details

**Latent Analysis and Classifier Training (Top Section):**

1. **Input Prompts:** Two types of input prompts are shown: "Benign" (green checkmark) and "Jailbreak" (red lock).

2. **LLM Layers:** The LLM is represented by a stack of teal blocks.

3. **Intermediate Layers' Data:** The output of the LLM layers is represented by a stack of grey blocks.

4. **Layer 'l' extracted for a single input prompt:** A single layer's output is extracted for analysis. The dimensions are labeled "Sequence length" and "Token embedding length".

5. **Construct 3-mode activation tensor:** The extracted layer data is transformed into a 3-mode tensor, visualized as a 3D block with dimensions "Sequence length", "Token embedding length", and "prompts".

6. **Decomposed Factors (CP Decomposition):** The 3-mode tensor is decomposed into factors A, B, and C.

7. **Effective separation of factors in the latent space:** Benign prompts are represented as green dots clustered together, while Jailbreak prompts are represented as red dots clustered separately. This indicates successful separation in the latent space.

8. **Training a classifier on prompt-mode factors:** The decomposed factors are used to train a classifier to distinguish between benign and jailbreak prompts.
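Step 6 can be illustrated with a small alternating least squares (ALS) routine for CP decomposition. This is a generic textbook-style sketch in NumPy, not the implementation behind the diagram; the function names, the iteration count, the random initialization, and the synthetic rank-2 tensor are assumptions made for the example.

```python
import numpy as np

def khatri_rao(U, V):
    """Column-wise Kronecker product: (I x R), (J x R) -> (I*J x R)."""
    return (U[:, None, :] * V[None, :, :]).reshape(-1, U.shape[1])

def unfold(X, mode):
    """Mode-n unfolding, with the remaining modes flattened in C order."""
    return np.moveaxis(X, mode, 0).reshape(X.shape[mode], -1)

def cp_als(X, rank, n_iter=500, seed=1):
    """Fit a rank-`rank` CP model to a 3-mode tensor by alternating least squares."""
    rng = np.random.default_rng(seed)
    factors = [rng.normal(size=(dim, rank)) for dim in X.shape]
    for _ in range(n_iter):
        for mode in range(3):
            first, second = [factors[m] for m in range(3) if m != mode]
            kr = khatri_rao(first, second)
            # Gram matrix of the Khatri-Rao product via the Hadamard identity.
            gram = (first.T @ first) * (second.T @ second)
            factors[mode] = unfold(X, mode) @ kr @ np.linalg.pinv(gram)
    return factors

# Synthetic exact rank-2 tensor standing in for an activation tensor.
rng = np.random.default_rng(0)
A0, B0, C0 = (rng.normal(size=(n, 2)) for n in (6, 5, 4))
X = np.einsum("ir,jr,kr->ijk", A0, B0, C0)

A, B, C = cp_als(X, rank=2)
X_hat = np.einsum("ir,jr,kr->ijk", A, B, C)
rel_err = np.linalg.norm(X - X_hat) / np.linalg.norm(X)
print(A.shape, B.shape, C.shape, rel_err)
```

The factor matrix for the prompt mode has one row per prompt, which is what a downstream classifier would consume as features.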

**Jailbreak Mitigation at Inference (Bottom Section):**

1. **Inference time prompt:** A single prompt is input to the LLM during inference.

2. **LLM Layers:** The LLM is again represented by a stack of teal blocks.

3. **Intermediate Layers' Data:** The output of the LLM layers is represented by a stack of grey blocks.

4. **Layer output/MHA output:** The output of a specific layer is extracted.

5. **Resultant prompt layer in latent space:** The layer output is projected onto the learned latent factors.

6. **Classify using the trained factors:** The projected prompt is classified using the trained classifier. A question mark indicates the classification result.

7. **If layer wise Jailbreak Prob > threshold, layer might be showing more signals of jailbreak attack:** A conditional statement indicating that if the jailbreak probability for a layer exceeds a threshold, the layer is considered susceptible to jailbreak attacks.

8. **Layers in red are bypassed:** Layers identified as susceptible to jailbreak attacks are bypassed (highlighted in red).

9. **Layer bypass prevented harmful generation:** The diagram states that bypassing these layers prevents harmful generation.
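The bypass in steps 8 and 9 can be pictured as skipping a layer so that its input passes through unchanged. The diagram does not say how bypassing is implemented, so treating it as an identity skip is an assumption of this sketch, as are the function name `run_layers` and the toy arithmetic layers.

```python
def run_layers(hidden, layers, bypass=frozenset()):
    """Apply named layers in order, skipping any whose name is in `bypass`."""
    for name, layer_fn in layers:
        if name in bypass:
            continue  # bypassed layer: hidden state passes through unchanged
        hidden = layer_fn(hidden)
    return hidden

# Toy stand-ins for model layers (hypothetical, for illustration only).
layers = [
    ("a", lambda h: h + 1.0),
    ("b", lambda h: h * 10.0),
    ("c", lambda h: h + 2.0),
]

full = run_layers(0.0, layers)
skipped = run_layers(0.0, layers, bypass={"b"})
print(full, skipped)
```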

### Key Observations

* The diagram highlights the importance of latent space analysis for identifying and mitigating jailbreak attacks.

* The use of CP decomposition to extract factors from the LLM layers is a key step in the process.

* The classifier is trained to distinguish between benign and jailbreak prompts based on these factors.

* During inference, the classifier is used to identify susceptible layers, which are then bypassed to prevent harmful generation.

* The diagram visually emphasizes the separation of benign and jailbreak prompts in the latent space.
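As a rough illustration of training a classifier on prompt-mode factors, the sketch below fits a logistic regression by gradient descent on synthetic, well-separated clusters. The diagram does not name a classifier type; logistic regression, the learning rate, the iteration count, and the synthetic features are all assumptions chosen for a self-contained example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_logistic(features, labels, lr=0.5, n_iter=2000):
    """Fit logistic regression by batch gradient descent; returns (weights, bias)."""
    w = np.zeros(features.shape[1])
    b = 0.0
    for _ in range(n_iter):
        err = sigmoid(features @ w + b) - labels
        w -= lr * (features.T @ err) / len(labels)
        b -= lr * err.mean()
    return w, b

# Synthetic stand-ins for rows of the prompt-mode factor matrix (rank 3).
rng = np.random.default_rng(0)
benign = rng.normal(loc=-2.0, scale=0.5, size=(20, 3))
jailbreak = rng.normal(loc=2.0, scale=0.5, size=(20, 3))
features = np.vstack([benign, jailbreak])
labels = np.concatenate([np.zeros(20), np.ones(20)])  # 0 = benign, 1 = jailbreak

w, b = train_logistic(features, labels)
accuracy = np.mean((sigmoid(features @ w + b) > 0.5) == labels)
print(accuracy)
```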

### Interpretation

The diagram demonstrates a method for enhancing the security of LLMs against jailbreak attacks. By analyzing the latent representations of prompts, the system can identify layers that are vulnerable to manipulation and bypass them during inference, with the aim of preventing harmful or unintended outputs. CP decomposition appears to serve as a dimensionality reduction step that simplifies analysis of the high-dimensional latent space. The classifier acts as a gatekeeper, identifying potentially harmful prompts and triggering the bypassing mechanism.

The diagram suggests a proactive approach to security rather than reliance on reactive measures alone. The jailbreak probability threshold is a tunable parameter that controls the sensitivity of the mitigation system. The diagram is a conceptual illustration: it gives no numerical data or performance metrics and no details of the specific algorithms or implementation.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Technical Process Diagram: Latent Analysis and Classifier Training for LLM Jailbreak Detection and Mitigation

### Overview

This diagram illustrates a two-phase technical framework for detecting and mitigating "jailbreak" attacks on Large Language Models (LLMs). The top phase focuses on **offline training** of a classifier using latent space analysis of model activations. The bottom phase describes the **inference-time application** of this classifier to identify and bypass model layers susceptible to jailbreak attacks, thereby preventing harmful output generation.

### Components/Axes & Process Flow

The diagram is divided into two primary horizontal sections:

**1. Top Section: Latent Analysis and Classifier Training (Offline Phase)**

* **Input:** "Input prompts to LLM" are shown as two document icons, labeled **"Benign"** (green) and **"Jailbreak"** (purple with a skull icon).

* **Process:**

    * These prompts are fed into an "LLM Layers" stack (represented as a blue 3D block).

    * "Intermediate layers' data" is extracted. A callout specifies: "Layer *l* extracted for a single input prompt," showing a 2D grid with axes "Sequence length" and "Token embedding length."

    * This extraction is repeated: "Extract representations from each layer *l = 1,2,3...L* forms a 3-mode tensor." A 3D grid visualizes this tensor with axes: "Sequence length," "Token embedding length," and "prompts."

    * The tensor undergoes "Decomposed Factors (CP Decomposition)," visualized as three factor matrices labeled **A**, **B**, and **C**.

* **Output/Goal:** "Effective separation of factors in the latent space." A 2D scatter plot shows green circles ("Benign") clustered separately from purple skull icons ("Jailbreak"). The final step is "Training a classifier on prompt-mode factors."

**2. Bottom Section: Jailbreak Mitigation at Inference (Deployment Phase)**

* **Input:** An "Inference time prompt" (document icon) is fed into the same "LLM Layers" stack.

* **Process:**

    * "Layer *l* extracted for the prompt at inference." The extracted "Layer output/ MHA output" is multiplied (`*`) with the pre-trained factor matrix **A**.

    * This results in a "Resultant prompt layer in latent space," shown as a vector labeled "latent factors."

    * The system will "Project layer representations onto learned latent factors."

    * Next, it will "Classify using the trained factors" (green circle and purple skull icons with question marks).

    * A decision rule is stated: "If layer wise Jailbreak Prob > threshold, layer might be showing more signals of jailbreak attack."

    * This leads to "Identify Jailbreak sensitive layers." A list shows layers `a` through `x`. Layers **b** and **e** are highlighted in red with a red 'X', indicating they are "bypassed as they exhibit stronger jailbreak signature."

    * The final step is "Susceptible layer bypassing," showing the LLM layer stack with layers `b` and `e` visually skipped (red lines bypass them), leading to "Layer bypass prevented harmful generation."
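One plausible reading of the projection step is a least-squares fit of a new layer output against the learned factors, yielding latent coordinates for the prompt. The diagram only shows a multiplication with a matrix labeled A and does not say which mode each factor matrix belongs to, so the sketch below is an assumption: it takes `B` and `C` to be the sequence-mode and embedding-mode factors and solves for the prompt-mode coefficients. All names are hypothetical.

```python
import numpy as np

def khatri_rao(U, V):
    """Column-wise Kronecker product: (I x R), (J x R) -> (I*J x R)."""
    return (U[:, None, :] * V[None, :, :]).reshape(-1, U.shape[1])

def project_prompt(layer_output, B, C):
    """Least-squares latent coefficients for one (seq_len x embed_dim) layer output."""
    design = khatri_rao(B, C)  # shape (seq_len * embed_dim, rank)
    coeffs, *_ = np.linalg.lstsq(design, layer_output.reshape(-1), rcond=None)
    return coeffs

# Synthetic check: build a layer output from known coefficients and recover them.
rng = np.random.default_rng(0)
B = rng.normal(size=(6, 3))   # assumed sequence-mode factors
C = rng.normal(size=(5, 3))   # assumed embedding-mode factors
true_coeffs = np.array([2.0, -1.0, 0.5])
layer_output = np.einsum("r,jr,kr->jk", true_coeffs, B, C)

recovered = project_prompt(layer_output, B, C)
print(recovered)
```

The recovered vector plays the same role as a row of the prompt-mode factor matrix, so it can be passed to a classifier trained on those rows.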

### Detailed Analysis / Content Details

* **Key Technical Terms:** LLM (Large Language Model), MHA (Multi-Head Attention), CP Decomposition (CANDECOMP/PARAFAC), Latent Space, 3-mode Tensor, Jailbreak Attack.

* **Data Flow:** The process is a pipeline: Raw Prompts -> Layer-wise Activation Extraction -> Tensor Construction -> Decomposition -> Classifier Training -> Inference-time Projection -> Classification -> Layer Identification -> Conditional Bypassing.

* **Visual Coding:**

    * **Color:** Green consistently represents "Benign" data/behavior. Purple represents "Jailbreak" data/behavior. Red is used for layers identified as sensitive/bypassed.

    * **Icons:** Document icons for prompts. Skull icon for jailbreak. 'X' marks for bypassed layers.

    * **Spatial Layout:** The training phase flows left-to-right on top. The inference phase flows left-to-right on the bottom, with a central connection showing the use of the pre-trained factor matrix **A**.

### Key Observations

1. **Layer-Specific Analysis:** The method does not treat the LLM as a black box. It analyzes activations at *each intermediate layer* (`l = 1,2,3...L`) to find granular attack signatures.

2. **Factor Decomposition:** The core innovation is using tensor decomposition (CP) on the 3-mode activation tensor (sequence × embedding × prompt) to disentangle the underlying factors that distinguish benign from jailbreak prompts.

3. **Dynamic Bypassing:** Mitigation is not a static filter. It dynamically identifies which specific layers in the model are most activated by a given suspicious prompt at inference time and bypasses only those layers.

4. **Threshold-Based Decision:** The system uses a probabilistic threshold ("Jailbreak Prob > threshold") to decide on layer bypassing, introducing a tunable sensitivity parameter.

### Interpretation

This diagram outlines a sophisticated, **proactive defense mechanism** against adversarial attacks on LLMs. The underlying premise is that jailbreak prompts cause the model's internal representations (activations) to deviate from a "benign" manifold in a predictable way that can be captured in the latent space of layer activations.

* **What it demonstrates:** It shows a complete pipeline from offline analysis to real-time defense. The key insight is that jailbreak attacks leave a "signature" not just in the final output, but in the *process* of computation across specific layers. By learning this signature via tensor factorization, the system can detect it early.

* **How elements relate:** The top section (training) creates the "detector" (the classifier and factor matrices). The bottom section (inference) is the "deployment" where this detector is applied to new prompts. The critical link is the projection of new layer outputs onto the learned latent factors (**A**), allowing for comparison with the trained classifier.

* **Notable Implications:**

    * **Efficiency:** Bypassing only sensitive layers could be more computationally efficient than re-running the prompt or using a separate large classifier model.

    * **Adaptability:** The classifier can be retrained as new jailbreak techniques emerge, updating the latent factor understanding.

    * **Transparency:** This method provides some interpretability: by identifying *which* layers are sensitive, developers might gain insight into *how* the model is being manipulated.

* **Potential Limitation:** The effectiveness hinges on the assumption that future jailbreak attacks will project onto the same latent factors learned during training. Novel attacks might evade detection. The threshold setting also presents a trade-off between false positives (blocking benign prompts) and false negatives (missing jailbreaks).
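The threshold trade-off mentioned above can be made concrete by sweeping the threshold over a labeled validation set and recording both error rates. The scores and labels below are invented for illustration, and the function name `error_rates` is hypothetical.

```python
import numpy as np

def error_rates(probs, labels, threshold):
    """Return (false positive rate, false negative rate) at a given threshold."""
    probs = np.asarray(probs)
    labels = np.asarray(labels)
    flagged = probs > threshold
    fpr = np.mean(flagged[labels == 0])    # benign prompts wrongly flagged
    fnr = np.mean(~flagged[labels == 1])   # jailbreak prompts missed
    return float(fpr), float(fnr)

# Made-up jailbreak probabilities: four benign (0) and four jailbreak (1) prompts.
probs = [0.05, 0.20, 0.40, 0.60, 0.55, 0.70, 0.90, 0.95]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

for t in (0.3, 0.5, 0.8):
    print(t, error_rates(probs, labels, t))
```

Raising the threshold in this toy data lowers the false positive rate while raising the false negative rate, which is the trade-off the limitation describes.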


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Latent Analysis and Classifier Training for Jailbreak Mitigation

### Overview

The diagram illustrates a two-phase process for detecting and mitigating jailbreak attacks in large language models (LLMs). It combines latent space analysis, classifier training on prompt-mode factors, and inference-time mitigation to identify and bypass jailbreak-sensitive layers.

---

### Components/Axes

1. **Top Section: Latent Analysis and Classifier Training**

    - **Input Prompts to LLM**:

        - Two input types: *Benign* (green) and *Jailbreak* (red) prompts.

        - Arrows show data flow through *LLM Layers* to an *Output*.

    - **Intermediate Layers' Data**:

        - Extracted from a specific layer (`Layer 'l'`) for a single input prompt.

        - Visualized as a 2D grid with dimensions: *Sequence length* (rows) and *Token embedding length* (columns).

    - **3-Mode Activation Tensor**:

        - Constructed by extracting representations from each layer (`l=1,2,3,...,L`).

        - Forms a 3D tensor with dimensions: *Sequence length*, *Token embedding length*, and *prompts*.

    - **Effective Separation of Factors**:

        - Visualized as clusters in latent space: *Benign* (green dots) and *Jailbreak* (purple dots).

        - A classifier is trained on *prompt-mode factors* to distinguish these clusters.

2. **Bottom Section: Jailbreak Mitigation at Inference**

    - **Inference-Time Prompt**:

        - Input prompt processed through *LLM Layers*.

        - Intermediate data extracted from `Layer 'l'` and multiplied by matrix **A** to form a *New Prompt Layer*.

    - **Decomposed Factors (CP Decomposition)**:

        - Visualized as a 3D tensor decomposed into factors **A**, **B**, and **C**.

    - **Project Layer Representations**:

        - Outputs are projected onto *learned latent factors* for classification.

    - **Identify Jailbreak-Sensitive Layers**:

        - If `Jailbreak Prob > threshold`, the layer is flagged as sensitive.

    - **Susceptible Layer Bypassing**:

        - Layers marked in red (e.g., `Layer b`, `Layer e`) are bypassed during inference to prevent harmful outputs.

---

### Detailed Analysis

- **Latent Space Separation**:

    - Benign and jailbreak prompts are separated in latent space, enabling classifier training on prompt-mode factors.

- **3-Mode Tensor Construction**:

    - Captures multi-dimensional interactions between sequence position, token embeddings, and prompts.

- **Inference Mitigation**:

    - Matrix **A** transforms intermediate layer outputs into a new prompt layer, which is analyzed for jailbreak signals.

    - Layers with high jailbreak probability are bypassed to avoid harmful outputs.

---

### Key Observations

1. **Layer Sensitivity**:

    - Certain layers (marked in red) exhibit stronger jailbreak signatures and are bypassed during inference.

2. **Classifier Training**:

    - The classifier uses prompt-mode factors derived from the 3-mode tensor to distinguish benign from jailbreak inputs.

3. **Decomposition**:

    - CP decomposition breaks down the activation tensor into interpretable factors (**A**, **B**, **C**), aiding in mitigation.

---

### Interpretation

This diagram demonstrates a defense mechanism against jailbreak attacks by leveraging latent space analysis. During training, the model learns to separate benign and malicious prompts in latent space. At inference, it dynamically identifies sensitive layers and bypasses them to prevent harmful outputs. The use of CP decomposition suggests an emphasis on interpretability, allowing the system to isolate and neutralize jailbreak signals effectively. The red-marked layers likely represent critical points where jailbreak attempts manifest, making them prime candidates for bypassing.
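Putting the inference-time pieces together, a layer-wise scoring loop might look like the sketch below. It is an assumption-laden illustration rather than the diagram's method: each layer is given its own hypothetical factor matrices, a single shared logistic classifier (`w`, `b`) is assumed, and the layer outputs are synthesized from chosen latent coefficients so the outcome is easy to follow.

```python
import numpy as np

def khatri_rao(U, V):
    """Column-wise Kronecker product: (I x R), (J x R) -> (I*J x R)."""
    return (U[:, None, :] * V[None, :, :]).reshape(-1, U.shape[1])

def layer_jailbreak_prob(layer_output, B, C, w, b):
    """Project one layer output onto its factors, then score it with a logistic model."""
    design = khatri_rao(B, C)
    coeffs, *_ = np.linalg.lstsq(design, layer_output.reshape(-1), rcond=None)
    return 1.0 / (1.0 + np.exp(-(coeffs @ w + b)))

rng = np.random.default_rng(1)
rank, seq_len, embed_dim = 2, 4, 5
w, b = np.ones(rank), 0.0  # hypothetical trained classifier parameters

# Hypothetical latent coefficients used to synthesize each layer's output.
latent = {"a": [-1.0, -1.0], "b": [2.0, 1.0], "c": [0.0, -0.5]}

flagged = []
for name, coeffs in latent.items():
    B = rng.normal(size=(seq_len, rank))    # assumed per-layer sequence-mode factors
    C = rng.normal(size=(embed_dim, rank))  # assumed per-layer embedding-mode factors
    output = np.einsum("r,jr,kr->jk", np.array(coeffs), B, C)
    if layer_jailbreak_prob(output, B, C, w, b) > 0.5:
        flagged.append(name)

print(flagged)
```

Only layer `b` has latent coefficients that push the logistic score above 0.5 in this toy setup, so it is the only layer marked for bypassing.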
