## Stacked Bar Chart: Token Allocation by Expert Across Layers

### Overview

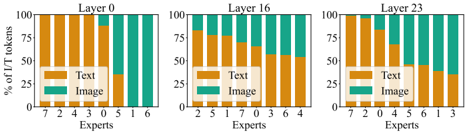

The image shows three stacked bar charts arranged horizontally, one per layer (Layer 0, Layer 16, Layer 23) of a neural network or similar model. For each numbered expert, a chart shows the percentage of "TT tokens" attributed to "Text" versus "Image". The figure does not expand the abbreviation "TT"; it may stand for "Text-Text" or another specific token type. Across the three panels, the allocation shifts from text-dominant in Layer 0 to roughly balanced or image-heavy in Layer 23.

### Components/Axes

* **Chart Type:** Stacked Bar Charts (3 panels).

* **Panel Titles (Top Center):** "Layer 0", "Layer 16", "Layer 23".

* **Y-Axis (Left Side):** Label: "% of TT tokens". Scale: 0, 25, 50, 75, 100 (percentages).

* **X-Axis (Bottom):** Label: "Experts". Each bar is labeled with an expert number below it.

* **Legend (Bottom Right of each panel):** A two-color key.

    * Orange/Gold box: "Text"

    * Teal/Green box: "Image"

* **Data Series:** Each bar is a stack of two segments: the lower orange segment represents the percentage of tokens for "Text", and the upper teal segment represents the percentage for "Image".
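As a rough illustration of how stacked segments like these could be derived, the sketch below converts a list of per-token routing records into per-expert Text/Image percentages. It assumes each bar shows the modality split of the tokens routed to one expert, which the figure does not confirm. The function name `modality_share_per_expert` and the toy routing log are hypothetical and are not taken from the figure or from any specific library.

```python
from collections import Counter

MODALITIES = ("Text", "Image")

def modality_share_per_expert(assignments):
    """Return {expert_id: {"Text": pct, "Image": pct}} from (expert_id, modality) pairs.

    Hypothetical helper: assumes each token is routed to exactly one expert
    and carries exactly one of the two modality labels.
    """
    for _, modality in assignments:
        if modality not in MODALITIES:
            raise ValueError(f"unexpected modality: {modality!r}")

    counts = Counter(assignments)  # (expert_id, modality) -> token count
    shares = {}
    for expert in sorted({e for e, _ in assignments}):
        text = counts[(expert, "Text")]
        image = counts[(expert, "Image")]
        total = text + image  # > 0 because the expert appears in the log
        shares[expert] = {
            "Text": 100.0 * text / total,
            "Image": 100.0 * image / total,
        }
    return shares

# Toy routing log for illustration only; these are not values from the figure.
toy_assignments = (
    [(0, "Text")] * 3 + [(0, "Image")] * 1
    + [(1, "Text")] * 1 + [(1, "Image")] * 1
)
toy_shares = modality_share_per_expert(toy_assignments)
print(toy_shares)
```

Each expert's two percentages sum to 100, which matches the 0-100 scale of the y-axis.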

### Detailed Analysis

**Layer 0 (Left Panel):**

* **Experts (Left to Right):** 7, 1, 4, 3, 5, 2, 1, 6. *(Note: Expert "1" appears twice).*

* **Trend:** The "Text" (orange) segment dominates all bars, occupying roughly 60-95% of the token allocation. The "Image" (teal) segment is the smaller portion at the top of every bar.

* **Approximate Data Points (Text %, Image %):**

    * Expert 7: ~95% Text, ~5% Image.

    * Expert 1 (first): ~90% Text, ~10% Image.

    * Expert 4: ~85% Text, ~15% Image.

    * Expert 3: ~80% Text, ~20% Image.

    * Expert 5: ~75% Text, ~25% Image.

    * Expert 2: ~70% Text, ~30% Image.

    * Expert 1 (second): ~65% Text, ~35% Image.

    * Expert 6: ~60% Text, ~40% Image.

**Layer 16 (Middle Panel):**

* **Experts (Left to Right):** 2, 5, 7, 0, 3, 6, 4.

* **Trend:** The "Image" (teal) segment is significantly larger than in Layer 0. The allocation is more varied, with some experts still text-heavy and others becoming image-heavy.

* **Approximate Data Points (Text %, Image %):**

    * Expert 2: ~80% Text, ~20% Image.

    * Expert 5: ~75% Text, ~25% Image.

    * Expert 7: ~70% Text, ~30% Image.

    * Expert 0: ~55% Text, ~45% Image.

    * Expert 3: ~50% Text, ~50% Image.

    * Expert 6: ~45% Text, ~55% Image.

    * Expert 4: ~40% Text, ~60% Image.

**Layer 23 (Right Panel):**

* **Experts (Left to Right):** 7, 1, 0, 2, 5, 6, 1, 3. *(Note: Expert "1" appears twice again).*

* **Trend:** The "Image" (teal) segment is now dominant or co-dominant for most experts. The shift towards image processing is most pronounced here.

* **Approximate Data Points (Text %, Image %):**

    * Expert 7: ~70% Text, ~30% Image.

    * Expert 1 (first): ~65% Text, ~35% Image.

    * Expert 0: ~50% Text, ~50% Image.

    * Expert 2: ~45% Text, ~55% Image.

    * Expert 5: ~40% Text, ~60% Image.

    * Expert 6: ~35% Text, ~65% Image.

    * Expert 1 (second): ~30% Text, ~70% Image.

    * Expert 3: ~25% Text, ~75% Image.
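To make the layer-to-layer shift easier to compare, the following sketch summarizes the approximate readings listed above. The values are the visual estimates from this section, not exact data, and the mean is an unweighted average over bars: the figure does not show how many tokens each expert receives, so this is not an overall token share. The variable names are illustrative.

```python
from statistics import mean

# Approximate Text % per bar, left to right; Image % is 100 minus Text %.
text_pct = {
    "Layer 0":  [95, 90, 85, 80, 75, 70, 65, 60],
    "Layer 16": [80, 75, 70, 55, 50, 45, 40],
    "Layer 23": [70, 65, 50, 45, 40, 35, 30, 25],
}

summary = {}
for layer, values in text_pct.items():
    avg_text = mean(values)
    summary[layer] = {
        "mean_text": avg_text,
        "mean_image": 100 - avg_text,
        # Bars where the Image segment is strictly larger than the Text segment.
        "image_majority_bars": sum(1 for v in values if v < 50),
    }

for layer, stats in summary.items():
    print(
        f"{layer}: mean Text ~{stats['mean_text']:.1f}%, "
        f"mean Image ~{stats['mean_image']:.1f}%, "
        f"image-majority bars: {stats['image_majority_bars']}"
    )
```

With these estimates, the per-bar mean Text share is about 77.5% in Layer 0, 59.3% in Layer 16, and 45.0% in Layer 23, and the number of image-majority bars rises from 0 to 2 to 5.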

### Key Observations

1. **Layer-Dependent Specialization:** The text share falls from Layer 0 through Layer 16 to Layer 23. In Layer 0 every expert is text-dominant (~60-95% Text), whereas in Layer 23 six of the eight bars are at or above ~50% Image.

2. **Expert Heterogeneity:** Within each layer, different experts show different allocation ratios, indicating functional specialization among experts even at the same depth.

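3. **Duplicate Expert Labels:** The expert number "1" appears twice in both Layer 0 and Layer 23, and each of those panels is missing one ID from the 0-7 range seen across the panels (Expert 0 in Layer 0, Expert 4 in Layer 23). A mislabeled or misread tick label is therefore a plausible explanation, although two distinct experts sharing an ID, or sub-modules within the same expert, cannot be ruled out from the figure alone. Layer 16 lists only seven experts, with no Expert 1.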

4. **Partial Reordering of Experts:** Expert 7 has the highest text allocation in both Layer 0 (~95%) and Layer 23 (~70%). Expert 3, by contrast, is text-heavy in Layer 0 (~80% Text) but has the highest image allocation in Layer 23 (~75% Image). This suggests that the ranking of experts by modality share changes across layers. The comparison assumes that expert IDs are comparable across layers, which the figure does not establish.
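The duplicate-label and ranking observations can be checked mechanically. The sketch below uses the approximate readings from the Detailed Analysis section, flags labels that occur more than once in a panel, and compares text-share ranks only for labels that are unambiguous in both Layer 0 and Layer 23. The helper names are illustrative, and treating the same label in two layers as "the same expert" is an assumption.

```python
from collections import Counter

# (expert label, approximate Text %) in left-to-right order, as transcribed.
layer0 = [(7, 95), (1, 90), (4, 85), (3, 80), (5, 75), (2, 70), (1, 65), (6, 60)]
layer23 = [(7, 70), (1, 65), (0, 50), (2, 45), (5, 40), (6, 35), (1, 30), (3, 25)]

def duplicate_labels(bars):
    """Return the sorted labels that appear on more than one bar."""
    counts = Counter(label for label, _ in bars)
    return sorted(label for label, n in counts.items() if n > 1)

def text_rank(bars, keep):
    """Rank labels in `keep` by Text % (1 = most text-heavy)."""
    kept = sorted((b for b in bars if b[0] in keep), key=lambda b: -b[1])
    return {label: i + 1 for i, (label, _) in enumerate(kept)}

dups0, dups23 = duplicate_labels(layer0), duplicate_labels(layer23)

# Compare only labels that are unambiguous and present in both layers.
unique0 = {label for label, _ in layer0} - set(dups0)
unique23 = {label for label, _ in layer23} - set(dups23)
shared = unique0 & unique23

rank0, rank23 = text_rank(layer0, shared), text_rank(layer23, shared)
for label in sorted(shared, key=rank0.get):
    print(f"Expert {label}: rank {rank0[label]} in Layer 0 -> rank {rank23[label]} in Layer 23")
```

Among the five labels that can be compared (2, 3, 5, 6, 7), Expert 7 stays at rank 1 while Expert 3 drops from rank 2 to rank 5, so the reordering is partial rather than a full inversion.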

### Interpretation

This visualization is consistent with depth-dependent processing within a multimodal model. The data suggest that:

* **Early Layers (e.g., Layer 0)** are primarily engaged in processing textual information, likely extracting basic linguistic features. The minimal image token allocation here might represent initial visual feature grounding or cross-modal alignment.

* **Intermediate Layers (e.g., Layer 16)** show a transition where visual information becomes increasingly important. This could correspond to stages where the model integrates textual and visual features for more complex understanding.

* **Deep Layers (e.g., Layer 23)** show the highest image share, with most experts at or above ~50% Image. This may indicate that high-level reasoning, scene understanding, or generation in the model relies more on visual representations built up in earlier layers.

The variation among experts within a layer is consistent with a **"Mixture of Experts" (MoE)** architecture, where different sub-networks (experts) specialize in different types of data or tasks. The allocation is not static but changes with depth, moving from a text-centric split to a more balanced or image-centric one. The duplicate expert IDs warrant further investigation before drawing conclusions about the model's exact architecture.