## Pie Chart: Accuracy of GPT-4 Description (Conditional on Q1 Answer)

### Overview

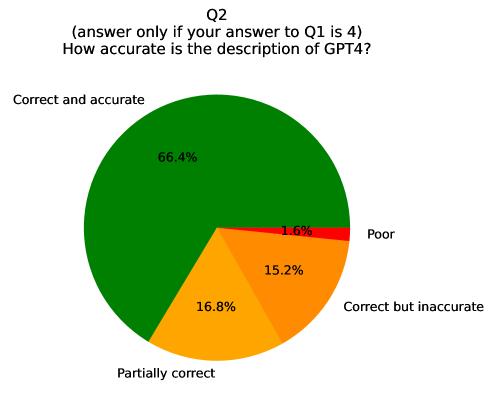

This image displays a pie chart representing survey results for a specific question (Q2). The question is conditional, asking respondents to answer only if their answer to a previous question (Q1) was "4." The core question is: "How accurate is the description of GPT4?" The chart visualizes the distribution of responses across four categories of accuracy.

### Components/Axes

* **Chart Type:** Pie Chart.

* **Title:** "Q2 (answer only if your answer to Q1 is 4) How accurate is the description of GPT4?"

* **Data Series (Segments):** The chart is divided into four colored segments, each representing a response category. The legend is provided as labels placed directly adjacent to their corresponding slices.

* **Segment Labels & Percentages:**

1. **Correct and accurate** (Green slice, positioned on the left side of the pie): 66.4%

2. **Partially correct** (Orange slice, positioned at the bottom of the pie): 16.8%

3. **Correct but inaccurate** (Orange slice, positioned on the lower-right side of the pie): 15.2%

4. **Poor** (Red slice, positioned on the right side of the pie, adjacent to the "Correct and accurate" slice): 1.6%
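As a quick consistency check on the labels above, the four percentages can be encoded and summed. This is a minimal sketch: the names `SEGMENTS`, `total_share`, and `ranked` are illustrative and not part of the survey, and the values are simply those read from the chart labels. `Decimal` is used so the sum is exact rather than subject to floating-point rounding.

```python
from decimal import Decimal

# Segment values as read from the chart labels (percent of Q2 responses).
# The dictionary name and helpers below are illustrative assumptions.
SEGMENTS = {
    "Correct and accurate": Decimal("66.4"),
    "Partially correct": Decimal("16.8"),
    "Correct but inaccurate": Decimal("15.2"),
    "Poor": Decimal("1.6"),
}


def total_share(segments):
    """Sum all segment percentages exactly (Decimal avoids float rounding)."""
    return sum(segments.values(), Decimal("0"))


def ranked(segments):
    """Return (label, percent) pairs ordered from largest to smallest slice."""
    return sorted(segments.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    print("total:", total_share(SEGMENTS))
    for label, pct in ranked(SEGMENTS):
        print(f"{label}: {pct}%")
```

The labels account for exactly 100% of responses, so no slice appears to be missing or misread.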

### Detailed Analysis

The data presents a clear hierarchy of responses regarding the perceived accuracy of a description of GPT-4:

* A clear majority of respondents, **66.4%**, selected the highest accuracy category: **"Correct and accurate."** This is the dominant segment, occupying just under two-thirds of the chart.

* The next two categories are similar in size and are both colored orange. **"Partially correct"** accounts for **16.8%**, while **"Correct but inaccurate"** accounts for **15.2%**. Together, these "middle-ground" responses constitute 32.0% of the total.

* The smallest segment by a significant margin is the **"Poor"** category, represented by a thin red slice, comprising only **1.6%** of responses.

### Key Observations

1. **Strong Positive Skew:** The distribution is heavily skewed towards positive accuracy assessments. About 82% of respondents (66.4% + 15.2% = 81.6%) chose a category labeled "correct" (either "Correct and accurate" or "Correct but inaccurate"), and most of those found the description fully accurate.

2. **Minimal Negative Feedback:** The "Poor" rating is by far the smallest category, at 1.6% of responses.

3. **Ambiguity in Middle Categories:** The two orange categories, "Partially correct" and "Correct but inaccurate," are semantically similar but distinct. Their near-equal split suggests respondents differentiated between a description that was incomplete versus one that was factually right but poorly executed or lacking precision.
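The grouped shares discussed in these observations follow directly from the four segment values. The sketch below recomputes them. The grouping of "Correct and accurate" with "Correct but inaccurate" as "at least correct" is this analysis's reading of the labels, not something the chart states, and the names `PCT` and `combined` are illustrative.

```python
from decimal import Decimal

# Percentages as read from the chart labels.
PCT = {
    "Correct and accurate": Decimal("66.4"),
    "Partially correct": Decimal("16.8"),
    "Correct but inaccurate": Decimal("15.2"),
    "Poor": Decimal("1.6"),
}


def combined(labels):
    """Exact combined share (in percent) of the given response categories."""
    return sum((PCT[label] for label in labels), Decimal("0"))


# Assumption: "at least correct" means the two labels that begin with "Correct".
at_least_correct = combined(["Correct and accurate", "Correct but inaccurate"])

# The two middle categories taken together.
middle = combined(["Partially correct", "Correct but inaccurate"])

# How far apart the two middle categories are, in percentage points.
middle_gap = PCT["Partially correct"] - PCT["Correct but inaccurate"]

if __name__ == "__main__":
    print("at least correct:", at_least_correct)  # 81.6
    print("middle categories:", middle)           # 32.0
    print("gap between middle two:", middle_gap)  # 1.6
```

Under this grouping, the "at least correct" share is 81.6%, the middle categories total 32.0%, and the two middle categories differ by 1.6 percentage points.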

### Interpretation

This chart suggests that, among the subset of survey participants who answered "4" to Q1 (the nature of which is unknown), most rated the provided description of GPT-4 as accurate. The data indicates the description was largely successful, with very few respondents (1.6%) finding it fundamentally flawed.

The conditional nature of the question ("answer only if your answer to Q1 is 4") is critical. It implies this result is not from all respondents, but from a specific subgroup defined by their response to a prior question. Without knowing what Q1 was, we cannot generalize these findings to a broader population. The results are strong within this subgroup, but the subgroup itself may be biased (e.g., perhaps Q1 filtered for users with a certain level of familiarity or a specific prior opinion).

The near-even split in the middle categories is noteworthy. It shows that for about a third of this group (32.0%), the description had notable shortcomings, either in completeness ("Partially correct") or in the quality or precision of otherwise correct information ("Correct but inaccurate"). This provides actionable feedback for refinement, even amidst the overall positive reception.