## Horizontal Bar Chart: AI Model Accuracy Comparison (Syntax vs. NLU)

### Overview

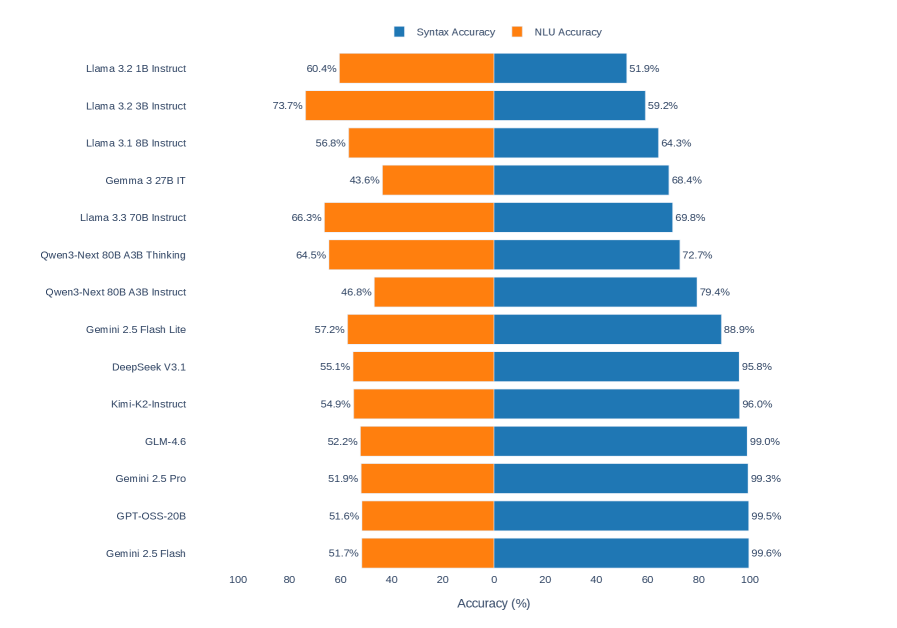

The image displays a horizontal bar chart comparing the performance of 14 different large language models (LLMs) on two distinct accuracy metrics: **Syntax Accuracy** and **NLU (Natural Language Understanding) Accuracy**. The chart uses a diverging bar format, with NLU Accuracy plotted to the left (orange) and Syntax Accuracy plotted to the right (blue) from a central zero axis. The models are listed vertically on the left side.

### Components/Axes

* **Chart Type:** Horizontal diverging bar chart.

* **Y-Axis (Vertical):** Lists the names of 14 AI models. From top to bottom:

    1. Llama 3.2 1B Instruct
    2. Llama 3.2 3B Instruct
    3. Llama 3.1 8B Instruct
    4. Gemma 3 27B IT
    5. Llama 3.3 70B Instruct
    6. Qwen3-Next 80B A3B Thinking
    7. Qwen3-Next 80B A3B Instruct
    8. Gemini 2.5 Flash Lite
    9. DeepSeek V3.1
    10. Kimi-K2-Instruct
    11. GLM-4.6
    12. Gemini 2.5 Pro
    13. GPT-OSS-20B
    14. Gemini 2.5 Flash

* **X-Axis (Horizontal):** Labeled "Accuracy (%)". The scale runs from 0 at the center to 100 on both the left (for NLU) and right (for Syntax) sides. Major tick marks are at 0, 20, 40, 60, 80, and 100.

* **Legend:** Positioned at the top center of the chart.

    * A blue square is labeled "Syntax Accuracy".
    * An orange square is labeled "NLU Accuracy".

* **Data Series:** Two series are plotted for each model.

    * **NLU Accuracy (Orange Bars):** Extend leftward from the central axis. The numerical percentage value is printed to the left of each orange bar.
    * **Syntax Accuracy (Blue Bars):** Extend rightward from the central axis. The numerical percentage value is printed to the right of each blue bar.
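The diverging layout described above can be sketched in a few lines of Python. This is a minimal, text-only illustration and not the code that produced the chart: the function name `diverging_row`, the bar characters, and the widths are all hypothetical choices. The three sample rows use values printed on the bars.

```python
def diverging_row(name, nlu, syntax, half_width=20, label_width=28):
    """Render one model as a text row around a central '|' axis.

    The NLU bar ('#') is right-aligned so it grows leftward from the axis;
    the Syntax bar ('=') is left-aligned so it grows rightward.
    """
    nlu_len = round(nlu / 100 * half_width)        # scale 0-100% to bar cells
    syntax_len = round(syntax / 100 * half_width)
    left = ("#" * nlu_len).rjust(half_width)
    right = ("=" * syntax_len).ljust(half_width)
    return f"{name:<{label_width}}{nlu:5.1f} {left}|{right} {syntax:5.1f}"


# Sample rows: (model, NLU %, Syntax %), values as printed on the bars.
sample = [
    ("Llama 3.2 1B Instruct", 60.4, 51.9),
    ("DeepSeek V3.1", 55.1, 95.8),
    ("Gemini 2.5 Flash", 51.7, 99.6),
]

rows = [diverging_row(*entry) for entry in sample]
for row in rows:
    print(row)
```

Both bars share the same 0 to 100 scale, so their lengths are directly comparable even though they point in opposite directions.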

### Detailed Analysis

The following table reconstructs the data presented in the chart. Values are transcribed directly from the labels on the bars.

| Model Name | NLU Accuracy (Orange, Left) | Syntax Accuracy (Blue, Right) |
| :--- | :--- | :--- |
| Llama 3.2 1B Instruct | 60.4% | 51.9% |
| Llama 3.2 3B Instruct | 73.7% | 59.2% |
| Llama 3.1 8B Instruct | 56.8% | 64.3% |
| Gemma 3 27B IT | 43.6% | 68.4% |
| Llama 3.3 70B Instruct | 66.3% | 69.8% |
| Qwen3-Next 80B A3B Thinking | 64.5% | 72.7% |
| Qwen3-Next 80B A3B Instruct | 46.8% | 79.4% |
| Gemini 2.5 Flash Lite | 57.2% | 88.9% |
| DeepSeek V3.1 | 55.1% | 95.8% |
| Kimi-K2-Instruct | 54.9% | 96.0% |
| GLM-4.6 | 52.2% | 99.0% |
| Gemini 2.5 Pro | 51.9% | 99.3% |
| GPT-OSS-20B | 51.6% | 99.5% |
| Gemini 2.5 Flash | 51.7% | 99.6% |

**Trend Verification:**

* **NLU Accuracy (Orange, Leftward Trend):** The orange bars show no single monotonic trend. The highest NLU score is for **Llama 3.2 3B Instruct (73.7%)**, located near the top, and the lowest is for **Gemma 3 27B IT (43.6%)**. The scores fluctuate between the low 40s and low 70s, with the bottom seven models (from Gemini 2.5 Flash Lite downwards) clustering in a narrow band between 51.6% and 57.2%.

* **Syntax Accuracy (Blue, Rightward Trend):** The blue bars increase strictly from top to bottom, so the models appear to be ordered by Syntax score. The lowest Syntax score is for **Llama 3.2 1B Instruct (51.9%)** at the top. The bottom four models achieve near-perfect scores of 99.0% or higher.
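The trend statements above can be checked mechanically against the transcribed table. The snippet below is a small sketch of such a check; the variable names are illustrative, and the values are those read from the bar labels.

```python
# (model, NLU %, Syntax %) in chart order, top to bottom.
DATA = [
    ("Llama 3.2 1B Instruct", 60.4, 51.9),
    ("Llama 3.2 3B Instruct", 73.7, 59.2),
    ("Llama 3.1 8B Instruct", 56.8, 64.3),
    ("Gemma 3 27B IT", 43.6, 68.4),
    ("Llama 3.3 70B Instruct", 66.3, 69.8),
    ("Qwen3-Next 80B A3B Thinking", 64.5, 72.7),
    ("Qwen3-Next 80B A3B Instruct", 46.8, 79.4),
    ("Gemini 2.5 Flash Lite", 57.2, 88.9),
    ("DeepSeek V3.1", 55.1, 95.8),
    ("Kimi-K2-Instruct", 54.9, 96.0),
    ("GLM-4.6", 52.2, 99.0),
    ("Gemini 2.5 Pro", 51.9, 99.3),
    ("GPT-OSS-20B", 51.6, 99.5),
    ("Gemini 2.5 Flash", 51.7, 99.6),
]

nlu = [n for _, n, _ in DATA]
syntax = [s for _, _, s in DATA]

# Syntax should rise from each row to the next; NLU should not be monotonic.
syntax_strictly_increasing = all(a < b for a, b in zip(syntax, syntax[1:]))
nlu_monotonic = (
    all(a <= b for a, b in zip(nlu, nlu[1:]))
    or all(a >= b for a, b in zip(nlu, nlu[1:]))
)

top_nlu_model = max(DATA, key=lambda row: row[1])[0]
nlu_band = (min(nlu[-7:]), max(nlu[-7:]))           # bottom seven models
near_ceiling = [name for name, _, s in DATA if s >= 99.0]

print(syntax_strictly_increasing, nlu_monotonic)
print(top_nlu_model, nlu_band, near_ceiling)
```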

### Key Observations

1. **Inverse Performance Relationship:** There is a loose inverse relationship between the two metrics across the model list. The two Llama 3.2 models at the top pair the lowest Syntax scores (51.9% and 59.2%) with comparatively high NLU scores (60.4% and 73.7%), while the models at the bottom (e.g., Gemini 2.5 Flash, GPT-OSS-20B) have exceptionally high Syntax scores but lower, clustered NLU scores. The pattern is not uniform: Gemma 3 27B IT, fourth from the top, has the lowest NLU score of all (43.6%).

2. **Syntax Accuracy Ceiling:** The bottom four models (GLM-4.6, Gemini 2.5 Pro, GPT-OSS-20B, Gemini 2.5 Flash) have effectively reached a performance ceiling on the Syntax Accuracy metric, all scoring between 99.0% and 99.6%.

3. **NLU Performance Cluster:** The bottom half of the models (from Gemini 2.5 Flash Lite to Gemini 2.5 Flash) show similar NLU Accuracy, all falling within a 5.6 percentage point range (51.6% to 57.2%), while their Syntax scores span a wider range of 10.7 points (88.9% to 99.6%).

4. **Model Variant Comparison:** The "Qwen3-Next 80B A3B" model is listed in two variants: "Thinking" and "Instruct". Compared with the "Thinking" variant, the "Instruct" variant scores 6.7 points higher on Syntax Accuracy (79.4% vs. 72.7%) but 17.7 points lower on NLU Accuracy (46.8% vs. 64.5%).
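To put a number on the relationship between the two metrics, one option is a Pearson correlation over the 14 transcribed pairs. The sketch below computes it by hand with the standard library; the choice of Pearson's r is an assumption made here for illustration and is not something the chart itself reports. It also recomputes the Qwen3-Next variant differences.

```python
import math

# Values in chart order, top to bottom, as transcribed from the bar labels.
NLU = [60.4, 73.7, 56.8, 43.6, 66.3, 64.5, 46.8,
       57.2, 55.1, 54.9, 52.2, 51.9, 51.6, 51.7]
SYNTAX = [51.9, 59.2, 64.3, 68.4, 69.8, 72.7, 79.4,
          88.9, 95.8, 96.0, 99.0, 99.3, 99.5, 99.6]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


r = pearson(NLU, SYNTAX)
print(f"Pearson r (NLU vs. Syntax): {r:.2f}")

# Qwen3-Next 80B A3B: Instruct minus Thinking, in percentage points.
qwen_syntax_delta = round(79.4 - 72.7, 1)
qwen_nlu_delta = round(46.8 - 64.5, 1)
print(qwen_syntax_delta, qwen_nlu_delta)
```

On these values the coefficient is moderately negative (about -0.5), which is consistent with an inverse tendency that has clear exceptions.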

### Interpretation

This chart visualizes a potential trade-off or specialization in LLM capabilities. The data suggests that the evaluated models can be broadly categorized into two groups based on this benchmark:

* **NLU-Focused/Generalist Models (Top of Chart):** The Llama 3.2 models achieve comparatively high NLU scores (60.4% and 73.7%) alongside the lowest Syntax scores on the chart. Their profile may reflect a design or training emphasis on semantic comprehension over rigid structural correctness. The grouping is loose, however: Gemma 3 27B IT and Llama 3.1 8B Instruct also sit near the top of the chart yet score only 43.6% and 56.8% on NLU.

* **Syntax-Focused/Formalist Models (Bottom of Chart):** Models like Gemini 2.5 Flash and GPT-OSS-20B demonstrate near-flawless syntactic performance. Their tightly clustered, moderate NLU scores indicate that while they are exceptionally good at generating grammatically correct and structurally sound text, their grasp of deeper semantic meaning or nuanced understanding, as measured by this NLU metric, is consistent but not leading. This could reflect a training objective that heavily weights formal correctness.

The divergence implies that "accuracy" is not a monolithic concept for LLMs: a model's strength in one linguistic dimension (syntax) does not reliably predict its strength in another (semantics/NLU). A notable case is **Qwen3-Next 80B A3B Instruct**, which has the second-lowest NLU score (46.8%) alongside a mid-range Syntax score (79.4%). Its 32.6-point gap favors syntax, but it is smaller than the gaps of roughly 47 to 48 points for the four models at the bottom of the chart, so it is not the most extreme syntax-over-NLU profile. The chart is useful for selecting a model based on the requirements of a task, whether it demands strict syntactic correctness or a deeper understanding of context and meaning.
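One way to compare syntax-over-NLU profiles across models is to rank them by the difference between the two scores. The following sketch does that with the transcribed values; the gap metric (Syntax minus NLU, in percentage points) is an illustrative choice and not part of the chart.

```python
# model -> (NLU %, Syntax %), as transcribed from the bar labels.
SCORES = {
    "Llama 3.2 1B Instruct": (60.4, 51.9),
    "Llama 3.2 3B Instruct": (73.7, 59.2),
    "Llama 3.1 8B Instruct": (56.8, 64.3),
    "Gemma 3 27B IT": (43.6, 68.4),
    "Llama 3.3 70B Instruct": (66.3, 69.8),
    "Qwen3-Next 80B A3B Thinking": (64.5, 72.7),
    "Qwen3-Next 80B A3B Instruct": (46.8, 79.4),
    "Gemini 2.5 Flash Lite": (57.2, 88.9),
    "DeepSeek V3.1": (55.1, 95.8),
    "Kimi-K2-Instruct": (54.9, 96.0),
    "GLM-4.6": (52.2, 99.0),
    "Gemini 2.5 Pro": (51.9, 99.3),
    "GPT-OSS-20B": (51.6, 99.5),
    "Gemini 2.5 Flash": (51.7, 99.6),
}

# Positive gap: Syntax exceeds NLU. Rounded to one decimal to match the labels.
gaps = {name: round(syntax - nlu, 1) for name, (nlu, syntax) in SCORES.items()}
ranked = sorted(gaps, key=gaps.get, reverse=True)
nlu_leads = [name for name, gap in gaps.items() if gap < 0]

for name in ranked:
    print(f"{name:<28} {gaps[name]:+6.1f}")
```

On these values, Gemini 2.5 Flash and GPT-OSS-20B show the largest gaps, Qwen3-Next 80B A3B Instruct ranks seventh, and only the two Llama 3.2 models score higher on NLU than on Syntax.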