## Screenshot: GPT-3 Text Reasonableness Evaluation

### Overview

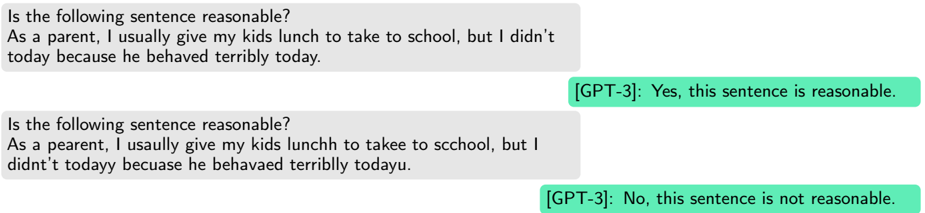

The image is a screenshot of two conversational exchanges. In each, a user asks whether a sentence is "reasonable," and a response labeled "[GPT-3]" follows. The first example uses a correctly spelled sentence, which GPT-3 judges reasonable. The second repeats the same sentence with 11 of its 22 words misspelled, and GPT-3 judges it not reasonable. The interface uses gray bubbles for user input and green bubbles for GPT-3 responses.

### Components/Axes

* **Layout:** Vertical stack of two distinct Q&A pairs.

* **Visual Elements:**

  * **User Input Bubbles:** Light gray, left-aligned, containing the question and the sentence to be evaluated.

  * **Response Bubbles:** Light green, right-aligned, containing the judgment from "[GPT-3]".

  * **Text Labels:** The label `[GPT-3]:` precedes each response.

### Content Details

**Example 1 (Top):**

* **User Input (Gray Bubble):**

> Is the following sentence reasonable?

> As a parent, I usually give my kids lunch to take to school, but I didn't today because he behaved terribly today.

* **GPT-3 Response (Green Bubble):**

> [GPT-3]: Yes, this sentence is reasonable.

**Example 2 (Bottom):**

* **User Input (Gray Bubble):**

> Is the following sentence reasonable?

> As a pearent, I usally give my kids lunchh to takee to scchool, but I didnt't todayy becuase he behavaed terriblly todayu.

* **GPT-3 Response (Green Bubble):**

> [GPT-3]: No, this sentence is not reasonable.
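Because the two inputs keep the same word order, a position-by-position comparison is enough to list exactly what changed between them. The following Python sketch does that; the function names `words` and `changed_words` are illustrative and not part of the source.

```python
# Both sentences as transcribed from the screenshot.
CLEAN = ("As a parent, I usually give my kids lunch to take to school, "
         "but I didn't today because he behaved terribly today.")
NOISY = ("As a pearent, I usally give my kids lunchh to takee to scchool, "
         "but I didnt't todayy becuase he behavaed terriblly todayu.")


def words(sentence: str) -> list[str]:
    """Split on whitespace and strip commas/periods at the word edges."""
    return [w.strip(",.") for w in sentence.split()]


def changed_words(clean: str, noisy: str) -> list[tuple[str, str]]:
    """Align two sentences position by position; return (intended, typed) pairs that differ."""
    a, b = words(clean), words(noisy)
    if len(a) != len(b):
        raise ValueError("positional alignment needs equal word counts")
    return [(x, y) for x, y in zip(a, b) if x != y]


pairs = changed_words(CLEAN, NOISY)
for intended, typed in pairs:
    print(f"{typed!r:>12} -> {intended!r}")
print(f"{len(pairs)} of {len(words(CLEAN))} words altered")  # last line: 11 of 22 words altered
```

This alignment only works because no words were added or removed; sentences with insertions or deletions would need an edit-distance alignment instead.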

### Key Observations

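1. **Identical Core Meaning:** The semantic content of the sentence is identical in both examples: a parent explains that they withheld a child's school lunch as a consequence of the child's bad behavior. Both versions also share the same mismatch between the plural "my kids" and the singular "he," so that quirk cannot explain the different verdicts.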

2. **Divergent Judgments:** GPT-3's evaluation flips from "reasonable" to "not reasonable" based solely on surface-level textual errors.

3. **Error Catalog in Example 2:** The second sentence misspells 11 of its 22 words:

   * Spelling: "pearent", "usally", "lunchh", "takee", "scchool", "todayy", "becuase", "behavaed", "terriblly", "todayu".

   * Contraction: "didnt't" has an extra "t" before the apostrophe (intended: "didn't"). Apart from these misspellings, the word order and grammar match the first sentence.

4. **Consistency in Logic:** GPT-3's two responses are consistent with a strict reading of "reasonableness" that weights standard spelling heavily, potentially above semantic coherence.
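Several errors in Example 2 are mechanical: a doubled letter ("scchool"), two adjacent letters swapped ("becuase"), or a dropped letter ("usally"). To produce comparable noisy variants of other sentences, a seeded generator along the following lines could be used. This is an assumed approach for illustration, not how the screenshot's sentence was made; `add_typos` and its `rate`/`seed` parameters are hypothetical names.

```python
import random


def add_typos(sentence: str, rate: float, seed: int = 0) -> str:
    """Give each eligible word one typo with probability `rate`.

    Eligible words are purely alphabetic and at least three letters long, so
    contractions like "didn't" and short words like "a" are left alone. The typo
    kinds loosely mirror Example 2: a doubled letter, two adjacent letters
    swapped, or a dropped letter. Swapping two identical letters leaves the
    word unchanged.
    """
    rng = random.Random(seed)  # private, seeded generator: same inputs -> same output
    out = []
    for word in sentence.split(" "):
        core = word.rstrip(",.?!")  # alphabetic part
        tail = word[len(core):]     # trailing punctuation, kept as-is
        if len(core) >= 3 and core.isalpha() and rng.random() < rate:
            i = rng.randrange(len(core) - 1)  # a position with a right-hand neighbor
            kind = rng.choice(("double", "swap", "drop"))
            if kind == "double":    # e.g. "school" -> "scchool"
                core = core[: i + 1] + core[i] + core[i + 1 :]
            elif kind == "swap":    # e.g. "because" -> "becuase"
                core = core[:i] + core[i + 1] + core[i] + core[i + 2 :]
            else:                   # e.g. "usually" -> "usally"
                core = core[:i] + core[i + 1 :]
        out.append(core + tail)
    return " ".join(out)


clean = ("As a parent, I usually give my kids lunch to take to school, "
         "but I didn't today because he behaved terribly today.")
print(add_typos(clean, rate=0.5, seed=42))
```

Seeding a private `random.Random` instance keeps each noisy variant reproducible without touching the global random state.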

### Interpretation

This screenshot illustrates a limitation of GPT-3 in this evaluation context: the model appears to conflate the **linguistic form** of a statement (standard spelling, well-formedness) with the **conceptual reasonableness** of its content.

* **What it Suggests:** The model may have learned a strong association between well-formed text and reasonable content. In this example, it does not separate the evaluation of the idea's logic from the evaluation of its presentation.

* **How Elements Relate:** The two examples form a minimal pair. Because the meaning is held constant and only the spelling varies, the comparison points to spelling as the decisive factor in GPT-3's judgment here, although a single pair cannot show how reliably the effect recurs.

* **Notable Anomaly:** The most significant finding is that the model does not recognize that the underlying proposition, withholding lunch as a disciplinary measure, is equally coherent (and, by common-sense standards, equally reasonable) however poorly it is spelled. This points to a potential gap between robust, meaning-focused reasoning and pattern-matching on textual features.

* **Broader Implication:** For technical document analysis, or any task that requires understanding the intent behind noisy text, this behavior suggests that GPT-3 may produce unreliable or misleading evaluations when the input contains errors, even if the underlying information is clear to a human reader.
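One way to check whether the flip generalizes beyond a single screenshot is to ask the same question about a clean and a corrupted sentence and compare the verdicts. The sketch below assumes a hypothetical `judge` callable that would wrap whatever model is under test; no real API is called. `verdict`, `minimal_pair`, and `surface_form_judge` are illustrative names, and `surface_form_judge` is a toy stand-in, not GPT-3: it rejects any sentence containing an out-of-vocabulary word, which reproduces the behavior seen here.

```python
import re
from typing import Callable

# Hypothetical interface: sends a prompt to a model and returns its text reply.
Judge = Callable[[str], str]

PROMPT = "Is the following sentence reasonable?\n{sentence}"


def verdict(reply: str) -> str:
    """Reduce a free-text reply to 'yes', 'no', or 'unclear' from its first word."""
    text = re.sub(r"^\[[^\]]*\]:?\s*", "", reply.strip().lower())  # drop a "[GPT-3]:" label
    match = re.match(r"[a-z]+", text)
    first = match.group(0) if match else ""
    return first if first in ("yes", "no") else "unclear"


def minimal_pair(judge: Judge, clean: str, noisy: str) -> dict:
    """Ask the same question about both versions and report whether the verdict flips."""
    v_clean = verdict(judge(PROMPT.format(sentence=clean)))
    v_noisy = verdict(judge(PROMPT.format(sentence=noisy)))
    return {"clean": v_clean, "noisy": v_noisy, "flipped": v_clean != v_noisy}


CLEAN = ("As a parent, I usually give my kids lunch to take to school, "
         "but I didn't today because he behaved terribly today.")
NOISY = ("As a pearent, I usally give my kids lunchh to takee to scchool, "
         "but I didnt't todayy becuase he behavaed terriblly todayu.")
VOCAB = set(re.findall(r"[a-z']+", CLEAN.lower()))


def surface_form_judge(prompt: str) -> str:
    """Toy stand-in (NOT GPT-3): judges surface form only, via a fixed vocabulary."""
    sentence = prompt.split("\n", 1)[1]
    tokens = re.findall(r"[a-z']+", sentence.lower())
    if all(t in VOCAB for t in tokens):
        return "Yes, this sentence is reasonable."
    return "No, this sentence is not reasonable."


print(minimal_pair(surface_form_judge, CLEAN, NOISY))
# {'clean': 'yes', 'noisy': 'no', 'flipped': True}
```

Because a language model's replies can vary between calls, one flipped pair is weak evidence on its own; running many sentence pairs, with several samples per pair, gives a steadier estimate of how often spelling alone changes the verdict.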