## Screenshot: Chat Interaction Analysis

### Overview

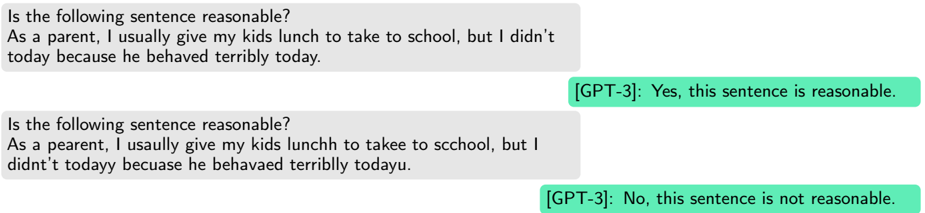

The image depicts a chat interface where a user queries GPT-3 about the reasonableness of two sentences. The first sentence is grammatically correct, while the second contains intentional typos. GPT-3 responds affirmatively to the first and negatively to the second.

### Components/Axes

- **User Messages**:

1. Correct sentence:

*"As a parent, I usually give my kids lunch to take to school, but I didn't today because he behaved terribly today."*

2. Typosentence:

*"As a pearent, I usaully give my kids lunchh to takeee to scchool, but I didn't todayy becausae he belhavaed terrribly todayu."*

- **GPT-3 Responses**:

1. For the correct sentence: *"Yes, this sentence is reasonable."*

2. For the typosentence: *"No, this sentence is not reasonable."*

### Detailed Analysis

- **Textual Content**:

- The first user message is free of grammatical errors and conveys a clear cause-effect relationship (behavior → action).

- The second user message contains multiple typos (e.g., "pearent" instead of "parent," "lunchh" instead of "lunch," "scchool" instead of "school"). These errors alter word forms and spacing but retain the original intent.

- **GPT-3 Responses**:

- The model explicitly ties its judgment to the presence of typos, suggesting that surface-level errors influence its assessment of "reasonableness."

### Key Observations

1. **Typos Impact Perception**: GPT-3 rejects the typosentence despite its semantic equivalence to the correct version, indicating sensitivity to orthographic errors.

2. **Consistency in Logic**: Both sentences share identical meaning and structure, yet the model’s response diverges based on textual fidelity.

3. **Ambiguity in "Reasonableness"**: The model’s criteria for "reasonableness" appear to include grammatical correctness, not just logical coherence.

### Interpretation

The interaction highlights how language models may conflate syntactic accuracy with semantic validity. While the typosentence’s meaning is preserved, GPT-3’s rejection implies that surface errors can override contextual understanding. This raises questions about the model’s ability to disentangle form from content in natural language processing tasks. The responses suggest that "reasonableness" in this context is evaluated through a lens of textual perfection, potentially disadvantaging inputs with minor errors despite their functional equivalence.