
## Box Plot Chart: Depthwise Average MIN-K% Across Three Language Models

### Overview

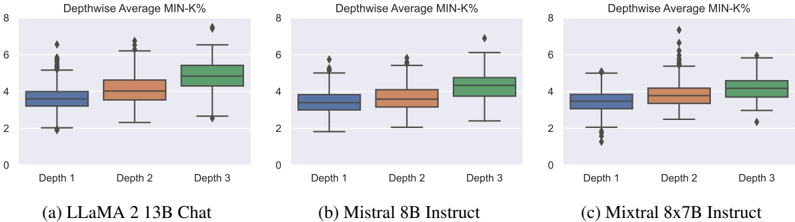

The image displays three horizontally arranged subplots, one per large language model. Each subplot contains three box plots showing the distribution of a metric called "Depthwise Average MIN-K%" at three depths (Depth 1, Depth 2, Depth 3). Every subplot carries the title "Depthwise Average MIN-K%", and the models are identified by captions below each plot.

### Components/Axes

* **Chart Type:** Box-and-whisker plots (box plots).

* **Y-Axis:** Common to all three plots. Label: "Depthwise Average MIN-K%". Scale: Linear, ranging from 0 to 8, with major tick marks at 0, 2, 4, 6, and 8.

* **X-Axis:** For each subplot, categorical axis with three labels: "Depth 1", "Depth 2", "Depth 3".

* **Legend/Color Coding:** Consistent across all plots.

    * Blue box: Depth 1

    * Orange box: Depth 2

    * Green box: Depth 3

* **Subplot Captions (Bottom):**

    * (a) LLaMA 2 13B Chat

    * (b) Mistral 8B Instruct

    * (c) Mixtral 8x7B Instruct

### Detailed Analysis

**Plot (a) LLaMA 2 13B Chat:**

* **Depth 1 (Blue):** Median ≈ 3.5. Interquartile Range (IQR) ≈ 3.0 to 4.0. Whiskers extend from ≈ 2.0 to ≈ 5.0. One outlier diamond at ≈ 6.5.

* **Depth 2 (Orange):** Median ≈ 4.0. IQR ≈ 3.5 to 4.5. Whiskers extend from ≈ 2.5 to ≈ 6.0. One outlier diamond at ≈ 6.5.

* **Depth 3 (Green):** Median ≈ 5.0. IQR ≈ 4.5 to 5.5. Whiskers extend from ≈ 3.0 to ≈ 6.5. One outlier diamond at ≈ 7.5.

* **Trend:** The median MIN-K% value increases progressively from Depth 1 to Depth 3. The IQR stays at ≈ 1.0 at every depth, while the whisker range widens slightly (≈ 3.0 at Depth 1, ≈ 3.5 at Depths 2 and 3).

**Plot (b) Mistral 8B Instruct:**

* **Depth 1 (Blue):** Median ≈ 3.5. IQR ≈ 3.0 to 4.0. Whiskers extend from ≈ 2.0 to ≈ 5.0. One outlier diamond at ≈ 5.5.

* **Depth 2 (Orange):** Median ≈ 3.8. IQR ≈ 3.2 to 4.2. Whiskers extend from ≈ 2.2 to ≈ 5.2. One outlier diamond at ≈ 5.8.

* **Depth 3 (Green):** Median ≈ 4.5. IQR ≈ 4.0 to 5.0. Whiskers extend from ≈ 2.5 to ≈ 6.0. One outlier diamond at ≈ 6.5.

* **Trend:** Similar increasing trend in median from Depth 1 to Depth 3, though the increase between Depth 1 and Depth 2 (≈ 0.3) is less pronounced than in plot (a) (≈ 0.5). The Depth 1 median matches LLaMA 2; the medians at Depths 2 and 3 are slightly lower.

**Plot (c) Mixtral 8x7B Instruct:**

* **Depth 1 (Blue):** Median ≈ 3.5. IQR ≈ 3.0 to 4.0. Whiskers extend from ≈ 1.5 to ≈ 5.0. Multiple outlier diamonds below the lower whisker, clustered between ≈ 1.0 and ≈ 2.0.

* **Depth 2 (Orange):** Median ≈ 4.0. IQR ≈ 3.5 to 4.5. Whiskers extend from ≈ 2.5 to ≈ 5.5. A significant cluster of outlier diamonds above the upper whisker, ranging from ≈ 6.0 to ≈ 7.5.

* **Depth 3 (Green):** Median ≈ 4.5. IQR ≈ 4.0 to 5.0. Whiskers extend from ≈ 3.0 to ≈ 6.0. One outlier diamond at ≈ 2.5 (below) and one at ≈ 6.0 (above).

* **Trend:** Median increases with depth. The boxes are no wider than in plots (a) and (b) (IQR ≈ 1.0), but this model has by far the most outliers: a large cluster of high values at Depth 2 and notable low values at Depth 1.
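The medians, IQRs, whiskers, and outlier diamonds read off above are the standard quantities a box plot draws. As a rough sketch of how they relate, the function below computes them for one group of values. It assumes the common Tukey convention (whiskers reach the most extreme points within 1.5 × IQR of the box, and anything beyond is drawn as an outlier); the figure does not state which convention it uses, and `box_stats` and the sample values are purely illustrative, not data from the figure.

```python
import statistics


def box_stats(values, whisker_factor=1.5):
    """Return the quantities a box plot draws for one group of values.

    Assumption: Tukey-style whiskers (1.5 x IQR by default). The source
    figure does not say which whisker rule it uses.
    """
    data = sorted(values)
    # Quartiles with linear interpolation over the observed sample.
    q1, median, q3 = statistics.quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence = q1 - whisker_factor * iqr
    high_fence = q3 + whisker_factor * iqr
    # Whiskers end at the most extreme points that are still inside the fences.
    inside = [v for v in data if low_fence <= v <= high_fence]
    outliers = [v for v in data if v < low_fence or v > high_fence]
    return {
        "median": median,
        "q1": q1,
        "q3": q3,
        "whisker_low": inside[0],
        "whisker_high": inside[-1],
        "outliers": outliers,
    }


# Made-up sample, shaped loosely like a single box with one high outlier.
sample = [2.0, 2.8, 3.0, 3.2, 3.4, 3.5, 3.6, 3.8, 4.0, 4.2, 5.0, 6.5]
stats = box_stats(sample)
print(stats["whisker_low"], stats["whisker_high"], stats["outliers"])
```

Under this rule a point is only drawn as a diamond if it lies beyond a fence, so a tight box (small IQR) with a long-tailed distribution produces many outliers even when the box itself looks unremarkable.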

### Key Observations

1. **Consistent Depth Trend:** All three models exhibit a clear trend where the median "Depthwise Average MIN-K%" increases from Depth 1 to Depth 3.

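2. **Model Comparison:** At Depth 1 all three models share a median of ≈ 3.5. LLaMA 2 13B Chat (a) has the highest median at Depth 3 (≈ 5.0 versus ≈ 4.5 for the other two) and ties with Mixtral 8x7B Instruct (c) at Depth 2 (≈ 4.0), where Mistral 8B Instruct (b) is slightly lower (≈ 3.8).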

3. **Variance and Outliers:** Mixtral 8x7B Instruct (c) displays the most pronounced outlier behavior, especially the cluster of high outliers at Depth 2 and the low outliers at Depth 1. LLaMA 2 and Mistral each show a single, isolated high outlier per depth.

4. **Spread:** The interquartile range (box height) is ≈ 1.0 at every depth in every model, suggesting the central 50% of the data has a stable spread, even as the median shifts.
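The observations above can be checked against the approximate readings listed in the Detailed Analysis. The sketch below transcribes those readings (median, lower quartile, upper quartile per depth) into a small table and tests the depth trend, the box heights, and which model leads at each depth. The values are visual estimates from the plots, not the underlying data, and the helper names are illustrative.

```python
# Approximate (median, q1, q3) per depth, transcribed from the readings above.
READINGS = {
    "LLaMA 2 13B Chat": [(3.5, 3.0, 4.0), (4.0, 3.5, 4.5), (5.0, 4.5, 5.5)],
    "Mistral 8B Instruct": [(3.5, 3.0, 4.0), (3.8, 3.2, 4.2), (4.5, 4.0, 5.0)],
    "Mixtral 8x7B Instruct": [(3.5, 3.0, 4.0), (4.0, 3.5, 4.5), (4.5, 4.0, 5.0)],
}


def medians(model):
    """Medians at Depth 1, 2, 3 for one model."""
    return [median for median, _, _ in READINGS[model]]


def iqr_widths(model):
    """Box heights (q3 - q1) at each depth, rounded to hide float noise."""
    return [round(q3 - q1, 2) for _, q1, q3 in READINGS[model]]


def median_rises_with_depth(model):
    """True if the median strictly increases from Depth 1 to Depth 3."""
    ms = medians(model)
    return all(a < b for a, b in zip(ms, ms[1:]))


def top_models_at_depth(depth_index):
    """Models sharing the highest median at a depth (0-based index)."""
    best = max(medians(model)[depth_index] for model in READINGS)
    return sorted(m for m in READINGS if medians(m)[depth_index] == best)


for model in READINGS:
    print(model, medians(model), iqr_widths(model), median_rises_with_depth(model))
for depth_index in range(3):
    print(f"Depth {depth_index + 1} leaders:", top_models_at_depth(depth_index))
```

With these readings the medians tie at Depth 1, so any ranking between models rests on Depths 2 and 3, and the differences there (0.2 to 0.5) are close to the precision of reading values off the plot.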

### Interpretation

The figure does not define "Depthwise Average MIN-K%" or say what "depth" indexes, so this reading is tentative. Taking depth to mean network depth, the metric appears to measure some property of model activations or behavior that increases with depth. The consistent upward trend across all three models would then suggest a shared characteristic of how information is processed or refined in deeper layers of these transformer-based language models.

The differences between models are noteworthy. LLaMA 2's higher values at Depth 3 might indicate a different internal scaling or a stronger effect at greater depth. The many outliers in Mixtral, a Mixture-of-Experts model, could reflect the specialized routing of tokens to different expert sub-networks, leading to more diverse activation patterns at certain depths (like Depth 2), which manifests as more extreme values in the metric.

This analysis suggests that depth strongly influences the MIN-K% metric. The presence of outliers, particularly in the MoE model, indicates that while the general trend is upward, individual data points (likely corresponding to specific tokens or sequences) can behave very differently, highlighting the complexity and non-uniformity of internal model computations.
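Because the figure leaves the metric undefined, it may help to see one plausible computation behind a "MIN-K%"-style score. The sketch below is an assumption, not the method behind the figure: it follows the general Min-K% idea from the membership-inference literature, averaging the log-probabilities of the k% least likely tokens in a sequence, and negates the result so that scores are positive like the plotted axis. The function name, the default `k`, the sign convention, and the sample log-probabilities are all hypothetical, and nothing here models the "depthwise" averaging.

```python
import math


def min_k_percent_score(token_log_probs, k=20.0):
    """Mean negative log-probability of the k% least likely tokens.

    Assumption: a positive-valued variant of a Min-K%-style score; the
    source figure does not specify the actual definition or sign.
    """
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    if not 0 < k <= 100:
        raise ValueError("k must be in the interval (0, 100]")
    # Always keep at least one token, even for very short sequences.
    n_selected = max(1, math.ceil(len(token_log_probs) * k / 100))
    lowest = sorted(token_log_probs)[:n_selected]
    return -sum(lowest) / n_selected


# Hypothetical per-token log-probabilities for a 10-token sequence.
log_probs = [-0.1, -0.3, -4.0, -0.2, -6.0, -0.5, -0.05, -1.0, -0.4, -0.6]
score = min_k_percent_score(log_probs, k=20)
print(score)  # averages the two lowest values, -6.0 and -4.0
```

Under this assumed definition, a higher score means the model found its least expected tokens more surprising, which is a different reading from "a property that grows in deeper layers" and shows why the metric's definition matters for interpreting the plots.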