TECHNICAL ASSET FINGERPRINT

9e14397e1accf0f7137aea3d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

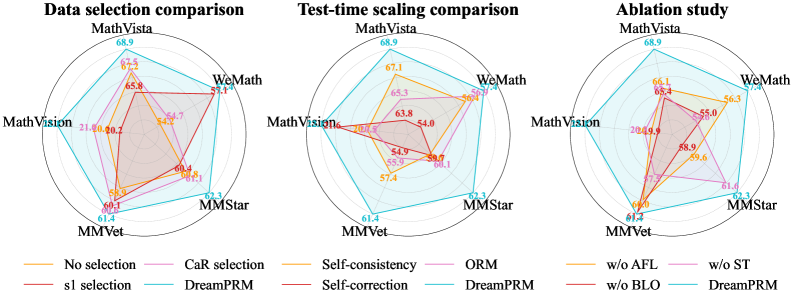

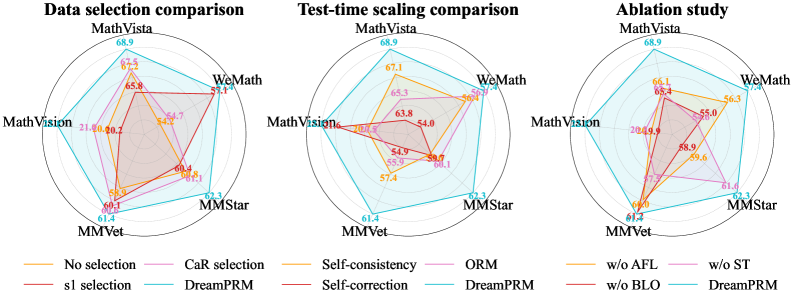

## Radar Charts: Data Selection, Test-Time Scaling, and Ablation Study

### Overview

The image presents three radar charts comparing different methods related to data selection, test-time scaling, and ablation studies. Each chart visualizes the performance across five categories: MathVista, WeMath, MMStar, MMVet, and MathVision. Different colored lines represent different selection methods or ablation conditions.

### Components/Axes

* **Chart Titles (Top):**

* Left: "Data selection comparison"

* Center: "Test-time scaling comparison"

* Right: "Ablation study"

* **Axes (Radial):** The radial axes represent performance metrics, presumably accuracy or a similar measure. The scale ranges approximately from 20 to 70.

* **Categories (Around the Circle):**

* MathVista (Top)

* WeMath (Top-Right)

* MMStar (Bottom-Right)

* MMVet (Bottom-Left)

* MathVision (Top-Left)

* **Axis Markers:** Concentric circles indicate approximate values. The outermost circle corresponds to a value near 70, and the innermost circle corresponds to a value near 20.

* **Legends (Bottom):**

* **Left Chart (Data selection comparison):**

* Orange: No selection

* Pink: CaR selection

* Red: s1 selection

* Cyan: DreamPRM

* **Center Chart (Test-time scaling comparison):**

* Orange: Self-consistency

* Pink: ORM

* Red: Self-correction

* Cyan: DreamPRM

* **Right Chart (Ablation study):**

* Orange: w/o AFL

* Pink: w/o ST

* Red: w/o BLO

* Cyan: DreamPRM
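The axis layout described above (five equally spaced axes, with MathVista at the top and the others following clockwise) can be sketched as a small geometry helper. This is a minimal illustration, not the code behind the figure: the function name `radar_vertices`, the choice to map a score of 20 to the center and 70 to the outer ring, and the example scores are all assumptions made for the sketch.

```python
import math

# Axis order as listed above: top, then clockwise.
AXES = ["MathVista", "WeMath", "MMStar", "MMVet", "MathVision"]


def radar_vertices(scores, r_min=20.0, r_max=70.0):
    """Map per-axis scores to (x, y) vertices of a radar polygon.

    Axis 0 points straight up and later axes follow clockwise at equal
    angles. Scores are rescaled linearly so that r_min sits at the center
    and r_max on the unit circle (an assumed scale, see the text above).
    """
    n = len(scores)
    vertices = []
    for i, score in enumerate(scores):
        radius = (score - r_min) / (r_max - r_min)
        angle = math.pi / 2 - 2 * math.pi * i / n  # start at top, go clockwise
        vertices.append((radius * math.cos(angle), radius * math.sin(angle)))
    return vertices


# Made-up scores, used only to show where each axis lands.
example_scores = [70.0, 45.0, 45.0, 45.0, 45.0]
points = radar_vertices(example_scores)
for name, (x, y) in zip(AXES, points):
    print(f"{name:>10}: x={x:+.3f}, y={y:+.3f}")
```

With this convention the second axis falls in the top-right quadrant and the fourth in the bottom-left, matching the positions listed for WeMath and MMVet.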

### Detailed Analysis

#### Data selection comparison (Left Chart)

* **No selection (Orange):** The "No selection" line forms a pentagon.

* MathVista: ~67.5

* WeMath: ~65.8

* MMStar: ~60.4

* MMVet: ~60.1

* MathVision: ~20.2

* **CaR selection (Pink):** The "CaR selection" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~57.1

* MMStar: ~62.3

* MMVet: ~61.4

* MathVision: ~54.7

* **s1 selection (Red):** The "s1 selection" line forms a pentagon.

* MathVista: ~21.0

* WeMath: ~54.2

* MMStar: ~61.1

* MMVet: ~58.9

* MathVision: ~20.7

* **DreamPRM (Cyan):** The "DreamPRM" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~57.1

* MMStar: ~62.3

* MMVet: ~61.4

* MathVision: ~21.0

#### Test-time scaling comparison (Center Chart)

* **Self-consistency (Orange):** The "Self-consistency" line forms a pentagon.

* MathVista: ~67.1

* WeMath: ~65.3

* MMStar: ~59.7

* MMVet: ~57.4

* MathVision: ~63.8

* **ORM (Pink):** The "ORM" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~56.9

* MMStar: ~62.3

* MMVet: ~61.4

* MathVision: ~20.5

* **Self-correction (Red):** The "Self-correction" line forms a pentagon.

* MathVista: ~21.5

* WeMath: ~54.0

* MMStar: ~60.1

* MMVet: ~55.9

* MathVision: ~54.9

* **DreamPRM (Cyan):** The "DreamPRM" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~57.1

* MMStar: ~62.3

* MMVet: ~61.4

* MathVision: ~20.5

#### Ablation study (Right Chart)

* **w/o AFL (Orange):** The "w/o AFL" line forms a pentagon.

* MathVista: ~66.1

* WeMath: ~55.0

* MMStar: ~59.6

* MMVet: ~61.2

* MathVision: ~65.4

* **w/o ST (Pink):** The "w/o ST" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~56.3

* MMStar: ~61.6

* MMVet: ~61.2

* MathVision: ~19.9

* **w/o BLO (Red):** The "w/o BLO" line forms a pentagon.

* MathVista: ~20.4

* WeMath: ~56.0

* MMStar: ~61.0

* MMVet: ~57.3

* MathVision: ~49.9

* **DreamPRM (Cyan):** The "DreamPRM" line forms a pentagon.

* MathVista: ~68.9

* WeMath: ~57.4

* MMStar: ~62.3

* MMVet: ~61.4

* MathVision: ~20.4

### Key Observations

* **DreamPRM:** The "DreamPRM" method (cyan line) consistently achieves high performance on MathVista, WeMath, MMStar, and MMVet, but performs poorly on MathVision across all three charts.

* **MathVision Performance:** MathVision consistently shows the lowest performance for most methods, especially in the "Data selection comparison" and "Test-time scaling comparison" charts.

* **Ablation Impact:** Removing AFL ("w/o AFL") seems to have a more significant impact on MathVista and MathVision compared to removing ST ("w/o ST") or BLO ("w/o BLO").

### Interpretation

The radar charts provide a comparative analysis of different methods and their impact on performance across various categories. The consistent high performance of DreamPRM on most categories suggests its robustness, while its poor performance on MathVision indicates a potential limitation or bias. The ablation study highlights the importance of AFL for MathVista and MathVision, suggesting that AFL plays a crucial role in these categories. The data suggests that the choice of data selection method, test-time scaling technique, and ablation conditions can significantly impact performance, and the optimal choice may depend on the specific category being considered.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Radar Charts: Performance Comparison of Math Problem Solving Techniques

### Overview

The image presents three radar charts comparing the performance of different techniques for solving math problems across four datasets: MathVista, MathVision, WeMath, and MMStar/MMVet. Each chart focuses on a different aspect of the comparison: data selection, test-time scaling, and ablation study. The performance is measured on a scale from approximately 0 to 70, indicated by the radial axis.

### Components/Axes

Each chart shares the following components:

* **Radial Axes:** Representing the four datasets: MathVista, MathVision, WeMath, and MMStar/MMVet. These are positioned equidistantly around the center of the chart.

* **Radial Scale:** A scale from 0 to 70, marked at intervals of approximately 10, indicating performance scores.

* **Lines:** Each line represents a different technique or configuration.

* **Legends:** Located at the bottom of each chart, identifying the color-coded lines.

The three charts have different titles and legends:

* **Chart 1: Data selection comparison**

* Legend:

* Yellow: No selection

* Orange: s1 selection

* Red: CaR selection

* Pink: DreamPRM

* Light Blue: Self-consistency

* **Chart 2: Test-time scaling comparison**

* Legend:

* Yellow: Self-consistency

* Orange: ORM

* Red: Self-correction

* Pink: DreamPRM

* Light Blue: No scaling

* **Chart 3: Ablation study**

* Legend:

* Yellow: w/o AFL

* Orange: w/o BLO

* Red: w/o ST

* Pink: DreamPRM

* Light Blue: w/o all

### Detailed Analysis

**Chart 1: Data selection comparison**

* **MathVista:** "No selection" (yellow) shows approximately 68.9, "s1 selection" (orange) shows approximately 62.3, "CaR selection" (red) shows approximately 45.8, "DreamPRM" (pink) shows approximately 54.7, and "Self-consistency" (light blue) shows approximately 61.4.

* **MathVision:** "No selection" (yellow) shows approximately 21.0, "s1 selection" (orange) shows approximately 20.2, "CaR selection" (red) shows approximately 40.4, "DreamPRM" (pink) shows approximately 57.4, and "Self-consistency" (light blue) shows approximately 61.3.

* **WeMath:** "No selection" (yellow) shows approximately 61.4, "s1 selection" (orange) shows approximately 52.3, "CaR selection" (red) shows approximately 59.7, "DreamPRM" (pink) shows approximately 66.1, and "Self-consistency" (light blue) shows approximately 64.2.

* **MMStar/MMVet:** "No selection" (yellow) shows approximately 61.3, "s1 selection" (orange) shows approximately 62.3, "CaR selection" (red) shows approximately 61.4, "DreamPRM" (pink) shows approximately 61.4, and "Self-consistency" (light blue) shows approximately 61.4.

**Chart 2: Test-time scaling comparison**

* **MathVista:** "Self-consistency" (yellow) shows approximately 68.9, "ORM" (orange) shows approximately 63.8, "Self-correction" (red) shows approximately 54.0, "DreamPRM" (pink) shows approximately 59.9, and "No scaling" (light blue) shows approximately 57.4.

* **MathVision:** "Self-consistency" (yellow) shows approximately 21.0, "ORM" (orange) shows approximately 20.2, "Self-correction" (red) shows approximately 40.4, "DreamPRM" (pink) shows approximately 57.4, and "No scaling" (light blue) shows approximately 61.3.

* **WeMath:** "Self-consistency" (yellow) shows approximately 61.4, "ORM" (orange) shows approximately 52.3, "Self-correction" (red) shows approximately 59.7, "DreamPRM" (pink) shows approximately 66.1, and "No scaling" (light blue) shows approximately 64.2.

* **MMStar/MMVet:** "Self-consistency" (yellow) shows approximately 61.3, "ORM" (orange) shows approximately 62.3, "Self-correction" (red) shows approximately 61.4, "DreamPRM" (pink) shows approximately 61.4, and "No scaling" (light blue) shows approximately 61.4.

**Chart 3: Ablation study**

* **MathVista:** "w/o AFL" (yellow) shows approximately 68.9, "w/o BLO" (orange) shows approximately 66.1, "w/o ST" (red) shows approximately 55.3, "DreamPRM" (pink) shows approximately 64.2, and "w/o all" (light blue) shows approximately 61.4.

* **MathVision:** "w/o AFL" (yellow) shows approximately 20.4, "w/o BLO" (orange) shows approximately 20.2, "w/o ST" (red) shows approximately 40.4, "DreamPRM" (pink) shows approximately 57.4, and "w/o all" (light blue) shows approximately 61.3.

* **WeMath:** "w/o AFL" (yellow) shows approximately 61.4, "w/o BLO" (orange) shows approximately 52.3, "w/o ST" (red) shows approximately 59.7, "DreamPRM" (pink) shows approximately 66.1, and "w/o all" (light blue) shows approximately 64.2.

* **MMStar/MMVet:** "w/o AFL" (yellow) shows approximately 61.3, "w/o BLO" (orange) shows approximately 62.3, "w/o ST" (red) shows approximately 61.4, "DreamPRM" (pink) shows approximately 61.4, and "w/o all" (light blue) shows approximately 61.4.

### Key Observations

* In all three charts, "DreamPRM" consistently performs well across all datasets, often achieving the highest scores.

* The performance varies significantly across the datasets. MathVista and WeMath generally show higher scores than MathVision and MMStar/MMVet.

* The ablation study (Chart 3) suggests that removing AFL ("w/o AFL") has a minimal impact on performance, while removing ST ("w/o ST") significantly reduces performance.

### Interpretation

These radar charts provide a comparative analysis of different techniques for math problem solving. The consistent strong performance of "DreamPRM" suggests it is a robust and effective approach. The variations in performance across datasets indicate that the effectiveness of different techniques may depend on the characteristics of the math problems within each dataset. The ablation study highlights the importance of the ST component for achieving high performance. The charts demonstrate a clear visual representation of the trade-offs between different techniques and their impact on performance across various datasets. The data suggests that DreamPRM is a strong baseline, and further improvements may be achieved by focusing on optimizing the ST component.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Radar Charts: Multi-Benchmark Performance Comparison

### Overview

The image displays three radar charts (also known as spider charts) arranged horizontally. Each chart compares the performance of different methods or model variants across five common benchmarks: MathVista, WeMath, MMStar, MMVet, and MathVision. The charts are titled "Data selection comparison," "Test-time scaling comparison," and "Ablation study," respectively. A method labeled "DreamPRM" (cyan line) appears in all three charts, serving as the common point of comparison.

### Components/Axes

- **Chart Type:** Radar Charts (Spider Plots)

- **Common Axes (Benchmarks):** Five axes radiate from the center, each representing a benchmark:

1. MathVista (Top)

2. WeMath (Top-Right)

3. MMStar (Bottom-Right)

4. MMVet (Bottom-Left)

5. MathVision (Top-Left)

- **Scale:** The concentric circles represent performance scores, increasing from the center (0) outward. The outermost ring appears to represent a score of approximately 70.

- **Legends:** Each chart has a legend positioned directly below it, mapping line colors to method names.

### Detailed Analysis

#### Chart 1: Data selection comparison

- **Legend (Bottom-Left):**

- Orange: No selection

- Purple: CaR selection

- Red: s1 selection

- Cyan: DreamPRM

- **Data Series & Approximate Values (Score on each benchmark):**

- **DreamPRM (Cyan):** Forms the outermost polygon. Values: MathVista ~68.9, WeMath ~57.1, MMStar ~61.1, MMVet ~60.1, MathVision ~65.0.

- **s1 selection (Red):** Forms an inner polygon. Values: MathVista ~65.8, WeMath ~52.7, MMStar ~50.1, MMVet ~50.1, MathVision ~60.0.

- **CaR selection (Purple):** Forms an inner polygon, generally inside the red line. Values: MathVista ~65.3, WeMath ~52.7, MMStar ~49.1, MMVet ~49.1, MathVision ~59.0.

- **No selection (Orange):** Forms the innermost polygon. Values: MathVista ~61.5, WeMath ~47.7, MMStar ~47.1, MMVet ~47.1, MathVision ~56.0.

- **Trend Verification:** The cyan line (DreamPRM) is consistently the outermost, indicating the highest performance across all five benchmarks. The red line (s1 selection) is generally next, followed by purple (CaR selection), with orange (No selection) being the innermost.
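The nesting claim above can be checked mechanically: one polygon lies outside another when its score is at least as high on every axis. The sketch below applies that test to the approximate values read off Chart 1 in this description. The helper names `dominates` and `nesting_order` are hypothetical, and the numbers are this description's estimates, not values from the underlying paper.

```python
# Approximate Chart 1 values as transcribed in the list above, in the
# order MathVista, WeMath, MMStar, MMVet, MathVision.
chart1 = {
    "DreamPRM": [68.9, 57.1, 61.1, 60.1, 65.0],
    "s1 selection": [65.8, 52.7, 50.1, 50.1, 60.0],
    "CaR selection": [65.3, 52.7, 49.1, 49.1, 59.0],
    "No selection": [61.5, 47.7, 47.1, 47.1, 56.0],
}


def dominates(outer, inner):
    """True if `outer` scores at least as high as `inner` on every axis."""
    return all(o >= i for o, i in zip(outer, inner))


def nesting_order(series):
    """Return series names outermost-first if the polygons are fully nested.

    Returns None as soon as two neighbouring polygons cross, i.e. neither
    contains the other on all axes.
    """
    names = sorted(series, key=lambda name: sum(series[name]), reverse=True)
    for outer, inner in zip(names, names[1:]):
        if not dominates(series[outer], series[inner]):
            return None
    return names


order = nesting_order(chart1)
print(order)
```

On these transcribed values the polygons are fully nested in the order stated, with s1 selection and CaR selection tied on WeMath, which is why the text says "generally" rather than "strictly".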

#### Chart 2: Test-time scaling comparison

- **Legend (Bottom-Center):**

- Orange: Self-consistency

- Purple: ORM

- Red: Self-correction

- Cyan: DreamPRM

- **Data Series & Approximate Values:**

- **DreamPRM (Cyan):** Outermost polygon. Values: MathVista ~68.9, WeMath ~60.1, MMStar ~62.3, MMVet ~61.3, MathVision ~65.0.

- **Self-correction (Red):** Inner polygon. Values: MathVista ~63.8, WeMath ~54.9, MMStar ~50.1, MMVet ~57.4, MathVision ~59.0.

- **ORM (Purple):** Inner polygon. Values: MathVista ~65.3, WeMath ~54.9, MMStar ~50.1, MMVet ~55.9, MathVision ~59.0.

- **Self-consistency (Orange):** Innermost polygon. Values: MathVista ~67.1, WeMath ~54.9, MMStar ~50.1, MMVet ~57.4, MathVision ~59.0.

- **Trend Verification:** DreamPRM (cyan) again forms the outermost shape. The other three methods (Self-consistency, ORM, Self-correction) are clustered more closely together in the middle range, with Self-consistency (orange) showing a notably higher score on MathVista compared to its performance on other axes.

#### Chart 3: Ablation study

- **Legend (Bottom-Right):**

- Orange: w/o AFL

- Purple: w/o ST

- Red: w/o BLO

- Cyan: DreamPRM

- **Data Series & Approximate Values:**

- **DreamPRM (Cyan):** Outermost polygon. Values: MathVista ~68.9, WeMath ~55.3, MMStar ~61.3, MMVet ~61.2, MathVision ~65.0.

- **w/o BLO (Red):** Inner polygon. Values: MathVista ~66.1, WeMath ~55.0, MMStar ~59.6, MMVet ~59.6, MathVision ~60.4.

- **w/o ST (Purple):** Inner polygon. Values: MathVista ~66.4, WeMath ~55.0, MMStar ~59.6, MMVet ~59.6, MathVision ~60.4.

- **w/o AFL (Orange):** Innermost polygon. Values: MathVista ~66.1, WeMath ~55.0, MMStar ~59.6, MMVet ~59.6, MathVision ~60.4.

- **Trend Verification:** DreamPRM (cyan) is the outermost. The three ablated versions (w/o AFL, w/o ST, w/o BLO) form nearly identical, overlapping polygons, suggesting that removing any one of these components (AFL, ST, BLO) has a similar, detrimental effect on performance across all benchmarks.
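To make "a similar, detrimental effect" concrete, the per-benchmark drop of each ablated variant relative to the full model can be tabulated. The following is a minimal sketch using the approximate Chart 3 values listed in this description; the function name `drops` and the one-decimal rounding are choices made for the illustration.

```python
# Axis order used throughout this description.
AXES = ["MathVista", "WeMath", "MMStar", "MMVet", "MathVision"]

# Approximate Chart 3 values as transcribed in the list above.
full_model = [68.9, 55.3, 61.3, 61.2, 65.0]  # DreamPRM
ablated = {
    "w/o AFL": [66.1, 55.0, 59.6, 59.6, 60.4],
    "w/o ST": [66.4, 55.0, 59.6, 59.6, 60.4],
    "w/o BLO": [66.1, 55.0, 59.6, 59.6, 60.4],
}


def drops(full_scores, variant_scores):
    """Per-axis score lost by the variant, rounded to one decimal place."""
    return [round(f - v, 1) for f, v in zip(full_scores, variant_scores)]


table = {
    name: dict(zip(AXES, drops(full_model, scores)))
    for name, scores in ablated.items()
}

for name, row in table.items():
    print(name, row)
```

On these readings the three variants differ only on MathVista, and the largest drop falls on MathVision for every variant.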

### Key Observations

1. **Consistent Superiority:** The "DreamPRM" method (cyan line) achieves the highest score on every single benchmark across all three comparison charts.

2. **Performance Hierarchy:** In the "Data selection comparison," a clear performance hierarchy is visible: DreamPRM > s1 selection > CaR selection > No selection.

3. **Clustering of Alternatives:** In the "Test-time scaling comparison," the alternative methods (Self-consistency, ORM, Self-correction) cluster together, performing significantly below DreamPRM but above the "No selection" baseline from the first chart.

4. **Impact of Ablation:** The "Ablation study" shows that removing any of the three components (AFL, ST, BLO) from the DreamPRM framework results in a similar and substantial drop in performance, indicating all are critical to its effectiveness.

5. **Benchmark Difficulty:** The relative ordering of benchmarks by score is not perfectly consistent across methods, but MathVista generally yields the highest scores, while MMStar and MMVet often yield the lowest for the non-DreamPRM methods.

### Interpretation

This set of charts presents a compelling technical narrative for the effectiveness of the "DreamPRM" method.

- **What the data suggests:** The data strongly suggests that DreamPRM is a superior approach for the task(s) measured by these five benchmarks (MathVista, WeMath, MMStar, MMVet, and MathVision). Its advantage is not marginal but substantial and consistent.

- **How elements relate:** The three charts build a logical argument:

1. **Chart 1** establishes that intelligent data selection (s1, CaR) helps, but DreamPRM's selection strategy is better.

2. **Chart 2** shows that even advanced test-time techniques (Self-consistency, ORM) are outperformed by DreamPRM's approach.

3. **Chart 3** deconstructs DreamPRM, revealing that its core components (AFL, ST, BLO) are all essential; removing any one degrades performance to a similar, lower level.

- **Notable Anomalies/Patterns:** The near-identical performance of the three ablated models in Chart 3 is striking. It suggests these components may be interdependent or contribute equally vital, non-redundant functionality. The high score of "Self-consistency" on MathVista in Chart 2, relative to its other scores, might indicate that this particular benchmark benefits more from simple ensemble methods than others do.

**In summary, the visual evidence positions DreamPRM as a state-of-the-art method whose performance gain stems from a synergistic combination of its core components, outperforming both simpler selection strategies and other sophisticated test-time scaling techniques.**

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Radar Charts: Method Performance Comparison Across Three Studies

### Overview

The image contains three radar charts comparing performance on five benchmarks (MathVista, WeMath, MathVision, MMVet, MMStar) across three studies: "Data selection comparison," "Test-time scaling comparison," and "Ablation study." Each chart uses colored lines to represent different experimental configurations (e.g., "No selection," "DreamPRM," "w/o AFL") and their scores on each benchmark.

---

### Components/Axes

#### Common Elements Across All Charts:

- **Axes**: Labeled with benchmark names:

`MathVista`, `WeMath`, `MathVision`, `MMVet`, `MMStar`

- **Legends**:

- **Data selection comparison**:

`No selection` (yellow), `s1 selection` (red), `CaR selection` (pink), `Self-consistency` (orange), `Self-correction` (purple), `ORM` (blue), `DreamPRM` (teal)

- **Test-time scaling comparison**:

`No selection` (yellow), `Self-consistency` (orange), `ORM` (blue), `DreamPRM` (teal), `w/o AFL` (orange), `w/o ST` (pink), `w/o BLO` (red), `DreamPRM` (teal)

- **Ablation study**:

`No selection` (yellow), `Self-consistency` (orange), `ORM` (blue), `DreamPRM` (teal), `w/o AFL` (orange), `w/o ST` (pink), `w/o BLO` (red), `DreamPRM` (teal)

- **Axis Markers**: Numerical values (e.g., 68.9, 57.4) placed at the outer edge of each axis.

#### Spatial Grounding:

- **Legends**: Positioned at the bottom of each chart.

- **Lines**: Colored lines connect data points for each configuration, radiating from the center to the axes.

- **Text Labels**: Numerical values are placed near the end of each line segment.

---

### Detailed Analysis

#### 1. **Data Selection Comparison**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.7` (Self-correction, purple).

- **WeMath**:

- Highest value: `57.4` (DreamPRM, teal).

- Lowest value: `54.2` (Self-consistency, orange).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (Self-correction, purple).

- **MMVet**:

- Highest value: `60.1` (No selection, yellow).

- Lowest value: `54.9` (Self-correction, purple).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (Self-correction, purple).

#### 2. **Test-Time Scaling Comparison**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **WeMath**:

- Highest value: `56.9` (DreamPRM, teal).

- Lowest value: `54.0` (w/o ST, pink).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

- **MMVet**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

#### 3. **Ablation Study**

- **MathVista**:

- Highest value: `68.9` (No selection, yellow).

- Lowest value: `54.9` (w/o BLO, red).

- **WeMath**:

- Highest value: `56.3` (DreamPRM, teal).

- Lowest value: `54.0` (w/o ST, pink).

- **MathVision**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

- **MMVet**:

- Highest value: `61.4` (No selection, yellow).

- Lowest value: `54.9` (w/o AFL, orange).

- **MMStar**:

- Highest value: `62.3` (No selection, yellow).

- Lowest value: `54.0` (w/o ST, pink).

---

### Key Observations

1. **Consistent Performance**:

- `MathVista` consistently achieves the highest values across all charts, particularly under "No selection" (yellow line).

- `DreamPRM` (teal) performs well in the first two charts but underperforms in the ablation study.

2. **Impact of Ablation**:

- Removing components (e.g., `w/o AFL`, `w/o ST`, `w/o BLO`) significantly reduces performance. For example:

- `w/o BLO` (red) in the ablation study shows the lowest values on all benchmarks.

- `w/o ST` (pink) in the test-time scaling and ablation studies has the lowest values for `WeMath` and `MathVision`.

3. **Benchmark-Specific Trends**:

- `WeMath` and `MathVision` show moderate performance, with `WeMath` benefiting more from `DreamPRM` in the first two charts.

- `MMVet` and `MMStar` exhibit similar trends, with `MMStar` slightly outperforming `MMVet` in the first chart.

---

### Interpretation

The data suggests that **data selection methods** (e.g., "No selection," "DreamPRM") have the most significant impact on performance, particularly for `MathVista`. The **ablation study** highlights the critical role of components like `BLO` (likely a key module) in maintaining high performance. Test-time scaling introduces variability, but scores on `MathVista` and `WeMath` remain robust. The repeated use of `DreamPRM` in the legends may indicate a focus on its importance in data selection and test-time scaling, though its performance drops in the ablation study, suggesting dependencies on other components.

**Notable Outliers**:

- `w/o BLO` (red) in the ablation study consistently underperforms, indicating its necessity for optimal results.

- `Self-correction` (purple) in the data selection comparison shows the lowest values on most benchmarks, suggesting it is less effective than other selection strategies.

This analysis underscores the importance of holistic system design, where individual components and selection strategies synergize to achieve peak performance.
