## Line Chart: Model Parameter Efficiency vs. Explained Variance

### Overview

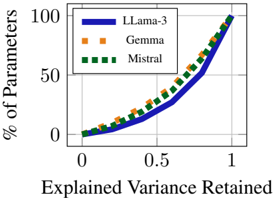

The image is a line chart that plots the percentage of model parameters retained against the explained variance retained for three large language models: LLama-3, Gemma, and Mistral. Each line is a trade-off curve showing what share of parameters is needed to retain a given fraction of the model's explained variance.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **X-Axis:** Labeled "Explained Variance Retained". It is a linear scale ranging from 0 to 1, with major tick marks at 0, 0.5, and 1.

* **Y-Axis:** Labeled "% of Parameters". It is a linear scale ranging from 0 to 100, with major tick marks at 0, 50, and 100.

* **Legend:** Positioned in the top-left corner of the chart area. It contains three entries:

    * `LLama-3`: Represented by a solid blue line.

    * `Gemma`: Represented by a dashed orange line.

    * `Mistral`: Represented by a dotted green line.

### Detailed Analysis

**Trend Verification:**

All three lines exhibit the same fundamental trend: they start near the origin (0,0) and curve upward in a convex, exponential-like fashion. This indicates that retaining a higher percentage of explained variance requires a disproportionately larger percentage of the model's parameters. The relationship is non-linear.
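The convexity claim can be sanity-checked against the approximate readings listed under "Data Series & Approximate Points". The sketch below is illustrative only: the coordinates are eyeballed values from this description, and where a range is given (for example 30-35) the midpoint is used, which is an assumption rather than data from the chart's source.

```python
# Approximate (x, y) readings taken from this chart description.
# Where the description gives a range, the midpoint is used (an assumption).
READINGS = {
    "LLama-3": [(0.0, 0.0), (0.5, 10.0), (0.75, 32.5), (1.0, 100.0)],
    "Gemma": [(0.0, 0.0), (0.5, 16.5), (0.75, 42.5), (1.0, 100.0)],
    "Mistral": [(0.0, 0.0), (0.5, 19.0), (0.75, 47.5), (1.0, 100.0)],
}


def segment_slopes(points):
    """Slope (% of parameters per unit of variance) between consecutive readings."""
    return [(y1 - y0) / (x1 - x0) for (x0, y0), (x1, y1) in zip(points, points[1:])]


def is_convex(points):
    """True if each segment is at least as steep as the one before it."""
    slopes = segment_slopes(points)
    return all(a <= b for a, b in zip(slopes, slopes[1:]))


for model, points in READINGS.items():
    slopes = [round(s, 1) for s in segment_slopes(points)]
    print(f"{model}: slopes={slopes} convex={is_convex(points)}")
```

With only four readings per curve this is a coarse check, but increasing segment slopes are what a convex curve would produce.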

**Data Series & Approximate Points:**

1. **LLama-3 (Solid Blue Line):**

    * Starts at approximately (0, 0).

    * At x=0.5 (50% variance retained), y is approximately 10% of parameters.

    * At x=0.75, y is approximately 30-35% of parameters.

    * Ends at (1, 100).

    * *Spatial Grounding:* This line is generally the lowest of the three for most of the x-axis range (0 to ~0.85), indicating it requires slightly fewer parameters to retain a given level of variance in that region.

2. **Gemma (Dashed Orange Line):**

    * Starts at approximately (0, 0).

    * At x=0.5, y is approximately 15-18% of parameters.

    * At x=0.75, y is approximately 40-45% of parameters.

    * Ends at (1, 100).

    * *Spatial Grounding:* This line is positioned between the LLama-3 and Mistral lines for most of the chart.

3. **Mistral (Dotted Green Line):**

    * Starts at approximately (0, 0).

    * At x=0.5, y is approximately 18-20% of parameters.

    * At x=0.75, y is approximately 45-50% of parameters.

    * Ends at (1, 100).

    * *Spatial Grounding:* This line is generally the highest of the three for most of the x-axis range (0 to ~0.85), indicating it requires slightly more parameters to retain a given level of variance in that region.

**Convergence:** All three lines converge at the point (1, 100), meaning 100% of parameters are required to retain 100% of the explained variance, which is a logical boundary condition.
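To estimate values between the listed readings, one option is piecewise-linear interpolation. The sketch below is a rough aid, not a reconstruction of the underlying data: the `READINGS` table and the `params_needed` helper are hypothetical names introduced here, and range midpoints are assumed where the description gives a range.

```python
from bisect import bisect_right

# Approximate (x, y) readings from this description; range midpoints are an assumption.
READINGS = {
    "LLama-3": [(0.0, 0.0), (0.5, 10.0), (0.75, 32.5), (1.0, 100.0)],
    "Gemma": [(0.0, 0.0), (0.5, 16.5), (0.75, 42.5), (1.0, 100.0)],
    "Mistral": [(0.0, 0.0), (0.5, 19.0), (0.75, 47.5), (1.0, 100.0)],
}


def params_needed(model, variance):
    """Linearly interpolate the % of parameters for a target variance in [0, 1]."""
    if not 0.0 <= variance <= 1.0:
        raise ValueError("variance must be between 0 and 1")
    points = READINGS[model]
    xs = [x for x, _ in points]
    # Index of the right-hand endpoint of the segment containing `variance`.
    i = min(bisect_right(xs, variance), len(points) - 1)
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (variance - x0) / (x1 - x0)


for model in READINGS:
    print(f"{model}: ~{params_needed(model, 0.6):.1f}% of parameters at variance 0.6")
```

Because the curves are convex, a straight chord between two readings lies on or above the curve, so these interpolated values should be read as upper-leaning estimates.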

### Key Observations

1. **Similar Efficiency Profiles:** The three models show remarkably similar trade-off curves. The vertical separation between the lines is small relative to the overall scale, suggesting comparable parameter efficiency for variance retention among these models.

2. **Diminishing Returns:** The steep upward curve demonstrates severe diminishing returns. The final 25% of explained variance (from 0.75 to 1.0) requires approximately 50-70% of the total parameters, depending on the model.

3. **Minor Relative Ordering:** For the majority of the curve (explained variance retained < ~0.85), the approximate ordering from most to least parameter-efficient is: LLama-3 > Gemma > Mistral. This ordering appears to reverse slightly in the very high variance region (>0.9), where the lines become tightly clustered.
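The diminishing-returns figure follows from simple arithmetic on the x=0.75 readings: since every curve ends at 100%, the share of parameters spent on the last quarter of variance is 100 minus the reading at 0.75. A minimal sketch, using the approximate ranges from this description (the helper name `final_quarter_cost` is introduced here for illustration):

```python
# (low, high) readings of % of parameters at x = 0.75, as listed in this description.
AT_075 = {"LLama-3": (30, 35), "Gemma": (40, 45), "Mistral": (45, 50)}


def final_quarter_cost(low, high):
    """Range of % of parameters spent moving from variance 0.75 to 1.0.

    A higher reading at 0.75 leaves fewer parameters for the final quarter,
    so the bounds swap: (100 - high, 100 - low).
    """
    return (100 - high, 100 - low)


for model, (low, high) in AT_075.items():
    cost_low, cost_high = final_quarter_cost(low, high)
    print(f"{model}: {cost_low}-{cost_high}% of parameters for variance 0.75 -> 1.0")
```

Under these readings, LLama-3 spends the largest share on the final quarter and Mistral the smallest, which is consistent with the curves converging at (1, 100).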

### Interpretation

This chart visualizes the **compression-efficiency trade-off** in large language models. It answers the question: "What percentage of a model's parameters is needed to preserve a given fraction of its explained variance?"

* **What it demonstrates:** The data suggests that a significant portion of a model's explained variance is captured by a relatively small subset of its parameters. For example, retaining 50% of the variance may require only 10-20% of the parameters. This is consistent with the motivation behind model pruning, distillation, and other efficient-inference techniques.

* **Relationship between elements:** The x-axis (Explained Variance Retained) is the independent variable representing the desired fidelity. The y-axis (% of Parameters) is the dependent variable representing the cost. The curves are the "cost functions" for each model architecture.

* **Notable implications:** The similarity between the curves suggests that the relationship between parameter count and explained variance is broadly consistent across these model families (LLama-3, Gemma, Mistral). The steepness of the curves in the high-variance region highlights the difficulty of retaining nearly all of a model's explained variance at a significantly reduced size; the last few percentage points of explained variance are disproportionately expensive in terms of parameters. This is relevant for engineers designing models for deployment on resource-constrained hardware.