TECHNICAL ASSET FINGERPRINT

9e6b91fb8e3e01390b7d2db9

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Llama-3.2 Model Performance

### Overview

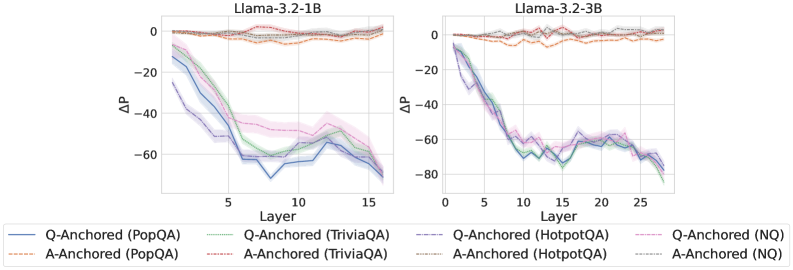

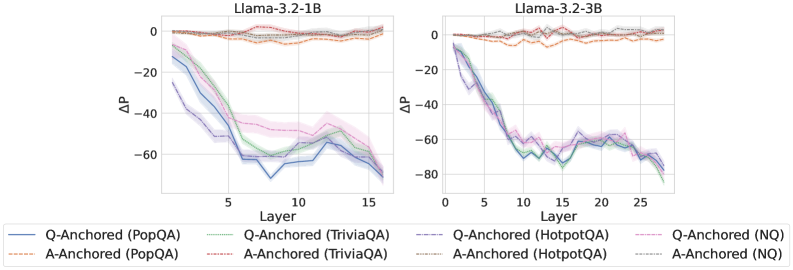

The image contains two line charts comparing the performance of Llama-3.2 models (1B and 3B) on various question-answering tasks. The charts plot the change in performance (ΔP) against the layer number of the model. Different lines represent different question-answering datasets, anchored either by question (Q-Anchored) or answer (A-Anchored).

### Components/Axes

**Left Chart (Llama-3.2-1B):**

* **Title:** Llama-3.2-1B

* **Y-axis:** ΔP (Change in Performance). Scale ranges from approximately -70 to 0. Markers at 0, -20, -40, -60.

* **X-axis:** Layer. Scale ranges from 0 to 15. Markers at 0, 5, 10, 15.

**Right Chart (Llama-3.2-3B):**

* **Title:** Llama-3.2-3B

* **Y-axis:** ΔP (Change in Performance). Scale ranges from approximately -80 to 0. Markers at 0, -20, -40, -60, -80.

* **X-axis:** Layer. Scale ranges from 0 to 25. Markers at 0, 5, 10, 15, 20, 25.

**Legend (Located below the charts):**

* **Q-Anchored (PopQA):** Solid Blue Line

* **A-Anchored (PopQA):** Dashed Orange Line

* **Q-Anchored (TriviaQA):** Dotted Green Line

* **A-Anchored (TriviaQA):** Dashed Pink Line

* **Q-Anchored (HotpotQA):** Dashed-dotted Purple Line

* **A-Anchored (HotpotQA):** Dotted Grey Line

* **Q-Anchored (NQ):** Dashed-dotted Pink Line

* **A-Anchored (NQ):** Dotted Grey Line

### Detailed Analysis

**Llama-3.2-1B Chart:**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0, rapidly decreases to around -60 by layer 5, then fluctuates between -60 and -70 until layer 15.

* **A-Anchored (PopQA):** (Dashed Orange) Remains relatively stable around 0 throughout all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0, decreases to around -50 by layer 5, then fluctuates between -50 and -60 until layer 15.

* **A-Anchored (TriviaQA):** (Dashed Pink) Starts at approximately 0, decreases to around -40 by layer 5, then fluctuates between -40 and -50 until layer 15.

* **Q-Anchored (HotpotQA):** (Dashed-dotted Purple) Starts at approximately 0, decreases to around -50 by layer 5, then fluctuates between -50 and -60 until layer 15.

* **A-Anchored (HotpotQA):** (Dotted Grey) Remains relatively stable around 0 throughout all layers.

* **Q-Anchored (NQ):** (Dashed-dotted Pink) Starts at approximately 0, decreases to around -40 by layer 5, then fluctuates between -40 and -50 until layer 15.

* **A-Anchored (NQ):** (Dotted Grey) Remains relatively stable around 0 throughout all layers.

**Llama-3.2-3B Chart:**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0, rapidly decreases to around -70 by layer 5, then fluctuates between -60 and -80 until layer 25.

* **A-Anchored (PopQA):** (Dashed Orange) Remains relatively stable around 0 throughout all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0, decreases to around -60 by layer 5, then fluctuates between -60 and -80 until layer 25.

* **A-Anchored (TriviaQA):** (Dashed Pink) Starts at approximately 0, decreases to around -60 by layer 5, then fluctuates between -60 and -70 until layer 25.

* **Q-Anchored (HotpotQA):** (Dashed-dotted Purple) Starts at approximately 0, decreases to around -60 by layer 5, then fluctuates between -60 and -80 until layer 25.

* **A-Anchored (HotpotQA):** (Dotted Grey) Remains relatively stable around 0 throughout all layers.

* **Q-Anchored (NQ):** (Dashed-dotted Pink) Starts at approximately 0, decreases to around -60 by layer 5, then fluctuates between -60 and -70 until layer 25.

* **A-Anchored (NQ):** (Dotted Grey) Remains relatively stable around 0 throughout all layers.

### Key Observations

* **Q-Anchored vs. A-Anchored:** Q-Anchored lines show a significant decrease in ΔP as the layer number increases, while A-Anchored lines mostly remain stable around 0 (the A-Anchored (TriviaQA) readings listed above are the exception).

* **Model Size:** The 3B model generally shows a slightly larger decrease in performance for Q-Anchored tasks compared to the 1B model.

* **Task Variation:** The specific question-answering task (PopQA, TriviaQA, HotpotQA, NQ) influences the magnitude of the performance decrease for Q-Anchored lines.

* **Layer Dependence:** The performance decrease for Q-Anchored tasks is most pronounced in the initial layers (up to layer 5), after which the performance fluctuates.
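
The layer-dependence observation can be checked numerically once the curve values are available. The following is a minimal sketch, not the method used to produce the charts: the function name `layer_reaching_fraction`, the 90% threshold, the `min_drop` cutoff, and the example values are all hypothetical and chosen only for illustration.

```python
from typing import Optional, Sequence


def layer_reaching_fraction(
    delta_p: Sequence[float],
    fraction: float = 0.9,
    min_drop: float = 5.0,
) -> Optional[int]:
    """Return the first layer at which the curve has covered `fraction`
    of its largest drop, or None if the curve is essentially flat."""
    if not delta_p:
        raise ValueError("delta_p must not be empty")
    start = delta_p[0]
    lowest = min(delta_p)
    # Treat curves whose total drop is below `min_drop` as flat.
    if start - lowest < min_drop:
        return None
    threshold = start + fraction * (lowest - start)
    for layer, value in enumerate(delta_p):
        if value <= threshold:
            return layer
    return len(delta_p) - 1


# Hypothetical per-layer values, NOT read from the charts.
q_anchored = [0.0, -15.0, -30.0, -45.0, -55.0, -60.0, -62.0, -58.0, -61.0, -63.0]
a_anchored = [0.0, -1.0, 0.5, -0.5, 0.0, -1.0, 0.0, -0.5, 0.0, -1.0]

print(layer_reaching_fraction(q_anchored))  # 5
print(layer_reaching_fraction(a_anchored))  # None
```

A small returned index for a Q-Anchored curve would support the reading that most of the drop happens in the early layers, while `None` corresponds to a curve that stays near 0.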

### Interpretation

The data suggests that anchoring the model by question (Q-Anchored) leads to a degradation in performance as the model processes deeper layers. This could indicate that the model struggles to maintain relevant information from the question as it progresses through the network. In contrast, anchoring by answer (A-Anchored) results in stable performance, suggesting that the model is better at retaining information related to the answer.

The larger performance decrease in the 3B model for Q-Anchored tasks might indicate that larger models are more susceptible to this degradation effect. The variation in performance decrease across different question-answering tasks suggests that the complexity or nature of the task influences the model's ability to retain question-related information.

The initial rapid decrease in performance followed by fluctuations suggests that the early layers of the model are critical for retaining question-related information, and that subsequent layers may not be able to fully compensate for any loss of information in the initial layers.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: ΔP vs. Layer for Llama Models

### Overview

The image presents two line charts, side-by-side, depicting the change in performance (ΔP) as a function of layer depth in two different Llama language models: Llama-3.2-1B and Llama-3.2-3B. Each chart displays multiple lines representing different question-answering datasets and anchoring methods. The charts aim to visualize how performance changes across layers for each model and dataset combination.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 15 for the 1B model and 0 to 25 for the 3B model). The axis is labeled "Layer".

* **Y-axis:** ΔP (ranging from approximately -80 to 0). The axis is labeled "ΔP".

* **Chart Titles:**

  * Left Chart: "Llama-3.2-1B"

  * Right Chart: "Llama-3.2-3B"

* **Legend:** Located at the bottom of the image, spanning both charts. The legend identifies the different lines based on dataset and anchoring method.

  * Q-Anchored (PopQA) - Blue solid line

  * A-Anchored (PopQA) - Orange dashed line

  * Q-Anchored (TriviaQA) - Blue dashed-dotted line

  * A-Anchored (TriviaQA) - Orange dashed-dotted line

  * Q-Anchored (HotpotQA) - Purple dashed line

  * A-Anchored (HotpotQA) - Purple dotted line

  * Q-Anchored (NQ) - Green solid line

  * A-Anchored (NQ) - Green dashed line

### Detailed Analysis

**Llama-3.2-1B Chart (Left)**

* **Q-Anchored (PopQA):** Starts at approximately 0 ΔP at Layer 0, rapidly decreases to approximately -60 ΔP by Layer 5, and then plateaus around -60 ΔP for layers 5-15.

* **A-Anchored (PopQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -40 ΔP by Layer 5, and then plateaus around -40 ΔP for layers 5-15.

* **Q-Anchored (TriviaQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -50 ΔP by Layer 5, and then plateaus around -50 ΔP for layers 5-15.

* **A-Anchored (TriviaQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -30 ΔP by Layer 5, and then plateaus around -30 ΔP for layers 5-15.

* **Q-Anchored (HotpotQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -40 ΔP by Layer 5, and then plateaus around -40 ΔP for layers 5-15.

* **A-Anchored (HotpotQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -20 ΔP by Layer 5, and then plateaus around -20 ΔP for layers 5-15.

* **Q-Anchored (NQ):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -50 ΔP by Layer 5, and then plateaus around -50 ΔP for layers 5-15.

* **A-Anchored (NQ):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -30 ΔP by Layer 5, and then plateaus around -30 ΔP for layers 5-15.

**Llama-3.2-3B Chart (Right)**

* **Q-Anchored (PopQA):** Starts at approximately 0 ΔP at Layer 0, rapidly decreases to approximately -60 ΔP by Layer 5, and then continues to decrease, reaching approximately -80 ΔP by Layer 25.

* **A-Anchored (PopQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -40 ΔP by Layer 5, and then continues to decrease, reaching approximately -60 ΔP by Layer 25.

* **Q-Anchored (TriviaQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -50 ΔP by Layer 5, and then continues to decrease, reaching approximately -70 ΔP by Layer 25.

* **A-Anchored (TriviaQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -30 ΔP by Layer 5, and then continues to decrease, reaching approximately -50 ΔP by Layer 25.

* **Q-Anchored (HotpotQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -40 ΔP by Layer 5, and then continues to decrease, reaching approximately -60 ΔP by Layer 25.

* **A-Anchored (HotpotQA):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -20 ΔP by Layer 5, and then continues to decrease, reaching approximately -40 ΔP by Layer 25.

* **Q-Anchored (NQ):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -50 ΔP by Layer 5, and then continues to decrease, reaching approximately -70 ΔP by Layer 25.

* **A-Anchored (NQ):** Starts at approximately 0 ΔP at Layer 0, decreases to approximately -30 ΔP by Layer 5, and then continues to decrease, reaching approximately -50 ΔP by Layer 25.

### Key Observations

* In both models, all lines exhibit a decreasing trend in ΔP as the layer depth increases, indicating a performance degradation with deeper layers.

* The 3B model shows a more pronounced and continuous decrease in ΔP across all datasets and anchoring methods compared to the 1B model.

* Q-Anchored lines generally have lower ΔP values than A-Anchored lines for the same dataset, suggesting that question-anchoring leads to a greater performance drop with increasing layer depth.

* The performance drop appears to stabilize after a certain layer depth in the 1B model, while it continues to decrease in the 3B model.

### Interpretation

The charts demonstrate that increasing the depth of the Llama models (moving to deeper layers) generally leads to a decrease in performance, as measured by ΔP. This suggests that the later layers may not be contributing positively to the model's ability to answer questions accurately. The more significant performance drop in the 3B model could indicate that deeper models are more susceptible to issues like overfitting or vanishing gradients.

The difference between Q-Anchored and A-Anchored lines suggests that the method used to anchor the questions affects how performance degrades with depth. Question-anchoring might be more sensitive to the complexities introduced by deeper layers.

The stabilization of performance in the 1B model after a certain layer depth could be due to the model's limited capacity. Once the model reaches its capacity, moving to deeper layers does not necessarily lead to further performance degradation. The continued decrease in the 3B model suggests that it has not yet reached its capacity and that deeper layers are still actively contributing to the performance drop.

These findings have implications for model architecture design and training strategies. It may be beneficial to explore techniques to mitigate performance degradation in deeper layers, such as regularization or layer pruning.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Llama-3.2 Model Layer-wise ΔP Analysis

### Overview

The image displays two side-by-side line charts comparing the layer-wise change in probability (ΔP) for two different-sized language models (Llama-3.2-1B and Llama-3.2-3B) across four question-answering datasets. The analysis contrasts two anchoring methods: "Q-Anchored" (solid lines) and "A-Anchored" (dashed lines).

### Components/Axes

* **Chart Titles:**

  * Left Chart: `Llama-3.2-1B`

  * Right Chart: `Llama-3.2-3B`

* **X-Axis (Both Charts):** Label: `Layer`. Represents the layer number within the neural network model.

  * Left Chart Scale: 0 to 15, with major ticks at 0, 5, 10, 15.

  * Right Chart Scale: 0 to 25, with major ticks at 0, 5, 10, 15, 20, 25.

* **Y-Axis (Both Charts):** Label: `ΔP`. Represents the change in probability. The scale is negative, indicating a decrease.

  * Left Chart Scale: 0 to -80, with major ticks at 0, -20, -40, -60.

  * Right Chart Scale: 0 to -80, with major ticks at 0, -20, -40, -60, -80.

* **Legend (Bottom Center, spanning both charts):** Contains 8 entries, differentiating by line style (solid/dashed) and color.

  * **Q-Anchored (Solid Lines):**

    * Blue: `Q-Anchored (PopQA)`

    * Green: `Q-Anchored (TriviaQA)`

    * Purple: `Q-Anchored (HotpotQA)`

    * Pink: `Q-Anchored (NQ)`

  * **A-Anchored (Dashed Lines):**

    * Orange: `A-Anchored (PopQA)`

    * Red: `A-Anchored (TriviaQA)`

    * Gray: `A-Anchored (HotpotQA)`

    * Brown: `A-Anchored (NQ)`

### Detailed Analysis

**Left Chart (Llama-3.2-1B):**

* **A-Anchored Series (Dashed Lines):** All four dashed lines (Orange, Red, Gray, Brown) remain clustered tightly near the top of the chart, fluctuating between approximately ΔP = 0 and ΔP = -10 across all layers (0-15). They show minimal downward trend.

* **Q-Anchored Series (Solid Lines):** All four solid lines show a pronounced downward trend.

  * **General Trend:** They start near ΔP = 0 at Layer 0, drop steeply until approximately Layer 7-8, then continue a more gradual decline with some fluctuations, ending between ΔP = -60 and -70 at Layer 15.

  * **Specific Series (Approximate End Values at Layer 15):**

    * Blue (PopQA): ~ -65

    * Green (TriviaQA): ~ -60

    * Purple (HotpotQA): ~ -68

    * Pink (NQ): ~ -62

**Right Chart (Llama-3.2-3B):**

* **A-Anchored Series (Dashed Lines):** Similar to the 1B model, the dashed lines remain near the top, fluctuating between ΔP = 0 and ΔP = -10 across layers 0-25.

* **Q-Anchored Series (Solid Lines):** The downward trend is even more pronounced and extends over more layers.

  * **General Trend:** A steep decline from Layer 0 to approximately Layer 10, followed by a plateau or slower decline with notable fluctuations between Layers 10-20, and a final drop towards Layer 25.

  * **Specific Series (Approximate End Values at Layer 25):**

    * Blue (PopQA): ~ -75

    * Green (TriviaQA): ~ -70

    * Purple (HotpotQA): ~ -78

    * Pink (NQ): ~ -72

### Key Observations

1. **Fundamental Dichotomy:** There is a stark and consistent separation between the behavior of Q-Anchored (solid) and A-Anchored (dashed) methods across both models and all datasets. A-Anchoring results in near-zero ΔP, while Q-Anchoring leads to significant negative ΔP.

2. **Model Size Effect:** The Llama-3.2-3B model (right chart) shows a more extended and slightly deeper decline for Q-Anchored series compared to the 1B model, correlating with its greater number of layers.

3. **Dataset Consistency:** The relative ordering and shape of the Q-Anchored lines are remarkably consistent across datasets within each model. For example, the Purple line (HotpotQA) is consistently among the lowest, while the Pink line (NQ) is often among the highest of the solid lines.

4. **Mid-Layer Fluctuations:** Both models exhibit non-monotonic behavior in the Q-Anchored series, with noticeable "bumps" or temporary recoveries in ΔP around Layers 10-14 (1B) and Layers 12-18 (3B).
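
The "bumps" or temporary recoveries described in the last observation can be located programmatically. This is a minimal sketch under stated assumptions: `recovery_layers`, its `min_rise` threshold, and the sample curve are hypothetical illustrations and are not values read from the charts.

```python
from typing import List, Sequence


def recovery_layers(delta_p: Sequence[float], min_rise: float = 1.0) -> List[int]:
    """Return the layer indices where ΔP rises by at least `min_rise`
    relative to the previous layer (a temporary recovery)."""
    return [
        layer
        for layer in range(1, len(delta_p))
        if delta_p[layer] - delta_p[layer - 1] >= min_rise
    ]


# Hypothetical Q-Anchored curve with one recovery; NOT read from the charts.
curve = [0.0, -20.0, -40.0, -55.0, -60.0, -52.0, -58.0, -65.0]
print(recovery_layers(curve))  # [5]
```

Raising `min_rise` filters out small fluctuations, so that only the more visible recoveries are reported.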

### Interpretation

This visualization demonstrates a core finding about the internal mechanics of these language models during a specific task (likely related to question answering or knowledge recall). The **ΔP** metric likely measures how much the model's probability assignment to a target answer changes as information is processed through its layers.

* **Anchoring Effect:** The "A-Anchored" condition (dashed lines) appears to provide a stable reference point that prevents significant probability drift, keeping ΔP near zero. In contrast, the "Q-Anchored" condition (solid lines) leads to a progressive and substantial decrease in probability as the signal propagates through the network. This suggests the model's internal representation or confidence in the answer is being systematically altered when anchored to the question versus the answer itself.

* **Layer-wise Processing:** The steep initial drop in Q-Anchored ΔP indicates that the most significant transformations occur in the early-to-mid layers. The fluctuations in deeper layers suggest complex, non-linear processing where the model may be integrating information or resolving conflicts, leading to temporary reversals in the probability trend.

* **Scalability:** The pattern holds across model sizes (1B vs. 3B parameters), indicating this is a fundamental characteristic of the model architecture or training, not an artifact of scale. The deeper model simply extends the process over more layers.

* **Robustness Across Domains:** The consistency across four distinct QA datasets (PopQA, TriviaQA, HotpotQA, NQ) implies this anchoring phenomenon is a general property of the model's operation, not specific to a single type of knowledge or question format.

In essence, the charts provide empirical evidence that the choice of anchoring point (question vs. answer) fundamentally dictates the trajectory of probability change through a transformer's layers, with Q-Anchoring inducing a strong, consistent decay in ΔP.
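
The reading of ΔP given above can be made concrete with a short sketch. It assumes, hypothetically, that ΔP is the change in the probability assigned to the target answer, expressed in percentage points, between a baseline run and a run in which a given layer is intervened on. The chart itself does not confirm this definition, and the function name `delta_p_points` and the example probabilities are illustrative only.

```python
from typing import List, Sequence


def delta_p_points(baseline_prob: float, intervened_probs: Sequence[float]) -> List[float]:
    """Per-layer ΔP in percentage points under the assumed definition:
    100 * (probability after intervening at layer i - baseline probability)."""
    if not 0.0 <= baseline_prob <= 1.0:
        raise ValueError("baseline_prob must be a probability in [0, 1]")
    return [100.0 * (p - baseline_prob) for p in intervened_probs]


# Hypothetical probabilities, one per layer; NOT taken from the charts.
print(delta_p_points(0.75, [0.75, 0.5, 0.25]))  # [0.0, -25.0, -50.0]
```

Under this assumed definition, a curve that stays near 0 means the intervention leaves the answer probability almost unchanged, and a strongly negative curve means the intervention removes most of it.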

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: ΔP vs. Layer for Llama-3.2-1B and Llama-3.2-3B Models

### Overview

The image contains two line graphs comparing ΔP for Q-Anchored and A-Anchored series across layers in two sizes of the Llama-3.2 model (1B and 3B). The graphs show trends in ΔP values as a function of layer depth, with distinct lines representing different datasets (PopQA, TriviaQA, HotpotQA, NQ) and anchoring strategies (Q-Anchored vs. A-Anchored).

---

### Components/Axes

- **X-axis (Layer)**: Represents the depth of the model layers, ranging from 0 to 15 for Llama-3.2-1B and 0 to 25 for Llama-3.2-3B.

- **Y-axis (ΔP)**: Represents the performance metric (ΔP), with values ranging from -80 to 0.

- **Legends**:

  - **Llama-3.2-1B (Left Graph)**:

    - **Blue Solid**: Q-Anchored (PopQA)

    - **Green Dashed**: Q-Anchored (TriviaQA)

    - **Orange Dotted**: A-Anchored (PopQA)

    - **Red Dashed**: A-Anchored (TriviaQA)

    - **Purple Dotted**: Q-Anchored (HotpotQA)

    - **Pink Dashed**: Q-Anchored (NQ)

  - **Llama-3.2-3B (Right Graph)**:

    - **Blue Solid**: Q-Anchored (PopQA)

    - **Green Dashed**: Q-Anchored (TriviaQA)

    - **Orange Dotted**: A-Anchored (PopQA)

    - **Red Dashed**: A-Anchored (TriviaQA)

    - **Purple Dotted**: Q-Anchored (HotpotQA)

    - **Pink Dashed**: Q-Anchored (NQ)

---

### Detailed Analysis

#### Llama-3.2-1B (Left Graph)

- **Q-Anchored (PopQA)**: Starts at 0, drops sharply to ~-60 by layer 5, then stabilizes with minor fluctuations.

- **Q-Anchored (TriviaQA)**: Similar to PopQA but with a slightly less steep decline, reaching ~-50 by layer 5.

- **A-Anchored (PopQA)**: Starts at 0, declines to ~-40 by layer 5, then stabilizes.

- **A-Anchored (TriviaQA)**: Similar to A-Anchored (PopQA) but with a slightly less steep decline.

- **Q-Anchored (HotpotQA)**: Starts at 0, drops to ~-50 by layer 5, then stabilizes.

- **Q-Anchored (NQ)**: Remains flat at 0 across all layers.

#### Llama-3.2-3B (Right Graph)

- **Q-Anchored (PopQA)**: Starts at 0, drops sharply to ~-70 by layer 5, then stabilizes with minor fluctuations.

- **Q-Anchored (TriviaQA)**: Similar to PopQA but with a slightly less steep decline, reaching ~-60 by layer 5.

- **A-Anchored (PopQA)**: Starts at 0, declines to ~-50 by layer 5, then stabilizes.

- **A-Anchored (TriviaQA)**: Similar to A-Anchored (PopQA) but with a slightly less steep decline.

- **Q-Anchored (HotpotQA)**: Starts at 0, drops to ~-60 by layer 5, then stabilizes.

- **Q-Anchored (NQ)**: Remains flat at 0 across all layers.

---

### Key Observations

1. **Initial Sharp Decline**: The Q-Anchored series for PopQA, TriviaQA, and HotpotQA show a sharp drop in ΔP within the first 5 layers, followed by stabilization.

2. **A-Anchored Series**: Show similar trends but with less pronounced declines and more gradual stabilization.

3. **NQ Series**: The Q-Anchored (NQ) line remains flat at 0, indicating no significant change in ΔP across layers.

4. **Layer Depth**: The 3B version (right graph) extends to 25 layers, showing consistent trends but with more variability in later layers (e.g., oscillations in Q-Anchored (HotpotQA) around layer 20).

---

### Interpretation

- **Model Behavior**: The sharp initial decline in ΔP for the Q-Anchored series suggests that anchoring has its strongest effect in the early layers, with little further change as layers deepen.

- **Dataset Differences**: PopQA and TriviaQA show similar trends, while HotpotQA exhibits slightly more variability, possibly due to differences in data complexity or model sensitivity.

- **Anchoring Strategy**: Q-Anchored series consistently show larger ΔP magnitudes than A-Anchored series, suggesting that question anchoring has the stronger effect in this context.

- **NQ Series**: The flat line reported for Q-Anchored (NQ) implies no significant layer-dependent change in ΔP for that series, in contrast to the other Q-Anchored series.

---

### Spatial Grounding

- **Legends**: Positioned at the bottom of each graph, with labels aligned to the left. Colors and line styles match the corresponding data series.

- **Axes**: ΔP (y-axis) is on the left, Layer (x-axis) is at the bottom. Both axes are labeled clearly.

- **Data Series**: Lines are plotted with distinct styles (solid, dashed, dotted) and colors (blue, green, orange, red, purple, pink) as per the legend.

---

### Uncertainties

- Approximate ΔP values are estimated from the graph (e.g., ~-60, ~-50) due to the lack of explicit numerical markers. Minor fluctuations in later layers (e.g., Llama-3.2-3B) may introduce slight variability in trend interpretation.
