# Technical Data Extraction: LLM Inference Performance Comparison

This document provides a comprehensive extraction of data from a technical performance chart comparing Large Language Model (LLM) inference speeds across different hardware platforms and optimization methods.

## 1. Metadata and Global Legend

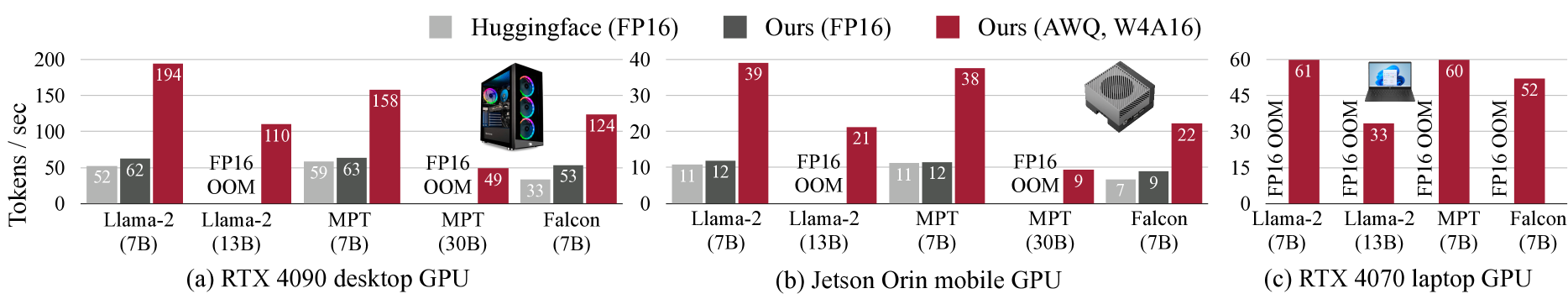

The image consists of three side-by-side bar charts comparing inference throughput measured in **Tokens / sec**.

**Global Legend (Top Center):**

* **Light Gray Square:** Huggingface (FP16)

* **Dark Gray Square:** Ours (FP16)

* **Maroon/Dark Red Square:** Ours (AWQ, W4A16)

**Common Abbreviations:**

* **OOM:** Out of Memory (indicates the model could not run on that specific hardware configuration).

* **FP16:** 16-bit Floating Point precision.

* **W4A16:** 4-bit weights with 16-bit activations (weight-only quantization).

* **AWQ:** Activation-aware Weight Quantization.
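To make the W4A16 notation concrete, here is a minimal NumPy sketch. It quantizes a weight matrix to 4-bit codes with one FP16 scale and zero-point per group of input channels, then dequantizes those weights back to FP16 before multiplying them with FP16 activations. It uses plain round-to-nearest group quantization, not AWQ's activation-aware scaling. The group size of 128 and the function names `quantize_w4`, `dequantize_w4`, and `w4a16_linear` are hypothetical choices for illustration.

```python
import numpy as np

def quantize_w4(w, group_size=128):
    """Round-to-nearest asymmetric 4-bit quantization, one scale/zero per group of input channels.

    Hypothetical helper: this is NOT AWQ's activation-aware method, only the W4 storage format idea.
    """
    out_features, in_features = w.shape
    if in_features % group_size != 0:
        raise ValueError("in_features must be divisible by group_size")
    groups = w.astype(np.float32).reshape(out_features, in_features // group_size, group_size)
    w_min = groups.min(axis=-1, keepdims=True)
    w_max = groups.max(axis=-1, keepdims=True)
    scale = (w_max - w_min) / 15.0            # 4 bits -> 16 levels (codes 0..15)
    scale = np.where(scale == 0, 1.0, scale)  # avoid division by zero for constant groups
    zero = np.round(-w_min / scale)
    # Codes are stored one per uint8 for clarity; real kernels pack two 4-bit codes per byte.
    q = np.clip(np.round(groups / scale) + zero, 0, 15).astype(np.uint8)
    return q, scale.astype(np.float16), zero.astype(np.float16)

def dequantize_w4(q, scale, zero):
    """Map 4-bit codes back to FP16 weights with shape (out_features, in_features)."""
    groups = (q.astype(np.float32) - zero.astype(np.float32)) * scale.astype(np.float32)
    return groups.reshape(q.shape[0], -1).astype(np.float16)

def w4a16_linear(x, q, scale, zero):
    """W4A16 linear layer: 4-bit weights are dequantized, activations stay FP16."""
    w = dequantize_w4(q, scale, zero)
    return x.astype(np.float16) @ w.T

rng = np.random.default_rng(0)
w = rng.standard_normal((64, 256)).astype(np.float16)
x = rng.standard_normal((2, 256)).astype(np.float16)
q, scale, zero = quantize_w4(w)
y = w4a16_linear(x, q, scale, zero)
print(y.shape, y.dtype)  # (2, 64) float16
```

The memory saving comes from storing weights as 4-bit codes (plus a small number of scales). The arithmetic still runs on FP16 values, which is what the "A16" half of the name refers to.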

---

## 2. Component Analysis

### (a) RTX 4090 Desktop GPU

* **Y-Axis:** 0 to 200 Tokens / sec (increments of 50).

* **Visual Trend:** The "Ours (AWQ, W4A16)" method (maroon) outperforms both FP16 baselines on every model that runs in FP16, by roughly 2.3x to 3.1x over "Ours (FP16)". The larger models (Llama-2 13B, MPT 30B) run out of memory in FP16 and run only with AWQ.

| Model | Huggingface (FP16) | Ours (FP16) | Ours (AWQ, W4A16) |
| :--- | :--- | :--- | :--- |
| **Llama-2 (7B)** | 52 | 62 | 194 |
| **Llama-2 (13B)** | FP16 OOM | FP16 OOM | 110 |
| **MPT (7B)** | 59 | 63 | 158 |
| **MPT (30B)** | FP16 OOM | FP16 OOM | 49 |
| **Falcon (7B)** | 33 | 53 | 124 |
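As a rough check of the RTX 4090 trend, the following sketch splits the gain into two factors. The first is "Ours (FP16)" over Huggingface (FP16), which reflects the implementation. The second is "Ours (AWQ, W4A16)" over "Ours (FP16)", which reflects quantization. The inputs are the chart readings from the table, so the ratios inherit their reading error. The dictionary layout and the `speedups` function name are illustrative choices.

```python
# Throughput in tokens/sec read from panel (a): (Huggingface FP16, Ours FP16, Ours AWQ W4A16).
# None marks an "FP16 OOM" cell.
RTX_4090 = {
    "Llama-2 (7B)": (52, 62, 194),
    "Llama-2 (13B)": (None, None, 110),
    "MPT (7B)": (59, 63, 158),
    "MPT (30B)": (None, None, 49),
    "Falcon (7B)": (33, 53, 124),
}

def speedups(hf_fp16, ours_fp16, ours_awq):
    """Return (implementation gain, quantization gain, total gain), or None if FP16 ran out of memory."""
    if hf_fp16 is None or ours_fp16 is None:
        return None
    return ours_fp16 / hf_fp16, ours_awq / ours_fp16, ours_awq / hf_fp16

for model, row in RTX_4090.items():
    s = speedups(*row)
    if s is None:
        print(f"{model}: FP16 OOM, only AWQ runs ({row[2]} tok/s)")
    else:
        impl, quant, total = s
        print(f"{model}: impl x{impl:.2f}, quant x{quant:.2f}, total x{total:.2f}")
```

With these readings, the implementation factor ranges from about 1.07x (MPT 7B) to 1.61x (Falcon 7B). The quantization factor ranges from about 2.3x (Falcon 7B) to 3.1x (Llama-2 7B). The total gain is the product of the two.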

---

### (b) Jetson Orin Mobile GPU

* **Y-Axis:** 0 to 40 Tokens / sec (increments of 10).

* **Visual Trend:** Throughput is lower than on the desktop GPU (AWQ reaches roughly one-fifth of the RTX 4090's AWQ throughput). The relative gain of the AWQ method remains large, however: roughly 2.4x to 3.5x over the FP16 baselines.

| Model | Huggingface (FP16) | Ours (FP16) | Ours (AWQ, W4A16) |
| :--- | :--- | :--- | :--- |
| **Llama-2 (7B)** | 11 | 12 | 39 |
| **Llama-2 (13B)** | FP16 OOM | FP16 OOM | 21 |
| **MPT (7B)** | 11 | 12 | 38 |
| **MPT (30B)** | FP16 OOM | FP16 OOM | 9 |
| **Falcon (7B)** | 7 | 9 | 22 |
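The Jetson Orin speedup range can be checked directly from the table. The sketch divides each AWQ reading by each FP16 baseline that did not run out of memory, then reports the smallest and largest ratio. The function name `awq_speedup_range` is hypothetical, and the inputs are approximate chart readings.

```python
# Throughput in tokens/sec read from panel (b): (Huggingface FP16, Ours FP16, Ours AWQ W4A16).
# None marks an "FP16 OOM" cell.
JETSON_ORIN = {
    "Llama-2 (7B)": (11, 12, 39),
    "Llama-2 (13B)": (None, None, 21),
    "MPT (7B)": (11, 12, 38),
    "MPT (30B)": (None, None, 9),
    "Falcon (7B)": (7, 9, 22),
}

def awq_speedup_range(table):
    """Min and max of AWQ throughput divided by each FP16 baseline, skipping OOM cells."""
    ratios = [
        awq / fp16
        for hf, ours, awq in table.values()
        for fp16 in (hf, ours)
        if fp16 is not None
    ]
    return min(ratios), max(ratios)

low, high = awq_speedup_range(JETSON_ORIN)
print(f"AWQ speedup over FP16 on Jetson Orin: {low:.2f}x to {high:.2f}x")
```

The smallest ratio is Falcon 7B against "Ours (FP16)" (22 / 9). The largest is Llama-2 7B against Huggingface (39 / 11).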

---

### (c) RTX 4070 Laptop GPU

* **Y-Axis:** 0 to 60 Tokens / sec (increments of 15).

* **Visual Trend:** On this hardware, all FP16 baselines (both Huggingface and "Ours") result in **OOM** for every model tested. Only the "Ours (AWQ, W4A16)" method is capable of running the models.

| Model | Huggingface (FP16) | Ours (FP16) | Ours (AWQ, W4A16) |
| :--- | :--- | :--- | :--- |
| **Llama-2 (7B)** | FP16 OOM | FP16 OOM | 61 |
| **Llama-2 (13B)** | FP16 OOM | FP16 OOM | 33 |
| **MPT (7B)** | FP16 OOM | FP16 OOM | 60 |
| **Falcon (7B)** | FP16 OOM | FP16 OOM | 52 |
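A back-of-the-envelope weight-memory estimate helps explain the OOM pattern. FP16 stores 2 bytes per weight and 4-bit weights need 0.5 bytes. The sketch below assumes nominal parameter counts taken from the model names, and assumes the standard VRAM sizes of 24 GB for the RTX 4090 and 8 GB for the RTX 4070 Laptop GPU. It counts weights only and ignores the KV cache, activations, quantization scales, and runtime overhead, so it gives a lower bound. The helper names are hypothetical.

```python
# Nominal parameter counts in billions (assumption: taken from the model names).
PARAMS_B = {"Llama-2 (7B)": 7, "Llama-2 (13B)": 13, "MPT (7B)": 7, "MPT (30B)": 30, "Falcon (7B)": 7}

# Assumed VRAM of the standard SKUs, in GB.
VRAM_GB = {"RTX 4090 Desktop": 24, "RTX 4070 Laptop": 8}

# Bytes per weight: FP16 = 16 bits; W4A16 weights = 4 bits.
BYTES_PER_WEIGHT = {"FP16": 2.0, "W4A16": 0.5}

def weight_gb(params_billion, fmt):
    """Weight storage only, in GB (1e9 bytes): a lower bound on total memory use."""
    return params_billion * BYTES_PER_WEIGHT[fmt]

def weights_fit(params_billion, fmt, vram_gb):
    """Necessary, not sufficient, condition for running: the weights alone must fit in VRAM."""
    return weight_gb(params_billion, fmt) < vram_gb

for device, vram in VRAM_GB.items():
    for model, params in PARAMS_B.items():
        verdicts = ", ".join(
            f"{fmt} {weight_gb(params, fmt):.1f} GB {'fits' if weights_fit(params, fmt, vram) else 'too large'}"
            for fmt in BYTES_PER_WEIGHT
        )
        print(f"{device} ({vram} GB) | {model}: {verdicts}")
```

Under these assumptions, the estimate agrees with the charts. On the RTX 4090, 7B FP16 weights (14 GB) fit, but 13B (26 GB) and 30B (60 GB) do not. On an 8 GB RTX 4070 Laptop, no FP16 model fits, while the 4-bit 7B and 13B weights (3.5 GB and 6.5 GB) do.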

---

## 3. Summary of Findings

1. **Optimization Impact:** The "Ours (AWQ, W4A16)" method consistently provides the highest throughput across all tested hardware and models.

2. **Memory Efficiency:** The AWQ method lets Llama-2 13B and MPT 30B run on the RTX 4090 and Jetson Orin, and lets every tested model run on the RTX 4070 Laptop. On those configurations, the FP16 implementations fail with out-of-memory (OOM) errors.

3. **Hardware Scaling:**

* The **RTX 4090** (Desktop) performs best, peaking at 194 tokens/sec (Llama-2 7B with AWQ).

* The **RTX 4070** (Laptop) cannot run any tested model in FP16, which points to insufficient VRAM. With AWQ it reaches 33 to 61 tokens/sec.

* The **Jetson Orin** (Mobile) provides functional but slower inference (9 to 39 tokens/sec with AWQ), suitable for edge deployment.
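To express the hardware-scaling comparison in relative terms, this sketch divides each device's AWQ throughput by the RTX 4090's. It covers the four models that appear in all three panels (MPT 30B is absent from the RTX 4070 panel). The values are the chart readings from the tables, and `relative_to` is a hypothetical helper name.

```python
# AWQ (W4A16) throughput in tokens/sec, read from the three panels.
AWQ_TOKENS_PER_SEC = {
    "RTX 4090 Desktop": {"Llama-2 (7B)": 194, "Llama-2 (13B)": 110, "MPT (7B)": 158, "Falcon (7B)": 124},
    "RTX 4070 Laptop": {"Llama-2 (7B)": 61, "Llama-2 (13B)": 33, "MPT (7B)": 60, "Falcon (7B)": 52},
    "Jetson Orin Mobile": {"Llama-2 (7B)": 39, "Llama-2 (13B)": 21, "MPT (7B)": 38, "Falcon (7B)": 22},
}

def relative_to(reference, table):
    """Divide every device's throughput by the reference device's throughput for the same model."""
    base = table[reference]
    return {
        device: {model: tps / base[model] for model, tps in rows.items()}
        for device, rows in table.items()
    }

rel = relative_to("RTX 4090 Desktop", AWQ_TOKENS_PER_SEC)
for device, rows in rel.items():
    cells = ", ".join(f"{model} {ratio:.0%}" for model, ratio in rows.items())
    print(f"{device}: {cells}")
```

With these readings, the RTX 4070 Laptop reaches about 30% to 42% of the RTX 4090's AWQ throughput, and the Jetson Orin about 18% to 24%.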