## State Transition Diagram & Memory Allocation Flowchart

### Overview

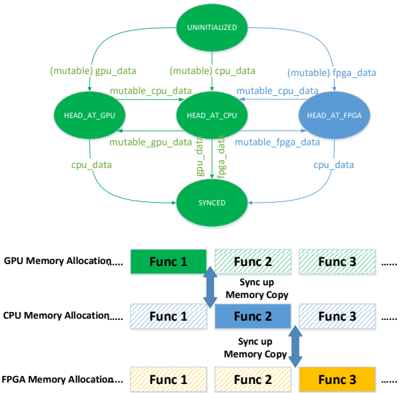

The image is a technical diagram in two related parts. The top section is a **state transition diagram** that tracks where the "head" (the authoritative copy) of a piece of data lives across three computing units: GPU, CPU, and FPGA. The bottom section is a **memory allocation flowchart** showing how per-function memory is allocated and synchronized across the memory spaces of the same three units. The flowchart is color-coded by unit (green for GPU, blue for CPU, yellow for FPGA). The state ovals in the top section are all green except `HEAD_AT_FPGA`, which is blue.

### Components

#### Top Section: State Transition Diagram

* **States (Ovals):**
    * `UNINITIALIZED` (Green, top center)
    * `HEAD_AT_GPU` (Green, left)
    * `HEAD_AT_CPU` (Green, center)
    * `HEAD_AT_FPGA` (Blue, right)
    * `SYNCED` (Green, bottom center)

* **Transitions (Arrows & Labels):**
    * From `UNINITIALIZED` to `HEAD_AT_GPU`: Label `{mutable} gpu_data`
    * From `UNINITIALIZED` to `HEAD_AT_CPU`: Label `{mutable} cpu_data`
    * From `UNINITIALIZED` to `HEAD_AT_FPGA`: Label `{mutable} fpga_data`
    * Between `HEAD_AT_GPU` and `HEAD_AT_CPU`:
        * GPU -> CPU: `mutable_cpu_data`
        * CPU -> GPU: `mutable_gpu_data`
    * Between `HEAD_AT_CPU` and `HEAD_AT_FPGA`:
        * CPU -> FPGA: `mutable_fpga_data`
        * FPGA -> CPU: `mutable_cpu_data`
    * To `SYNCED` State:
        * From `HEAD_AT_GPU`: `cpu_data`
        * From `HEAD_AT_CPU`: `gpu_data`, `fpga_data`
        * From `HEAD_AT_FPGA`: `cpu_data`
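
The arrows listed above can be written down as a lookup table. The following is a minimal sketch, not something taken from the diagram itself: the `Head` enum, the `TRANSITIONS` table, and the `step` and `run` helpers are hypothetical names introduced here, and the label strings are copied verbatim from the list, including the `{mutable}` prefix.

```python
from enum import Enum


class Head(Enum):
    """The five ovals of the state diagram."""
    UNINITIALIZED = "UNINITIALIZED"
    HEAD_AT_GPU = "HEAD_AT_GPU"
    HEAD_AT_CPU = "HEAD_AT_CPU"
    HEAD_AT_FPGA = "HEAD_AT_FPGA"
    SYNCED = "SYNCED"


# (current state, arrow label) -> next state, transcribed from the list above.
TRANSITIONS = {
    (Head.UNINITIALIZED, "{mutable} gpu_data"): Head.HEAD_AT_GPU,
    (Head.UNINITIALIZED, "{mutable} cpu_data"): Head.HEAD_AT_CPU,
    (Head.UNINITIALIZED, "{mutable} fpga_data"): Head.HEAD_AT_FPGA,
    (Head.HEAD_AT_GPU, "mutable_cpu_data"): Head.HEAD_AT_CPU,
    (Head.HEAD_AT_CPU, "mutable_gpu_data"): Head.HEAD_AT_GPU,
    (Head.HEAD_AT_CPU, "mutable_fpga_data"): Head.HEAD_AT_FPGA,
    (Head.HEAD_AT_FPGA, "mutable_cpu_data"): Head.HEAD_AT_CPU,
    (Head.HEAD_AT_GPU, "cpu_data"): Head.SYNCED,
    (Head.HEAD_AT_CPU, "gpu_data"): Head.SYNCED,
    (Head.HEAD_AT_CPU, "fpga_data"): Head.SYNCED,
    (Head.HEAD_AT_FPGA, "cpu_data"): Head.SYNCED,
}


def step(state, label):
    """Follow one arrow; reject any (state, label) pair that is not listed."""
    try:
        return TRANSITIONS[(state, label)]
    except KeyError:
        raise ValueError(f"no arrow labelled {label!r} leaves {state.name}") from None


def run(labels, state=Head.UNINITIALIZED):
    """Follow a sequence of arrow labels and return the final state."""
    for label in labels:
        state = step(state, label)
    return state


# UNINITIALIZED -> HEAD_AT_GPU -> HEAD_AT_CPU -> SYNCED
print(run(["{mutable} gpu_data", "mutable_cpu_data", "fpga_data"]).name)
```

Keeping the table strict (unlisted pairs raise) makes gaps in the description visible. For example, no arrow leaves `SYNCED`, and there is no direct arrow between `HEAD_AT_GPU` and `HEAD_AT_FPGA`.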

#### Bottom Section: Memory Allocation Flowchart

* **Rows (Memory Spaces):**
    * `GPU Memory Allocation....` (Top row, green theme)
    * `CPU Memory Allocation....` (Middle row, blue theme)
    * `FPGA Memory Allocation....` (Bottom row, yellow theme)

* **Function Blocks (Rectangles):**
    * Each row contains a sequence of blocks labeled `Func 1`, `Func 2`, `Func 3`, `......`.
    * **GPU Row:** `Func 1` is solid green. `Func 2` and `Func 3` are hatched green.
    * **CPU Row:** `Func 2` is solid blue. `Func 1` and `Func 3` are hatched blue.
    * **FPGA Row:** `Func 3` is solid yellow. `Func 1` and `Func 2` are hatched yellow.

* **Synchronization Arrows:**
    * A double-headed vertical arrow connects the solid `Func 1` (GPU) and hatched `Func 1` (CPU). Label: `Sync up Memory Copy`.
    * A double-headed vertical arrow connects the solid `Func 2` (CPU) and hatched `Func 2` (FPGA). Label: `Sync up Memory Copy`.
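
The grid of solid and hatched blocks described above is small enough to encode directly. This sketch is only an illustration of the layout as listed: `PRIMARY`, `SYNC_ARROWS`, `block_style`, and `render` are hypothetical names, and only the three functions the diagram actually names are included (the trailing `......` block is left out).

```python
UNITS = ("GPU", "CPU", "FPGA")

# Unit whose row shows the solid block for each function.
PRIMARY = {"Func 1": "GPU", "Func 2": "CPU", "Func 3": "FPGA"}

# (function, solid side, hatched side) for each "Sync up Memory Copy" arrow.
SYNC_ARROWS = [("Func 1", "GPU", "CPU"), ("Func 2", "CPU", "FPGA")]


def block_style(func, unit):
    """Return how the block for `func` is drawn in the row for `unit`."""
    return "solid" if PRIMARY[func] == unit else "hatched"


def render():
    """Render the three rows as plain text, one row per unit."""
    lines = []
    for unit in UNITS:
        cells = [f"{func}:{block_style(func, unit)}" for func in PRIMARY]
        lines.append(f"{unit:<4} | " + " | ".join(cells))
    return "\n".join(lines)


print(render())

# Every drawn arrow links a solid block to a hatched block of the same function.
for func, src, dst in SYNC_ARROWS:
    assert block_style(func, src) == "solid"
    assert block_style(func, dst) == "hatched"
```

Written this way, it is easy to see that the diagram draws only two of the possible solid-to-hatched links, and that no arrow is shown for `Func 3`.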

### Detailed Analysis

The diagram describes a scheme for managing data whose authoritative copy (the "head") resides on one of three hardware units at a time. The state machine records where that copy is located and how its location changes.

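1. **Initialization:** The system starts `UNINITIALIZED`. The first access places the head on the GPU, CPU, or FPGA. The braces in `{mutable}` presumably mark the prefix as optional, so that either `gpu_data` or `mutable_gpu_data` (for example) takes the system to `HEAD_AT_GPU`.

2. **Inter-Unit Communication:** Once initialized, the head can move between units. The labels on the arrows between `HEAD_AT_*` states name the access that triggers the move: `mutable_cpu_data`, for instance, moves the head to the CPU from either the GPU or the FPGA. No direct arrow is listed between `HEAD_AT_GPU` and `HEAD_AT_FPGA`, so a move between those two passes through `HEAD_AT_CPU`.

3. **Synchronization:** Each `HEAD_AT_*` state has an arrow into `SYNCED`. These arrows carry the non-mutable labels (`cpu_data`, `gpu_data`, `fpga_data`), which suggests that a read-only access from another unit copies the data across and leaves the units involved consistent. No arrows are listed leaving `SYNCED`.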

4. **Memory Allocation Pattern:** The flowchart shows a pattern where a specific function's primary (solid-colored) allocation resides in one unit's memory, while its counterparts (hatched) exist in other units' memories. `Func 1` is primary on GPU, `Func 2` on CPU, and `Func 3` on FPGA.

5. **Data Synchronization Flow:** The "Sync up Memory Copy" arrows explicitly show that data for a given function is copied between the unit where it is primary and the unit where it has a secondary allocation. For example, `Func 1` data is synced between GPU (primary) and CPU (secondary).
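
To make items 2, 3, and 5 concrete, here is a toy model of a buffer that copies lazily as accesses arrive. It is one plausible reading of the diagram, not a description of a real implementation. It rests on several assumptions: the arrow labels are treated as accessor calls, a read-only access from an adjacent unit copies the data and enters `SYNCED`, and a mutable access from `SYNCED` reclaims the head (the diagram as described shows no arrows leaving `SYNCED`). The class `SyncedBuffer` and its methods `data` and `mutable_data` are hypothetical names, and plain `bytearray` objects stand in for device memory.

```python
# Pairs of units joined by an arrow in the state diagram (no direct GPU <-> FPGA).
ADJACENT = {("GPU", "CPU"), ("CPU", "GPU"), ("CPU", "FPGA"), ("FPGA", "CPU")}


class SyncedBuffer:
    """Toy model: one bytearray per unit plus a head state (all names hypothetical)."""

    def __init__(self, size):
        self.size = size
        self.mem = {}            # unit -> bytearray, allocated on first access
        self.valid = set()       # units whose copy is currently up to date
        self.state = "UNINITIALIZED"
        self.copy_log = []       # (src, dst) for each simulated "Sync up Memory Copy"

    def _sync_to(self, unit):
        """Return `unit`'s buffer, copying from the head first if it is stale."""
        buf = self.mem.setdefault(unit, bytearray(self.size))
        if self.state == "UNINITIALIZED" or unit in self.valid:
            return buf
        if self.state == "SYNCED":
            raise NotImplementedError("diagram does not cover this access from SYNCED")
        head = self.state[len("HEAD_AT_"):]
        if (head, unit) not in ADJACENT:
            raise NotImplementedError(f"no direct {head} -> {unit} arrow in the diagram")
        buf[:] = self.mem[head]
        self.copy_log.append((head, unit))
        return buf

    def data(self, unit):
        """Read-only access, standing in for cpu_data / gpu_data / fpga_data."""
        was_uninitialized = self.state == "UNINITIALIZED"
        buf = self._sync_to(unit)
        if was_uninitialized:
            self.state, self.valid = "HEAD_AT_" + unit, {unit}
        elif unit not in self.valid:
            self.valid.add(unit)
            self.state = "SYNCED"
        return bytes(buf)

    def mutable_data(self, unit):
        """Writable access, standing in for mutable_*_data; moves the head to `unit`.

        Assumption: this also applies from SYNCED, which the diagram does not show.
        """
        buf = self._sync_to(unit)
        self.state, self.valid = "HEAD_AT_" + unit, {unit}
        return buf


buf = SyncedBuffer(4)
buf.mutable_data("GPU")[:] = b"\x01\x02\x03\x04"   # UNINITIALIZED -> HEAD_AT_GPU
assert buf.data("CPU") == b"\x01\x02\x03\x04"      # copies GPU -> CPU, enters SYNCED
print(buf.state, buf.copy_log)                     # SYNCED [('GPU', 'CPU')]
```

The point of the sketch is that copies happen only when an access finds a stale buffer: reading on the unit that already holds the head, or on a unit that is already in sync, logs no copy.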

### Key Observations

* **Color Consistency:** In the flowchart, color maps cleanly to unit: green for GPU memory, blue for CPU memory, yellow for FPGA memory. The state diagram does not follow that mapping. Every oval is green, including `UNINITIALIZED`, `SYNCED`, and `HEAD_AT_CPU`, except `HEAD_AT_FPGA`, which is blue.

* **Asymmetric State Color:** The `HEAD_AT_FPGA` state is blue, while `HEAD_AT_GPU` and `HEAD_AT_CPU` are green. Since blue denotes CPU memory in the flowchart, this does not match the unit color-coding. It may mark the FPGA state as a different category within the state machine, or it may simply be a drawing inconsistency.

* **Solid vs. Hatched Blocks:** The solid block in each memory row denotes the "home" or primary allocation for that function. The hatched blocks represent cached or secondary copies that must be synchronized with the primary.

* **Central Role of CPU:** In the state diagram, `HEAD_AT_CPU` is the central node, connected directly to all other states. In the flowchart, the CPU acts as an intermediary, syncing with both GPU (`Func 1`) and FPGA (`Func 2`).
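
The central position of `HEAD_AT_CPU` can be checked mechanically with a breadth-first search over the head-moving arrows. This is a small sketch under the assumption that the arrows listed in the state diagram description are complete; `MOVES` and `shortest_route` are hypothetical names.

```python
from collections import deque

# Head-moving arrows only, as listed in the state diagram description.
MOVES = {
    "HEAD_AT_GPU": ["HEAD_AT_CPU"],
    "HEAD_AT_CPU": ["HEAD_AT_GPU", "HEAD_AT_FPGA"],
    "HEAD_AT_FPGA": ["HEAD_AT_CPU"],
}


def shortest_route(src, dst):
    """Breadth-first search over MOVES; returns the states visited, or None."""
    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        if path[-1] == dst:
            return path
        for nxt in MOVES.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


# Moving the head from GPU to FPGA takes two hops, both through the CPU.
print(shortest_route("HEAD_AT_GPU", "HEAD_AT_FPGA"))
```

Under that assumption, any transfer between GPU and FPGA costs two moves, which matches the flowchart, where both `Sync up Memory Copy` arrows have the CPU row at one end.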

### Interpretation

This diagram illustrates a **heterogeneous computing memory coherency model**. It defines both the logical state of a shared data structure and the physical memory layout required to support operations across a CPU, GPU, and FPGA.

* **The State Machine** is the control logic. It records which unit holds the single authoritative, mutable "head" at any time, so that a stale copy on another unit is not mistaken for the current one. The `SYNCED` state represents a point where the units involved hold a consistent view of the data.

* **The Memory Flowchart** is the data layout and synchronization plan. It shows a deliberate partitioning of work (`Func 1` on GPU, `Func 2` on CPU, `Func 3` on FPGA) and defines the necessary data copy operations to maintain consistency between the primary copy and its replicas on other units.

* **The Connection:** The two parts are two views of the same system. A state transition that involves another unit (e.g., moving the head from GPU to CPU) would presumably be accompanied by one of the "Sync up Memory Copy" operations shown in the flowchart. The design suits workloads where different stages of computation fit different hardware (e.g., parallel tasks on GPU, sequential logic on CPU, specialized acceleration on FPGA) but need shared access to intermediate data. The model favors explicit control over data location and synchronization rather than automatic cache coherency. Explicit control of this kind is common in high-performance heterogeneous systems because it limits overhead and makes data movement predictable.