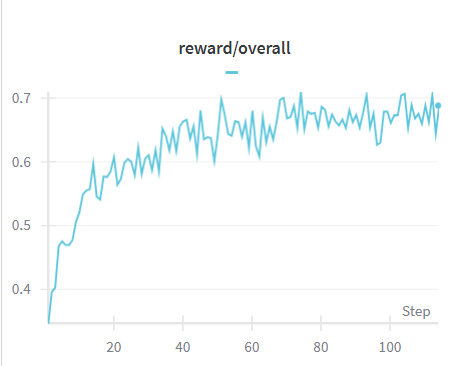

## Line Chart: Reward/Overall Over Training Steps

### Overview

The image displays a single-series line chart titled "reward/overall," plotting a performance metric against training steps. The chart shows a generally increasing trend with significant volatility, suggesting a learning or optimization process where the reward improves over time but with considerable step-to-step variation.

### Components/Axes

* **Chart Title:** "reward/overall" (centered at the top).
* **X-Axis:**
  * **Label:** "Step" (positioned at the bottom-right corner).
  * **Scale:** Linear, from 0 to approximately 110.
  * **Major Tick Marks:** Labeled at 20, 40, 60, 80, 100.
* **Y-Axis:**
  * **Label:** No explicit axis title; the axis represents the "reward/overall" value.
  * **Scale:** Linear, from approximately 0.35 to 0.75.
  * **Major Tick Marks:** Labeled at 0.4, 0.5, 0.6, 0.7.
* **Legend:**
  * **Position:** Top-center, just below the title.
  * **Content:** A short horizontal blue line followed by the text "reward/overall", indicating a single data series.
* **Data Series:**
  * **Color:** Blue (matching the legend).
  * **Type:** A continuous, jagged line connecting the data points at each step.

### Detailed Analysis

**Trend Verification:** The blue line shows a clear upward trend from left to right. It starts with a steep positive slope that gradually flattens but stays positive, with high-frequency oscillations superimposed throughout.
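The "steep, then flattening" description can also be checked numerically instead of by eye. A minimal sketch, assuming the logged values are available as parallel lists of steps and rewards (the function names `least_squares_slope` and `segment_slopes` are hypothetical, not part of any logging library), fits an ordinary least-squares line to each step window and compares the slopes:

```python
def least_squares_slope(xs, ys):
    """Return the ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    if var == 0:
        raise ValueError("xs must not all be equal")
    return cov / var


def segment_slopes(steps, values, windows):
    """Fit a separate slope to the points whose step lies in each [start, end) window."""
    slopes = []
    for start, end in windows:
        pts = [(s, v) for s, v in zip(steps, values) if start <= s < end]
        xs = [s for s, _ in pts]
        ys = [v for _, v in pts]
        slopes.append(least_squares_slope(xs, ys))
    return slopes
```

With windows such as `[(0, 20), (20, 111)]`, a first slope clearly larger than the second (with both positive) would match the trend described here. The window boundaries come from the visual reading of the chart, so they are a judgment call rather than something derived from the data.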

**Key Data Points and Segments:**

* **Start (Step ~0):** The line originates at a value of approximately **0.35**.
* **Initial Rapid Ascent (Steps 0-20):** The reward increases sharply, reaching approximately **0.60** by step 20. This segment has the steepest slope on the chart.
* **Volatile Plateau/Rise (Steps 20-110):** After step 20, the rate of increase slows and the line fluctuates within a noisy, gently rising channel.
  * The value oscillates primarily between **0.60** and **0.70**.
  * Notable local minima occur around steps 50 (~0.61) and 95 (~0.63).
  * Notable local maxima occur around steps 55 (~0.70), 75 (~0.70), and 105 (~0.70).
* **End (Step ~110):** The final visible data point is near **0.69**, at the upper end of the series' range.
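The local minima and maxima listed above were read off the plot by eye. The following is a sketch of one simple way to locate them programmatically, assuming the rewards are a plain list of floats. An index counts as an extremum if it holds the unique largest (or smallest) value within `half_window` points on each side. Both `local_extrema` and `half_window` are illustrative names; a wider window suppresses the minor step-to-step wiggles that a noisy curve like this one contains.

```python
def local_extrema(values, half_window=2):
    """Return (minima, maxima) as lists of indices into `values`.

    Index i is a local maximum if values[i] is the unique largest value in
    values[i - half_window : i + half_window + 1]; minima are defined the
    same way with the smallest value. Points closer than half_window to
    either end are skipped because their window would be incomplete.
    """
    if half_window < 1:
        raise ValueError("half_window must be at least 1")
    minima, maxima = [], []
    for i in range(half_window, len(values) - half_window):
        window = values[i - half_window : i + half_window + 1]
        v = values[i]
        # Require uniqueness so flat stretches are not reported as extrema.
        if v == max(window) and window.count(v) == 1:
            maxima.append(i)
        elif v == min(window) and window.count(v) == 1:
            minima.append(i)
    return minima, maxima
```

Applied to the logged rewards, the returned indices would still need to be mapped back to step numbers, and a larger `half_window` would keep only the more prominent turning points.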

### Key Observations

1. **High Volatility:** The line is highly jagged, indicating substantial variance in the "reward/overall" metric from one step to the next, even as the overall trend is positive.

2. **Diminishing Returns:** The most significant gains occur early (steps 0-20). Subsequent progress is slower and noisier.

3. **Performance Ceiling:** The metric repeatedly reaches roughly **0.70** after step 50 but does not sustain a clear break above that level within the visible range.

4. **Noisy Convergence:** The pattern is characteristic of a stochastic optimization process (e.g., reinforcement learning) where an agent's performance improves on average but is subject to exploration, environmental randomness, or policy instability.
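The "high volatility" observation can be quantified with a rolling standard deviation, meaning the spread of the most recent `window` values at each step. This is a minimal sketch using only the standard library. The name `rolling_std` and the window size are assumptions for illustration, and the population standard deviation (`statistics.pstdev`) is one reasonable choice among several.

```python
from statistics import pstdev


def rolling_std(values, window):
    """Population standard deviation of each trailing run of `window` values.

    The result has len(values) - window + 1 entries; entry k covers
    values[k : k + window].
    """
    if window < 2:
        raise ValueError("window must be at least 2")
    return [pstdev(values[i - window + 1 : i + 1]) for i in range(window - 1, len(values))]
```

A rolling spread that stays roughly constant after step 20 while the mean keeps drifting upward would be consistent with the noisy upward channel described in the analysis.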

### Interpretation

This chart likely visualizes the training progress of a machine learning model, most plausibly a reinforcement learning agent, with "reward/overall" as the primary performance metric. The data suggests:

* **Effective Learning:** The agent appears to learn a policy that substantially improves its reward during the first ~20 steps.

* **Exploration-Exploitation Trade-off:** The persistent volatility after step 20 may indicate ongoing exploration (trying new actions) or inherent stochasticity in the environment, preventing smooth convergence.

* **Potential Plateau:** The repeated failure to decisively break above 0.70 could signal that the agent has reached a local optimum, the limit of its current model capacity, or the maximum achievable reward under the given conditions. Further training beyond step 110 would be needed to determine if this is a true plateau.

* **Diagnostic Value:** The chart is useful for diagnosing training behavior. The high noise might prompt an engineer to adjust hyperparameters (such as learning rate or batch size), smooth the reported metric before plotting, or investigate the source of variance in the reward signal.
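Smoothing the reported metric, one of the adjustments mentioned above, is commonly done with an exponential moving average (EMA). The sketch below assumes a `weight` in [0, 1) (a hypothetical parameter name) where higher values keep more history, and divides by `1 - weight**t` so that early points are not biased toward the zero starting state. Smoothing changes only how the curve is displayed; it does not reduce the variance of the training process itself.

```python
def ema_smooth(values, weight=0.9):
    """Exponentially smooth a series with bias correction.

    weight = 0 returns the input unchanged; values closer to 1 average
    over a longer history and produce a smoother curve.
    """
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    smoothed = []
    running = 0.0
    for t, v in enumerate(values, start=1):
        running = weight * running + (1.0 - weight) * v
        # Correct for initializing `running` at 0 (matters most for small t).
        smoothed.append(running / (1.0 - weight ** t))
    return smoothed
```

Plotting `ema_smooth(rewards)` alongside the raw series (where `rewards` is the hypothetical list of logged values) would show the underlying trend more clearly while keeping the raw noise visible for comparison.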