## Venn Diagram: Transparent AI

### Overview

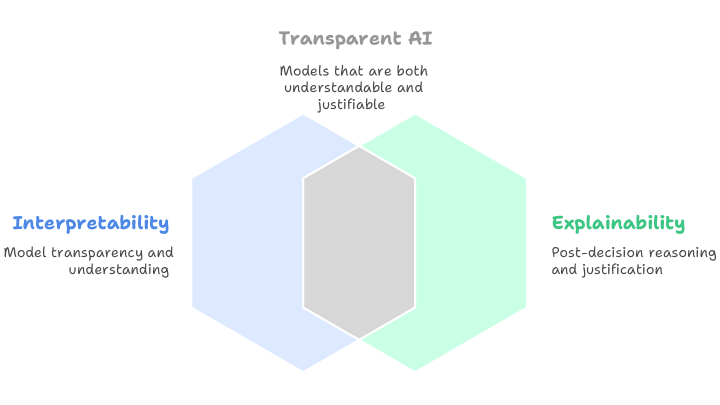

The image is a Venn diagram illustrating the relationship between **Interpretability**, **Explainability**, and **Transparent AI**. It uses overlapping hexagons: a blue one and a green one, with a gray region where they intersect representing Transparent AI. Short textual descriptions are embedded in each region. The diagram emphasizes the interplay between model transparency, post-hoc reasoning, and the ideal of "Transparent AI."

---

### Components/Axes

- **Title**: "Transparent AI" (centered at the top in gray text).

- **Subtitle**: "Models that are both understandable and justifiable" (below the title).

- **Hexagons**:

  - **Left (Blue)**: Labeled "Interpretability" with the text: "Model transparency and understanding."

  - **Right (Green)**: Labeled "Explainability" with the text: "Post-decision reasoning and justification."

  - **Overlap (Gray)**: Represents the intersection of Interpretability and Explainability, labeled "Transparent AI."

- **Legend**: No explicit legend; meaning is conveyed by color coding (blue = Interpretability, green = Explainability, gray = Transparent AI).

---

### Detailed Analysis

1. **Interpretability (Blue Hexagon)**:

   - Focuses on **model transparency and understanding**, emphasizing that AI systems should be inherently clear in their design and decision-making processes.

   - Its position on the left may suggest that foundational transparency is a prerequisite for broader AI accountability.

2. **Explainability (Green Hexagon)**:

   - Addresses **post-decision reasoning and justification**, highlighting methods that explain AI outputs after they are generated (e.g., LIME, SHAP).

   - Its position on the right suggests a reactive or supplementary form of transparency.

3. **Transparent AI (Gray Overlap)**:

   - The region where the blue and green hexagons intersect, symbolizing the synthesis of interpretability and explainability.

   - The subtitle reinforces this as the goal: models that are **both understandable** (interpretability) **and justifiable** (explainability).
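To make the difference between the blue and green regions concrete, here is a minimal Python sketch that is not taken from the diagram. It assumes a hypothetical loan-scoring setting: the feature names, weights, `black_box` model, and helper functions are all invented for illustration. The linear scorer stands in for interpretability, since its weights directly state how each input moves the output. For explainability it uses occlusion (set one feature to a baseline value and measure the change in output), a simpler post-hoc technique swapped in here; it is not LIME or SHAP.

```python
import math
from typing import Callable, Dict

Features = Dict[str, float]

# Hypothetical weights for an inherently interpretable (linear) model.
WEIGHTS: Features = {"income": 0.5, "debt": -0.8, "years_employed": 0.3}
BIAS = 0.1


def linear_score(x: Features) -> float:
    """Interpretable model: the output is a weighted sum anyone can read."""
    return BIAS + sum(w * x[name] for name, w in WEIGHTS.items())


def linear_contributions(x: Features) -> Features:
    """For a linear model, each feature's contribution is simply weight * value."""
    return {name: w * x[name] for name, w in WEIGHTS.items()}


def black_box(x: Features) -> float:
    """Hypothetical opaque model with a nonlinearity and an interaction term.

    The explainer below only calls this function; it never reads its internals.
    """
    z = 0.5 * x["income"] - 0.8 * x["debt"] + 0.2 * x["income"] * x["years_employed"]
    return math.tanh(z)


def occlusion_attribution(
    model: Callable[[Features], float], x: Features, baseline: Features
) -> Features:
    """Post-hoc explanation: drop in output when each feature is set to its baseline."""
    full = model(x)
    attributions: Features = {}
    for name in x:
        perturbed = dict(x)
        perturbed[name] = baseline[name]
        attributions[name] = full - model(perturbed)
    return attributions


if __name__ == "__main__":
    applicant: Features = {"income": 2.0, "debt": 1.0, "years_employed": 3.0}
    zeros: Features = {name: 0.0 for name in applicant}

    # Interpretability: the explanation is read directly off the model.
    print(linear_contributions(applicant))
    # Explainability: the same question answered by probing the model from outside.
    print(occlusion_attribution(linear_score, applicant, zeros))
    print(occlusion_attribution(black_box, applicant, zeros))
```

For the linear scorer with a zero baseline, occlusion recovers the weight-times-value contributions (up to floating-point rounding), so the post-hoc view and the built-in view agree. For the black box, the interaction between `income` and `years_employed` is credited to both features, so the attributions need not sum to the difference between the full and all-baseline outputs; this kind of double counting is one of the issues SHAP's Shapley-value weighting is designed to address.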

---

### Key Observations

- The diagram visually prioritizes the **integration** of interpretability and explainability to achieve Transparent AI, rather than treating them as separate or competing goals.

- The use of hexagons instead of the usual circles may simply be stylistic; the diagram gives no explicit indication that the shape carries additional meaning beyond the overlap itself.

- No numerical data or quantitative trends are present; the focus is on conceptual relationships.

---

### Interpretation

This diagram frames **Transparent AI as a holistic goal** rather than a standalone property, one that requires the convergence of two critical dimensions:

1. **Interpretability**: Ensuring models are designed to be inherently transparent (e.g., through simpler architectures such as linear models or shallow decision trees).

2. **Explainability**: Providing tools to retroactively justify decisions when full interpretability is impractical (e.g., in complex deep learning models).

The gray overlap (Transparent AI) is the diagram's focal point, suggesting that true transparency in AI systems demands both proactive design choices (interpretability) and reactive analytical frameworks (explainability). The absence of numerical data indicates a conceptual framework rather than an empirical study; the diagram is likely intended for educational or strategic discussions in AI ethics and governance.