## Diagram: Transparent AI

### Overview

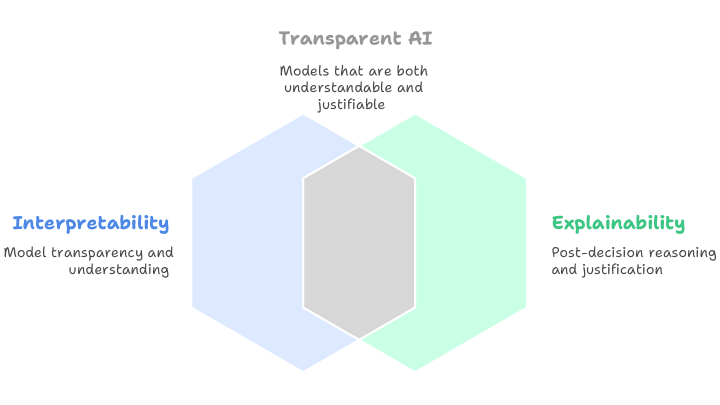

The image is a Venn-style diagram illustrating the concept of Transparent AI. Two overlapping hexagons represent "Interpretability" and "Explainability," and the region where they overlap marks Transparent AI as the intersection of the two concepts.

### Components/Axes

* **Title:** Transparent AI

* **Subtitle:** Models that are both understandable and justifiable

* **Left Hexagon:**

  * Label: Interpretability

  * Description: Model transparency and understanding

  * Color: Light Blue

* **Right Hexagon:**

  * Label: Explainability

  * Description: Post-decision reasoning and justification

  * Color: Light Green

* **Overlapping Region:**

  * Color: Light Gray

### Detailed Analysis

The diagram visually represents the relationship between Interpretability and Explainability in the context of Transparent AI.

* **Interpretability (Light Blue Hexagon):** This refers to the ability to understand how a model works and why it makes certain predictions. It emphasizes model transparency.

* **Explainability (Light Green Hexagon):** This refers to the ability to provide reasons or justifications for a model's decisions, particularly after the decision has been made.

* **Transparent AI (Light Gray Overlap):** The overlapping region signifies that Transparent AI requires both Interpretability and Explainability. Models must be both understandable and justifiable to be considered truly transparent.
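The distinction drawn above can be made concrete with a small sketch. This example is not part of the diagram. It assumes a hypothetical toy linear scorer, and the names `WEIGHTS`, `BIAS`, `predict`, `interpret`, and `explain` are illustrative only. Under those assumptions, `interpret` exposes the model's internals (interpretability), `explain` gives a per-decision justification after the fact (explainability), and a model offering both would sit in the overlap.

```python
# Hypothetical toy model: a linear scorer whose parameters can be read directly.
# The feature names and weights are made up for illustration.
WEIGHTS = {"income": 0.5, "debt": -0.8, "years_employed": 0.3}
BIAS = -0.2


def predict(features):
    """Return True when the linear score is positive."""
    score = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return score > 0


def interpret():
    """Interpretability: expose how the model works (its weights and bias)."""
    return dict(WEIGHTS, bias=BIAS)


def explain(features):
    """Explainability: justify one decision after it is made.

    Returns each feature's contribution to the score, largest magnitude first.
    """
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


applicant = {"income": 2.0, "debt": 1.0, "years_employed": 1.0}
decision = predict(applicant)   # the model's output for this applicant
structure = interpret()         # what the model is, independent of any input
reasons = explain(applicant)    # why this particular decision came out as it did
```

A linear model is used only because both views are trivial to compute for it. For more complex models, either view may need to be approximated.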

### Key Observations

* The diagram highlights that Interpretability and Explainability are distinct but related concepts.

* Transparent AI is presented as the intersection of these two concepts, implying that both are necessary for achieving transparency in AI models.

### Interpretation

The diagram suggests that Transparent AI is more than understanding how a model works (Interpretability) or justifying its decisions (Explainability) in isolation. A transparent model should be both understandable in its internal workings and capable of providing clear justifications for its outputs. By placing Transparent AI in the overlap, the diagram frames transparency as a holistic property in which understanding and justification go hand in hand.