
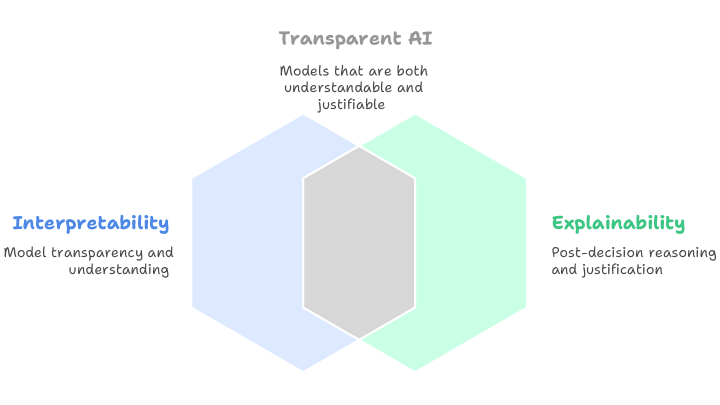

## Conceptual Diagram: Transparent AI Framework

### Overview

The image is a conceptual diagram illustrating the relationship between three key terms in artificial intelligence ethics and design: **Interpretability**, **Explainability**, and **Transparent AI**. It uses overlapping geometric shapes (hexagons) to visually represent how the first two concepts combine to form the third.

### Components

The diagram consists of three primary text labels, each associated with a colored hexagonal shape, and a central overlapping region.

1. **Top-Center Element:**

   * **Label:** "Transparent AI"

   * **Subtitle/Description:** "Models that are both understandable and justifiable"

   * **Associated Shape:** A gray hexagon at the center of the diagram, formed where the two larger hexagons overlap.

2. **Left Element:**

   * **Label:** "Interpretability"

   * **Subtitle/Description:** "Model transparency and understanding"

   * **Associated Shape:** A light blue hexagon positioned on the left side of the diagram.

3. **Right Element:**

   * **Label:** "Explainability"

   * **Subtitle/Description:** "Post-decision reasoning and justification"

   * **Associated Shape:** A light green (mint) hexagon positioned on the right side of the diagram.

### Detailed Analysis

* **Spatial Layout:** The diagram is arranged with "Transparent AI" at the top. Below it, the "Interpretability" (blue) hexagon is on the left, and the "Explainability" (green) hexagon is on the right. They overlap significantly in the center.

* **Visual Metaphor:** The central gray hexagon is drawn as the intersection of the blue and green hexagons. This visually communicates that "Transparent AI" is where "Interpretability" and "Explainability" meet.

* **Text Transcription:**

  * **Top:** `Transparent AI` / `Models that are both understandable and justifiable`

  * **Left:** `Interpretability` / `Model transparency and understanding`

  * **Right:** `Explainability` / `Post-decision reasoning and justification`

### Key Observations

* The diagram uses a simple Venn-diagram-like logic with hexagons instead of circles.

* Color is used to differentiate the two foundational concepts (blue for Interpretability, green for Explainability) and to highlight their synthesis (gray for Transparent AI).

* The text is concise, providing a one-line definition for each term.

* The layout is balanced and symmetrical, suggesting that the two contributing concepts carry equal weight.

### Interpretation

This diagram presents a simple compositional model for understanding AI transparency. It argues that **Transparent AI** is not a single property but a composite state achieved when an AI system possesses both:

1. **Interpretability:** The inherent, structural clarity of the model itself—how it works and arrives at decisions (the "understandable" part).

2. **Explainability:** The ability to provide meaningful, human-readable justifications for specific outputs or decisions after they occur (the "justifiable" part).

The visual overlap suggests these are distinct but closely interconnected disciplines. A model can be interpretable in its architecture (e.g., a decision tree) without, by itself, supplying a concise justification for a specific prediction. Conversely, a complex "black box" model can have post-hoc explainability tools applied to it, offering justification without inherent interpretability. The diagram posits that robust transparency requires both facets.

The central gray hexagon represents the ideal state where these two approaches converge: AI that is both comprehensible in its mechanics and accountable in its actions. Frameworks of this kind are commonly cited as a basis for building trust, supporting fairness assessments, and meeting regulatory requirements in AI systems.
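To make the interpretability/explainability distinction more concrete, here is a minimal, illustrative Python sketch. Everything in it is an assumption for illustration only: the feature names, weights, and both models are hypothetical. The post-hoc method is a simple occlusion (leave-one-feature-out) attribution, chosen for brevity rather than any particular tool such as LIME or SHAP. The linear model is interpretable by inspection, because its weights give each feature's contribution directly. The black-box function is only queried, and the occlusion routine justifies one prediction without revealing how the model works internally.

```python
import math
from typing import Callable, Dict

Features = Dict[str, float]

# --- Intrinsically interpretable model (hypothetical weights) ---
# In a linear scorer the parameters themselves show how each input
# moves the output, so the model is "understandable" by inspection.
WEIGHTS: Features = {"income": 0.5, "debt": -0.8, "age": 0.1}
BIAS = 0.2


def linear_score(x: Features) -> float:
    """Bias plus the weighted sum of the features."""
    return BIAS + sum(WEIGHTS[name] * x[name] for name in WEIGHTS)


def linear_contributions(x: Features) -> Features:
    """Read each feature's additive contribution straight off the weights."""
    return {name: WEIGHTS[name] * x[name] for name in WEIGHTS}


# --- Opaque model (hypothetical stand-in for a "black box") ---
def black_box_score(x: Features) -> float:
    """Nonlinear model with an interaction between income and age."""
    return math.tanh(x["income"] * x["age"] / 10.0) - x["debt"] ** 2 / 4.0


# --- Post-hoc, model-agnostic explanation (occlusion-style) ---
def occlusion_explanation(
    model: Callable[[Features], float], x: Features, baseline: Features
) -> Features:
    """For each feature, swap in its baseline value and record how much the
    model's output changes. The model is only queried, never inspected."""
    full = model(x)
    attributions: Features = {}
    for name in x:
        perturbed = dict(x)
        perturbed[name] = baseline[name]
        attributions[name] = full - model(perturbed)
    return attributions


if __name__ == "__main__":
    x = {"income": 2.0, "debt": 1.0, "age": 3.0}
    zero = {name: 0.0 for name in x}
    print("linear, read from weights:", linear_contributions(x))
    print("linear, post-hoc occlusion:", occlusion_explanation(linear_score, x, zero))
    print("black box, post-hoc occlusion:", occlusion_explanation(black_box_score, x, zero))
```

With a zero baseline, the occlusion attributions for the linear model coincide with the contributions read from its weights, so the post-hoc explanation and the model's own structure agree. For the black-box function they need not: because income and age interact, the per-feature attributions do not add up to the total change from the baseline output. This illustrates that post-hoc justification is not the same as inherent interpretability.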