TECHNICAL ASSET FINGERPRINT

9eb56edf6ac6dbe4f3febfb5

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## System Diagram: ReAct vs. RAP for Task Execution in ALFWorld and Franka Kitchen

### Overview

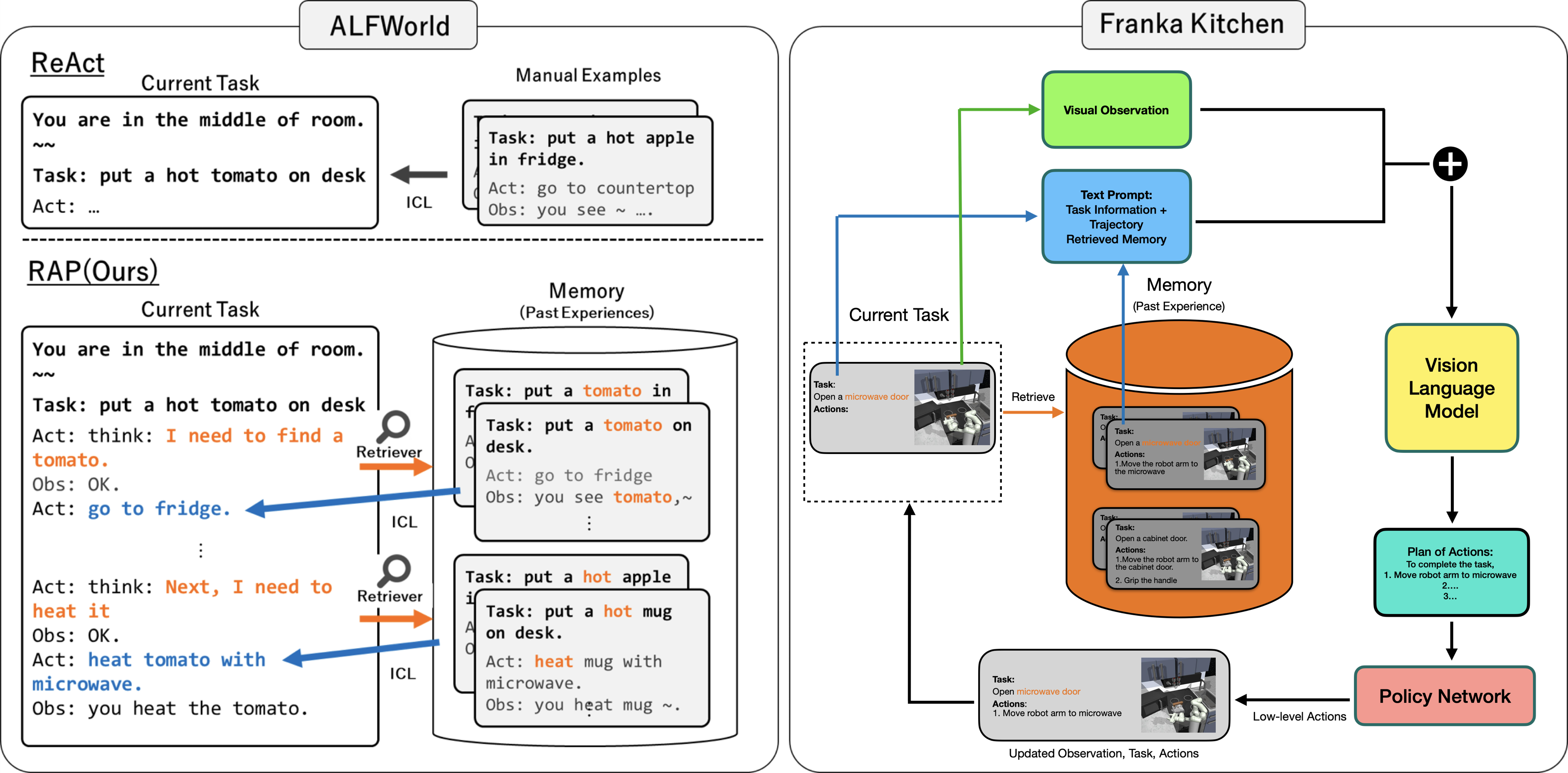

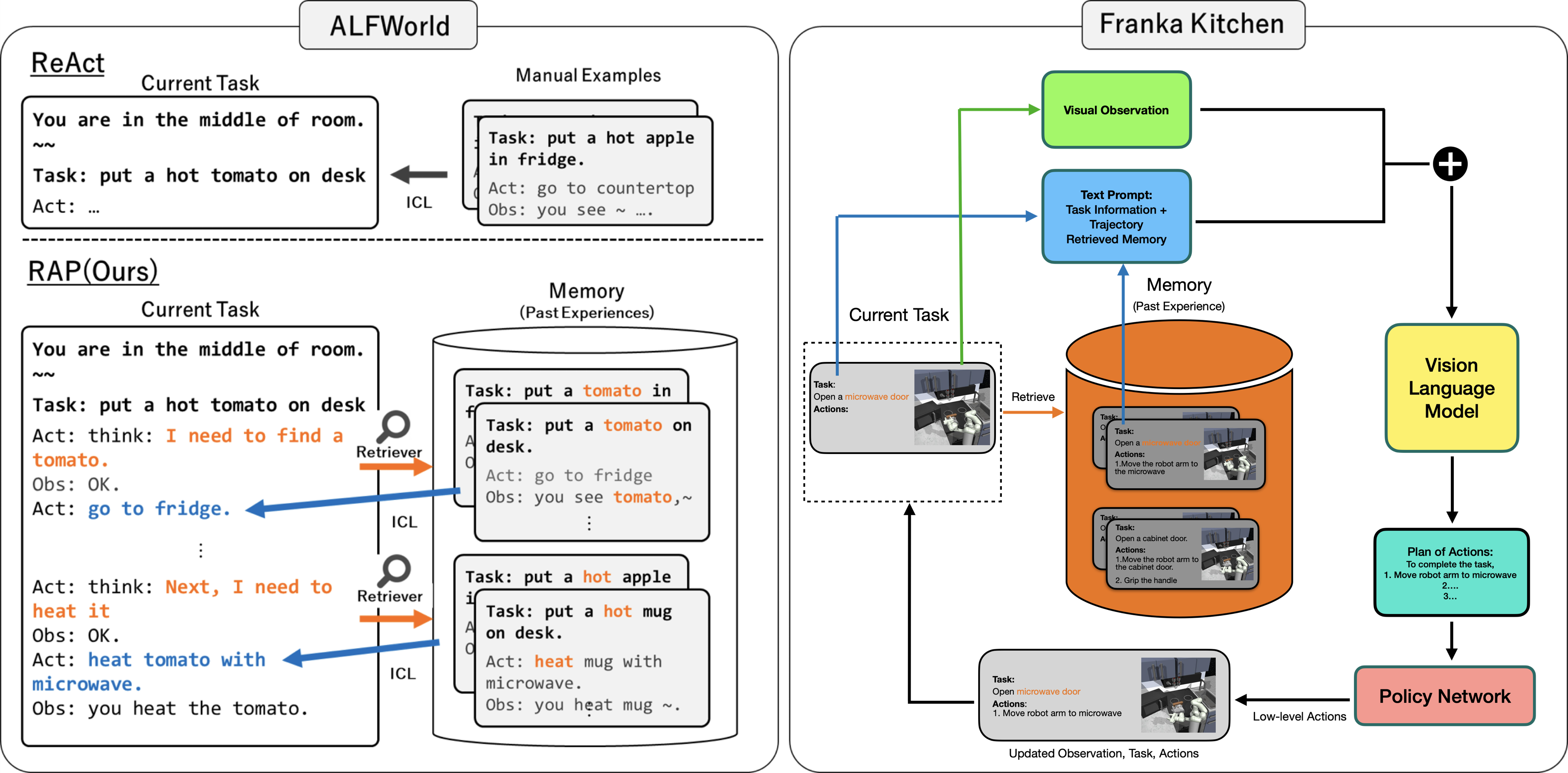

The image is a system diagram that compares two approaches, ReAct and RAP(Ours), in the ALFWorld environment and shows a separate memory-augmented pipeline for the Franka Kitchen environment. It traces the flow of information and actions in each system and highlights the roles of memory, visual observation, the vision language model, and the policy network.

### Components/Axes

**ALFWorld (Left Side):**

* **ReAct:**

  * **Current Task:** Displays the current task description: "You are in the middle of room. Task: put a hot tomato on desk. Act: ..."

  * **Manual Examples:** Contains manually written example tasks, such as "Task: put a hot apple in fridge. Act: go to countertop. Obs: you see ~ ..."

  * **ICL (In-Context Learning):** An arrow points from "Manual Examples" to "ReAct", indicating that the manual examples are used for in-context learning.

* **RAP(Ours):**

  * **Current Task:** Displays the current task description: "You are in the middle of room. Task: put a hot tomato on desk. Act: think: I need to find a tomato. Obs: OK. Act: go to fridge. ... Act: think: Next, I need to heat it. Obs: OK. Act: heat tomato with microwave. Obs: you heat the tomato."

  * **Retriever:** A magnifying glass icon labeled "Retriever" points from the "Current Task" to the "Memory (Past Experiences)" cylinder.

  * **Memory (Past Experiences):** A cylinder containing examples of past tasks and observations, such as "Task: put a tomato in fridge. Task: put a tomato on desk. Act: go to fridge. Obs: you see tomato, ~ ..." and "Task: put a hot apple on desk. Task: put a hot mug on desk. Act: heat mug with microwave. Obs: you heat mug ~."

  * **ICL (In-Context Learning):** An arrow points from "Memory (Past Experiences)" to "RAP(Ours)", indicating that retrieved past experiences are used for in-context learning.
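Both ALFWorld variants end in the same step: example transcripts are placed in the prompt ahead of the current task. The sketch below shows that assembly under an assumed format; `build_icl_prompt`, the `Example n:` headers, and the blank-line separators are hypothetical and not taken from the diagram. ReAct would pass the fixed manual examples, while RAP would pass the entries returned by its retriever.

```python
from typing import List


def build_icl_prompt(examples: List[str], current_task: str) -> str:
    """Place example transcripts ahead of the current task (hypothetical format).

    For ReAct, `examples` would be the fixed manual examples; for RAP, the
    memory entries returned by the retriever. Only the source differs.
    """
    parts = [f"Example {i}:\n{ex.strip()}" for i, ex in enumerate(examples, start=1)]
    parts.append(f"Current task:\n{current_task.strip()}")
    # Blank lines separate the transcripts so the model can tell them apart.
    return "\n\n".join(parts)


manual_examples = [
    "Task: put a hot apple in fridge. Act: go to countertop. Obs: you see ~ ...",
]
current = "You are in the middle of room. Task: put a hot tomato on desk. Act:"
prompt = build_icl_prompt(manual_examples, current)
print(prompt)
```

In this simplified framing, swapping `manual_examples` for retrieved entries is the only change needed to move from the ReAct setup to the RAP setup.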

**Franka Kitchen (Right Side):**

* **Visual Observation:** A green rectangle labeled "Visual Observation" at the top.

* **Text Prompt:** A blue rectangle labeled "Text Prompt: Task Information + Trajectory Retrieved Memory".

* **Memory (Past Experience):** An orange cylinder labeled "Memory (Past Experience)".

  * Contains examples of past tasks and actions, such as "Task: Open a microwave door. Actions: 1. Move the robot arm to the microwave" and "Task: Open a cabinet door. Actions: 1. Move the robot arm to the cabinet door. 2. Grip the handle".

* **Current Task:** A dotted rectangle labeled "Current Task" containing the current task and actions, such as "Task: Open a microwave door. Actions:". It also contains a small image of a robot arm in a kitchen environment.

* **Vision Language Model:** A yellow rectangle labeled "Vision Language Model".

* **Plan of Actions:** A teal rectangle labeled "Plan of Actions: To complete the task, 1. Move robot arm to microwave 2... 3...".

* **Policy Network:** A pink rectangle labeled "Policy Network".

* **Arrows:** Arrows indicate the flow of information between components:

  * Green arrow from "Visual Observation" to the "+" symbol.

  * Blue arrow from "Text Prompt" to the "+" symbol.

  * Black arrow from the "+" symbol to "Vision Language Model".

  * Black arrow from "Vision Language Model" to "Plan of Actions".

  * Black arrow from "Plan of Actions" to "Policy Network".

  * Black arrow from "Policy Network" to "Current Task" labeled "Low-level Actions".

  * Green arrow from "Current Task" to "Visual Observation".

  * Blue arrow from "Current Task" to "Text Prompt".

  * Orange arrow from "Memory (Past Experience)" to "Text Prompt".

  * Orange arrow from "Memory (Past Experience)" to "Current Task" labeled "Retrieve".

  * Black arrow from "Current Task" to "Updated Observation, Task, Actions".
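The "Text Prompt: Task Information + Trajectory Retrieved Memory" box and the "+" node can be read as two small functions. Everything in the sketch below is an assumption for illustration: `MemoryEntry`, `build_text_prompt`, `combine`, the "Retrieved memory:" header, and passing the image as a file path (`frame_000.png` is a placeholder) are hypothetical and do not correspond to any specific VLM API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class MemoryEntry:
    task: str
    actions: List[str]


def build_text_prompt(task: str, retrieved: List[MemoryEntry]) -> str:
    """Task information followed by retrieved trajectories (assumed layout)."""
    lines = [f"Task: {task}", "Retrieved memory:"]
    for entry in retrieved:
        lines.append(f"Task: {entry.task}")
        # Number the actions the way the diagram's memory examples do.
        lines.extend(f"{i}. {a}" for i, a in enumerate(entry.actions, start=1))
    return "\n".join(lines)


def combine(image_path: str, text_prompt: str) -> Dict[str, str]:
    """The "+" node: pair the visual observation with the text prompt."""
    return {"image": image_path, "text": text_prompt}


retrieved = [
    MemoryEntry("Open a cabinet door",
                ["Move the robot arm to the cabinet door", "Grip the handle"]),
]
vlm_input = combine("frame_000.png",
                    build_text_prompt("Open a microwave door", retrieved))
print(vlm_input["text"])
```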

### Detailed Analysis or Content Details

* **ReAct in ALFWorld:** The system starts with a current task and uses in-context learning (ICL) from manual examples to determine the next action.

* **RAP(Ours) in ALFWorld:** The system starts with a current task and uses a retriever to access relevant past experiences from memory. It then uses ICL to determine the next action.

* **Franka Kitchen System:** The system combines the visual observation with the text prompt and feeds the result into a vision language model. The model generates a plan of actions, which a policy network executes as low-level actions; these actions update the current task and observation.
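The Franka Kitchen loop described above can be sketched as one function. `vlm_plan` and `policy` are hypothetical stand-ins for the vision language model and the policy network; the stubs `fake_vlm` and `fake_policy` return fixed values, and the three-element action vector is a placeholder rather than the robot's real action space.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interfaces for the two learned components in the diagram.
PlanFn = Callable[[str, str], List[str]]  # (observation, task) -> plan steps
PolicyFn = Callable[[str, str], Tuple[List[float], str]]  # (step, observation) -> (action, next observation)


def run_episode(task: str, observation: str,
                vlm_plan: PlanFn, policy: PolicyFn) -> Tuple[Dict[str, object], List[List[float]]]:
    """Plan once with the VLM, then let the policy execute each plan step."""
    plan = vlm_plan(observation, task)
    low_level_actions: List[List[float]] = []
    for step in plan:
        # Each plan step yields a low-level action and a new observation.
        action, observation = policy(step, observation)
        low_level_actions.append(action)
    # Plain-dict version of the "Updated Observation, Task, Actions" box.
    record = {"task": task, "actions": plan, "observation": observation}
    return record, low_level_actions


def fake_vlm(observation: str, task: str) -> List[str]:
    return ["Move robot arm to microwave", "Grip the handle"]


def fake_policy(step: str, observation: str) -> Tuple[List[float], str]:
    return [0.0, 0.0, 0.0], f"{observation} | done: {step}"


record, actions = run_episode("Open a microwave door", "start", fake_vlm, fake_policy)
print(record["observation"])
```

This version plans once and then executes every step. The arrows that feed the updated observation back into the inputs suggest re-planning is also possible; calling `vlm_plan` inside the loop with the new observation would model that, at the cost of one VLM call per step.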

### Key Observations

* **ALFWorld:** ReAct relies on manual examples, while RAP(Ours) uses a retriever to access past experiences.

* **Franka Kitchen:** The system integrates visual and textual information to generate a plan of actions.

* **Memory:** RAP(Ours) in ALFWorld and the Franka Kitchen pipeline both draw on a memory of past experiences, whereas ReAct relies only on fixed manual examples.

* **Vision Language Model:** The Franka Kitchen system uses a vision language model to bridge the gap between visual and textual information.

### Interpretation

The diagram illustrates two different approaches to task execution. ReAct relies on manual examples for in-context learning, while RAP(Ours) uses a retriever to access past experiences. The Franka Kitchen system integrates visual and textual information to generate a plan of actions, which is then executed by a policy network.

The diagram suggests that RAP(Ours) may be more adaptable to new situations, as it can leverage past experiences to inform decision-making. The Franka Kitchen system may be more robust to noisy or incomplete information, as it integrates visual and textual information.

The use of a vision language model in the Franka Kitchen system highlights the importance of bridging the gap between visual and textual information in robotics.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Robotic Task Planning and Execution

### Overview

This diagram compares three approaches to robotic task planning and execution: ReAct, RAP (Ours), and a system that uses visual observation in a simulated environment (Franka Kitchen). It depicts the flow of information and the use of memory and retrieval mechanisms in each approach. The example tasks involve moving and heating household objects (tomato, apple, mug) and opening kitchen fixtures (microwave and cabinet doors).

### Components/Axes

The diagram is divided into three main sections, one per approach. The components that appear across these sections are:

* **Current Task:** Displays the current task being processed by the system.

* **Memory (Past Experiences):** Represents the system's memory of previous interactions.

* **Retrieval Mechanisms:** Illustrates how past experiences are retrieved to inform current actions.

* **ICL (In-Context Learning):** Shows the use of examples to guide the system.

* **Vision Language Model:** (Franka Kitchen section only) Represents the component that processes visual information.

* **Policy Network:** (Franka Kitchen section only) Represents the component that generates low-level actions.

The diagram also includes labels for actions ("Act:") and observations ("Obs:").

### Detailed Analysis or Content Details

**1. ReAct (Top-Left)**

* **Current Task:** "You are in the middle of room." and "Task: put a hot tomato on desk". "Act: ..."

* **Manual Examples:**

  * "Task: put a hot apple in fridge." "Act: go to countertop" "Obs: you see ~"

* **ICL:** An arrow labeled ICL links the Manual Examples to the Current Task.

**2. RAP (Ours) (Center-Left)**

* **Current Task:** "You are in the middle of room." and "Task: put a hot tomato on desk". "Act: think: I need to find a tomato." "Obs: OK." "Act: go to fridge."

* **Memory (Past Experiences):** Contains two examples:

  * "Task: put a tomato in fridge." "Act: go to fridge" "Obs: you see tomato, ~"

  * "Task: put a hot apple on desk." "Act: heat mug with microwave." "Obs: you heat mug ~."

* **Retrieval Mechanisms:** Two "Retriever" blocks with arrows pointing from the Current Task to the Memory.

* **ICL:** Arrows connect the Retriever blocks to the Current Task, indicating the use of retrieved information.
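The Retriever ranks memory entries by relevance to the current task. The diagram does not say how entries are scored, so the sketch below uses plain word-overlap (Jaccard) similarity on the task text as a stand-in; a real system might use learned embeddings instead. The memory entries are adapted from the transcriptions in this document, and `retrieve`, `jaccard`, and `Entry` are hypothetical names.

```python
import re
from typing import List, Set, Tuple

Entry = Tuple[str, str]  # (task description, stored trajectory text)


def tokens(text: str) -> Set[str]:
    # Lower-cased alphabetic words; punctuation and digits are dropped.
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    return len(a & b) / len(a | b) if a | b else 0.0


def retrieve(query_task: str, memory: List[Entry], k: int = 1) -> List[Entry]:
    """Return the k entries whose task text overlaps most with the query."""
    q = tokens(query_task)
    ranked = sorted(memory, key=lambda e: jaccard(q, tokens(e[0])), reverse=True)
    return ranked[:k]


memory = [
    ("put a tomato in fridge", "Act: go to fridge. Obs: you see tomato, ~ ..."),
    ("put a hot mug on desk", "Act: heat mug with microwave. Obs: you heat mug ~."),
]
best = retrieve("put a hot tomato on desk", memory, k=1)
print(best[0][0])  # put a hot mug on desk
```

The mug entry scores 5/7 against 3/8 for the fridge entry because it shares "hot", "on", and "desk" with the query, which is the kind of transfer (heating a mug to learn how to heat a tomato) that the RAP example relies on.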

**3. Franka Kitchen (Right)**

* **Visual Observation:** An image of a microwave is shown.

* **Text Prompt:** "Task Information + Trajectory Retrieved Memory"

* **Memory (Past Experience):**

* **Vision Language Model:** Receives input from the Text Prompt for the task "Open a microwave door" and outputs "Plan of Actions: 1. Move robot arm to microwave 2. ..."

* **Policy Network:** Receives the Plan of Actions from the Vision Language Model and generates low-level actions.

* **Low-level Actions:** "Updated Observation, Task, Actions"

* **Task Examples (within Vision Language Model):**

  * "Task: Open a microwave door" "Actions: 1. Move robot arm to microwave 2. Grip the handle"

  * "Task: Open a cabinet door" "Actions: 1. Move robot arm to cabinet door 2. Grip the handle"

  * "Task: Open microwave door" "Actions: 1. Move robot arm to microwave 2. Grip the handle"
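The plan and the task examples above are numbered step lists embedded in free text. Before a policy can execute them one at a time, they must be split into steps. The regex-based `parse_plan` below is a hypothetical helper; it assumes steps are written as `N. text` and treats `...` as an elided step to skip.

```python
import re
from typing import List

# A step starts at "N." and runs until the next "N." or the end of the text.
STEP = re.compile(r"\d+\.\s*(.*?)(?=\s*\d+\.|$)")


def parse_plan(text: str) -> List[str]:
    """Split '1. Move ... 2. Grip ...' into ['Move ...', 'Grip ...']."""
    steps = [s.strip() for s in STEP.findall(text)]
    # Drop empty matches and the "..." placeholders used for elided steps.
    return [s for s in steps if s and s != "..."]


print(parse_plan("Actions: 1. Move robot arm to microwave 2. Grip the handle"))
```

The pattern is deliberately simple: a step whose text itself contains a number followed by a period (for example a decimal distance) would be split in the wrong place, so a structured output format from the model would be more robust.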

### Key Observations

* RAP (Ours) explicitly utilizes a memory of past experiences and a retrieval mechanism to inform current actions.

* ReAct relies on manual examples (ICL) without a dedicated memory component.

* Franka Kitchen integrates visual observation with language processing and policy planning.

* The Franka Kitchen section shows a more detailed breakdown of the task into sub-actions.

* The RAP section shows the system reasoning about the task ("think: I need to find a tomato.") before taking action.

### Interpretation

The diagram demonstrates a progression in robotic task planning complexity. ReAct represents a basic approach relying on pre-defined examples. RAP (Ours) introduces the concept of learning from past experiences and retrieving relevant information to improve performance. Franka Kitchen showcases a more sophisticated system that combines visual perception, language understanding, and action planning.

The use of memory and retrieval in RAP suggests an attempt to address the limitations of relying solely on pre-defined examples. The system can adapt to new situations by leveraging its past experiences. The Franka Kitchen approach highlights the importance of integrating visual information for robots operating in real-world environments.

The diagram suggests that combining these approaches (learning from experience, visual perception, and language understanding) is crucial for developing robust and adaptable robotic systems. Within the Franka Kitchen section, the "plus" symbol merges the visual observation with the text prompt before both enter the vision language model. The diagram is a conceptual illustration of different architectures and does not present quantitative data; it focuses on the flow of information and the components involved in each approach.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Technical Diagram: AI/Robotics System Comparison

### Overview

The image is a technical diagram spanning two environments: **ALFWorld** (left) and **Franka Kitchen** (right). The ALFWorld panel contrasts a baseline method (ReAct) with a proposed method (RAP, labeled "Ours"), while the Franka Kitchen panel illustrates a vision-language model pipeline for robotic task execution. The diagram uses text, arrows, and colored boxes to show components, data flow, and memory-augmented learning.

### Components/Axes (Diagram Structure)

#### Left Panel: ALFWorld

- **ReAct (Top-Left):**

  - A box labeled "Current Task" with text: *"You are in the middle of room. ~~ Task: put a hot tomato on desk Act: ..."*

  - A box labeled "Manual Examples" with text: *"Task: put a hot apple in fridge. Act: go to countertop Obs: you see ~ ..."*

  - An arrow labeled **"ICL"** (In-Context Learning) connects "Manual Examples" to "Current Task".

- **RAP (Ours, Bottom-Left):**

  - A box labeled "Current Task" with text: *"You are in the middle of room. ~~ Task: put a hot tomato on desk Act: think: I need to find a tomato. Obs: OK. Act: go to fridge. ... Act: think: Next, I need to heat it Obs: OK. Act: heat tomato with microwave. Obs: you heat the tomato."*

  - A cylinder labeled **"Memory (Past Experiences)"** contains two example tasks:

    - *"Task: put a tomato in fridge Act: go to fridge Obs: you see tomato,~ ..."*

    - *"Task: put a hot apple in fridge Act: heat mug with microwave. Obs: you heat mug ~."*

  - Arrows labeled **"Retriever"** (orange) and **"ICL"** (blue) connect "Current Task" to "Memory" examples.

#### Right Panel: Franka Kitchen

- **Current Task (Top-Left of Right Panel):**

  - A dashed box with text: *"Task: Open a microwave door Actions: 1. Move the robot arm to the microwave"* (accompanied by a small image of a robot arm).

- **Memory (Past Experience, Orange Cylinder):**

  - Contains two example tasks (with robot images):

    - *"Task: Open a microwave door Actions: 1. Move the robot arm to the microwave"*

    - *"Task: Open a cabinet door Actions: 1. Move the robot arm to the cabinet door. 2. Grip the handle"*

- **Visual Observation (Green Box):**

  - Connected to "Current Task" (green arrow) and to a **"+"** symbol (combining inputs).

- **Text Prompt (Blue Box):**

  - Text: *"Text Prompt: Task Information + Trajectory Retrieved Memory"*

  - Connected to "Memory" (blue arrow) and to the **"+"** symbol.

- **Vision Language Model (Yellow Box):**

  - Receives input from the **"+"** (Visual Observation + Text Prompt) and outputs to "Plan of Actions".

- **Plan of Actions (Teal Box):**

  - Text: *"To complete the task, 1. Move robot arm to microwave 2. ... 3. ..."*

- **Policy Network (Red Box):**

  - Receives "Plan of Actions" and outputs **"Low-level Actions"** to "Updated Observation, Task, Actions".

- **Updated Observation, Task, Actions (Gray Box):**

  - Text: *"Task: Open microwave door Actions: 1. Move robot arm to microwave"* (accompanied by a robot image).

### Detailed Analysis (Text Transcription)

- **ALFWorld - ReAct:**

  - Current Task: *"You are in the middle of room. ~~ Task: put a hot tomato on desk Act: ..."*

  - Manual Examples: *"Task: put a hot apple in fridge. Act: go to countertop Obs: you see ~ ..."*

  - Arrow: *"ICL"* (In-Context Learning) from Manual Examples to Current Task.

- **ALFWorld - RAP (Ours):**

  - Current Task: *"You are in the middle of room. ~~ Task: put a hot tomato on desk Act: think: I need to find a tomato. Obs: OK. Act: go to fridge. ... Act: think: Next, I need to heat it Obs: OK. Act: heat tomato with microwave. Obs: you heat the tomato."*

  - Memory (Past Experiences):

    - Example 1: *"Task: put a tomato in fridge Act: go to fridge Obs: you see tomato,~ ..."*

    - Example 2: *"Task: put a hot apple in fridge Act: heat mug with microwave. Obs: you heat mug ~."*

  - Arrows: *"Retriever"* (orange) from Current Task to Memory, *"ICL"* (blue) from Memory to Current Task.

- **Franka Kitchen:**

  - Current Task: *"Task: Open a microwave door Actions: 1. Move the robot arm to the microwave"* (robot image).

  - Memory (Past Experience):

    - Example 1: *"Task: Open a microwave door Actions: 1. Move the robot arm to the microwave"* (robot image).

    - Example 2: *"Task: Open a cabinet door Actions: 1. Move the robot arm to the cabinet door. 2. Grip the handle"* (robot image).

  - Visual Observation: Green box, connected to Current Task (green arrow) and to "+".

  - Text Prompt: *"Text Prompt: Task Information + Trajectory Retrieved Memory"* (blue box), connected to Memory (blue arrow) and to "+".

  - Vision Language Model: Yellow box, receives "+" (Visual Observation + Text Prompt), outputs to Plan of Actions.

  - Plan of Actions: *"To complete the task, 1. Move robot arm to microwave 2. ... 3. ..."* (teal box).

  - Policy Network: Red box, receives Plan of Actions, outputs "Low-level Actions" to Updated Observation, Task, Actions.

  - Updated Observation, Task, Actions: *"Task: Open microwave door Actions: 1. Move robot arm to microwave"* (robot image).

### Key Observations

- **ALFWorld Comparison:** ReAct uses static manual examples for ICL, while RAP uses a retriever to access dynamic past experiences (memory), enabling more adaptive task-solving (e.g., recalling "heating a mug" to solve "heating a tomato").

- **Franka Kitchen Pipeline:** Integrates visual observation, text prompts (with retrieved memory), a vision-language model, and a policy network to generate low-level actions, emphasizing memory-augmented robotic autonomy.

- **Color Coding:** Green (Visual Observation), Blue (Text Prompt), Yellow (Vision Language Model), Teal (Plan of Actions), Red (Policy Network), Orange (Memory), and Gray (Task Boxes) distinguish components.

### Interpretation

- **ALFWorld:** The diagram contrasts a baseline (ReAct) with a proposed method (RAP) that leverages memory retrieval for in-context learning. RAP’s use of past experiences (e.g., heating a mug) to solve new tasks (heating a tomato) suggests improved adaptability in text-based task environments.

- **Franka Kitchen:** The pipeline demonstrates a modular approach to robotic task execution: visual input + text (with memory) → vision-language model → action plan → policy network → low-level actions. This highlights the role of memory and multimodal (vision + text) processing in physical robotic autonomy.

- **Overall:** Both sections emphasize memory-augmented AI for task planning, with ALFWorld focusing on text-based environments and Franka Kitchen on physical robotics, showcasing the versatility of such approaches across domains.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Task Execution Systems Comparison (ReAct vs RAP)

### Overview

The diagram compares two task execution systems: **ReAct** (left) and **RAP (Ours)** (right), focusing on their components, memory integration, and action planning workflows. Both systems operate in simulated environments (ALFWorld and Franka Kitchen) and involve task execution, memory retrieval, and action planning.

---

### Components/Axes

#### **ReAct (Left Side)**

- **Current Task**: Text box with task description ("put a hot tomato on desk").

- **Manual Examples**: Example tasks (e.g., "put a hot apple in fridge") connected by an arrow to ICL (In-Context Learning).

- **Act**: Placeholder for action generation (empty in this diagram).

#### **RAP (Right Side)**

- **Current Task**: Text box with task description ("put a hot tomato on desk").

- **Memory (Past Experiences)**: Orange cylinder containing:

  - Task: "put a tomato on desk" (with action: "go to fridge").

  - Task: "put a hot apple in fridge" (with action: "heat mug with microwave").

  - Task: "put a hot mug on desk" (with action: "heat mug with microwave").

- **Visual Observation**: Green box with robot arm and microwave (Franka Kitchen environment).

- **Text Prompt**: Blue box combining task info, trajectory, and retrieved memory.

- **Vision Language Model**: Yellow box processing inputs.

- **Plan of Actions**: Green box with step-by-step instructions (e.g., "Move robot arm to microwave").

- **Policy Network**: Pink box that turns each plan step (e.g., "Move robot arm to microwave") into low-level actions.

---

### Detailed Analysis

#### **ReAct Workflow**

1. **Task**: "put a hot tomato on desk".

2. **Manual Examples**: Provides ICL examples (e.g., "put a hot apple in fridge").

3. **Act**: No explicit action shown; relies on ICL for task completion.
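ReAct interleaves free-form reasoning with environment actions. In the ALFWorld transcripts earlier in this document, a `think:` action receives the fixed observation `OK.` (for example, "Act: think: I need to find a tomato. Obs: OK."). The sketch below shows that dispatch; `react_step` is a hypothetical helper, `env_step` is a hypothetical hook for the real environment, and `fake_env` simply returns a canned observation.

```python
from typing import Callable


def react_step(action: str, env_step: Callable[[str], str]) -> str:
    """Return the observation for one ReAct action."""
    if action.strip().lower().startswith("think:"):
        # Reasoning steps do not change the environment.
        return "OK."
    return env_step(action)


def fake_env(action: str) -> str:
    # Canned observation; a real environment would depend on the action.
    return "you see tomato"


for act in ["think: I need to find a tomato.", "go to fridge"]:
    print(f"Act: {act} Obs: {react_step(act, fake_env)}")
```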

#### **RAP Workflow**

1. **Current Task**: "put a hot tomato on desk".

2. **Memory Retrieval**: Accesses past experiences (e.g., "put a tomato on desk" → "go to fridge").

3. **Visual Observation**: Robot arm and microwave in Franka Kitchen.

4. **Text Prompt**: Combines task, memory, and observation.

5. **Vision Language Model**: Processes inputs to generate a plan.

6. **Plan of Actions**: Step-by-step instructions (e.g., "Move robot arm to microwave").

7. **Policy Network**: Translates each plan step (e.g., "Move robot arm to microwave") into low-level actions.

---

### Key Observations

- **Memory Integration**: RAP explicitly uses a memory cylinder to store past experiences, while ReAct relies on manual examples.

- **Action Planning**: RAP includes a structured "Plan of Actions" step, whereas ReAct skips this.

- **Environment-Specific Tasks**: Franka Kitchen tasks involve physical interactions (e.g., robot arm movements), while ALFWorld tasks are text-based.

- **Color Coding**:

  - Green: Visual Observation (Franka Kitchen).

  - Blue: Text Prompt (task + memory).

  - Orange: Memory cylinder (past experiences).

---

### Interpretation

- **ReAct** emphasizes **In-Context Learning (ICL)** with manual examples, suitable for text-based tasks in ALFWorld.

- **RAP** integrates **vision-language models** and **memory retrieval** for complex, environment-specific tasks (e.g., Franka Kitchen). Its workflow adds explicit retrieval and action-planning steps.

- **Memory Role**: RAP’s memory cylinder acts as a knowledge base, enabling the system to reuse past experiences (e.g., heating a mug) to solve new tasks.

- **Policy Network**: The final step in RAP translates high-level plans into executable actions, highlighting its focus on robotics.
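A memory only helps if completed runs are written back to it. The diagram does not show how entries are added, so the sketch below assumes a simple rule: store a trajectory only when its task succeeded. `Trajectory` and `ExperienceMemory` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Trajectory:
    task: str
    steps: List[str]
    success: bool


@dataclass
class ExperienceMemory:
    entries: List[Trajectory] = field(default_factory=list)

    def add(self, trajectory: Trajectory) -> bool:
        """Store the trajectory if it succeeded; return whether it was stored."""
        if trajectory.success:
            self.entries.append(trajectory)
        return trajectory.success

    def tasks(self) -> List[str]:
        return [t.task for t in self.entries]


memory = ExperienceMemory()
memory.add(Trajectory("put a hot mug on desk", ["heat mug with microwave"], success=True))
memory.add(Trajectory("put a hot tomato on desk", ["go to fridge"], success=False))
print(memory.tasks())  # ['put a hot mug on desk']
```

Filtering on success keeps failed attempts out of future prompts; keeping failures, clearly labeled, is an alternative if negative examples turn out to be useful.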

This diagram illustrates how RAP enhances task execution by combining visual inputs, memory, and structured planning, whereas ReAct relies on simpler ICL mechanisms.
