## Diagram: Framework for Trustworthy Learning (TL) System

### Overview

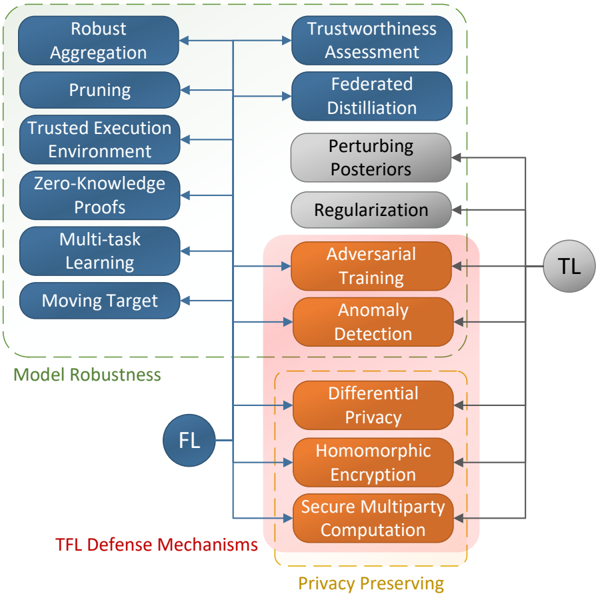

The diagram presents a conceptual framework for a Trustworthy Learning (TL) system built on three pillars: **Model Robustness**, **TFL Defense Mechanisms**, and **Privacy Preserving**. Arrows from each pillar converge on a central **TL** component, which groups mechanisms such as adversarial training and anomaly detection that are meant to keep the system reliable and secure.

---

### Components

1. **Model Robustness** (Blue Boxes, Left):
   - Subcategories: Robust Aggregation, Pruning, Trusted Execution Environment, Zero-Knowledge Proofs, Multi-task Learning, Moving Target.
   - Arrows point to **Trustworthiness Assessment**, **Federated Distillation**, **Perturbing Priors**, and **Regularization** in the TL component.

2. **TFL Defense Mechanisms** (Red Boxes, Middle):
   - Subcategories: Adversarial Training, Anomaly Detection, Differential Privacy, Homomorphic Encryption, Secure Multiparty Computation.
   - Arrows point to **Perturbing Priors**, **Regularization**, and **Adversarial Training** in the TL component.

3. **Privacy Preserving** (Orange Boxes, Right):
   - Subcategories: Differential Privacy, Homomorphic Encryption, Secure Multiparty Computation.
   - Arrows point to **Adversarial Training**, **Anomaly Detection**, and **Secure Multiparty Computation** in the TL component.

4. **Central TL Component** (Gray Circle):
   - Contains interconnected elements: Trustworthiness Assessment, Federated Distillation, Perturbing Priors, Regularization, Adversarial Training, Anomaly Detection, Differential Privacy, Homomorphic Encryption, Secure Multiparty Computation.

---

### Detailed Analysis

- **Model Robustness** covers techniques such as robust aggregation and zero-knowledge proofs that aim to keep the model reliable. Its arrows lead to trustworthiness assessment, federated distillation, perturbing priors, and regularization in TL.

- **TFL Defense Mechanisms** address security threats through adversarial training and anomaly detection, among other techniques. Its arrows lead to perturbing priors, regularization, and adversarial training in TL.

- **Privacy Preserving** targets data confidentiality through differential privacy, homomorphic encryption, and secure multiparty computation. Its arrows lead to adversarial training, anomaly detection, and secure multiparty computation in TL.

- **Color Coding**: Blue (robustness), red (defense), orange (privacy), and gray (TL) visually segregate the pillars and their contributions to the central system.
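The diagram names robust aggregation under Model Robustness but does not say which rule is meant. As one possible illustration, the sketch below uses a coordinate-wise median, a commonly used robust aggregation rule, and contrasts it with a plain mean. The function names and the sample client updates are hypothetical and are not taken from the diagram.

```python
from statistics import median
from typing import List, Sequence


def _check_shapes(updates: Sequence[Sequence[float]]) -> int:
    """Return the shared vector length, or raise if updates are empty or ragged."""
    if not updates:
        raise ValueError("need at least one client update")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all updates must have the same length")
    return dim


def mean_aggregate(updates: Sequence[Sequence[float]]) -> List[float]:
    """Plain averaging: a single extreme update can shift the result arbitrarily."""
    dim = _check_shapes(updates)
    return [sum(u[i] for u in updates) / len(updates) for i in range(dim)]


def coordinate_wise_median(updates: Sequence[Sequence[float]]) -> List[float]:
    """Take the median of each coordinate across clients.

    With an honest majority, a minority of extreme values cannot drag the
    per-coordinate median outside the range of the honest values.
    """
    dim = _check_shapes(updates)
    return [median(u[i] for u in updates) for i in range(dim)]


if __name__ == "__main__":
    # Hypothetical data: four well-behaved clients and one outlier.
    honest = [[1.0, 2.0], [1.2, 1.8], [0.9, 2.1], [1.1, 1.9]]
    outlier = [[100.0, -100.0]]
    print("mean:  ", mean_aggregate(honest + outlier))
    print("median:", coordinate_wise_median(honest + outlier))
```

With these sample values the mean is pulled far from the honest updates, while the median stays within their range. Other robust rules (for example, trimmed means) would fit the same slot in the framework.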

---

### Key Observations

1. **Interconnectedness**: All three pillars (robustness, defense, privacy) are tightly coupled to the TL component, indicating a holistic approach to trustworthy AI.

2. **Overlap**: All three Privacy Preserving subcategories (Differential Privacy, Homomorphic Encryption, Secure Multiparty Computation) also appear under TFL Defense Mechanisms, suggesting that these techniques serve both privacy and defense roles.

3. **Central Hub**: The TL component acts as the unifying layer. Every pillar's arrows terminate there, so it is where the individual mechanisms are combined.
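The overlap noted in observation 2 can be checked mechanically by encoding the subcategory lists from the Components section as sets. This is only a small sketch: the dictionary layout and the `shared_subcategories` helper are illustrative choices, while the pillar and subcategory names are copied from the lists above.

```python
from typing import Dict, List, Set

# Subcategories per pillar, as listed in the Components section.
PILLARS: Dict[str, Set[str]] = {
    "Model Robustness": {
        "Robust Aggregation",
        "Pruning",
        "Trusted Execution Environment",
        "Zero-Knowledge Proofs",
        "Multi-task Learning",
        "Moving Target",
    },
    "TFL Defense Mechanisms": {
        "Adversarial Training",
        "Anomaly Detection",
        "Differential Privacy",
        "Homomorphic Encryption",
        "Secure Multiparty Computation",
    },
    "Privacy Preserving": {
        "Differential Privacy",
        "Homomorphic Encryption",
        "Secure Multiparty Computation",
    },
}


def shared_subcategories(pillar_a: str, pillar_b: str) -> List[str]:
    """Return the subcategories listed under both pillars, sorted by name."""
    return sorted(PILLARS[pillar_a] & PILLARS[pillar_b])


if __name__ == "__main__":
    print(shared_subcategories("TFL Defense Mechanisms", "Privacy Preserving"))
    # -> ['Differential Privacy', 'Homomorphic Encryption', 'Secure Multiparty Computation']
    print(shared_subcategories("Model Robustness", "Privacy Preserving"))
    # -> []
```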

---

### Interpretation

This framework treats trustworthy AI as a balance of **model robustness**, **security defenses**, and **data privacy**. The central TL component is where mechanisms from the different pillars are combined to mitigate adversarial attacks, privacy breaches, and model vulnerabilities. For example:

- **Adversarial Training** (defense) and **Differential Privacy** (privacy) work together to harden models against attacks while preserving user data.

- **Federated Distillation** (robustness) and **Secure Multiparty Computation** (privacy) enable collaborative learning without exposing sensitive data.
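To make "collaborative learning without exposing sensitive data" more concrete, the sketch below shows pairwise additive masking, a simplified idea behind secure aggregation. It is a toy, single-process illustration and not a full secure multiparty computation protocol: a real deployment would derive each pairwise mask from a key agreed between the two clients and would handle dropouts. The function names, the modulus, and the integer-encoded updates are all assumptions made for this example.

```python
import random
from typing import List

# Assumed modulus for the toy example; updates are treated as integers mod this value.
MODULUS = 2**31 - 1


def mask_updates(updates: List[List[int]], seed: int = 0) -> List[List[int]]:
    """Add pairwise masks that cancel when all masked updates are summed.

    For every pair of clients (i, j) with i < j, client i adds a random mask
    and client j subtracts the same mask. A single masked update then looks
    random on its own, but the masks vanish in the total.
    """
    rng = random.Random(seed)  # stand-in for per-pair shared secrets
    n, dim = len(updates), len(updates[0])
    masked = [[v % MODULUS for v in u] for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            pair_mask = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] = (masked[i][k] + pair_mask[k]) % MODULUS
                masked[j][k] = (masked[j][k] - pair_mask[k]) % MODULUS
    return masked


def aggregate(masked: List[List[int]]) -> List[int]:
    """Sum the masked updates coordinate by coordinate, modulo MODULUS."""
    return [sum(column) % MODULUS for column in zip(*masked)]


if __name__ == "__main__":
    # Hypothetical integer-encoded updates from three clients.
    client_updates = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    masked = mask_updates(client_updates)
    print(aggregate(masked))  # -> [12, 15, 18]
```

The aggregator in this sketch only ever handles the masked vectors, yet it recovers the exact sum of the original updates because each mask is added once and subtracted once.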

The diagram underscores that trustworthy AI requires not just technical solutions but also systemic integration of these solutions to address multifaceted challenges.