## Diagram: TFL Defense Mechanisms

### Overview

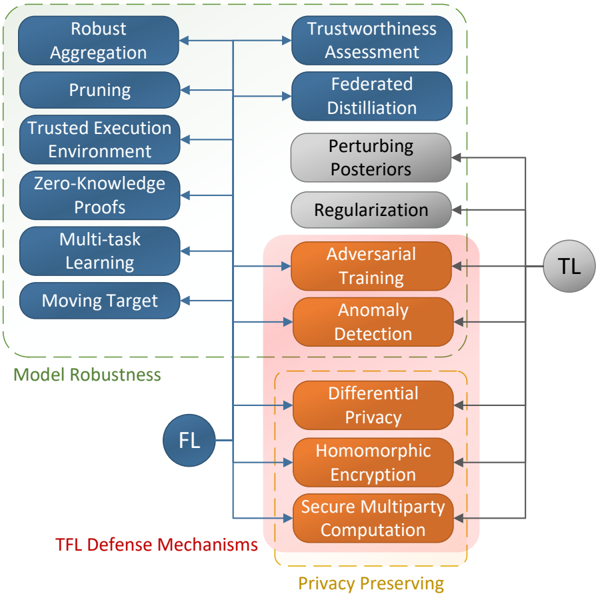

The diagram illustrates defense mechanisms for Trustworthy Federated Learning (TFL). It groups them into Model Robustness and Privacy Preserving techniques and shows how they relate to Federated Learning (FL) and Trustworthy Learning (TL).

### Components/Axes

* **Nodes:** The diagram contains several rounded rectangle nodes, each representing a specific defense mechanism. These nodes are colored blue, gray, or orange.

* **Edges:** Blue lines connect the nodes, indicating relationships between the categories and specific mechanisms.

* **Labels:** The diagram includes labels for each node, as well as category labels such as "Model Robustness," "Privacy Preserving," and "TFL Defense Mechanisms."

* **FL/TL Nodes:** Two circular nodes labeled "FL" and "TL" represent Federated Learning and Trustworthy Learning, respectively.

* **Grouping:** Dashed lines group related defense mechanisms.

### Detailed Analysis

The diagram can be broken down into the following sections:

1. **Model Robustness (Top-Left):**

   * Enclosed in a dashed green line.

   * Includes the following mechanisms (blue nodes):

     * Robust Aggregation

     * Pruning

     * Trusted Execution Environment

     * Zero-Knowledge Proofs

     * Multi-task Learning

     * Moving Target

2. **Privacy Preserving (Bottom-Right):**

   * Enclosed in a dashed orange line.

   * Includes the following mechanisms (orange nodes):

     * Differential Privacy

     * Homomorphic Encryption

     * Secure Multiparty Computation

3. **Intermediate Mechanisms (Top-Right):**

   * Not explicitly grouped.

   * Includes the following mechanisms (blue, gray, and orange nodes):

     * Trustworthiness Assessment (blue)

     * Federated Distillation (blue)

     * Perturbing Posteriors (gray)

     * Regularization (gray)

     * Adversarial Training (orange)

     * Anomaly Detection (orange)

4. **FL and TL Nodes:**

   * The "FL" node is positioned below the "Model Robustness" group.

   * The "TL" node is positioned to the right of the "Privacy Preserving" group.

5. **Connections:**

   * The "Model Robustness" mechanisms connect to "Trustworthiness Assessment" and "Federated Distillation".

   * The "Privacy Preserving" mechanisms connect to the "TL" node.

   * "Perturbing Posteriors" and "Regularization" connect to the "TL" node.

   * "Adversarial Training" and "Anomaly Detection" connect to the "TL" node.
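
For readers who want to work with this taxonomy programmatically, the groups and connections listed above can be encoded as plain data. The following is a minimal sketch, not part of the original figure: the names `GROUPS`, `EDGES`, and `mechanisms_linked_to` are hypothetical, the "Intermediate" key is only a label for the ungrouped nodes, and the edges record only what this description states.

```python
# Hypothetical encoding of the diagram as described in the text above.
# Group membership follows the "Detailed Analysis" list; names are illustrative.
GROUPS = {
    "Model Robustness": [
        "Robust Aggregation", "Pruning", "Trusted Execution Environment",
        "Zero-Knowledge Proofs", "Multi-task Learning", "Moving Target",
    ],
    "Privacy Preserving": [
        "Differential Privacy", "Homomorphic Encryption",
        "Secure Multiparty Computation",
    ],
    # Not a dashed group in the figure; just a label for the ungrouped nodes.
    "Intermediate": [
        "Trustworthiness Assessment", "Federated Distillation",
        "Perturbing Posteriors", "Regularization",
        "Adversarial Training", "Anomaly Detection",
    ],
}

# (source, target) pairs. A group name as source stands for every
# mechanism in that group, mirroring how the description states them.
EDGES = [
    ("Model Robustness", "Trustworthiness Assessment"),
    ("Model Robustness", "Federated Distillation"),
    ("Privacy Preserving", "TL"),
    ("Perturbing Posteriors", "TL"),
    ("Regularization", "TL"),
    ("Adversarial Training", "TL"),
    ("Anomaly Detection", "TL"),
]


def mechanisms_linked_to(target):
    """Return the sorted mechanisms with a recorded edge into `target`."""
    linked = set()
    for source, dest in EDGES:
        if dest == target:
            # Expand a group name into its members; keep single nodes as-is.
            linked.update(GROUPS.get(source, [source]))
    return sorted(linked)


print(mechanisms_linked_to("TL"))
```

Storing group-level edges as a single pair keeps the data close to the wording of the description; a fuller encoding would need the individual lines from the figure itself.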

### Key Observations

* The diagram categorizes TFL defense mechanisms into Model Robustness and Privacy Preserving techniques.

* Some mechanisms, such as "Trustworthiness Assessment" and "Federated Distillation," are related to both Model Robustness and Trustworthy Learning.

* The diagram highlights the importance of both robustness and privacy in the context of federated learning.

### Interpretation

The diagram gives a high-level overview of TFL defense mechanisms and suggests that trustworthy federated learning requires addressing both model robustness and privacy. The connections between mechanisms indicate potential synergies and dependencies. The placement of the "FL" and "TL" nodes suggests a progression from Federated Learning (FL) to Trustworthy Learning (TL), with FL as the foundation and the robustness and privacy mechanisms acting as enablers.