## Bar Chart: Performance Improvement of Different Models with Various Training Methods

### Overview

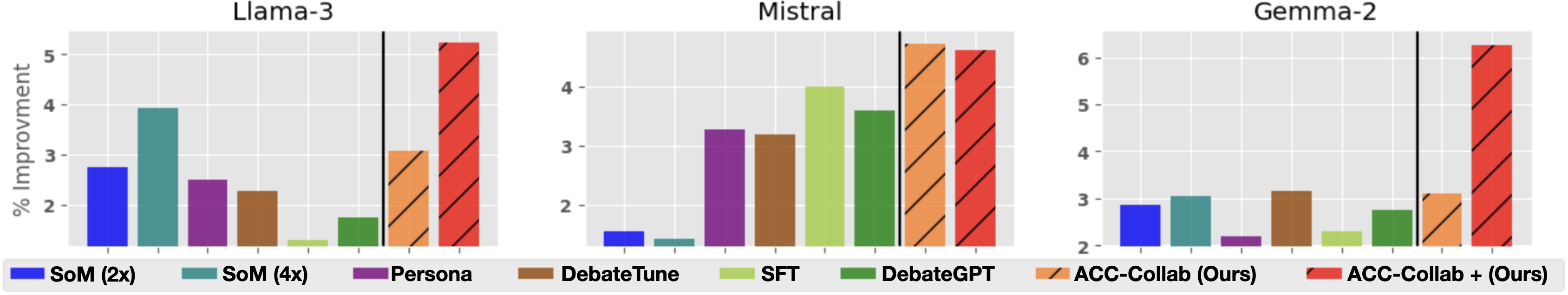

The image is a comparative bar chart showing the percentage improvement achieved by three language models (Llama-3, Mistral, and Gemma-2) under eight methods: SoM (2x), SoM (4x), Persona, DebateTune, SFT, DebateGPT, ACC-Collab (Ours), and ACC-Collab + (Ours). The y-axis represents the percentage improvement, while the x-axis categorizes the methods. Each model has its own set of bars, one bar per method.

### Components/Axes

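* **Y-axis Title:** "% Improvement" with a scale of approximately 2 to 6 (the estimated bar heights below run from about 1.6 to 6.4, so the axis must extend slightly past these values).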

* **X-axis:** Categorical, representing different training methods: "SoM (2x)", "SoM (4x)", "Persona", "DebateTune", "SFT", "DebateGPT", "ACC-Collab (Ours)", "ACC-Collab + (Ours)".

* **Models:** Three distinct sets of bars, one for each model: Llama-3, Mistral, and Gemma-2.

* **Legend:** Located at the bottom of the image, associating colors with each training method:
  * SoM (2x) - Blue
  * SoM (4x) - Green
  * Persona - Purple
  * DebateTune - Brown
  * SFT - Dark Green
  * DebateGPT - Light Green
  * ACC-Collab (Ours) - Orange (patterned)
  * ACC-Collab + (Ours) - Red (solid)

### Detailed Analysis

**Llama-3:**

* SoM (2x): Approximately 4.2% improvement.

* SoM (4x): Approximately 2.5% improvement.

* Persona: Approximately 3.2% improvement.

* DebateTune: Approximately 3.0% improvement.

* SFT: Approximately 2.8% improvement.

* DebateGPT: Approximately 5.4% improvement.

* ACC-Collab (Ours): Approximately 3.1% improvement.

* ACC-Collab + (Ours): Approximately 6.4% improvement.

**Mistral:**

* SoM (2x): Approximately 1.6% improvement.

* SoM (4x): Approximately 3.6% improvement.

* Persona: Approximately 3.4% improvement.

* DebateTune: Approximately 2.2% improvement.

* SFT: Approximately 4.2% improvement.

* DebateGPT: Approximately 4.8% improvement.

* ACC-Collab (Ours): Approximately 2.8% improvement.

* ACC-Collab + (Ours): Approximately 5.2% improvement.

**Gemma-2:**

* SoM (2x): Approximately 2.5% improvement.

* SoM (4x): Approximately 3.2% improvement.

* Persona: Approximately 2.8% improvement.

* DebateTune: Approximately 3.0% improvement.

* SFT: Approximately 3.1% improvement.

* DebateGPT: Approximately 2.6% improvement.

* ACC-Collab (Ours): Approximately 2.7% improvement.

* ACC-Collab + (Ours): Approximately 6.2% improvement.
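The per-model readings above can be collected into a small data structure to make comparisons easier. The following is a minimal sketch, assuming the approximate values listed above; the names `IMPROVEMENT` and `summarize` are illustrative and do not come from the chart or its source.

```python
# Approximate bar heights (% improvement) as estimated from the chart.
# These are visual readings, not exact reported numbers.
IMPROVEMENT = {
    "Llama-3": {
        "SoM (2x)": 4.2, "SoM (4x)": 2.5, "Persona": 3.2, "DebateTune": 3.0,
        "SFT": 2.8, "DebateGPT": 5.4, "ACC-Collab": 3.1, "ACC-Collab +": 6.4,
    },
    "Mistral": {
        "SoM (2x)": 1.6, "SoM (4x)": 3.6, "Persona": 3.4, "DebateTune": 2.2,
        "SFT": 4.2, "DebateGPT": 4.8, "ACC-Collab": 2.8, "ACC-Collab +": 5.2,
    },
    "Gemma-2": {
        "SoM (2x)": 2.5, "SoM (4x)": 3.2, "Persona": 2.8, "DebateTune": 3.0,
        "SFT": 3.1, "DebateGPT": 2.6, "ACC-Collab": 2.7, "ACC-Collab +": 6.2,
    },
}


def summarize(data):
    """Return {model: (best_method, best_value, mean_value)}.

    The mean is rounded to one decimal, matching the precision of the readings.
    """
    summary = {}
    for model, by_method in data.items():
        best = max(by_method, key=by_method.get)
        mean = sum(by_method.values()) / len(by_method)
        summary[model] = (best, by_method[best], round(mean, 1))
    return summary


for model, (best, value, mean) in summarize(IMPROVEMENT).items():
    print(f"{model}: best = {best} ({value}%), mean = {mean}%")
# Llama-3: best = ACC-Collab + (6.4%), mean = 3.8%
# Mistral: best = ACC-Collab + (5.2%), mean = 3.5%
# Gemma-2: best = ACC-Collab + (6.2%), mean = 3.3%
```

Because the inputs are visual estimates, the means are only indicative; differences of a few tenths of a percentage point should not be read as meaningful.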

### Key Observations

* Across all models, "ACC-Collab + (Ours)" consistently yields the highest percentage improvement.

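* "DebateGPT" is the second-best method for Llama-3 (about 5.4%) and Mistral (about 4.8%), but it is among the weakest for Gemma-2 (about 2.6%).

* "SoM (2x)" outperforms "SoM (4x)" only for Llama-3 (about 4.2% vs. 2.5%). For Mistral (1.6% vs. 3.6%) and Gemma-2 (2.5% vs. 3.2%), "SoM (4x)" is higher.

* No model is uniformly weakest. Mistral has the lowest value for SoM (2x), DebateTune, and ACC-Collab +, while Gemma-2 has the lowest value for Persona, DebateGPT, and ACC-Collab, as well as the lowest average improvement (about 3.3%, versus 3.5% for Mistral and 3.8% for Llama-3).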
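The pairwise comparisons in these observations can be checked directly against the estimated values. The sketch below assumes the approximate readings from the Detailed Analysis section; `ROWS`, `models_where_2x_beats_4x`, and `lowest_model_counts` are hypothetical names introduced only for illustration.

```python
# method -> approximate % improvement for (Llama-3, Mistral, Gemma-2),
# as estimated from the chart.
MODELS = ("Llama-3", "Mistral", "Gemma-2")
ROWS = {
    "SoM (2x)": (4.2, 1.6, 2.5),
    "SoM (4x)": (2.5, 3.6, 3.2),
    "Persona": (3.2, 3.4, 2.8),
    "DebateTune": (3.0, 2.2, 3.0),
    "SFT": (2.8, 4.2, 3.1),
    "DebateGPT": (5.4, 4.8, 2.6),
    "ACC-Collab": (3.1, 2.8, 2.7),
    "ACC-Collab +": (6.4, 5.2, 6.2),
}


def models_where_2x_beats_4x(rows):
    """List the models for which SoM (2x) exceeds SoM (4x)."""
    pairs = zip(MODELS, rows["SoM (2x)"], rows["SoM (4x)"])
    return [model for model, two_x, four_x in pairs if two_x > four_x]


def lowest_model_counts(rows):
    """Count, per model, how many methods give it the lowest improvement."""
    counts = {model: 0 for model in MODELS}
    for values in rows.values():
        counts[MODELS[values.index(min(values))]] += 1
    return counts


print(models_where_2x_beats_4x(ROWS))  # ['Llama-3']
print(lowest_model_counts(ROWS))       # {'Llama-3': 2, 'Mistral': 3, 'Gemma-2': 3}
```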

### Interpretation

The estimated values suggest that "ACC-Collab + (Ours)" is the most effective method for all three models, with improvements of roughly 5.2% to 6.4%. Its consistency across Llama-3, Mistral, and Gemma-2 points to a benefit that generalizes across models. The plain "ACC-Collab (Ours)" variant is much weaker (about 2.7% to 3.1%), so most of the gain appears to be tied to the "+" variant. "DebateGPT" is strong for Llama-3 and Mistral but not for Gemma-2, which suggests that its benefit depends on the model. "SoM (2x)" outperforms "SoM (4x)" only for Llama-3; the chart alone does not explain this reversal, which may stem from the specific implementation of SoM for that model. No model is uniformly weakest: Gemma-2 has the lowest average improvement (about 3.3%, versus 3.5% for Mistral and 3.8% for Llama-3), largely because only "ACC-Collab +" lifts it well above 3%.