
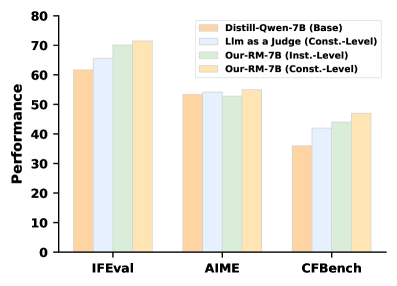

## Grouped Bar Chart: Model Performance Comparison

### Overview

The image is a grouped vertical bar chart comparing four models or methods on three benchmarks, with one group of four bars per benchmark. It reads as a performance evaluation in which each approach is tested on the same standardized tasks.

### Components/Axes

* **Chart Type:** Grouped vertical bar chart.
* **Y-Axis:**
    * **Label:** "Performance"
    * **Scale:** Linear scale from 0 to 80, with major tick marks at intervals of 10 (0, 10, 20, 30, 40, 50, 60, 70, 80).
* **X-Axis:**
    * **Categories (Benchmarks):** Three distinct groups labeled from left to right: "IFEval", "AIME", and "CFBench".
* **Legend:**
    * **Position:** Top-right corner of the chart area.
    * **Entries (from top to bottom):**
        1. **Distill-Qwen-7B (Base)** - Represented by a light orange/peach colored bar.
        2. **Llm as a Judge (Const.-Level)** - Represented by a light blue/gray colored bar.
        3. **Our-RM-7B (Inst.-Level)** - Represented by a light green/mint colored bar.
        4. **Our-RM-7B (Const.-Level)** - Represented by a light yellow/cream colored bar.

### Detailed Analysis

The analysis is segmented by the three benchmark categories on the x-axis.

**1. IFEval Benchmark (Leftmost Group):**

* **Trend:** All four models show their highest performance in this category compared to the other benchmarks. There is a clear, stepwise increasing trend from the first to the fourth bar within the group.
* **Data Points (Approximate Performance Values):**
    * Distill-Qwen-7B (Base): ~62
    * Llm as a Judge (Const.-Level): ~66
    * Our-RM-7B (Inst.-Level): ~70
    * Our-RM-7B (Const.-Level): ~72

**2. AIME Benchmark (Middle Group):**

* **Trend:** Every model scores lower here than on IFEval. The first three bars are very close in height, and the fourth bar is only slightly taller.
* **Data Points (Approximate Performance Values):**
    * Distill-Qwen-7B (Base): ~53
    * Llm as a Judge (Const.-Level): ~54
    * Our-RM-7B (Inst.-Level): ~54
    * Our-RM-7B (Const.-Level): ~55

**3. CFBench Benchmark (Rightmost Group):**

* **Trend:** This benchmark shows the lowest performance scores overall. There is a clear, stepwise increasing trend from the first to the fourth bar, similar to IFEval but at a lower absolute level.
* **Data Points (Approximate Performance Values):**
    * Distill-Qwen-7B (Base): ~36
    * Llm as a Judge (Const.-Level): ~42
    * Our-RM-7B (Inst.-Level): ~44
    * Our-RM-7B (Const.-Level): ~47
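The trend statements for each group can be checked mechanically once the approximate readings are written down. The following is a minimal Python sketch, assuming the eyeballed values listed in this section (readings from bar heights, not published numbers); the names `MODELS`, `SCORES`, and `trend` are illustrative only. It classifies each group's bars, read left to right in legend order, as strictly increasing, non-decreasing with ties, or not monotonic, and reports which legend entry has the tallest bar.

```python
# Approximate bar heights read off the chart (eyeballed, not published numbers).
# Each list follows legend order; MODELS, SCORES, and trend() are illustrative names.
MODELS = [
    "Distill-Qwen-7B (Base)",
    "Llm as a Judge (Const.-Level)",
    "Our-RM-7B (Inst.-Level)",
    "Our-RM-7B (Const.-Level)",
]
SCORES = {
    "IFEval": [62, 66, 70, 72],
    "AIME": [53, 54, 54, 55],
    "CFBench": [36, 42, 44, 47],
}


def trend(values):
    """Classify a left-to-right sequence of bar heights."""
    pairs = list(zip(values, values[1:]))
    if all(a < b for a, b in pairs):
        return "strictly increasing"
    if all(a <= b for a, b in pairs):
        return "non-decreasing (with ties)"
    return "not monotonic"


for bench, values in SCORES.items():
    # Index of the tallest bar maps back to its legend entry.
    best = MODELS[values.index(max(values))]
    print(f"{bench}: {trend(values)}; highest bar: {best}")
```

With these readings, IFEval and CFBench come out strictly increasing and AIME non-decreasing, because `Llm as a Judge` and `Our-RM-7B (Inst.-Level)` are both read at ~54. That tie is why a consistent ordering across all three groups needs a non-strict comparison between those two entries.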

### Key Observations

1. **Consistent Hierarchy:** Across all three benchmarks, the performance hierarchy remains consistent: `Distill-Qwen-7B (Base)` < `Llm as a Judge (Const.-Level)` ≤ `Our-RM-7B (Inst.-Level)` < `Our-RM-7B (Const.-Level)`.

2. **Benchmark Difficulty:** Every model scores highest on IFEval and lowest on CFBench, so IFEval appears the "easiest" and CFBench the "hardest" of the three. This comparison assumes the benchmarks report scores on comparable scales, which the chart itself does not establish.

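3. **Model Improvement:** Both "Our-RM-7B" variants outperform the baseline (`Distill-Qwen-7B`) on every benchmark. The `Const.-Level` variant also beats `Llm as a Judge` everywhere, while the `Inst.-Level` variant beats it on IFEval and CFBench but only ties it on AIME (~54 each). The `Const.-Level` variant of `Our-RM-7B` achieves the highest score in every benchmark.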

4. **Performance Gap:** The gap between the best (`Our-RM-7B (Const.-Level)`) and worst (`Distill-Qwen-7B (Base)`) models is largest on CFBench (~11 points) and IFEval (~10 points), and smallest on AIME (~2 points).
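The arithmetic behind the difficulty and gap observations can be reproduced with a short Python sketch. It assumes the approximate values from the Detailed Analysis (readings from bar heights, so each is uncertain by about a point); the short keys `base`, `judge`, `rm_inst`, and `rm_const` and the helper function names are hypothetical labels, not anything defined by the source.

```python
# Approximate readings from the chart (eyeballed bar heights, not published numbers).
# Keys are hypothetical short labels for the four legend entries.
SCORES = {
    "IFEval": {"base": 62, "judge": 66, "rm_inst": 70, "rm_const": 72},
    "AIME": {"base": 53, "judge": 54, "rm_inst": 54, "rm_const": 55},
    "CFBench": {"base": 36, "judge": 42, "rm_inst": 44, "rm_const": 47},
}


def best_minus_base(scores):
    """Gap between Our-RM-7B (Const.-Level) and Distill-Qwen-7B (Base) per benchmark."""
    return {bench: s["rm_const"] - s["base"] for bench, s in scores.items()}


def mean_per_benchmark(scores):
    """Average of the four bars in each group, used as a rough difficulty proxy."""
    return {bench: sum(s.values()) / len(s) for bench, s in scores.items()}


gaps = best_minus_base(SCORES)
means = mean_per_benchmark(SCORES)
# Higher group mean is treated as "easier".
easiest_first = sorted(means, key=means.get, reverse=True)

print(gaps)           # {'IFEval': 10, 'AIME': 2, 'CFBench': 11}
print(means)          # {'IFEval': 67.5, 'AIME': 54.0, 'CFBench': 42.25}
print(easiest_first)  # ['IFEval', 'AIME', 'CFBench']
```

Using the group mean as a difficulty proxy is only meaningful if the three benchmarks report scores on comparable scales; the sketch does not check that.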

### Interpretation

The chart compares the effectiveness of different model evaluation or training methods. The data suggest that the proposed method, labeled "Our-RM-7B," yields a measurable improvement over the baseline model (`Distill-Qwen-7B`) and over an alternative approach (`Llm as a Judge`). The `Const.-Level` variant scores above the `Inst.-Level` variant on every benchmark, by roughly 2 to 3 points on IFEval and CFBench but only about 1 point on AIME, which suggests that the "Const.-Level" configuration or training objective is more effective for these tasks.

Scores vary substantially across benchmarks, indicating that model performance is task-dependent. Because the relative ranking is the same on all three tasks (apart from the AIME tie between `Llm as a Judge` and `Our-RM-7B (Inst.-Level)`), the improvements do not appear to be specific to a single type of evaluation, although differences of 1 to 2 points read from bar heights are within the precision of the reading itself. The small gap on AIME might suggest that this task is less sensitive to the differences between these methods, or that it represents a performance ceiling for the models being tested. Overall, the chart serves as evidence for the efficacy of the "Our-RM-7B" approach, particularly in its "Const.-Level" form, across a range of standardized tests.