# Technical Analysis of Large Language Model (LLM) Performance Characteristics

This document extracts the data and technical insights from a figure with three sub-figures (a, b, and c) that analyze LLM inference stages.

---

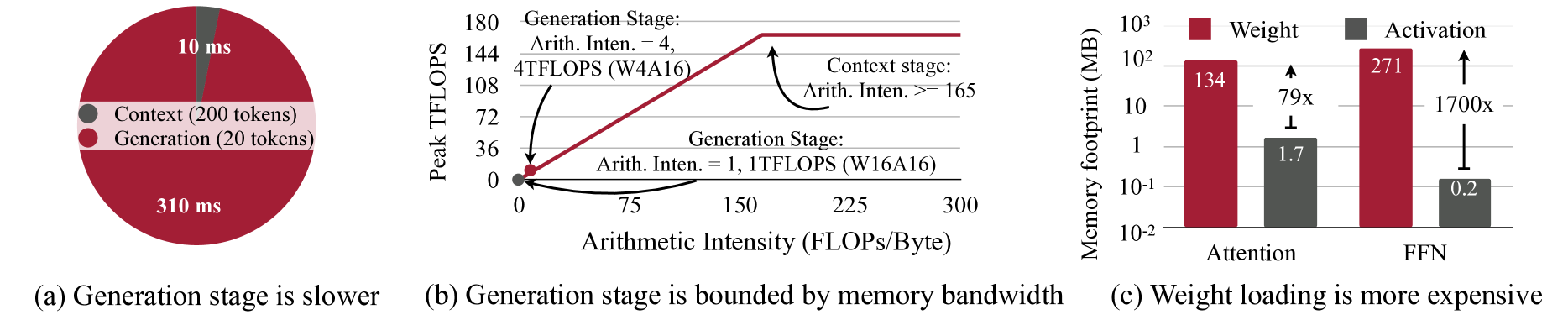

## Figure (a): Generation stage is slower

### Component Isolation: Pie Chart

* **Type:** Pie Chart comparing time duration of different inference stages.

* **Legend (Center Overlay):**

    * **Dark Grey Circle:** Context (200 tokens)

    * **Maroon Circle:** Generation (20 tokens)

* **Data Points:**

    * **Context Stage:** Represented by a small dark grey slice at the top. Value: **10 ms**.

    * **Generation Stage:** Represented by the large maroon section comprising the majority of the circle. Value: **310 ms**.

* **Trend/Insight:** Despite having 10x fewer tokens (20 vs 200), the Generation stage takes 31x longer to process than the Context stage, indicating a significant bottleneck in the autoregressive generation phase.
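The comparison above can be made more concrete by normalizing each stage by its token count. The following is a minimal sketch using only the values read from Figure (a); the variable and function names are illustrative and do not come from the figure.

```python
# Values read from Figure (a); token counts come from the legend.
context_ms, context_tokens = 10.0, 200
generation_ms, generation_tokens = 310.0, 20


def per_token_latency_ms(total_ms: float, tokens: int) -> float:
    """Average latency per token for one inference stage."""
    return total_ms / tokens


context_per_token = per_token_latency_ms(context_ms, context_tokens)
generation_per_token = per_token_latency_ms(generation_ms, generation_tokens)

# Ratio of total stage time, and ratio of time spent per token.
stage_ratio = generation_ms / context_ms
per_token_ratio = generation_per_token / context_per_token

print(f"context:    {context_per_token:.2f} ms/token")
print(f"generation: {generation_per_token:.2f} ms/token")
print(f"stage ratio: {stage_ratio:.0f}x, per-token ratio: {per_token_ratio:.0f}x")
```

Under these numbers the per-token gap (about 310x) is ten times the whole-stage gap (31x), because the generation stage spends its time on one tenth as many tokens.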

---

## Figure (b): Generation stage is bounded by memory bandwidth

### Component Isolation: Roofline Model Chart

* **X-Axis:** Arithmetic Intensity (FLOPs/Byte). Markers: 0, 75, 150, 225, 300.

* **Y-Axis:** Peak TFLOPS. Markers: 0, 36, 72, 108, 144, 180.

* **Legend/Annotations:**

    * **Maroon Line:** Represents the performance ceiling. It slopes upward linearly from (0,0) until it hits a plateau at approximately Y=165.

    * **Dark Grey Dot (near the origin):** Labeled "Generation Stage: Arith. Inten. = 1, 1TFLOPS (W16A16)".

    * **Maroon Dot (on the slope):** Labeled "Generation Stage: Arith. Inten. = 4, 4TFLOPS (W4A16)".

    * **Context Stage Annotation:** Points to the plateau region. Text: "Context stage: Arith. Inten. >= 165".

### Data Extraction & Trends

1. **Memory Bound Region (Sloped Line):** The Generation stage (both W16A16 and W4A16) falls on the sloped part of the roofline. This indicates performance is limited by memory bandwidth, not compute power.

2. **Compute Bound Region (Plateau):** The Context stage reaches the plateau (approx. 165 TFLOPS), indicating it is compute-bound.

3. **Quantization Effect:** Moving from W16A16 (16-bit weights, 16-bit activations) to W4A16 (4-bit weights, 16-bit activations) raises Arithmetic Intensity from 1 to 4 FLOPs/Byte and raises performance from 1 TFLOPS to 4 TFLOPS, following the memory-bandwidth slope.
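The three observations above follow from the standard roofline relation, in which attainable throughput is the lower of the compute peak and bandwidth times arithmetic intensity. The sketch below is illustrative only: the 165 TFLOPS peak is read from the plateau, while the 1 TB/s bandwidth is an assumption inferred from the labeled point (1 FLOP/Byte, 1 TFLOPS). The `weight_only_intensity` helper is hypothetical and assumes batch size 1, two FLOPs (one multiply-add) per weight, and negligible activation traffic.

```python
# Values read from Figure (b). The bandwidth is an assumption inferred from
# the labeled point (1 FLOP/byte -> 1 TFLOPS), i.e. a slope of 1 TB/s.
PEAK_TFLOPS = 165.0
BANDWIDTH_TB_PER_S = 1.0


def attainable_tflops(intensity: float) -> float:
    """Roofline ceiling: the lower of the compute peak and bandwidth * intensity."""
    return min(PEAK_TFLOPS, BANDWIDTH_TB_PER_S * intensity)


def regime(intensity: float) -> str:
    """Classify a workload by comparing its intensity with the ridge point."""
    ridge = PEAK_TFLOPS / BANDWIDTH_TB_PER_S  # FLOPs/byte where the plateau starts
    return "memory-bound" if intensity < ridge else "compute-bound"


def weight_only_intensity(weight_bits: int, flops_per_weight: int = 2) -> float:
    """FLOPs per byte of weight traffic, assuming each weight is read once per
    token (batch size 1) and activation traffic is ignored."""
    return flops_per_weight / (weight_bits / 8)


for label, intensity in [
    ("generation, W16A16", weight_only_intensity(16)),
    ("generation, W4A16", weight_only_intensity(4)),
    ("context", 165.0),
]:
    print(f"{label}: {intensity:g} FLOPs/byte -> "
          f"{attainable_tflops(intensity):g} TFLOPS ({regime(intensity)})")
```

With these assumptions, shrinking weights from 2 bytes to 0.5 bytes leaves the FLOP count unchanged while cutting the bytes moved by 4x, which is why the W4A16 point sits four times further along the same slope.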

---

## Figure (c): Weight loading is more expensive

### Component Isolation: Bar Chart

* **Type:** Grouped Bar Chart with a logarithmic Y-axis.

* **X-Axis Categories:** Attention, FFN (Feed-Forward Network).

* **Y-Axis:** Memory footprint (MB). Scale: $10^{-2}, 10^{-1}, 1, 10, 10^2, 10^3$.

* **Legend (Top):**

    * **Maroon Square:** Weight

    * **Dark Grey Square:** Activation

### Data Table Reconstruction

| Module | Weight (MB, maroon) | Activation (MB, grey) | Labeled ratio (Weight/Activation) |
| :--- | :--- | :--- | :--- |
| **Attention** | 134 | 1.7 | 79x |
| **FFN** | 271 | 0.2 | 1700x |

### Trend Verification

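* **Weight Dominance:** In both the Attention and FFN modules, the memory footprint of Weights (Maroon) dwarfs that of Activations (Grey).

* **FFN Disparity:** The disparity is most extreme in the FFN layer, where the figure labels the weight footprint as 1700x the activation footprint. The bar values as printed (271 MB vs 0.2 MB) give roughly 1355x, so the 0.2 MB label is presumably rounded up from about 0.16 MB.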

* **Visual Trend:** The maroon bars are consistently much taller than the grey bars across the logarithmic scale, emphasizing that weight loading is the primary memory bottleneck during the generation stage.
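As a quick consistency check on the reconstructed table, the ratios can be recomputed from the bar values. This is a minimal sketch; the dictionary layout and names are illustrative, and the only data used are the values and ratio labels read from Figure (c).

```python
# Bar values (MB) and ratio labels as read from Figure (c).
footprint_mb = {
    "Attention": {"weight": 134.0, "activation": 1.7},
    "FFN": {"weight": 271.0, "activation": 0.2},
}
labeled_ratio = {"Attention": 79, "FFN": 1700}

computed_ratio = {}
implied_activation_mb = {}
for module, mb in footprint_mb.items():
    # Ratio recomputed from the (rounded) bar labels.
    computed_ratio[module] = mb["weight"] / mb["activation"]
    # Activation size that would reproduce the ratio printed on the figure.
    implied_activation_mb[module] = mb["weight"] / labeled_ratio[module]
    print(f"{module}: computed {computed_ratio[module]:.0f}x, "
          f"labeled {labeled_ratio[module]}x, "
          f"implied activation {implied_activation_mb[module]:.2f} MB")
```

The Attention row reproduces its 79x label directly. The FFN row does so only if the activation footprint is nearer 0.16 MB than the 0.2 MB shown, which is consistent with the bar label being rounded to one decimal place.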